The Bigger Picture: Using NETCONF to Manage a Network

When talking about management protocols, the conversation easily ends up being about the components: the client, the server, and the protocol details. The most important topic is somehow lost. The core of the issue is really the use cases you want to implement and how they can be realized. The overarching goal is to simplify the life of the network operator. “Ease of use is a key requirement for any network management technology from the operators point of view” (RFC 3535, Requirement #1).

Network operators say they want to “concentrate on the configuration of the network as a whole rather than individual devices” (RFC 3535, Requirement #4). Since the building blocks of networks are devices and cabling, there is really no way to avoid managing devices. The point the operators are trying to make, however, is that a raised abstraction level is convenient when managing networks. They would like to do their management using network-level concepts rather than device-level commands.

This is seen as a good case for network management system (NMS) vendors, but in order for an NMS to be reasonably small, simple, and inexpensive, great responsibility falls on the management protocol. Thirty years of industry NMS experience has taught us time after time that with poorly designed management protocols, NMS vendors routinely fail on all three counts.

What does NETCONF do to support the NMS development? Let’s have a look at the typical use case in network management: how to provision an additional leg on an L3VPN.

At the very least, a typical L3VPN consists of the following:

- Customer Edge (CE) devices located near the endpoints of the VPN, such as a store location, branch office, or someone’s home

- Provider Edge (PE) devices located on the outer rim of the provider organization’s core network

- A core network connecting all the hub locations and binding to all PE devices

- A monitoring solution to ensure the L3VPN is performing according to expectations and promises

- A security solution to ensure privacy and security

In order to add an L3VPN leg to the network, the L3VPN application running in the NMS must touch at least the CE device on the new site, the PE device to which the CE device is connected, the monitoring system, and probably a few devices related to security. It could happen that the CE is a virtual device, in which case the NMS may have to speak to some container manager or virtual infrastructure manager (VIM) to spin up the virtual machine (VM). Sometimes 20 devices or so must be touched in order to spin up a single L3VPN leg. All of them are required for the leg to be functional. All firewalls and routers with access control lists (ACLs) need to get their updates, or traffic does not flow. Encryption needs to be set up properly at both ends, or traffic is not safe. Monitoring needs to be set up, or loss of service is not detected.

To implement the new leg in the network using NETCONF, the manager runs a network-wide transaction toward the relevant devices, updating the :candidate datastore on them and validating it; if everything is okay, the manager then commits that change to the :running datastore. “It is important to distinguish between the distribution of configurations and the activation of a certain configuration. Devices should be able to hold multiple configurations” (RFC 3535, Requirement #13). Here are the steps the manager takes in more detail:

STEP 1. Figure out which devices need to be involved to implement the new leg, according to topology and requested endpoints.

STEP 2. Connect to all relevant devices over NETCONF and then lock (<lock>) the NETCONF datastores :running and :candidate on those devices.

STEP 3. Clear (<discard-changes>) the :candidate datastore on the devices.

STEP 4. Compute the required configuration change for each device.

STEP 5. Edit (<edit-config>) each device’s :candidate datastore with the computed change.

STEP 6. Validate (<validate>) the :candidate datastore.

In transaction theory, transactions have two (or three) phases when successful. All the actions up until this point were in the transaction’s PREPARE phase. At the end of the PREPARE phase, all devices must report either <ok> or <rpc-error>. This is a critical decision point. Transaction theorists often call this the “point of no return.”

If any participating device reports <rpc-error> up to this point, the transaction has failed and goes to the ABORT phase. Nothing happens to the network. The NMS safely drops the connection to all devices. This means the changes are never activated and the locks are released.

In case all devices report <ok> here, the NMS proceeds to the COMMIT phase.

STEP 7. Commit (<commit>) each device’s :candidate datastore. This activates the change.
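The PREPARE, ABORT, and COMMIT phases described above can be sketched in a few lines of Python. This is a minimal illustration, not a NETCONF client: `FakeDevice` and `network_wide_transaction` are hypothetical names, the datastores are plain dictionaries, and the validation rule is a toy stand-in for whatever a real device checks.

```python
from dataclasses import dataclass, field


@dataclass
class FakeDevice:
    """Hypothetical in-memory stand-in for a NETCONF server with :candidate."""
    name: str
    running: dict = field(default_factory=dict)
    candidate: dict = field(default_factory=dict)
    locked: bool = False

    def lock(self):
        # <lock> on :running and :candidate (modeled as a single flag)
        if self.locked:
            raise RuntimeError(f"{self.name}: datastores already locked")
        self.locked = True

    def discard_changes(self):
        # <discard-changes>: reset :candidate to the content of :running
        self.candidate = dict(self.running)

    def edit_config(self, change):
        # <edit-config> on :candidate (merge semantics only, for brevity)
        self.candidate.update(change)

    def validate(self):
        # <validate>: toy rule, a None value makes the candidate invalid
        if any(value is None for value in self.candidate.values()):
            raise ValueError(f"{self.name}: invalid candidate")

    def commit(self):
        # <commit>: activate :candidate by copying it into :running
        self.running = dict(self.candidate)

    def close(self):
        # Dropping the session releases locks and uncommitted changes
        self.locked = False
        self.candidate = dict(self.running)


def network_wide_transaction(changes):
    """changes: list of (device, change dict). Returns True if committed."""
    locked = []
    try:
        # PREPARE phase: lock, clear, edit, and validate every device
        for device, _ in changes:
            device.lock()
            locked.append(device)
        for device, change in changes:
            device.discard_changes()
            device.edit_config(change)
            device.validate()
    except (RuntimeError, ValueError):
        # ABORT phase: nothing was activated; drop the sessions we hold
        for device in locked:
            device.close()
        return False
    # COMMIT phase: every device reported success, so activate everywhere
    for device in locked:
        device.commit()
    for device in locked:
        device.close()
    return True
```

Note that the sketch does not handle a failure during the COMMIT loop itself, which is exactly the gap the confirmed commit discussed later is meant to close.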

Splitting the work to activate a change into a two-phase commit with validation in between may sound easy and obvious when described this way. At the same time, you must acknowledge that this is quite revolutionary in the network management context—not because it’s hard to do, but because of what it enables.

Unless you have programmed NMS solutions, it’s hard to imagine the amount of code required in the NMS to detect and resolve any errors if the devices do not support transactions individually. In the example, you even had network-wide transactions. In a mature NMS, about half the code is devoted to error detection and recovery from a great number of situations. This recovery code is also the most expensive part to develop since it is all about corner cases and situations that are supposed not to happen. Such situations are complicated to re-create for testing, and even to think up.

The cost of a software project is largely proportional to the amount of code written, so this means more than half of the cost of the traditional NMS is removed when the devices support network-wide transactions.

The two-phase, network-wide transaction just described is widely used with NETCONF devices today. This saves a lot of code but is not failsafe. The <commit> operation could fail, the connection to a device could be lost, or a device might crash or not respond while sending out the <commit> to all devices. This would lead to some devices activating the change, while others do not. In order to tighten this up even more, NETCONF also specifies a three-phase network-wide transaction that managers may want to use.

By supplying the flag <confirmed> in the preceding <commit> stage, the transaction enters the third CONFIRM phase (going from PREPARE to COMMIT followed by CONFIRM). If the NMS sends this flag, the NMS must come back within a given time limit to reconfirm the change.

If no confirmation is received by the end of the time limit, or if the connection to the NMS is lost, each device rolls back to the previous configuration state. While the transaction timer is running, the NMS runs all sorts of testing and measurement operations to verify that the L3VPN leg it just created functions as intended. If it does not, the NMS simply closes the connections to all the devices involved in the transaction to make it all go away. If all looks good, the NMS commits and unlocks, as follows:

STEP 1. Give another <commit>, this time without the <confirmed> flag.

STEP 2. Unlock (<unlock>) the :running and :candidate datastores.
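On the wire, the difference between the confirmed commit and the final, confirming commit is small. The following sketch builds both messages with Python’s standard XML library, using the element names and base namespace from RFC 6241. The helper name `commit_rpc`, the message IDs, and the 120-second timeout are illustrative assumptions, and the device must advertise the :confirmed-commit capability for the first message to be accepted.

```python
import xml.etree.ElementTree as ET

NETCONF_NS = "urn:ietf:params:xml:ns:netconf:base:1.0"


def commit_rpc(message_id, confirmed=False, confirm_timeout=None):
    """Build a <commit> RPC; confirmed=True adds the <confirmed/> flag."""
    rpc = ET.Element("rpc", {"message-id": str(message_id), "xmlns": NETCONF_NS})
    commit = ET.SubElement(rpc, "commit")
    if confirmed:
        ET.SubElement(commit, "confirmed")
        if confirm_timeout is not None:
            # Seconds the device waits for the confirming <commit>
            ET.SubElement(commit, "confirm-timeout").text = str(confirm_timeout)
    return ET.tostring(rpc, encoding="unicode")


# COMMIT phase: activate the change, but roll back unless confirmed in time
print(commit_rpc(101, confirmed=True, confirm_timeout=120))
# CONFIRM phase: a plain <commit> makes the change permanent
print(commit_rpc(102))
```

If `<confirm-timeout>` is omitted, RFC 6241 specifies a default of 600 seconds.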

There are a lot more options and details on NETCONF network-wide transactions that could be discussed here, but the important points were made, so let’s tie this off. With this sort of underlying technology, the NMS developers can become real slackers. Well, not really, but they do get twice as many use cases completed compared to life without transactions. Those use cases also work a lot more reliably. This discussion highlights the value of network-wide transactions. “Support for configuration transactions across a number of devices would significantly simplify network configuration management” (RFC 3535, Requirement #5).

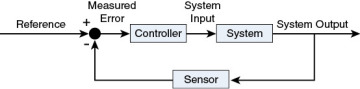

Let’s zoom out one level more and see how the network-wide transactions fit into the bigger picture. Look at network management from a control-theory perspective. As any electrical or mechanical engineer knows, the proper way to build a control system that works well in a complex environment is to get a feedback loop into the design. The traditional control-theory picture is shown in Figure 2-6.

Figure 2-6 Feedback Loop

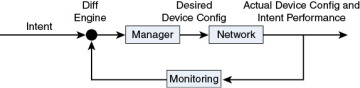

Translating that into your network management context, the picture becomes what is shown in Figure 2-7.

Figure 2-7 Feedback Loop in Network Management

As you can see in this figure, a mechanism to push and pull configurations to and from the network is obviously required. “A mechanism to dump and restore configurations is a primitive operation needed by operators. Standards for pulling and pushing configurations from/to devices are desirable” (RFC 3535, Requirement #7).

The network-wide transaction is an important mechanism for the manager to control the network. Without it, the manager would be both much more complex and less efficient (in other words, the network would be consistent with the intent a smaller portion of the time). To close the loop, however, each of the other steps is just as important. When the monitoring reads the state of the network, it leverages the NETCONF capability to separate configuration from other data. “It is necessary to make a clear distinction between configuration data, data that describes operational state, and statistics. Some devices make it very hard to determine which parameters were administratively configured and which were obtained via other mechanisms such as routing protocols” (RFC 3535, Requirement #2).

With NETCONF, this data is delivered using standardized operations (<get> and <get-config>) with semantics consistent across devices. “It is required to be able to fetch separately configuration data, operational state data, and statistics from devices, and to be able to compare these between devices” (RFC 3535, Requirement #3). The data structure is consistent, too, through the use of standardized YANG models on the devices.
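To make the two operations concrete, here is a small sketch that builds each request with Python’s standard XML library. The element names and namespace follow RFC 6241; the function names and message IDs are illustrative assumptions. `<get-config>` names a source datastore and returns configuration data only, while `<get>` returns the running configuration together with state data.

```python
import xml.etree.ElementTree as ET

NETCONF_NS = "urn:ietf:params:xml:ns:netconf:base:1.0"


def _rpc(message_id):
    # Common <rpc> envelope shared by every NETCONF request
    return ET.Element("rpc", {"message-id": str(message_id), "xmlns": NETCONF_NS})


def get_config_rpc(message_id, source="running"):
    """Request configuration data only, from the named datastore."""
    rpc = _rpc(message_id)
    get_config = ET.SubElement(rpc, "get-config")
    ET.SubElement(ET.SubElement(get_config, "source"), source)
    return ET.tostring(rpc, encoding="unicode")


def get_rpc(message_id):
    """Request running configuration plus state data."""
    rpc = _rpc(message_id)
    ET.SubElement(rpc, "get")
    return ET.tostring(rpc, encoding="unicode")


print(get_config_rpc(201))
print(get_rpc(202))
```

In practice both requests usually carry a `<filter>` element to narrow the reply; it is left out here to keep the contrast between the two operations visible.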

The diff engine then compares the intent performance to the original intent to see how well the current strategy to implement the intent works (remember the intent-based networking trend in Chapter 1), and it compares the actual network configuration with the desired one. If a change in strategy is required or desired (for example, because a peer went down, or the price of computing is lower in a different data center now), the manager computes a new desired configuration and sends it to the network. “Given configuration A and configuration B, it should be possible to generate the operations necessary to get from A to B with minimal state changes and effects on network and systems. It is important to minimize the impact caused by configuration changes” (RFC 3535, Requirement #6).

Clearly, for this to work, the definition of a transaction needs to be pretty strong. The diff engine computes an arbitrary bag of changes in no particular order. The complexity of the manager increases steeply if it has to sequence all of the diffs in some particular way. And unless that particular way is described in machine-readable form for every device in the network, that NMS remains a dream.
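A minimal sketch of such a diff engine follows, assuming that each configuration has been flattened into a dictionary mapping a path to a value. The paths, the operation names, and the function `diff_config` are illustrative choices, not part of NETCONF. The result is a Python set, which reflects the point made above: the changes carry no ordering.

```python
def diff_config(current, desired):
    """Return the unordered set of changes that turns current into desired."""
    changes = set()
    for path, value in desired.items():
        if path not in current:
            changes.add(("create", path, value))
        elif current[path] != value:
            changes.add(("replace", path, value))
    # Anything present now but absent from the desired config is removed
    for path in current.keys() - desired.keys():
        changes.add(("delete", path, None))
    return changes


config_a = {"/vrf/red/rd": "65000:1", "/if/ge0/mtu": 1500, "/if/ge1/desc": "old"}
config_b = {"/vrf/red/rd": "65000:1", "/if/ge0/mtu": 9000, "/if/ge2/desc": "new"}
for change in sorted(diff_config(config_a, config_b), key=lambda c: c[1]):
    print(change)
```

Unchanged leaves such as `/vrf/red/rd` produce no operation at all, in the spirit of the “minimal state changes” wording of Requirement #6.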

Therefore, the transaction definition used with NETCONF must describe a set of changes that, taken together and applied to the current configuration, yield a configuration that is consistent and makes sense. It’s about consistent configurations, not about atomic sequences of changes. “It must be easy to do consistency checks of configurations over time and between the ends of a link in order to determine the changes between two configurations and whether those configurations are consistent” (RFC 3535, Requirement #8).

This is not the same as a sequence of operations that are carried out in the order they are given, and where each intermediate step must be a valid configuration in itself. The server (device) side is clearly easier to implement if the proper sequencing comes from the manager, as is the tradition in the SNMP world, which is why many implementers are tempted to go with this interpretation. Let’s state clearly then that NETCONF transactional consistency is at the end of the transaction. Otherwise, the feedback controller use case dies, and you would be back to simple scripts shooting configurations at a network in the dark.
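The difference between the two interpretations can be shown with a toy example. The sketch below assumes a single made-up consistency rule (an interface may only reference a VRF that exists) and hypothetical function names. It applies the same two changes once with validation at the end of the transaction and once with validation after every step.

```python
def is_consistent(config):
    """Toy rule: every interface must reference a VRF that exists."""
    vrfs = {path.split("/")[2] for path in config if path.startswith("/vrf/")}
    return all(
        value in vrfs
        for path, value in config.items()
        if path.startswith("/if/") and path.endswith("/vrf")
    )


def apply_as_transaction(config, changes):
    """Apply all changes, then validate once, at the end of the transaction."""
    result = dict(config)
    for op, path, value in changes:
        if op == "delete":
            result.pop(path, None)
        else:
            result[path] = value
    if not is_consistent(result):
        raise ValueError("inconsistent configuration")
    return result


def apply_stepwise(config, changes):
    """Contrast: validate after every single change, so ordering matters."""
    result = dict(config)
    for change in changes:
        result = apply_as_transaction(result, [change])
    return result


# The interface is listed before the VRF it refers to
changes = [("create", "/if/ge0/vrf", "red"), ("create", "/vrf/red/rd", "65000:1")]

print(apply_as_transaction({}, changes))  # accepted: the end result is consistent
try:
    apply_stepwise({}, changes)
except ValueError as error:
    print("stepwise rejected:", error)  # the first intermediate state is invalid
```

With end-of-transaction validation, the manager can send the diff in whatever order it was computed; with stepwise validation, it would have to know that the VRF must be created first.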

The same feedback capability is essential in those networks where human operators are allowed or required to meddle with the network at the same time as the manager. This is a common operational reality today and invariably leads to unforeseen situations. Unless there is a mechanism with a feedback loop that can compute new configurations and adjust to the ever-changing landscape, the more sophisticated use cases will never emerge.