3.4 Linux

Linux was created in 1991 by Linus Torvalds as a free operating system for Intel personal computers. He announced the project in a Usenet post:

I’m doing a (free) operating system (just a hobby, won’t be big and professional like gnu) for 386(486) AT clones. This has been brewing since April, and is starting to get ready. I’d like any feedback on things people like/dislike in minix, as my OS resembles it somewhat (same physical layout of the file-system (due to practical reasons) among other things).

This refers to the MINIX operating system, which was being developed as a free and small (mini) version of Unix for small computers. BSD was also aiming to provide a free Unix version, although at the time it had legal troubles.

The Linux kernel was developed taking general ideas from many ancestors, including:

Unix (and Multics): Operating system layers, system calls, multitasking, processes, process priorities, virtual memory, global file system, file system permissions, device nodes, buffer cache

BSD: Paged virtual memory, demand paging, fast file system (FFS), TCP/IP network stack, sockets

Solaris: VFS, NFS, page cache, unified page cache, slab allocator

Plan 9: Resource forks (rfork), for creating different levels of sharing between processes and threads (tasks)

Linux now sees widespread use for servers, cloud instances, and embedded devices including mobile phones.

3.4.1 Linux Kernel Developments

Linux kernel developments, especially those related to performance, include the following (many of these descriptions include the Linux kernel version where they were first introduced):

CPU scheduling classes: Various advanced CPU scheduling algorithms have been developed, including scheduling domains (2.6.7) to make better decisions regarding non-uniform memory access (NUMA). See Chapter 6, CPUs.

I/O scheduling classes: Different block I/O scheduling algorithms have been developed, including deadline (2.5.39), anticipatory (2.5.75), and completely fair queueing (CFQ) (2.6.6). These are available in kernels up to Linux 5.0, which removed them to support only newer multi-queue I/O schedulers. See Chapter 9, Disks.

TCP congestion algorithms: Linux allows different TCP congestion control algorithms to be configured, and supports Reno, Cubic, and more in later kernels mentioned in this list. See also Chapter 10, Network.

Overcommit: Along with the out-of-memory (OOM) killer, this is a strategy for doing more with less main memory. See Chapter 7, Memory.

Futex (2.5.7): Short for fast user-space mutex, this is used to provide high-performing user-level synchronization primitives.

Huge pages (2.5.36): This provides support for preallocated large memory pages by the kernel and the memory management unit (MMU). See Chapter 7, Memory.

OProfile (2.5.43): A system profiler for studying CPU usage and other events, for both the kernel and applications.

RCU (2.5.43): The kernel provides a read-copy update synchronization mechanism that allows multiple reads to occur concurrently with updates, improving performance and scalability for data that is mostly read.

epoll (2.5.46): A system call for efficiently waiting for I/O across many open file descriptors, which improves the performance of server applications.

Modular I/O scheduling (2.6.10): Linux provides pluggable scheduling algorithms for scheduling block device I/O. See Chapter 9, Disks.

DebugFS (2.6.11): A simple unstructured interface for the kernel to expose data to user level, which is used by some performance tools.

Cpusets (2.6.12): Exclusive CPU grouping for processes.

Voluntary kernel preemption (2.6.13): This process provides low-latency scheduling without the complexity of full preemption.

inotify (2.6.13): A framework for monitoring file system events.

blktrace (2.6.17): A framework and tool for tracing block I/O events (later migrated into tracepoints).

splice (2.6.17): A system call to move data quickly between file descriptors and pipes, without a trip through user-space. (The sendfile(2) syscall, which efficiently moves data between file descriptors, is now a wrapper to splice(2).)

Delay accounting (2.6.18): Tracks per-task delay states. See Chapter 4, Observability Tools.

IO accounting (2.6.20): Measures various storage I/O statistics per process.

DynTicks (2.6.21): Dynamic ticks allow the kernel timer interrupt (clock) to not fire during idle, saving CPU resources and power.

SLUB (2.6.22): A new and simplified version of the slab memory allocator.

CFS (2.6.23): Completely fair scheduler. See Chapter 6, CPUs.

cgroups (2.6.24): Control groups allow resource usage to be measured and limited for groups of processes.

TCP LRO (2.6.24): TCP Large Receive Offload (LRO) allows network drivers and hardware to aggregate packets into larger sizes before sending them to the network stack. Linux also supports Large Send Offload (LSO) for the send path.

latencytop (2.6.25): Instrumentation and a tool for observing sources of latency in the operating system.

Tracepoints (2.6.28): Static kernel tracepoints (aka static probes) that instrument logical execution points in the kernel, for use by tracing tools (previously called kernel markers). Tracing tools are introduced in Chapter 4, Observability Tools.

perf (2.6.31): Linux Performance Events (perf) is a set of tools for performance observability, including CPU performance counter profiling and static and dynamic tracing. See Chapter 6, CPUs, for an introduction.

No BKL (2.6.37): Final removal of the big kernel lock (BKL) performance bottleneck.

Transparent huge pages (2.6.38): This is a framework to allow easy use of huge (large) memory pages. See Chapter 7, Memory.

KVM: The Kernel-based Virtual Machine (KVM) technology was developed for Linux by Qumranet, which was purchased by Red Hat in 2008. KVM allows virtual operating system instances to be created, running their own kernel. See Chapter 11, Cloud Computing.

BPF JIT (3.0): A Just-In-Time (JIT) compiler for the Berkeley Packet Filter (BPF) to improve packet filtering performance by compiling BPF bytecode to native instructions.

CFS bandwidth control (3.2): A CPU scheduling algorithm that supports CPU quotas and throttling.

TCP anti-bufferbloat (3.3+): Various enhancements were made from Linux 3.3 onwards to combat the bufferbloat problem, including Byte Queue Limits (BQL) for the transmission of packet data (3.3), CoDel queue management (3.5), TCP small queues (3.6), and the Proportional Integral controller Enhanced (PIE) packet scheduler (3.14).

uprobes (3.5): The infrastructure for dynamic tracing of user-level software, used by other tools (perf, SystemTap, etc.).

TCP early retransmit (3.5): RFC 5827 for reducing duplicate acknowledgments required to trigger fast retransmit.

TFO (3.6, 3.7, 3.13): TCP Fast Open (TFO) can reduce the TCP three-way handshake to a single SYN packet with a TFO cookie, improving performance. It was made the default in 3.13.

NUMA balancing (3.8+): This added ways for the kernel to automatically balance memory locations on multi-NUMA systems, reducing CPU interconnect traffic and improving performance.

SO_REUSEPORT (3.9): A socket option to allow multiple listener sockets to bind to the same port, improving multi-threaded scalability.

SSD cache devices (3.9): Device mapper support for an SSD device to be used as a cache for a slower rotating disk.

bcache (3.10): An SSD cache technology for the block interface.

TCP TLP (3.10): TCP Tail Loss Probe (TLP) is a scheme to avoid costly timer-based retransmits by sending new data or the last unacknowledged segment after a shorter probe timeout, to trigger faster recovery.

NO_HZ_FULL (3.10, 3.12): Also known as timerless multitasking or a tickless kernel, this allows non-idle threads to run without clock ticks, avoiding workload perturbations [Corbet 13a].

Multiqueue block I/O (3.13): This provides per-CPU I/O submission queues rather than a single request queue, improving scalability especially for high IOPS SSD devices [Corbet 13b].

SCHED_DEADLINE (3.14): An optional scheduling policy that implements earliest deadline first (EDF) scheduling [Linux 20b].

TCP autocorking (3.14): This allows the kernel to coalesce small writes, reducing the number of packets sent. It is an automatic version of the TCP_CORK setsockopt(2) option.

MCS locks and qspinlocks (3.15): Efficient kernel locks, using techniques such as per-CPU structures. MCS is named after the original lock inventors (Mellor-Crummey and Scott) [Mellor-Crummey 91] [Corbet 14].

Extended BPF (3.18+): An in-kernel execution environment for running secure kernel-mode programs. The bulk of extended BPF was added in the 4.x series. Support for attaching to kprobes was added in 4.1, to tracepoints in 4.7, to software and hardware events in 4.9, and to cgroups in 4.10. Bounded loops were added in 5.3, which also increased the instruction limit to allow complex applications. See Section 3.4.4, Extended BPF.

Overlayfs (3.18): A union mount file system included in Linux. It creates virtual file systems on top of others, which can also be modified without changing the first. Often used for containers.

DCTCP (3.18): The Data Center TCP (DCTCP) congestion control algorithm, which aims to provide high burst tolerance, low latency, and high throughput [Borkmann 14a].

DAX (4.0): Direct Access (DAX) allows user space to read from persistent-memory storage devices directly, without buffer overheads. ext4 can use DAX.

Queued spinlocks (4.2): Offering better performance under contention, these became the default spinlock kernel implementation in 4.2.

TCP lockless listener (4.4): The TCP listener fast path became lockless, improving performance.

cgroup v2 (4.5, 4.15): A unified hierarchy for cgroups was present in earlier kernels; it was considered stable and exposed in 4.5, named cgroup v2 [Heo 15]. The cgroup v2 CPU controller was added in 4.15.

epoll scalability (4.5): For multithreaded scalability, epoll(7) avoids waking up all threads that are waiting on the same file descriptors for each event, which caused a thundering-herd performance issue [Corbet 15].

KCM (4.6): The Kernel Connection Multiplexor (KCM) provides an efficient message-based interface over TCP.

TCP NV (4.8): New Vegas (NV) is a new TCP congestion control algorithm suited for high-bandwidth networks (those that run at 10+ Gbps).

XDP (4.8, 4.18): eXpress Data Path (XDP) is a BPF-based programmable fast path for high-performance networking [Herbert 16]. An AF_XDP socket address family that can bypass much of the network stack was added in 4.18.

TCP BBR (4.9): Bottleneck Bandwidth and RTT (BBR) is a TCP congestion control algorithm that provides improved latency and throughput over networks suffering packet loss and bufferbloat [Cardwell 16].

Hardware latency tracer (4.9): An Ftrace tracer that can detect system latency caused by hardware and firmware, including system management interrupts (SMIs).

perf c2c (4.10): The cache-to-cache (c2c) perf subcommand can help identify CPU cache performance issues, including false sharing.

Intel CAT (4.10): Support for Intel Cache Allocation Technology (CAT) allowing tasks to have dedicated CPU cache space. This can be used by containers to help with the noisy neighbor problem.

Multiqueue I/O schedulers: BFQ, Kyber (4.12): The Budget Fair Queueing (BFQ) multiqueue I/O scheduler provides low latency I/O for interactive applications, especially for slower storage devices. BFQ was significantly improved in 5.2. The Kyber I/O scheduler is suited for fast multiqueue devices [Corbet 17].

Kernel TLS (4.13, 4.17): Linux version of kernel TLS [Edge 15].

MSG_ZEROCOPY (4.14): A send(2) flag to avoid extra copies of packet bytes between an application and the network interface [Linux 20c].

PCID (4.14): Linux added support for process-context ID (PCID), a processor MMU feature to help avoid TLB flushes on context switches. This reduced the performance cost of the kernel page table isolation (KPTI) patches needed to mitigate the meltdown vulnerability. See Section 3.4.3, KPTI (Meltdown).

PSI (4.20, 5.2): Pressure stall information (PSI) is a set of new metrics to show time spent stalled on CPU, memory, or I/O. PSI threshold notifications were added in 5.2 to support PSI monitoring.

TCP EDT (4.20): The TCP stack switched to Early Departure Time (EDT): This uses a timing-wheel scheduler for sending packets, providing better CPU efficiency and smaller queues [Jacobson 18].

Multi-queue I/O (5.0): Multi-queue block I/O schedulers became the default in 5.0, and classic schedulers were removed.

UDP GRO (5.0): UDP Generic Receive Offload (GRO) improves performance by allowing packets to be aggregated by the driver and card and passed up stack.

io_uring (5.1): A generic asynchronous interface for fast communication between applications and the kernel, making use of shared ring buffers. Primary uses include fast disk and network I/O.

MADV_COLD, MADV_PAGEOUT (5.4): These madvise(2) flags are hints to the kernel that memory is needed but not anytime soon. MADV_PAGEOUT is also a hint that memory can be reclaimed immediately. These are especially useful for memory-constrained embedded Linux devices.

MultiPath TCP (5.6): Multiple network links (e.g., 3G and WiFi) can be used to improve the performance and reliability of a single TCP connection.

Boot-time tracing (5.6): Allows Ftrace to trace the early boot process. (systemd can provide timing information on the late boot process: see Section 3.4.2, systemd.)

Thermal pressure (5.7): The scheduler accounts for thermal throttling to make better placement decisions.

perf flame graphs (5.8): perf(1) support for the flame graph visualization.
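The epoll entry in the list above describes waiting efficiently for I/O across many open file descriptors. The following is a minimal sketch of that pattern, not taken from the original text. It uses Python's standard selectors module, whose DefaultSelector is backed by epoll on Linux. The helper names wait_for_ready and demo are hypothetical, and local socket pairs stand in for client connections.

```python
import selectors
import socket


def wait_for_ready(socks, timeout=1.0):
    """Return the sockets that become readable within `timeout` seconds."""
    # DefaultSelector chooses the most efficient mechanism available on
    # the platform; on Linux this is epoll.
    with selectors.DefaultSelector() as sel:
        for s in socks:
            sel.register(s, selectors.EVENT_READ)
        return [key.fileobj for key, _events in sel.select(timeout)]


def demo():
    """Watch three sockets, make one readable, and report which one fired."""
    pairs = [socket.socketpair() for _ in range(3)]
    try:
        pairs[1][0].sendall(b"ping")  # only the second watched socket has data
        watched = [b for _a, b in pairs]
        ready = wait_for_ready(watched)
        return [watched.index(s) for s in ready]
    finally:
        for a, b in pairs:
            a.close()
            b.close()


if __name__ == "__main__":
    print(demo())
```

A single call reports only the descriptors that are ready, so the application does not need to poll each one in turn. A real server would keep one selector open for its lifetime rather than creating one per call as this sketch does.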

Not listed here are the many small performance improvements for locking, drivers, VFS, file systems, asynchronous I/O, memory allocators, NUMA, new processor instruction support, GPUs, and the performance tools perf(1) and Ftrace. System boot time has also been improved by the adoption of systemd.
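As one illustration of how a listed feature is consumed from user space, the sketch below parses pressure stall information (PSI). It assumes the text format the kernel exposes under /proc/pressure on kernels built with PSI support (lines such as "some avg10=0.00 avg60=0.00 avg300=0.00 total=0"). The function names parse_psi and read_psi are hypothetical, and the sample values are invented for illustration.

```python
from pathlib import Path


def parse_psi(text):
    """Parse PSI text into {"some": {"avg10": 1.5, ..., "total": 123}, ...}."""
    result = {}
    for line in text.splitlines():
        fields = line.split()
        if not fields:
            continue
        kind, pairs = fields[0], fields[1:]
        stats = {}
        for pair in pairs:
            key, _, value = pair.partition("=")
            # avg* fields are percentages; total is cumulative microseconds.
            stats[key] = int(value) if key == "total" else float(value)
        result[kind] = stats
    return result


def read_psi(resource, root="/proc/pressure"):
    """Read PSI for "cpu", "memory", or "io"; return None if unavailable."""
    path = Path(root) / resource
    try:
        return parse_psi(path.read_text())
    except OSError:
        return None


# Invented sample in the assumed format, used when PSI is not available.
SAMPLE = (
    "some avg10=1.50 avg60=0.75 avg300=0.10 total=123456\n"
    "full avg10=0.00 avg60=0.00 avg300=0.00 total=789\n"
)

if __name__ == "__main__":
    print(read_psi("memory") or parse_psi(SAMPLE))
```

The "some" line counts time in which at least one task was stalled on the resource, and the "full" line counts time in which all non-idle tasks were stalled.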

The following sections describe in more detail three Linux topics important to performance: systemd, KPTI, and extended BPF.

3.4.2 systemd

systemd is a commonly used service manager for Linux, developed as a replacement for the original UNIX init system. systemd has various features including dependency-aware service startup and service time statistics.

An occasional task in systems performance is to tune the system’s boot time, and the systemd time statistics can show where to tune. The overall boot time can be reported using systemd-analyze(1):

# systemd-analyze
Startup finished in 1.657s (kernel) + 10.272s (userspace) = 11.930s
graphical.target reached after 9.663s in userspace

This output shows that the system booted (reached the graphical.target in this case) 9.663 seconds into user-space startup, which followed 1.657 seconds in the kernel. More information can be seen using the critical-chain subcommand:

# systemd-analyze critical-chain
The time when unit became active or started is printed after the "@" character.
The time the unit took to start is printed after the "+" character.

graphical.target @9.663s
└─multi-user.target @9.661s
  └─snapd.seeded.service @9.062s +62ms
    └─basic.target @6.336s
      └─sockets.target @6.334s
        └─snapd.socket @6.316s +16ms
          └─sysinit.target @6.281s
            └─cloud-init.service @5.361s +905ms
              └─systemd-networkd-wait-online.service @3.498s +1.860s
                └─systemd-networkd.service @3.254s +235ms
                  └─network-pre.target @3.251s
                    └─cloud-init-local.service @2.107s +1.141s
                      └─systemd-remount-fs.service @391ms +81ms
                        └─systemd-journald.socket @387ms
                          └─system.slice @366ms
                            └─-.slice @366ms

This output shows the critical path: the sequence of steps (in this case, services) that causes the latency. The slowest service was systemd-networkd-wait-online.service, taking 1.86 seconds to start.
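Finding the slowest unit in a long critical chain can be automated. The sketch below is one possible approach, not part of systemd: it parses critical-chain text and returns the unit with the largest "+" start time. The function names are hypothetical, the sample is an excerpt of the output above, and only times written in "s" or "ms" are handled (longer boots may print other units such as minutes).

```python
def to_seconds(value):
    """Convert a time such as '905ms' or '1.860s' to seconds."""
    if value.endswith("ms"):
        return float(value[:-2]) / 1000.0
    if value.endswith("s"):
        return float(value[:-1])
    raise ValueError(f"unsupported time format: {value!r}")


def parse_critical_chain(text):
    """Return a list of (unit, active_at_seconds, start_time_seconds)."""
    units = []
    for line in text.splitlines():
        # Drop indentation and the tree-drawing prefix, then split fields.
        fields = line.strip().lstrip("└─ ").split()
        if len(fields) < 2 or not fields[1].startswith("@"):
            continue  # skip the command, legend lines, and blank lines
        took = 0.0
        if len(fields) > 2 and fields[2].startswith("+"):
            took = to_seconds(fields[2][1:])
        units.append((fields[0], to_seconds(fields[1][1:]), took))
    return units


def slowest_unit(units):
    """Return the entry with the largest start time."""
    return max(units, key=lambda unit: unit[2])


SAMPLE = """\
graphical.target @9.663s
└─multi-user.target @9.661s
  └─snapd.seeded.service @9.062s +62ms
    └─cloud-init.service @5.361s +905ms
      └─systemd-networkd-wait-online.service @3.498s +1.860s
        └─cloud-init-local.service @2.107s +1.141s
          └─-.slice @366ms
"""

if __name__ == "__main__":
    name, active_at, took = slowest_unit(parse_critical_chain(SAMPLE))
    print(f"{name} took {took:.3f}s (active at {active_at:.3f}s)")
```

Targets and slices carry no "+" value, so they are recorded with a start time of zero and never win the comparison.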

There are other useful subcommands: blame shows the slowest initialization times, and plot produces an SVG diagram. See the man page for systemd-analyze(1) for more information.

3.4.3 KPTI (Meltdown)

The kernel page table isolation (KPTI) patches added to Linux 4.14 in 2018 are a mitigation for the Intel processor vulnerability called “meltdown.” Older Linux kernel versions had KAISER patches for a similar purpose, and other kernels have employed mitigations as well. While these work around the security issue, they also reduce processor performance due to extra CPU cycles and additional TLB flushing on context switches and syscalls. Linux added process-context ID (PCID) support in the same release, which allows some TLB flushes to be avoided, provided the processor supports pcid.

I evaluated the performance impact of KPTI as between 0.1% and 6% for Netflix cloud production workloads, depending on the workload’s syscall rate (higher costs more) [Gregg 18a]. Additional tuning will further reduce the cost: the use of huge pages so that a flushed TLB warms up faster, and using tracing tools to examine syscalls to identify ways to reduce their rate. A number of such tracing tools are implemented using extended BPF.
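Whether a system can benefit from PCID, and whether page table isolation is active, can be checked from user space. The sketch below assumes an x86 Linux system where /proc/cpuinfo contains a "flags" line and where /sys/devices/system/cpu/vulnerabilities/meltdown reports a status such as "Mitigation: PTI"; both paths may be absent on other architectures or older kernels. The function names and sample strings are hypothetical.

```python
from pathlib import Path


def cpu_flags(cpuinfo_text):
    """Return the set of CPU feature flags from /proc/cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def kpti_report(cpuinfo_text, meltdown_status):
    """Summarize PCID support and whether the PTI mitigation is reported."""
    return {
        "pcid": "pcid" in cpu_flags(cpuinfo_text),
        "pti_enabled": "PTI" in meltdown_status,
    }


def read_or_empty(path):
    """Read a file, returning an empty string if it cannot be read."""
    try:
        return Path(path).read_text()
    except OSError:
        return ""


if __name__ == "__main__":
    report = kpti_report(
        read_or_empty("/proc/cpuinfo"),
        read_or_empty("/sys/devices/system/cpu/vulnerabilities/meltdown"),
    )
    print(report)
```

A result with pti_enabled true and pcid false suggests a system that pays the higher TLB-flush cost described above.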

3.4.4 Extended BPF

BPF stands for Berkeley Packet Filter, an obscure technology first developed in 1992 that improved the performance of packet capture tools [McCanne 92]. In 2013, Alexei Starovoitov proposed a major rewrite of BPF [Starovoitov 13], which was further developed by himself and Daniel Borkmann and included in the Linux kernel in 2014 [Borkmann 14b]. This turned BPF into a general-purpose execution engine that can be used for a variety of things, including networking, observability, and security.

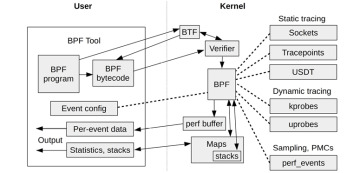

BPF itself is a flexible and efficient technology composed of an instruction set, storage objects (maps), and helper functions. It can be considered a virtual machine due to its virtual instruction set specification. BPF programs run in kernel mode (as pictured earlier in Figure 3.2) and are configured to run on events: socket events, tracepoints, USDT probes, kprobes, uprobes, and perf_events. These are shown in Figure 3.16.

Figure 3.16 BPF components

BPF bytecode must first pass through a verifier that checks for safety, ensuring that the BPF program will not crash or corrupt the kernel. It may also use a BPF Type Format (BTF) system for understanding data types and structures. BPF programs can output data via a perf ring buffer, an efficient way to emit per-event data, or via maps, which are suited for statistics.

Because it is powering a new generation of efficient, safe, and advanced tracing tools, BPF is important for systems performance analysis. It provides programmability to existing kernel event sources: tracepoints, kprobes, uprobes, and perf_events. A BPF program can, for example, record a timestamp on the start and end of I/O to time its duration, and record this in a custom histogram. This book contains many BPF-based programs using the BCC and bpftrace front-ends. These front-ends are covered in Chapter 15.
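The I/O timing example can be made concrete. The following is a user-space emulation of the logic only, written in plain Python rather than BPF: a dictionary stands in for a BPF hash map of start timestamps, and a counter stands in for a histogram map with power-of-two buckets. The class and function names are hypothetical, and the events are fed in by hand rather than coming from kernel probes.

```python
from collections import Counter


def log2_bucket(value):
    """Power-of-two bucket index: 0 -> 0, 1 -> 1, 2-3 -> 2, 4-7 -> 3, ..."""
    return int(value).bit_length()


def bucket_range(index):
    """Return the inclusive (low, high) range covered by a bucket index."""
    if index == 0:
        return (0, 0)
    return (1 << (index - 1), (1 << index) - 1)


class LatencyHistogram:
    def __init__(self):
        self.start_ns = {}        # stands in for a BPF hash map keyed by request ID
        self.buckets = Counter()  # stands in for a BPF histogram map

    def on_start(self, req_id, now_ns):
        """Event handler for I/O start: record the timestamp."""
        self.start_ns[req_id] = now_ns

    def on_done(self, req_id, now_ns):
        """Event handler for I/O completion: bucket the duration in microseconds."""
        began = self.start_ns.pop(req_id, None)
        if began is None:
            return  # the start event was not seen; skip this completion
        delta_us = (now_ns - began) // 1000
        self.buckets[log2_bucket(delta_us)] += 1


if __name__ == "__main__":
    hist = LatencyHistogram()
    for req_id, start, end in [(1, 0, 150_000), (2, 1_000, 201_000), (3, 5_000, 2_005_000)]:
        hist.on_start(req_id, start)
        hist.on_done(req_id, end)
    for index in sorted(hist.buckets):
        low, high = bucket_range(index)
        print(f"{low:>6} -> {high:<6} us : {hist.buckets[index]}")
```

Summarizing in the map and emitting only bucket counts, rather than one record per I/O, is what keeps this style of instrumentation low in overhead.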