Preparing Geometry for UE4

Unreal Engine 4 refers to 3D Objects as either Static Meshes or Skeletal Meshes. A single Mesh can have Smoothing Groups, multiple materials, and vertex colors. Meshes can be rigid (Static Meshes) or deforming (Skeletal Meshes). You export each Mesh as an FBX file from your 3D application, and then import it into your UE4 Project.

Architecture and Prop Meshes

I think of my Static Mesh assets in two broad categories: Architecture and Props. Both follow similar rules, but each has its own considerations that can make it much easier to work with.

Architecture

Architecture Meshes are objects that are unique and are placed in a specific location, such as walls and floors, terrain, roads, and so on. Typically, only one copy of these objects is in your scene, and they need to be in a specific location.

You will usually keep a 1:1 relationship between the objects in your source art, your Content folder, and your UE4 Level. (For example, you’ll have one SM_Wall01 in each in the exact same place.)

You export these objects in place, import them, and place them into the scene at 0,0,0 (or another defined origin point). Keeping your UE4 content in sync with your scene using this method is easy because you can import or reimport Meshes or entire scenes without needing to reposition them.

Props

Prop Meshes are repeating or reusable Static Meshes that you place in or on your Architecture within your Level. An example is plates on a table. You could have a unique Static Mesh asset for each one in your scene or, because they are all exactly the same, you can reference a single asset and move it into position in the Viewport. The table would also be a Prop and could also be moved about easily.

Having multiple Props reference a single asset reduces memory overhead and makes updating and iterating on content easy.
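As a rough illustration of why shared references are cheap, the sketch below models placed Props as small records that point at one asset instead of copying its geometry. The class names, the SM_Plate asset, and its vertex count are hypothetical and are not UE4 types.

```python
from dataclasses import dataclass


@dataclass
class StaticMeshAsset:
    # Stand-in for the heavy data stored once in the Content folder.
    name: str
    vertex_count: int


@dataclass
class PlacedProp:
    asset: StaticMeshAsset  # a reference, not a copy of the geometry
    location: tuple


# Hypothetical asset: one plate Mesh, placed six times along a table.
plate = StaticMeshAsset("SM_Plate", vertex_count=1200)
placements = [PlacedProp(plate, (x * 40.0, 0.0, 75.0)) for x in range(6)]

# Every placement points at the same asset object.
unique_assets = {id(p.asset) for p in placements}
print(len(placements), "placements share", len(unique_assets), "asset")
```

Updating the single asset updates every placement that references it, which is the iteration benefit described above.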

Naming

As with all digital projects, developing and sticking to a solid naming convention is an important task for anybody developing with UE4. Projects end up with thousands of individual assets, and they all share the .uasset extension. Naming your objects in your 3D application is easy and fast, but this isn’t quite the case in UE4.

You will undoubtedly come up with your own standards and system to accommodate your specific data pipelines, but it helps to be on the same page as the rest of the Unreal Engine 4 community as you will likely use and share content with them.

Basics

Don’t use spaces or special/Unicode characters when naming anything (Meshes, files, variables, and so on). Sometimes you can get away with breaking these rules, but it makes things tough later when you encounter a system that won’t accept the space or special character.

UE4 Naming Convention

A basic naming scheme for Unreal content has been used over the last 15 years by Epic Games and developers using Unreal Engine. It follows the basic convention of

Prefix_AssetName_Suffix

If you looked through any of the content included with UE4, you have already seen this convention.

Prefix

The Prefix is a short one- or two-letter code that identifies the type of content the asset is. Common examples are M_ for Materials and SM_ for Static Meshes. You can see that it’s usually a simple acronym or abbreviation, but sometimes conflicts or tradition require others like SK_ for Skeletal Meshes. (A complete list is available on the companion website at www.TomShannon3D.com/unreal4Viz)

AssetName

AssetName describes the object in a simple, understandable manner. WoodFloor, Stone, Concrete, Asphalt, and Leather are all great examples.

Suffix

A few classes of assets have some subtle variations that are important to note in the name. The most common example is Texture assets. Although they all share the same T_ prefix, different kinds of textures are intended for specific uses in the Engine such as normal maps and roughness maps. Using a suffix helps describe these differences while keeping all the Texture objects grouped neatly.

Examples

You could find the examples shown in Table 3.1 in any common UE4 project.

Table 3.1 Example Asset Names

| Asset Name | Note |
| --- | --- |
| T_Flooring_OakBig_D | Base Color (diffuse) Texture of big, oak flooring |
| T_Flooring_OakBig_N | Normal map Texture of big, oak flooring |
| MI_Flooring_OakBig | Material Instance using the big, oak flooring Textures |
| M_Flooring_MasterMaterial | The Material that the big, oak flooring Material Instance is parented to |
| SM_Floor_1stFloor | Static Mesh that the MI_Flooring_OakBig Material Instance is applied to |

There are dozens of content types and variations in UE4, each with a different possible naming scheme. You can go to www.TomShannon3D.com/UnrealForViz for a direct link to a community-driven list of all the suggested prefixes and suffixes.

Although this list is exhaustive, none of these naming rules are enforced in any way. What’s most important is consistency. Develop a system and stick to it.
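Because nothing enforces the convention, a small script can help keep a team consistent. The following is a minimal sketch that assumes names of the form Prefix_AssetName with an optional suffix. The prefix set is a hypothetical subset taken from the examples in this chapter, not the full community list, and the function name is made up for illustration.

```python
import re

# Hypothetical subset of prefixes: only the ones mentioned in this chapter.
KNOWN_PREFIXES = {"T", "M", "MI", "SM", "SK"}

# Letters, digits, and underscores only: no spaces or special characters.
NAME_PATTERN = re.compile(r"^([A-Za-z]+)_([A-Za-z0-9_]+)$")


def check_asset_name(name):
    """Return a list of problems found in an asset name (empty if none)."""
    match = NAME_PATTERN.match(name)
    if match is None:
        return ["expected Prefix_AssetName using only letters, digits, and underscores"]
    prefix = match.group(1)
    if prefix not in KNOWN_PREFIXES:
        return ["unknown prefix: " + prefix]
    return []


for candidate in ["T_Flooring_OakBig_D", "SM_Floor_1stFloor", "Oak Floor", "XX_Table"]:
    print(candidate, check_asset_name(candidate))
```

A check like this could run over a list of exported file names before import. Extend the prefix set to match whatever standard your team settles on.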

UV Mapping

UV mapping is a challenge for all 3D artists and even more so for visualization. Most visualization rendering can make do with some very poor UVW mapping, and sometimes none. UE4 simply cannot.

UVW coordinates are used for a litany of features and effects, from the obvious, such as applying textures to surfaces, to the less obvious but equally important, such as Lightmap coordinates.

All of your 3D assets will need quality, consistent UVW coordinates. For simple geometry, this can be as easy as applying a UVW Map modifier to your geometry; more complicated geometry will be a more involved, manual process. Don’t fear; most of the time you can get good results easily. Just keep an eye on your UV coordinates when you are working with UE4. If you have rendering issues that are difficult to diagnose, check your UV coordinates.

Real-World Scale

Many 3D applications can use a real-world scale UV coordinate system, where the texture is scaled in the material, rather than the UV coordinates. Although this is completely possible to accomplish in UE4, it’s not the best option.

If your scenes are already mapped to real-world scale, you should scale your UV coordinates using a modifier (Scale UVW in Max) or manually scale the UV vertices in a UV Editor.
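The scaling itself is a simple division. The sketch below assumes the real-world UVs are stored in scene units (centimeters here) and that one texture tile is meant to cover a known width and height. Both the units and the function name are assumptions for illustration.

```python
def scale_real_world_uvs(uvs, tile_width, tile_height):
    """Convert UVs stored in scene units into tile-relative UVs.

    tile_width and tile_height are the real-world size one texture tile
    should cover, in the same units as the incoming UVs.
    """
    return [(u / tile_width, v / tile_height) for u, v in uvs]


# A 400 x 250 cm wall mapped at real-world scale, with a texture
# intended to cover 200 x 200 cm per tile.
wall_uvs = [(0.0, 0.0), (400.0, 0.0), (400.0, 250.0), (0.0, 250.0)]
print(scale_real_world_uvs(wall_uvs, 200.0, 200.0))
```

After scaling, the texture repeats twice across the wall and 1.25 times up it, and the material no longer needs to know the real-world size.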

World-Projection Mapping

A common “cheat” for visualization is to use world-projected textures (textures projected along the XYZ world coordinates) to cover complex, static models quickly with tiling textures. This method doesn’t require well-authored UV coordinates for good results. UE4 has methods for doing this and I rely on them heavily in my own projects.

It’s important to note that if you are planning to use pre-calculated lighting with Lightmass, you still need to provide good, explicit Lightmap UV coordinates, even when using world-projection mapping. This is because Lightmass stores the lighting information in texture maps that need unique UV space to render correctly.

Tiling Versus Unique Coordinates

Most visualizations make extensive use of tiling textures. In truth, the texture does not tile; rather the UV coordinates are set or being modified by the material to allow tiling. A tiled UVW coordinate set allows UV faces to overlap and for vertices to go outside the 0–1 UV space. By the nature of UV coordinates, going outside the 0–1 range results in tiling. Essentially, 0.2, 1.2, and 2.2 all sample the same pixel.
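The wrap-around behavior can be sketched in a few lines. This is a simplified model of a tiling texture lookup, with no filtering and a hypothetical 256-pixel-wide texture. It is not how UE4 or a GPU implements sampling internally.

```python
import math


def wrap_uv(value):
    """Wrap a UV coordinate into the 0-1 range, as a tiling lookup would."""
    return value - math.floor(value)


def texel_index(value, texture_size):
    """Return the texel column (or row) that a UV coordinate lands on."""
    return int(wrap_uv(value) * texture_size) % texture_size


# 0.2, 1.2, and 2.2 all land on the same texel of a 256-pixel-wide texture.
for u in (0.2, 1.2, 2.2):
    print(u, texel_index(u, 256))
```

Only the fractional part of the coordinate matters, which is why faces can sit anywhere in UV space and still show the same repeating texture.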

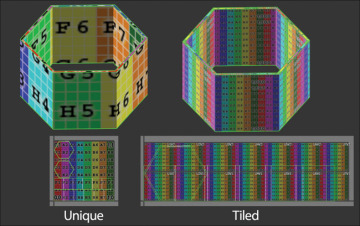

Unique UV coordinates are defined by having no coordinates outside 0–1 and each face occupies a unique area in the UV map space without overlaps. This is useful when you are “baking” information into the texture map, because each pixel can correspond to a specific location on the surface of the model (see Figure 3.3).

Figure 3.3 Unique versus tiled UV coordinates

Common examples of baking data into textures are normal maps generated from high-polygon models using reprojection or, in UE4, using Lightmass to record lighting and Global Illumination (GI) information into texture maps called Lightmaps.

Multiple UV Channels

Unreal Engine 4 supports, encourages, and in some cases requires multiple UV channels to allow texture blending and other Engine-level features such as baked lighting with Lightmass.

3D applications can often have trouble visualizing multiple UV channels in the Viewport, and the workflow is typically clunky and often left unused in favor of using larger, more detailed textures. That approach isn’t an option in UE4; massive textures can consume huge amounts of VRAM and make scenes bog down.

Instead, make use of multiple UV channels and layered UV coordinates in your materials to add detail to large objects.

Lightmap Coordinates

Making Lightmap UV coordinates can seem like an onerous task. Have no fear: Lightmap coordinates are easy to author and are simply a second set of UV coordinates that follow a few rules:

Coordinates need to be unique and must not overlap or tile. If faces overlap, Lightmass can’t decide which face’s lighting information to record into the pixel, creating terrible-looking errors.

A space must exist between UV charts (groups of attached faces in the UV Editor). This is called padding and ensures that pixels from one triangle don’t bleed into adjacent triangles.

The last major rule is twofold: avoid splitting your Lightmap UVs across smooth faces, because this can cause an unsightly seam, and do split your coordinates along Smoothing Group boundaries to keep light from bleeding across Smoothing Groups.

If your project uses dynamic lighting exclusively, you probably don’t need to worry about Lightmap coordinates at all!
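The uniqueness and padding rules can be checked roughly with a short script. The sketch below treats each UV chart as a list of (u, v) points and compares axis-aligned bounding boxes, which is a conservative stand-in for real chart shapes. The function names and the padding value are assumptions for illustration, and the Smoothing Group rule is not covered.

```python
def chart_bounds(chart):
    """Return (min_u, min_v, max_u, max_v) for a list of (u, v) points."""
    us = [u for u, _ in chart]
    vs = [v for _, v in chart]
    return min(us), min(vs), max(us), max(vs)


def check_lightmap_charts(charts, padding):
    """Report charts that leave 0-1 or sit closer together than the padding."""
    problems = []
    bounds = [chart_bounds(chart) for chart in charts]
    for i, (u0, v0, u1, v1) in enumerate(bounds):
        if u0 < 0.0 or v0 < 0.0 or u1 > 1.0 or v1 > 1.0:
            problems.append(f"chart {i} leaves the 0-1 range")
    for i in range(len(bounds)):
        for j in range(i + 1, len(bounds)):
            a, b = bounds[i], bounds[j]
            # Gap along each axis; a negative gap means overlap on that axis.
            gap_u = max(a[0], b[0]) - min(a[2], b[2])
            gap_v = max(a[1], b[1]) - min(a[3], b[3])
            if max(gap_u, gap_v) < padding:
                problems.append(f"charts {i} and {j} overlap or are too close")
    return problems


floor = [(0.0, 0.0), (0.4, 0.0), (0.4, 0.4), (0.0, 0.4)]
wall = [(0.5, 0.0), (0.9, 0.0), (0.9, 0.4), (0.5, 0.4)]
stray = [(0.3, 0.3), (0.6, 0.3), (0.6, 0.6)]

print(check_lightmap_charts([floor, wall], padding=0.05))
print(check_lightmap_charts([floor, stray], padding=0.05))
```

Bounding boxes can flag charts that interlock without touching, so treat a report from this kind of check as a prompt to look at the UV Editor, not as a verdict.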

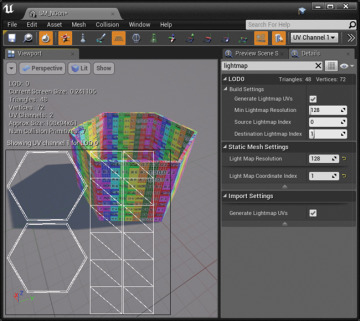

Auto Generate Lightmap UVs

The Auto Generate Lightmap UVs import option is one of the biggest timesavers for preparing models for lighting with Lightmass. I can’t recommend it enough. It generates high-quality Lightmap coordinates quickly on import (or after) and is artist-tweakable for optimal results (see Figure 3.4).

Figure 3.4 Autogenerated UV coordinates with Lightmap UV coordinates overlaid (note the efficiency versus Figure 3.2)

In a broad sense, the Auto Generate Lightmap UVs system is simply a repack and normalization operation that takes a source channel and packs the existing UV charts into the 0–1 UV space as efficiently as possible. It also adds the correct amount of pixel padding between the charts based on the intended Lightmap resolution as set by the Min Lightmap Resolution setting. (Lower-resolution Lightmaps have larger pixels and need larger spaces between charts; higher-resolution Lightmaps, conversely, have smaller pixels and need smaller spaces.)
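The relationship between resolution and padding is plain arithmetic: one Lightmap pixel covers 1/resolution of the 0–1 UV space. The sketch below assumes a single pixel of padding for illustration. The amount UE4 actually applies when packing may differ.

```python
def padding_in_uv_units(lightmap_resolution, padding_pixels=1):
    """Return the UV-space gap that a given pixel padding corresponds to."""
    return padding_pixels / lightmap_resolution


# Lower resolutions need a wider gap in UV space for the same pixel padding.
for resolution in (32, 64, 256, 1024):
    print(resolution, padding_in_uv_units(resolution))
```

At 32 pixels the gap is 0.03125 of the UV space, while at 1024 pixels it shrinks to under 0.001, which is why UVs packed for a high resolution can bleed when the Lightmap resolution is lowered.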

This only requires that the coordinates in your base UV channel follow the rules about being split along Smoothing Groups and don’t have stretching. They can be any scale, be overlapped, and more, if they are cleanly mapped to begin with.

Level of Detail (LOD)

UE4 has fantastic LOD support. LOD is the process of switching out 3D models with lower-detail versions as they recede into the distance and become smaller on-screen.
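The switching idea can be sketched as a lookup against a list of size thresholds. This is a simplified model and not UE4's actual selection code. The threshold values and the function name are hypothetical.

```python
def select_lod(screen_size, switch_sizes):
    """Pick an LOD index from an object's on-screen size.

    screen_size: fraction of the screen the object covers (0.0 to 1.0).
    switch_sizes: descending sizes below which the next lower-detail
    LOD takes over. LOD 0 is the full-detail model.
    """
    lod = 0
    for size in switch_sizes:
        if screen_size < size:
            lod += 1
    return lod


# Hypothetical thresholds for a Mesh with four LODs (0 through 3).
thresholds = (0.5, 0.25, 0.1)
for coverage in (0.8, 0.3, 0.2, 0.05):
    print(coverage, "-> LOD", select_lod(coverage, thresholds))
```

An object filling most of the screen stays on LOD 0, and each threshold it shrinks past steps it down to a cheaper model.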

If you are developing content for mobile or VR, LOD and model optimization are incredibly important. Even if you’re running on high-end hardware, consider creating LOD models for your high-polygon props. For Meshes like vehicles, people, trees, and other vegetation that are used hundreds of times each in a large scene, having efficient LOD models will mean you can keep more assets on-screen, creating a more detailed simulation.

UE4 fully supports LOD chains from applications that support exporting these to FBX. That means you can author your LODs in your favorite 3D package and import them all at once into UE4. You can also manually import LODs by exporting several FBX files and importing them from the Editor.

Automatic LOD Creation

UE4 supports creating LODs directly in the Editor. Much like Lightmap UV generation, this system is built specifically by and for UE4 and can produce great-looking LODs with almost no artist intervention needed.

To generate LOD Meshes in the Editor, simply assign an LOD Group, either in the Static Mesh Editor or at import. I recommend taking a look at it for your higher-density Meshes, especially if you are targeting mobile or VR platforms.

Several third-party plugins, such as Simplygon (https://www.simplygon.com) and InstaLOD (http://www.instalod.io/), can further automate the entire LOD generation process and have more powerful reduction abilities than UE4’s built-in solution.

If you regularly deal with heavily tessellated Meshes, I recommend considering integrating these plugins into your UE4 pipeline. They are far more robust than the optimization systems in most 3D applications, and the time saved using automatic LOD generation can be significant, not to mention the obvious performance benefits of reducing the total number of vertices being transformed and rendered each frame.

Collision

Collision is a complex subject in UE4. Interactive actors collide and simulate physics using a combination of per-polygon and low-resolution proxy geometry (for speed) and various settings to determine what they collide with and what they can pass through (for example, a rocket being blocked by a shield your character can pass through).

Fortunately, because most visualization does not require complex physics interactions between warring factions, you can use a simplified approach to collision preparation.

Architecture Mesh Collision

For large, unique architecture you can simply use per-polygon (complex) collision. Walls, floors, roads, terrains, sidewalks, and so on can use this easily without incurring a huge performance penalty. Keep in mind that the denser the Mesh the more expensive this operation is, so use good judgment, and break apart larger Meshes to avoid performance issues.

Prop Mesh Collision

For smaller or more detailed Meshes, per-polygon collision is too memory and performance intensive. You should instead use a low-resolution Collision Proxy Mesh or Simple collision.

You might opt to generate your own low-polygon proxy Collision Meshes in a 3D package, or in the UE4 Editor using manually placed primitives (boxes, spheres, capsules). You should also take a look at the automatic collision generation options the Editor provides.

Convex Decomposition (Auto Convex Collision)

UE4 also offers a great automatic collision generation system in the Editor called Convex Decomposition. This feature uses a fancy voxelization system that breaks down polygon objects into 3D grids to create good quality collision primitives. It even works on complicated Meshes, and I highly recommend it for keeping the collision geometry on your props lightweight.

Do not confuse convex decomposition with the option to automatically generate collision during the import process. That system is a legacy system from UE3 and is unusable for most visualization purposes because it doesn’t handle large, irregular, or elongated shapes well.

Convex Decomposition is not enabled on import because it can be very slow for large Meshes or Meshes with lots of triangles. For these, you should author a traditional collision shell in your 3D application and import it with your model.

You can find more information about generating collisions in the official documentation and at www.TomShannon3d.com/UnrealForViz.

Pivot Point

UE4 imports pivots differently than you might expect. UE4 lets you choose between two options for your pivot points on import via the Transform Vertex to Absolute option in the Static Mesh importer. You can either have the pivot set where it is in your 3D application (by setting Transform Vertex to Absolute to false) or you can force the pivot point to be imported at the scene’s 0,0,0 origin, ignoring the defined pivot point completely (by setting Transform Vertex to Absolute to true).

You might wonder why in the world you would want to override your object’s pivot point, but doing so can be a huge time saver. By using a common pivot point, you can place all Architecture Meshes in your scene easily and reliably by putting them into the Level and setting the position to 0,0,0; they will all line up perfectly.

This works because you don’t typically move your Architecture Meshes around, so where they rotate and scale from is irrelevant. What’s important is their accurate position in 3D space.
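The effect of the two import choices can be modeled with a little vector arithmetic. The sketch below is a simplified stand-in for the importer, not UE4 code. The function names and the wall coordinates are hypothetical.

```python
def import_mesh(world_vertices, authored_pivot, transform_vertex_to_absolute):
    """Return (pivot, local_vertices) for the two import choices.

    With the option on, the pivot is forced to the scene origin and the
    vertices keep their scene positions. With it off, vertices are stored
    relative to the pivot authored in the 3D application.
    """
    pivot = (0.0, 0.0, 0.0) if transform_vertex_to_absolute else authored_pivot
    local = [tuple(v - p for v, p in zip(vertex, pivot)) for vertex in world_vertices]
    return pivot, local


def place(local_vertices, location):
    """Return where the vertices end up when the Mesh is placed at a location."""
    return [tuple(v + l for v, l in zip(vertex, location)) for vertex in local_vertices]


# Hypothetical wall modeled in place, well away from the scene origin.
wall = [(500.0, 200.0, 0.0), (900.0, 200.0, 0.0), (900.0, 200.0, 300.0)]
wall_pivot = (500.0, 200.0, 0.0)

# Architecture: origin pivot, so dropping the Mesh at 0,0,0 lines it up.
_, absolute_local = import_mesh(wall, wall_pivot, True)
print(place(absolute_local, (0.0, 0.0, 0.0)) == wall)

# Authored pivot: the same Mesh must be placed at its pivot location instead.
_, relative_local = import_mesh(wall, wall_pivot, False)
print(place(relative_local, wall_pivot) == wall)
```

With the origin pivot, every Architecture Mesh shares the same placement location, so no per-object positions need to be carried over from the source scene.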

Prop Meshes are the opposite. As you place props in your scenes, the pivot point is essential for moving, rotating, and scaling them into place.

To get the pivot point in UE4 to match your 3D application, you have a couple of options. You can model all your props at 0,0,0, or move each one to the origin as you export. Newer versions of UE4 (4.13 and up) allow you to override the default import behavior and use the Meshes’ authored pivot point as well. This is usually the best bet to ensure your pivot points match.