1.7 Speed

So far we have elaborated on many of the considerations involved in designing large distributed systems. For web and other interactive services, one item may be the most important: speed. It takes time to get information, store information, compute and transform information, and transmit information. Nothing happens instantly.

An interactive system requires fast response times. Users tend to perceive anything faster than 200 ms as instant. They also prefer fast over slow. Studies have documented sharp drops in revenue when delays of as little as 50 ms were artificially added to web sites. Time is also important in batch and non-interactive systems, where the total throughput must meet or exceed the incoming flow of work.

The general strategy for designing a performant system is to start from our best estimates of how quickly it will be able to process a request and then to build prototypes to test those assumptions. If we are wrong, we go back to step one; at least the next iteration will be informed by what we have learned. As we build the system, we remeasure and adjust the design if we discover that our estimates and prototypes have not guided us as well as we had hoped.

At the start of the design process we often create many designs, estimate how fast each will be, and eliminate the ones that are not fast enough. We do not automatically select the fastest design. The fastest design may be considerably more expensive than one that is sufficient.
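The selection rule described above can be sketched in a few lines of Python. This is only an illustration: the `Design` class, the `choose_design` helper, and all of the names, timings, and costs below are hypothetical placeholders, not figures from a real system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Design:
    name: str
    estimated_ms: float  # estimated response time for a typical request
    cost: float          # relative cost, in arbitrary units


def choose_design(designs: list[Design], budget_ms: float) -> Optional[Design]:
    """Return the cheapest design that fits the time budget, or None."""
    fast_enough = [d for d in designs if d.estimated_ms <= budget_ms]
    # Among the designs that are sufficient, prefer the cheapest one,
    # not the fastest one.
    return min(fast_enough, key=lambda d: d.cost, default=None)


# Hypothetical candidates, for illustration only.
candidates = [
    Design("A", estimated_ms=120, cost=9),  # fastest, most expensive
    Design("B", estimated_ms=250, cost=3),  # sufficient and cheaper
    Design("C", estimated_ms=340, cost=1),  # cheapest, but too slow
]

chosen = choose_design(candidates, budget_ms=300)
print(chosen.name if chosen else "no design is fast enough")
```

With these placeholder numbers the helper eliminates design C for being too slow and then picks B over A because B is sufficient and costs less.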

How do we determine if a design is worth pursuing? Building a prototype is very time consuming. Much can be deduced with some simple estimating exercises. Pick a few common transactions and break them down into smaller steps, and then estimate how long each step will take.

Two of the biggest consumers of time are disk access and network delays.

Disk accesses are slow because they involve mechanical operations. To read a block of data from a disk, the read arm must move to the right track; the platter must then spin until the desired block is under the read head. This process typically takes 10 ms. Compare this to reading the same amount of information from RAM, which takes about 0.002 ms, or roughly 5,000 times faster. The arm and platters (known as a spindle) can process only one request at a time. However, once the head is on the right track, it can read many sequential blocks. Therefore reading two blocks is often nearly as fast as reading one block if the two blocks are adjacent. Solid-state drives (SSDs) do not have mechanical spinning platters and are much faster, though more expensive.

Network access is slow because it is limited by the speed of light. It takes approximately 75 ms for a packet to get from California to the Netherlands. About half of that journey time is due to the speed of light. Additional delays may be attributable to processing time on each router, the electronics that convert from wired to fiber-optic communication and back, the time it takes to assemble and disassemble the packet on each end, and so on.
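As a rough sanity check on the claim that about half the journey is due to the speed of light, the propagation delay can be estimated directly. The sketch below assumes a great-circle distance of roughly 8,800 km between California and the Netherlands and a refractive index of about 1.47 for optical fiber; both are approximations introduced here, and real cable routes are longer than the great-circle path.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458  # in a vacuum
FIBER_REFRACTIVE_INDEX = 1.47          # assumed typical value for optical fiber
DISTANCE_KM = 8_800                    # assumed approximate great-circle distance


def propagation_ms(distance_km: float, refractive_index: float = 1.0) -> float:
    """One-way propagation delay in milliseconds over the given distance."""
    speed_km_per_s = SPEED_OF_LIGHT_KM_PER_S / refractive_index
    return distance_km / speed_km_per_s * 1000


vacuum_ms = propagation_ms(DISTANCE_KM)
fiber_ms = propagation_ms(DISTANCE_KM, FIBER_REFRACTIVE_INDEX)

print(f"In a vacuum: {vacuum_ms:.1f} ms")
print(f"In fiber:    {fiber_ms:.1f} ms")
```

Under these assumptions the physical floor falls somewhere between roughly 30 and 45 ms, which is in line with "about half" of the observed 75 ms.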

Two computers on the same network segment might seem as if they communicate instantly, but that is not really the case. Here the time scale is so small that other delays are a bigger factor. For example, when transmitting data over a local network, the first byte arrives quickly but the program receiving the data usually does not process it until the entire packet is received.

In many systems computation takes little time compared to the delays from network and disk operation. As a result you can often estimate how long a transaction will take if you simply know the distance from the user to the datacenter and the number of disk seeks required. Your estimate will often be good enough to throw away obviously bad designs.
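A back-of-the-envelope estimator along these lines might look like the following sketch. The function name and its parameters are illustrative assumptions; the 10 ms seek time and 75 ms one-way delay are the approximations used in this section, and the estimate assumes a single round trip between user and datacenter.

```python
DISK_SEEK_MS = 10.0  # approximate time for one seek on a spinning disk


def estimate_transaction_ms(one_way_network_ms: float, disk_seeks: int) -> float:
    """Rough latency estimate: one network round trip plus the disk seeks.

    Computation time is deliberately ignored because it is usually small
    compared to network and disk delays.
    """
    round_trip_ms = 2 * one_way_network_ms
    return round_trip_ms + disk_seeks * DISK_SEEK_MS


# Example: a user 75 ms away (one way) and a transaction needing 7 seeks.
estimate_ms = estimate_transaction_ms(one_way_network_ms=75.0, disk_seeks=7)
print(f"Estimated transaction time: {estimate_ms:.0f} ms")
```

An estimate this crude will not pick between two close designs, but it is usually enough to rule out one that is several times over budget.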

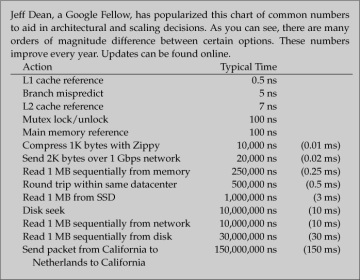

To illustrate this, imagine you are building an email system that needs to be able to retrieve a message from the message storage system and display it within 300 ms. We will use the time approximations listed in Figure 1.10 to help us engineer the solution.

Figure 1.10: Numbers every engineer should know

First we follow the transaction from beginning to end. The request comes from a web browser that may be on another continent. The request must be authenticated, the database index is consulted to determine where to get the message text, the message text is retrieved, and finally the response is formatted and transmitted back to the user.

Now let’s budget for the items we can’t control. To send a packet between California and Europe typically takes 75 ms, and until physics lets us change the speed of light that won’t change. Our 300 ms budget is reduced by 150 ms since we have to account for not only the time it takes for the request to be transmitted but also the reply. That’s half our budget consumed by something we don’t control.

We talk with the team that operates our authentication system and they recommend budgeting 3 ms for authentication.

Formatting the data takes very little time—less than the slop in our other estimates—so we can ignore it.

This leaves 147 ms for the message to be retrieved from storage. If a typical index lookup requires 3 disk seeks (10 ms each) and reads about 1 megabyte of information (30 ms), that is 60 ms. Reading the message itself might require 4 disk seeks (40 ms) and reading about 2 megabytes of information (60 ms), for a total of 100 ms. Together the two steps take 160 ms, which is more than our remaining 147 ms budget.
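The budget arithmetic above can be written out as a short script, which makes it easy to rerun when an estimate changes. The constant and function names are illustrative; the values are the approximations used in this example.

```python
BUDGET_MS = 300.0
ONE_WAY_NETWORK_MS = 75.0     # California to Europe, one direction
AUTH_MS = 3.0                 # recommended by the authentication team
DISK_SEEK_MS = 10.0           # one disk seek
READ_1MB_FROM_DISK_MS = 30.0  # sequential read of 1 megabyte from disk


def disk_read_ms(seeks: int, megabytes: float) -> float:
    """Time to perform the given seeks and then read the data from disk."""
    return seeks * DISK_SEEK_MS + megabytes * READ_1MB_FROM_DISK_MS


network_ms = 2 * ONE_WAY_NETWORK_MS                    # request plus reply
storage_budget_ms = BUDGET_MS - network_ms - AUTH_MS   # what is left for storage

index_ms = disk_read_ms(seeks=3, megabytes=1)          # index lookup
message_ms = disk_read_ms(seeks=4, megabytes=2)        # message retrieval
storage_ms = index_ms + message_ms

print(f"Storage budget: {storage_budget_ms:.0f} ms")
print(f"Storage needed: {storage_ms:.0f} ms")
print("Within budget" if storage_ms <= storage_budget_ms else "Over budget")
```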

While disappointed that our design did not meet the design parameters, we are happy that disaster has been averted. Better to know now than to find out when it is too late.

It seems like 60 ms for an index lookup is a long time. We could improve that considerably. What if the index were held in RAM? Is this possible? Some quick calculations estimate that the lookup tree would have to be 3 levels deep to fan out to enough machines to span this much data. Going up and down the tree takes 5 packets, or about 2.5 ms if they are all within the same datacenter. The new total (150 ms network + 3 ms authentication + 2.5 ms index lookup + 100 ms message retrieval = 255.5 ms) is less than our total 300 ms budget.
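The revised estimate can be checked the same way. In this sketch the 0.5 ms per packet is inferred from the figure of 5 packets taking about 2.5 ms within one datacenter; the variable names are illustrative.

```python
BUDGET_MS = 300.0
NETWORK_ROUND_TRIP_MS = 150.0   # 75 ms each way between California and Europe
AUTH_MS = 3.0
MESSAGE_READ_MS = 100.0         # 4 disk seeks plus reading about 2 megabytes
DATACENTER_PACKET_MS = 0.5      # inferred: 5 packets take about 2.5 ms
INDEX_TREE_PACKETS = 5          # up and down a 3-level lookup tree

ram_index_ms = INDEX_TREE_PACKETS * DATACENTER_PACKET_MS
total_ms = NETWORK_ROUND_TRIP_MS + AUTH_MS + ram_index_ms + MESSAGE_READ_MS
headroom_ms = BUDGET_MS - total_ms

print(f"Index lookup in RAM: {ram_index_ms} ms")
print(f"New total: {total_ms} ms (headroom: {headroom_ms} ms)")
```

Moving the index into RAM replaces the 60 ms disk-based lookup with a few milliseconds of network traffic, which is what brings the design under budget.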

We would repeat this process for other requests that are time sensitive. For example, we send email messages less frequently than we read them, so the time to send an email message may not be considered time critical. In contrast, deleting a message happens almost as often as reading messages. We might repeat this calculation for a few deletion methods to compare their efficiency.

One design might contact the server and delete the message from the storage system and the index. Another design might have the storage system simply mark the message as deleted in the index. This would be considerably faster but would require a new element that would reap messages marked for deletion and occasionally compact the index, removing any items marked as deleted.
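The two deletion designs can be contrasted with a toy in-memory model. Everything here is a hypothetical sketch: the `MessageStore` class and its method names are invented for illustration, and plain dictionaries stand in for the storage system and the index.

```python
class MessageStore:
    """Toy model: one dict stands in for storage, another for the index."""

    def __init__(self) -> None:
        self._bodies: dict[str, str] = {}   # message_id -> message text
        self._index: dict[str, bool] = {}   # message_id -> marked deleted?

    def add(self, message_id: str, body: str) -> None:
        self._bodies[message_id] = body
        self._index[message_id] = False

    def get(self, message_id: str):
        # Missing and marked-deleted messages both look deleted to the user.
        if self._index.get(message_id, True):
            return None
        return self._bodies[message_id]

    def delete_immediately(self, message_id: str) -> None:
        # Design 1: remove from both the storage system and the index now.
        self._bodies.pop(message_id, None)
        self._index.pop(message_id, None)

    def mark_deleted(self, message_id: str) -> None:
        # Design 2: only flip a flag in the index; storage is untouched.
        if message_id in self._index:
            self._index[message_id] = True

    def reap(self) -> int:
        # The extra element design 2 requires: remove marked messages
        # from storage and compact the index. Returns how many were reaped.
        doomed = [mid for mid, deleted in self._index.items() if deleted]
        for mid in doomed:
            del self._bodies[mid]
            del self._index[mid]
        return len(doomed)

    def stored_count(self) -> int:
        return len(self._bodies)


store = MessageStore()
for mid in ("m1", "m2", "m3"):
    store.add(mid, f"body of {mid}")

store.mark_deleted("m1")         # fast: index update only
store.delete_immediately("m2")   # slower: touches storage and index
print(store.get("m1"), store.stored_count())  # m1 is hidden but still stored
print(store.reap(), store.stored_count())     # the reaper reclaims the space
```

The trade-off is visible in the model: marking is a single index write on the request path, while the cost of actually removing data moves to the background reaper.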

Even faster response time can be achieved with an asynchronous design. That means the client sends requests to the server and quickly returns control to the user without waiting for the request to complete. The user perceives this system as faster even though the actual work is lagging. Asynchronous designs are more complex to implement. The server might queue the request rather than actually performing the action. Another process reads requests from the queue and performs them in the background. Alternatively, the client could simply send the request and check for the reply later, or allocate a thread or subprocess to wait for the reply.
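The queue-based variant can be sketched with Python's standard `queue` and `threading` modules. The function names, the message IDs, and the 50 ms simulated delay are assumptions made for illustration; a production system would more likely use a durable queue than an in-process one.

```python
import queue
import threading
import time

SIMULATED_WORK_SECONDS = 0.05  # stand-in for a slow storage operation


def perform_delete(message_id: str, completed: list) -> None:
    """Pretend to do the slow work, then record that it finished."""
    time.sleep(SIMULATED_WORK_SECONDS)
    completed.append(message_id)


def worker(requests: queue.Queue, completed: list) -> None:
    """Background process: read requests from the queue and perform them."""
    while True:
        message_id = requests.get()
        if message_id is None:      # sentinel value: shut down
            requests.task_done()
            break
        perform_delete(message_id, completed)
        requests.task_done()


requests: queue.Queue = queue.Queue()
completed: list = []
worker_thread = threading.Thread(target=worker, args=(requests, completed))
worker_thread.start()


def request_delete(message_id: str) -> None:
    """What the user-facing path does: enqueue and return immediately."""
    requests.put(message_id)


start = time.perf_counter()
for mid in ("m1", "m2", "m3"):
    request_delete(mid)
enqueue_seconds = time.perf_counter() - start
print(f"Control returned to the user after {enqueue_seconds * 1000:.2f} ms")

requests.join()                     # wait here only for demonstration
print(f"Completed in the background: {completed}")

requests.put(None)                  # stop the worker cleanly
worker_thread.join()
```

The user-facing call returns as soon as the request is queued, while the actual deletions finish later in the background. The added complexity shows up in what the sketch leaves out: reporting failures back to the user and surviving a crash with requests still in the queue.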

All of these designs are viable, but each offers a different trade-off between speed and complexity of implementation. With speed and cost estimates, backed by prototypes, the business decision of which to implement can be made.