- Essential Activities Overview

- Architectural Decisions

- Quality Attributes

- Technical Debt

- Feedback Loops: Evolving an Architecture

- Common Themes in Today's Software Architecture Practice

- Summary

Feedback Loops: Evolving an Architecture

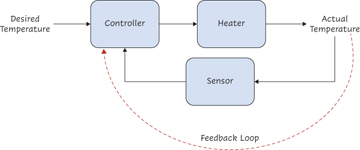

Feedback loops exist in all complex systems, from biological systems such as the human body to electrical control systems. The simplest way to think about feedback loops is that the output of any process is fed back as an input into the same process. An extremely simplified example is an electrical system that controls the temperature of a room (see Figure 2.8).

Figure 2.8 Feedback loop example

In this simple example, a sensor provides a reading of the actual temperature, which allows the system to keep the actual temperature as close as possible to the desired temperature.
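To make the thermostat example concrete, here is a minimal Python sketch of an on/off controller. The `Room` model, its heat-loss and heater-gain figures, and the tolerance band are assumptions chosen for illustration; a real control system would use an actual sensor signal and its own control law. The point is only that each sensor reading (the output) becomes the input to the next heating decision.

```python
from dataclasses import dataclass


@dataclass
class Room:
    """Toy room model (hypothetical numbers): heat leaks out, the heater adds heat when on."""
    temperature: float
    heat_loss_per_step: float = 0.5
    heater_gain_per_step: float = 1.5

    def step(self, heater_on: bool) -> None:
        self.temperature -= self.heat_loss_per_step
        if heater_on:
            self.temperature += self.heater_gain_per_step


def run_thermostat(room: Room, desired: float, band: float, steps: int) -> list[float]:
    """On/off controller: each sensor reading feeds the next heater decision."""
    readings = []
    heater_on = False
    for _ in range(steps):
        actual = room.temperature        # sensor reading of the actual temperature
        if actual < desired - band:
            heater_on = True             # too cold: switch the heater on
        elif actual > desired + band:
            heater_on = False            # too warm: switch the heater off
        # Inside the band the previous state is kept (hysteresis).
        room.step(heater_on)
        readings.append(round(room.temperature, 2))
    return readings


readings = run_thermostat(Room(temperature=15.0), desired=20.0, band=1.0, steps=40)
print(readings[-9:])
```

Once the loop settles, the temperature oscillates within a narrow range around the desired value instead of drifting away from it.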

Let us consider software development as a process, with the output being a system that ideally meets all functional requirements and desired quality attributes. The key goal of agile and DevOps has been to achieve greater flow of change while increasing the number of feedback loops in this process and minimizing the time between change happening and feedback being received. The ability to automate development, deployment, and testing activities is a key to this success. In Continuous Architecture, we emphasize the importance of frequent and effective feedback loops. Feedback loops are the only way that we can respond to the increasing demand to deliver software solutions in a rapid manner while addressing all quality attribute requirements.

What is a feedback loop? In simple terms, a process has a feedback loop when the results of running the process are used to improve how the process itself works in the future.

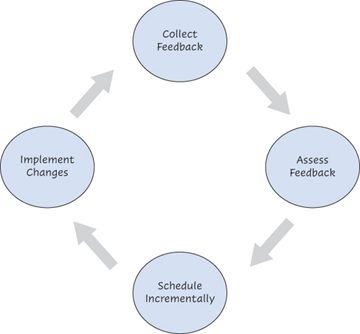

The steps of implementing a continuous feedback loop can be summarized as follows and are shown in Figure 2.9:

Figure 2.9 Continuous architecture feedback loop

1. Collect measurements: Metrics can be gathered from many sources, including fitness functions, deployment pipelines, production defects, testing results, and direct feedback from the users of the system. The key is not to start the process by implementing a complex dashboard that may take significant time and money to get up and running. Instead, collect a small number of meaningful measurements that are important for the architecture.

2. Assess: Form a multidisciplinary team that includes developers, operations, architects, and testers. The goal of this team is to analyze the output of the feedback, for example, to determine why a certain quality attribute is not being addressed.

3. Schedule incrementally: Determine incremental changes to the architecture based on the analysis. These changes can be categorized as either defects or technical debt. Again, this step is a joint effort involving all the stakeholders.

4. Implement changes: Make the scheduled changes, then go back to step 1 (collect measurements).
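The four steps can be sketched as a small loop. In this hypothetical Python example, each measurement carries an agreed upper limit, assessment flags the measurements outside that limit, and scheduling turns them into backlog items classified as defects or technical debt. The metric names, limits, and the rule for classifying a finding as a defect are all assumptions; in practice the multidisciplinary team makes those calls.

```python
from dataclasses import dataclass


@dataclass
class Measurement:
    name: str
    value: float
    limit: float  # hypothetical upper bound agreed by the team

    @property
    def out_of_range(self) -> bool:
        return self.value > self.limit


@dataclass
class BacklogItem:
    title: str
    category: str  # "defect" or "technical debt"


def collect(sources: dict[str, tuple[float, float]]) -> list[Measurement]:
    """Step 1: gather a small number of meaningful measurements."""
    return [Measurement(name, value, limit) for name, (value, limit) in sources.items()]


def assess(measurements: list[Measurement]) -> list[Measurement]:
    """Step 2: identify measurements that fall outside their allowable range."""
    return [m for m in measurements if m.out_of_range]


def schedule(findings: list[Measurement], defect_metrics: set[str]) -> list[BacklogItem]:
    """Step 3: turn findings into incremental backlog items."""
    return [
        BacklogItem(
            title=f"Address {m.name}: {m.value} > {m.limit}",
            category="defect" if m.name in defect_metrics else "technical debt",
        )
        for m in findings
    ]


# Hypothetical readings: name -> (measured value, allowed upper limit).
sources = {
    "p95_latency_ms": (340.0, 250.0),
    "open_production_defects": (2.0, 5.0),
    "cyclic_dependencies": (3.0, 0.0),
}
backlog = schedule(assess(collect(sources)), defect_metrics={"p95_latency_ms"})

# Step 4 (implement changes) happens in the normal delivery flow; the next
# iteration starts again at collect() with fresh measurements.
for item in backlog:
    print(item.category, "|", item.title)
```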

Feedback is essential for effective software delivery. Agile processes use some of the following tools to obtain feedback:

Pair programming

Unit tests

Continuous integration

Daily Scrums

Sprints

Demonstrations for product owners

From an architectural perspective, the most important feedback loop we are interested in is the ability to measure the impact of architectural decisions on the production environment. Additional measurements that will help improve the software system include the following:

Amount of technical debt being introduced/reduced over time or with each release

Number of architectural decisions being made and their impact on quality attributes

Adherence to existing guidelines or standards

Interface dependencies and coupling between components
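As one way to measure interface dependencies and coupling, the following sketch computes, for each component, its fan-in (afferent coupling, Ca), its fan-out (efferent coupling, Ce), and the instability ratio Ce / (Ca + Ce) described by Robert C. Martin. The component names and the dependency map are hypothetical; in a real system the map would be extracted from code or runtime traces, and comparing the report release over release turns it into a feedback loop.

```python
from collections import defaultdict

# Hypothetical component dependency map: component -> components it depends on.
dependencies = {
    "ui": {"orders", "documents"},
    "orders": {"payments", "documents"},
    "payments": set(),
    "documents": set(),
}


def coupling_report(deps: dict[str, set[str]]) -> dict[str, dict[str, float]]:
    fan_in: dict[str, int] = defaultdict(int)
    for targets in deps.values():
        for target in targets:
            fan_in[target] += 1
    report = {}
    for component, targets in deps.items():
        ca = fan_in[component]   # afferent coupling: how many components depend on this one
        ce = len(targets)        # efferent coupling: how many components this one depends on
        instability = ce / (ca + ce) if (ca + ce) else 0.0
        report[component] = {"ca": ca, "ce": ce, "instability": round(instability, 2)}
    return report


report = coupling_report(dependencies)
for name, metrics in report.items():
    print(name, metrics)
```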

This is not an exhaustive list, and our objective is not to develop a full set of measurements and associated feedback loops. Such an exercise would end up in a generic model that would be interesting but not useful outside of a specific context. We recommend that you think about which measurements and feedback loops are important in your context and focus on those. It is important to remember that a feedback loop measures some output and takes action to keep the measurement within an allowable range.

As architectural activities move closer to the development life cycle and are owned by the team rather than a separate group, it is important to integrate them as much as possible into the delivery life cycle. Linking architectural decisions and technical debt into the product backlogs, as discussed earlier, is one technique. Focusing on measurement and automation of architectural decisions and quality attributes is another aspect worth investigating.

One way to think about architectural decisions is to look at every decision as an assertion about a possible solution that needs to be tested and either proved valid or rejected. The quicker we can validate an architectural decision, ideally by executing tests, the more efficient we become. This validation activity is itself another feedback loop. Architectural decisions that are not validated quickly risk causing challenges as the system evolves.
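Treating a decision as a testable assertion can be sketched as follows. The `ArchitecturalDecision` structure, the two example decisions, and the latency figures are hypothetical; the sketch only shows that a decision recorded together with an executable check moves from proposed to validated or rejected as soon as the check runs.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ArchitecturalDecision:
    """A decision recorded as an assertion that can be tested (hypothetical structure)."""
    title: str
    assertion: str
    check: Callable[[], bool]    # executable test of the assertion
    status: str = "proposed"

    def validate(self) -> str:
        # Run the test; the decision is either validated or rejected.
        self.status = "validated" if self.check() else "rejected"
        return self.status


# Hypothetical measurements gathered from a prototype or test environment.
lookup_latencies_ms = [12.0, 18.0, 25.0, 40.0]
hop_latencies_ms = [80.0, 70.0, 90.0]

cache_decision = ArchitecturalDecision(
    title="Cache reference data in memory",
    assertion="Average lookup latency stays below 50 ms",
    check=lambda: sum(lookup_latencies_ms) / len(lookup_latencies_ms) < 50,
)
sync_decision = ArchitecturalDecision(
    title="Chain three synchronous service calls per request",
    assertion="End-to-end request time stays below 200 ms",
    check=lambda: sum(hop_latencies_ms) < 200,
)

for decision in (cache_decision, sync_decision):
    print(f"{decision.title}: {decision.validate()}")
```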

Fitness Functions

A key challenge for architects is finding an effective mechanism to feed back into the development process how the architecture is evolving to address quality attributes. In Building Evolutionary Architectures,28 Ford, Parsons, and Kua introduced the concept of the fitness function to address this challenge. They state that “an architectural fitness function provides an objective integrity assessment of some architectural characteristics,” where architectural characteristics are what we have defined as quality attributes of a system. Fitness functions resemble the architecturally significant quality attribute scenarios discussed earlier in the chapter.

In their book, they go into detail on how to define and automate fitness functions so that a continuous feedback loop regarding the architecture can be created.

The recommendation is to define the fitness functions as early as possible. Doing so enables the team to determine the quality attributes that are relevant to the software product. Building capabilities to automate and test the fitness functions also enables the team to test out different options for the architectural decisions it needs to make.
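A fitness function can be as simple as a check that turns a quality attribute into a pass/fail result. The Python sketch below is our own illustration, not the approach prescribed by Ford, Parsons, and Kua: it defines two hypothetical fitness functions, a p95 latency limit (using the nearest-rank percentile) and a maximum error rate, with thresholds and sample data invented for the example. In a deployment pipeline, a failing result could be reported to the team or used to stop a release.

```python
import math
from typing import NamedTuple


class FitnessResult(NamedTuple):
    name: str
    passed: bool
    detail: str


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile of a non-empty sample."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_fitness(samples: list[float], limit_ms: float) -> FitnessResult:
    p95 = percentile(samples, 95)
    return FitnessResult("p95 latency", p95 <= limit_ms, f"p95={p95} ms, limit={limit_ms} ms")


def error_rate_fitness(errors: int, requests: int, max_rate: float) -> FitnessResult:
    rate = errors / requests
    return FitnessResult("error rate", rate <= max_rate, f"rate={rate:.3f}, limit={max_rate}")


# Hypothetical results from an automated test run.
latency_samples = [float(ms) for ms in range(100, 200)]
results = [
    latency_fitness(latency_samples, limit_ms=250.0),
    error_rate_fitness(errors=12, requests=1000, max_rate=0.01),
]
failed = [r.name for r in results if not r.passed]

for r in results:
    print("PASS" if r.passed else "FAIL", r.name, r.detail)
```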

Fitness functions are inherently interlinked with the four essential activities we have discussed. They are a powerful tool that should be visible to all stakeholders involved in the software delivery life cycle.

Continuous Testing

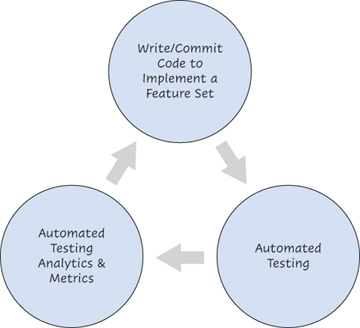

As previously mentioned, testing and automation are key to implementing effective feedback loops. Continuous testing implements a shift-left approach, which uses automated processes to significantly improve the speed of testing. This approach integrates the quality assurance and development phases. It includes a set of automated testing activities, which can be combined with analytics and metrics to provide a clear, fact-based picture of the quality attributes of the software being delivered. This process is illustrated in Figure 2.10.

Figure 2.10 Sample automated testing process

Leveraging a continuous testing approach provides project teams with feedback loops for the quality attributes of the software that they are building. It also allows them to test earlier and with greater coverage by removing testing bottlenecks, such as access to shared testing environments and having to wait for the user interface to stabilize. Some of the benefits of continuous testing include the following:

Shifting performance testing activities to the “left” of the software development life cycle (SDLC) and integrating them into software development activities

Integrating the testing, development, and operations teams in each step of the SDLC

Automating quality attribute testing (e.g., for performance) as much as possible to continuously test key capabilities being delivered

Providing business partners with early and continuous feedback on the quality attributes of a system

Removing test environment availability bottlenecks so that those environments are continuously available

Actively and continuously managing quality attributes across the whole delivery pipeline

Some of the challenges of continuous testing include creation and maintenance of test data sets, setup and updating of environments, time taken to run the tests, and stability of results during development.

Continuous testing relies on extensive automation of the testing and deployment processes and on ensuring that every component of the software system can be tested as soon as it is developed. For example, the following tactics29 can be used by the TFX team for continuous performance testing:

Designing API-testable services and components. Services need to be fully tested independently of the other TFX software system components. The goal is to fully test each service as it is built, so that there are very few unpleasant surprises when the services are put together during the full system testing process. The key question for architects following the Continuous Architecture approach when creating a new service should be, “Can this service be easily and fully tested as a standalone unit?”
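The following Python sketch shows one way to make a service testable as a standalone unit: its only dependency is expressed as an interface and injected through the constructor, so a test can supply an in-memory stand-in. `FeeQuoteService`, `RateProvider`, and the fee rule are hypothetical and are not part of the TFX design described in the text.

```python
from typing import Protocol


class RateProvider(Protocol):
    """Interface of a downstream dependency (hypothetical)."""

    def fee_rate(self, product: str) -> float: ...


class FeeQuoteService:
    """Hypothetical service whose only dependency is injected through its constructor."""

    def __init__(self, rates: RateProvider) -> None:
        self._rates = rates

    def quote(self, product: str, amount: float) -> dict:
        if amount <= 0:
            raise ValueError("amount must be positive")
        fee = round(amount * self._rates.fee_rate(product), 2)
        return {"product": product, "amount": amount, "fee": fee}


class FixedRates:
    """In-memory stand-in for the real dependency, used only in standalone tests."""

    def __init__(self, table: dict[str, float]) -> None:
        self._table = table

    def fee_rate(self, product: str) -> float:
        return self._table[product]


# Standalone tests exercise the service through its API alone; they can be
# collected by a test runner such as pytest or called directly.
def test_quote_applies_rate() -> None:
    service = FeeQuoteService(FixedRates({"standard": 0.01}))
    assert service.quote("standard", 2500.0)["fee"] == 25.0


def test_rejects_non_positive_amount() -> None:
    service = FeeQuoteService(FixedRates({"standard": 0.01}))
    try:
        service.quote("standard", 0)
    except ValueError:
        return
    raise AssertionError("expected ValueError")


if __name__ == "__main__":
    test_quote_applies_rate()
    test_rejects_non_positive_amount()
    print("all standalone tests passed")
```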

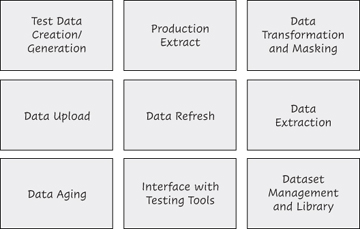

Architecting test data for continuous testing. Having a robust and fully automated test data management solution in place is a prerequisite for continuous testing. That solution needs to be properly architected as part of the Continuous Architecture approach. An effective test data management solution needs to include several key capabilities, summarized in Figure 2.11.

Figure 2.11 Test data management capabilities
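Two capabilities that test data management solutions commonly provide are reproducible synthetic data and masking of sensitive fields. The sketch below illustrates both under assumed field names; it is not meant to reproduce the set of capabilities shown in Figure 2.11. Seeding the generator gives every test run the same data set, and deriving pseudonyms from a salted hash keeps a masked identifier consistent wherever the original identifier appears.

```python
import hashlib
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    customer_id: str
    name: str
    country: str
    credit_limit: int


def synthetic_customers(count: int, seed: int) -> list[Customer]:
    """Generate reproducible synthetic records: the same seed yields the same data set."""
    rng = random.Random(seed)
    countries = ["US", "GB", "SG", "DE"]
    return [
        Customer(
            customer_id=f"C{i:05d}",
            name=f"Customer {i}",
            country=rng.choice(countries),
            credit_limit=rng.randrange(10_000, 1_000_000, 1_000),
        )
        for i in range(count)
    ]


def mask(customer: Customer, salt: str) -> Customer:
    """Replace identifying fields with stable pseudonyms so references still line up."""
    digest = hashlib.sha256((salt + customer.customer_id).encode()).hexdigest()[:10]
    return Customer(
        customer_id=f"M{digest}",
        name=f"Masked {digest[:6]}",
        country=customer.country,
        credit_limit=customer.credit_limit,
    )


first = synthetic_customers(3, seed=42)
second = synthetic_customers(3, seed=42)
masked = [mask(c, salt="test-env") for c in first]
print(first == second, masked[0])
```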

Leveraging an interface-mocking approach when some of the TFX services have not been delivered yet. Using an interface-mocking tool, the TFX team can create a virtual service by analyzing its service interface definition (inbound/outbound messages) as well as its runtime behavior. Once a mock interface has been created, it can be deployed to test environments and used to test the TFX software system until the actual service becomes available.
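Python's standard `unittest.mock.create_autospec` offers a lightweight, in-process analogue of this approach: it builds a mock from an interface definition and rejects calls whose signatures do not match. Dedicated service virtualization tools go further by deploying the virtual service into a test environment and replaying recorded runtime behavior, which this sketch only approximates with canned return values. `PaymentService` and `SettlementWorkflow` are hypothetical names.

```python
from unittest.mock import create_autospec


class PaymentService:
    """Interface definition of a service that has not been delivered yet (hypothetical)."""

    def initiate(self, account_id: str, amount: float, currency: str) -> dict:
        raise NotImplementedError

    def status(self, payment_id: str) -> str:
        raise NotImplementedError


class SettlementWorkflow:
    """Consumer under test; it only knows the PaymentService interface."""

    def __init__(self, payments: PaymentService) -> None:
        self._payments = payments

    def settle(self, account_id: str, amount: float) -> str:
        receipt = self._payments.initiate(account_id, amount, "USD")
        return self._payments.status(receipt["payment_id"])


# Build a mock from the interface definition; calls with a wrong signature
# raise TypeError, as they would against the real interface.
mock_payments = create_autospec(PaymentService, instance=True)
# Canned responses standing in for the service's runtime behavior.
mock_payments.initiate.return_value = {"payment_id": "P-001"}
mock_payments.status.return_value = "SETTLED"

workflow = SettlementWorkflow(mock_payments)
result = workflow.settle("ACC-42", 1250.0)
mock_payments.initiate.assert_called_once_with("ACC-42", 1250.0, "USD")

try:
    mock_payments.initiate("ACC-42")  # missing amount and currency
except TypeError as exc:
    print("rejected call:", exc)
```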