Volatility-Based Decomposition

The Method’s design directive is:

Decompose based on volatility.

Volatility-based decomposition identifies areas of potential change and encapsulates those into services or system building blocks. You then implement the required behavior as the interaction between the encapsulated areas of volatility.

The motivation for volatility-based decomposition is simplicity itself: any change is encapsulated, containing the effect on the system.

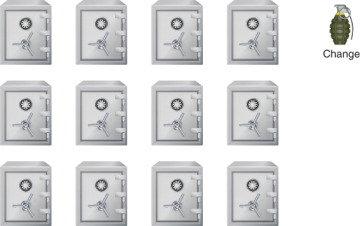

When you use volatility-based decomposition, you start thinking of your system as a series of vaults, as in Figure 2-9.

FIGURE 2-9 Encapsulated areas of volatility (Images: media500/Shutterstock; pikepicture/Shutterstock)

Any change is potentially very dangerous, like a hand grenade with the pin pulled out. Yet, with volatility-based decomposition, you open the door of the appropriate vault, toss the grenade inside, and close the door. Whatever was inside the vault may be destroyed completely, but there is no shrapnel flying everywhere, destroying everything in its path. You have contained the change.

With functional decomposition, your building blocks represent areas of functionality, not volatility. As a result, when a change happens, by the very definition of the decomposition, it affects multiple (if not most) components in your architecture. Functional decomposition therefore tends to maximize the effect of the change. Since most software systems are designed functionally, change is often painful and expensive, and the system is likely to resonate with the change: changes made in one area of functionality trigger other changes, and so on. Accommodating change is the real reason you must avoid functional decomposition.

All the other problems with functional decomposition pale when compared with the poor ability and high cost of handling change. With functional decomposition, a change is like swallowing a live hand grenade.

What you choose to encapsulate can be functional in nature, but it is hardly ever domain-functional; that is, the functionality has no specific meaning for the business. For example, the electricity that powers a house is indeed an area of functionality but is also an important area to encapsulate for two reasons. The first reason is that power in a house is highly volatile: power can be AC or DC; 110 volts or 220 volts; single phase or three phases; 50 hertz or 60 hertz; produced by solar panels on the roof, a generator in the backyard, or plain grid connectivity; delivered on wires with different gauges; and on and on. All that volatility is encapsulated behind a receptacle. When it is time to consume power, all the user sees is an opaque receptacle, encapsulating the power volatility. This decouples the power-consuming appliances from the power volatility, increasing reuse, safety, and extensibility while reducing overall complexity.

Encapsulation also makes using power in one house indistinguishable from using it in another, which highlights the second reason it is valid to identify power as something to encapsulate in the house. While powering a house is an area of functionality, in general, the use of power is not specific to the domain of the house (the family living in the house, their relationships, their wellbeing, property, etc.).

What would it be like to live in a house where the power volatility was not encapsulated? Whenever you wanted to consume power, you would have to first expose the wires, measure the frequency with an oscilloscope, and certify the voltage with a voltmeter. While you could use power that way, it is far easier to rely on the encapsulation of that volatility behind the receptacle, allowing you instead to add value by integrating power into your tasks or routine.
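In software terms, the receptacle plays the role of a contract that hides how the power is produced. The following is only a minimal sketch of that idea, not a prescribed implementation; every name in it (`Receptacle`, `GridPower`, `SolarPower`, `run_toaster`, and the 800-watt figure) is a hypothetical chosen for illustration.

```python
from typing import Protocol


class Receptacle(Protocol):
    """The opaque outlet: the only thing an appliance ever sees."""

    def draw(self, watts: float) -> float:
        """Return the watts actually delivered."""
        ...


class GridPower:
    """Hypothetical source: plain grid connectivity."""

    def draw(self, watts: float) -> float:
        # Assumption for this sketch: the grid meets any demand.
        return watts


class SolarPower:
    """Hypothetical source: rooftop panels with limited capacity."""

    def __init__(self, capacity_watts: float) -> None:
        self.capacity_watts = capacity_watts

    def draw(self, watts: float) -> float:
        # Deliver the requested power, capped at panel capacity.
        return min(watts, self.capacity_watts)


def run_toaster(outlet: Receptacle) -> str:
    """The appliance knows nothing about voltage, phase, or origin."""
    needed = 800.0  # hypothetical power requirement
    delivered = outlet.draw(needed)
    return "toasting" if delivered >= needed else "underpowered"
```

Swapping `GridPower()` for `SolarPower(1000.0)` changes nothing in `run_toaster`; the volatility of the power source stays behind the contract, which is the point of the receptacle.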

Decomposition, Maintenance, and Development

As explained previously, functional decomposition drastically increases the system’s complexity. Functional decomposition also makes maintenance a nightmare. Not only is the code in such systems complex, but changes are also spread across multiple services. This makes maintaining the code labor intensive, error prone, and very time-consuming. Generally, the more complex the code, the lower its quality, and low quality makes maintenance even more challenging. You must contend with high complexity and avoid introducing new defects while resolving old ones. In a functionally decomposed system, it is common for new changes to result in new defects due to the confluence of low quality and complexity. Extending the functional system often requires effort disproportionate to the benefit to the customer.

Even before maintenance ever starts, when the system is under development, functional decomposition harbors danger. Requirements will change throughout development (as they invariably do), and the cost of each change is huge, affecting multiple areas, forcing considerable rework, and ultimately endangering the deadline.

Systems designed with volatility-based decomposition present a stark contrast in their ability to respond to change. Since changes are contained in each module, there is at least a hope for easy maintenance with no side effects outside the module boundary. With lower complexity and easier maintenance, quality is much improved. You have a chance at reuse if something is encapsulated the same way in another system. You can extend the system by adding more areas of encapsulated volatility or by integrating existing areas of volatility in a different way. Encapsulating volatility means far better resiliency to feature creep during development and a chance of meeting the schedule, since changes will be contained.

Universal Principle

The merits of volatility-based decomposition are not specific to software systems. They are universal principles of good design, from commerce to business interactions to biology to physical systems and great software. Universal principles, by their very nature, apply to software too (else they would not be universal). For example, consider your own body. A functional decomposition of your own body would have components for every task you are required to do, from driving to programming to presenting, yet your body does not have any such components. You accomplish a task such as programming by integrating areas of volatility. For example, your heart provides an important service for your system: pumping blood. Pumping blood has enormous volatility to it: high blood pressure and low pressure, salinity, viscosity, pulse rate, activity level (sitting or running), with and without adrenaline, different blood types, healthy and sick, and so on. Yet all that volatility is encapsulated behind the service called the heart. Would you be able to program if you had to care about the volatility involved in pumping blood?

You can also integrate external areas of encapsulated volatility into your implementation. Consider your computer, which is different from literally any other computer in the world, yet all that volatility is encapsulated. As long as the computer can send a signal to the screen, you do not care what happens behind the graphics port. You perform the task of programming by integrating encapsulated areas of volatility, some internal, some external. You can reuse the same areas of volatility (such as the heart) while performing other tasks such as driving a car or presenting your work to customers. There is simply no other way of designing and building a viable system.

Decomposing based on volatility is the essence of system design. All well-designed systems, software and physical systems alike, encapsulate their volatility inside the system’s building blocks.

Volatility-Based Decomposition and Testing

Volatility-based decomposition lends itself well to regression testing. The reduction in the number of components, the reduction in the size of components, and the simplification of the interactions between components all drastically reduce the complexity of the system. This makes it feasible to write regression tests that exercise the system end to end, each subsystem individually, and eventually each independent component. Since volatility-based decomposition contains the changes inside the building blocks of the system, once the inevitable changes do happen, they do not disrupt the regression testing in place. You can test the effect of a change in a component in isolation from the rest of the system without interfering with the inter-component and inter-subsystem testing.
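To make the testing argument concrete, here is a small sketch under stated assumptions. The tax example, the names `TaxPolicy`, `FlatTax`, `TieredTax`, `StubTax`, and `checkout_total`, and all the rates are hypothetical and do not come from the text. The sketch only shows that a change confined to one encapsulated component can be tested on its own while the existing regression check on the integrating code stays untouched.

```python
from typing import Protocol


class TaxPolicy(Protocol):
    """Contract hiding a hypothetical area of volatility: tax rules."""

    def tax_for(self, cents: int) -> int: ...


class FlatTax:
    """Original implementation: a single percentage."""

    def __init__(self, percent: int) -> None:
        self.percent = percent

    def tax_for(self, cents: int) -> int:
        return cents * self.percent // 100


class TieredTax:
    """A later change: new rules behind the same contract."""

    def tax_for(self, cents: int) -> int:
        percent = 5 if cents < 10_000 else 10
        return cents * percent // 100


def checkout_total(cents: int, policy: TaxPolicy) -> int:
    """Integrating code: depends only on the contract."""
    return cents + policy.tax_for(cents)


class StubTax:
    """Test double that pins the tax so checkout is tested in isolation."""

    def tax_for(self, cents: int) -> int:
        return 100


def regression_check_checkout() -> bool:
    """Written before TieredTax existed; still valid after the change."""
    return checkout_total(2_000, StubTax()) == 2_100


def component_check_tiered_tax() -> bool:
    """Tests the changed component alone, on both sides of the threshold."""
    policy = TieredTax()
    return policy.tax_for(2_000) == 100 and policy.tax_for(20_000) == 2_000
```

Introducing `TieredTax` required a new component-level check but no edit to `regression_check_checkout`, because the change never crossed the `TaxPolicy` boundary.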

The Volatility Challenge

The ideas and motivations behind volatility-based decomposition are simple, practical, and consistent with reality and common sense. The main challenges in performing a volatility-based decomposition have to do with time, communication, and perception. You will find that volatility is often not self-evident. No customer or product manager at the onset of a project will ever present you the requirements for the system the following way: “This could change, we will change that one later, and we will never change those.” The outside world (be it customers, management, or marketing) always presents you with requirements in terms of functionality: “The system should do this and that.” Even you, reading these pages, are likely struggling to wrap your head around this concept as you try to identify the areas of volatility in your current system. Consequently, volatility-based decomposition takes longer than functional decomposition.

Note that volatility-based decomposition does not mean you should ignore the requirements. You must analyze the requirements to recognize the areas of volatility. Arguably, the whole purpose of requirements analysis is to identify the areas of volatility, and this analysis requires effort and sweat. This is actually great news because now you are given a chance to comply with the first law of thermodynamics. Sadly, merely sweating on the problem does not mean a thing. The first law of thermodynamics does not state that if you sweat on something, you will add value. Adding value is much more difficult. This book provides you with powerful mental tools for design and analysis, including structure, guidelines, and a sound engineering methodology. These tools give you a fighting chance in your quest to add value. You still must practice and fight.

The 2% Problem

With every knowledge-intensive subject, it takes time to become proficient and effective and even more to excel at it. This is true in areas as varied as kitchen plumbing, internal medicine, and software architecture. In life, you often choose not to pursue certain areas of expertise because the time and cost required to master them would dwarf the time and cost required to utilize an expert. For example, barring any chronic health problem, a working-age person is sick for about a week a year. A week a year of downtime due to illness is roughly 2% of the working year. So, when you are sick, do you open up medicine books and start reading, or do you go and see a doctor? At only 2% of your time, the frequency is low enough (and the specialty bar high enough) that there is little sense in doing anything other than going to the doctor. It is not worth your while to become as good as a doctor. If, however, you were sick 80% of the time, you might spend a considerable portion of your time educating yourself about your condition, possible complications, treatments, and options, often to the point of sparring with your doctor. Your innate propensity for anatomy and medicine has not changed; only your degree of investment has (hopefully, you will never have to be really good at medicine).

Similarly, when your kitchen sink is clogged somewhere behind the garbage disposal and the dishwasher, do you go to the hardware store, purchase a P-trap, an S-trap, various adapters, three different types of wrenches, various O-rings and other accessories, or do you call a plumber? It is the 2% problem again: it is not worth your while learning how to fix that sink if it is clogged less than 2% of the time. The moral is that when you spend 2% of your time on any complex task, you will never be any good at it.

With software system architecture, architects get to decompose a complete system into modules only on major revolutions of the cycle. Such events happen, on average, every few years. All other designs in the interim between clean slates are at best incremental and at worst detrimental to the existing systems. How much time will the manager allow the architect to invest in architecture for the next project? One week? Two weeks? Three weeks? Six weeks? The exact answer is irrelevant. On one hand, you have cycles measured in years and, on the other, activities measured in weeks. The week-to-year ratio is roughly 1:50, or 2% again. Architects have learned the hard way that they need to hone their skills getting ready for that 2% window. Now consider the architect’s manager. If the architect spends 2% of the time architecting the system, what percentage of the time does that architect’s manager spend managing said architect? The answer is probably a small fraction of that time. Therefore, the manager is never going to be good at managing architects at that critical phase. The manager is constantly going to exclaim, “I don’t understand why this is taking so long! Why can’t we just do A, B, C?”

Gaining the time to do decomposition correctly will likely be as much of a challenge as doing the decomposition, if not more so. However, the difficulty of a task should not preclude it from being done. Precisely because it is difficult, it must be done. You will see later on in this book several techniques for gaining the time.

The Dunning-Kruger Effect

In 1999, David Dunning and Justin Kruger published their research[2] demonstrating conclusively that people unskilled in a domain tend to look down on it, thinking it is less complex, risky, or demanding than it truly is. This cognitive bias has nothing to do with intelligence or expertise in other domains. If you are unskilled in something, you never assume it is more complex than it is; you assume it is less!

2. Justin Kruger and David Dunning, “Unskilled and Unaware of It: How Difficulties in Recognizing One’s Own Incompetence Lead to Inflated Self-Assessments,” Journal of Personality and Social Psychology 77, no. 6 (1999): 1121–1134.

When the manager throws hands in the air saying, “I don’t understand why this is taking so long,” the manager really does not understand why you cannot just do A, then B, and then C. Do not be upset. You should expect this behavior and resolve it correctly by educating your manager and peers who, by their own admission, do not understand.

Fighting Insanity

Albert Einstein is often credited with saying that doing things the same way but expecting better results is the definition of insanity. Since the manager typically expects you to do better than last time, you must point out the insanity of pursuing functional decomposition yet again and explain the merits of volatility-based decomposition. In the end, even if you fail to convince a single person, you should not simply follow orders and dig the project into an early grave. You must still decompose based on volatility. Your professional integrity (and ultimately your sanity and long-term peace of mind) is at stake.