6.5 Network Architecture

In Actor-Critic algorithms, we learn two parametrized functions: π (actor) and Vπ(s) (critic). This sets them apart from all the other algorithms we have seen so far, which learned only one function. Naturally, there are more factors to consider when designing the neural networks. Should they share any parameters? And if so, how many?

Sharing some parameters has its pros and cons. Conceptually, it is more appealing because learning π and learning Vπ(s) for the same task are related. Learning Vπ(s) is concerned with evaluating states effectively, and learning π is concerned with understanding how to take good actions in states. Both take the same input: the state. In both cases, good function approximations will involve learning how to represent the state space so that similar states are clustered together. It is therefore likely that both approximations could benefit from sharing the lower-level learning about the state space. Additionally, sharing parameters reduces the total number of learnable parameters, and so may improve the sample efficiency of the algorithm. This is particularly relevant for Actor-Critic algorithms since the policy is being reinforced by a learned critic. If the actor and critic networks are separate, the actor may not learn anything useful until the critic has become reasonable, and this may take many training steps. However, if they share parameters, the actor can benefit from the state representation being learned by the critic.
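To make the parameter-count argument concrete, the sketch below counts weights and biases for two fully connected designs. The layer sizes (an 8-dimensional state, two hidden layers of 64 units, 4 discrete actions) and the helper `mlp_param_count` are hypothetical choices for illustration, not values taken from the text; each head is assumed to be a single linear layer.

```python
def mlp_param_count(layer_sizes):
    """Count weights and biases in a fully connected network.

    layer_sizes lists the width of every layer, input first, e.g. [8, 64, 64].
    """
    return sum(n_in * n_out + n_out  # weight matrix plus bias vector
               for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]))

# Hypothetical sizes chosen only for illustration.
state_dim, hidden, n_actions = 8, 64, 4
body = [state_dim, hidden, hidden]

# Separate networks: actor and critic each own a full copy of the body.
separate = (mlp_param_count(body + [n_actions])
            + mlp_param_count(body + [1]))

# Shared network: one body, plus one linear head per output.
shared = (mlp_param_count(body)
          + mlp_param_count([hidden, n_actions])
          + mlp_param_count([hidden, 1]))

print(separate, shared)  # → 9797 5061
```

With these sizes, the shared design saves exactly one copy of the body (4,736 parameters), so the saving grows with the size of the shared layers.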

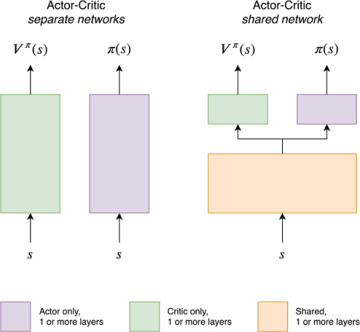

The high-level shared network architecture, shown on the right-hand side of Figure 6.1, demonstrates how parameter sharing typically works for Actor-Critic algorithms. The Actor and the Critic share the lower layers of the network. This can be interpreted as learning a common representation of the state space. They also have one or more separate layers at the top of the network. This is because the output space for each network is different—for the actor, it’s a probability distribution over actions, and for the critic, it’s a single scalar value representing Vπ(s). However, since both networks also have different tasks, we should also expect that they need a number of specialized parameters to perform well. This could be a single layer on top of the shared body or a number of layers. Furthermore, the actor-specific and critic-specific layers do not have to be the same in number or type.

FIGURE 6.1 Actor-Critic network architectures: shared vs. separate networks
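A minimal sketch of the shared design, written with NumPy rather than a deep learning framework so the forward pass is explicit. The `SharedActorCritic` class, its helper functions, and all layer sizes are hypothetical and only illustrate the structure: a shared body feeding an actor head that outputs a probability distribution over discrete actions and a critic head that outputs a scalar estimate of Vπ(s). Training is not shown.

```python
import numpy as np

rng = np.random.default_rng(0)

def linear(n_in, n_out):
    """Hypothetical helper: weights and bias for a randomly initialized dense layer."""
    return rng.normal(scale=0.1, size=(n_in, n_out)), np.zeros(n_out)

def relu(x):
    return np.maximum(x, 0.0)

def softmax(logits):
    z = logits - logits.max(axis=-1, keepdims=True)  # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

class SharedActorCritic:
    """Shared body with separate actor and critic heads (illustrative sketch)."""

    def __init__(self, state_dim, n_actions, hidden=64):
        # Shared lower layers: a common representation of the state.
        self.body = [linear(state_dim, hidden), linear(hidden, hidden)]
        # Actor head: a single linear layer producing action logits.
        self.actor_head = [linear(hidden, n_actions)]
        # Critic head: two layers ending in one scalar, showing that the
        # heads need not match in depth.
        self.critic_head = [linear(hidden, hidden // 2), linear(hidden // 2, 1)]

    @staticmethod
    def _run(layers, x, activate_last):
        # Apply each dense layer; ReLU after every layer except possibly the last.
        for i, (w, b) in enumerate(layers):
            x = x @ w + b
            if activate_last or i < len(layers) - 1:
                x = relu(x)
        return x

    def forward(self, states):
        features = self._run(self.body, states, activate_last=True)
        probs = softmax(self._run(self.actor_head, features, activate_last=False))
        values = self._run(self.critic_head, features, activate_last=False)[:, 0]
        return probs, values

net = SharedActorCritic(state_dim=8, n_actions=4)
probs, values = net.forward(rng.normal(size=(5, 8)))  # a batch of 5 states
print(probs.shape, values.shape)  # → (5, 4) (5,)
```

Because both heads read the same `features`, gradients from both the actor's and the critic's losses would flow into `self.body` during training; the shared body is where the two learning signals meet.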

One serious downside of sharing parameters is that it can make learning more unstable. This is because two components of the gradient are now backpropagated through the network simultaneously: the policy gradient from the actor and the value function gradient from the critic. The two gradients may have different scales, which need to be balanced for the agent to learn well [75]. For example, the log probability component of the policy loss takes values in (−∞, 0], and the absolute value of the advantage function may be quite small, particularly if the action resolution⁷ is small. Together, these two factors can result in policy losses with small absolute values. In contrast, the scale of the value loss is related to the value of being in a state, which may be very large.
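A single-sample calculation makes the mismatch concrete. The action probability, advantage, and value numbers below are invented for illustration, and the value loss is assumed to be a squared error, which is a common choice but not the only one.

```python
import math

# Hypothetical single-sample numbers, chosen only to illustrate scale.
action_prob = 0.25        # π(a|s) for the sampled action
advantage = 0.02          # small advantage, e.g. with a small action resolution
predicted_value = 90.0    # critic's estimate of V(s)
target_value = 100.0      # target used to train V(s)

log_prob = math.log(action_prob)                     # always in (-inf, 0]
policy_loss = -log_prob * advantage                  # ≈ 0.0277
value_loss = (predicted_value - target_value) ** 2   # squared error: 100.0

print(f"policy loss {policy_loss:.4f}, value loss {value_loss:.1f}")
```

Here the value loss is more than three thousand times the policy loss. The gradients with respect to each head's output differ by a similar order (20 for the value estimate versus 0.02 for the log probability), so if the two losses were simply summed, the shared layers would likely be driven mostly by the critic.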

Balancing two potentially different gradient scales is typically implemented by multiplying one of the losses by a scalar weight to scale it up or down. For example, the value loss in Code 6.5 was scaled by self.val_loss_coef (line 11). However, this coefficient becomes another hyperparameter to tune during training.
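A minimal sketch of that weighting, using invented loss magnitudes (a small policy loss and a large value loss). The function `combined_loss` is a hypothetical stand-in, not the text's Code 6.5; only the idea of multiplying the value loss by `val_loss_coef` is taken from the text.

```python
def combined_loss(policy_loss, value_loss, val_loss_coef):
    """Total loss backpropagated through the shared network.

    Only the value loss is rescaled; val_loss_coef is a tunable hyperparameter.
    """
    return policy_loss + val_loss_coef * value_loss

policy_loss, value_loss = 0.0277, 100.0   # hypothetical magnitudes

for coef in (1.0, 0.01, 0.001):
    total = combined_loss(policy_loss, value_loss, coef)
    share = coef * value_loss / total   # fraction of the total coming from the critic
    print(f"coef={coef}: total={total:.4f}, critic share={share:.2f}")
```

Shrinking `val_loss_coef` lowers the critic's share of the total loss, but no single value suits every task, which is why the coefficient usually has to be tuned.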