6.3 A2C Algorithm

Here we put the actor and critic together to form the Advantage Actor-Critic (A2C) algorithm, shown in Algorithm 6.1.

Algorithm 6.1 A2C algorithm

Each algorithm we have studied so far focused on learning one of two things: how to act (a policy) or how to evaluate actions (a critic). Actor-Critic algorithms learn both together. Apart from that, each element of the training loop should look familiar, since each has appeared in algorithms presented earlier in this book. Let’s go through Algorithm 6.1 step by step.

Lines 1–3: Set the values of the important hyperparameters $\beta$, $\alpha_A$, and $\alpha_C$. $\beta$ determines how much entropy regularization to apply (see below and Box 6.2 for details). $\alpha_A$ and $\alpha_C$ are the learning rates used to optimize the actor and critic networks, respectively; they can be the same or different. Good values depend on the RL problem being solved and need to be determined empirically.

Line 4: Randomly initialize the parameters of both networks.

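Line 6: Gather data by acting in the environment with the current policy network $\theta_A$. Algorithm 6.1 is written for episodic training, in which a full episode is collected before each update, but the same approach also applies to batch training, in which each update uses a batch of collected experiences.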
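
As a minimal sketch of this data-gathering step, the code below rolls out one episode and records $(s_t, a_t, r_t, s'_t)$ experiences together with a done flag. The `CorridorEnv` environment, its `reset`/`step` interface, and `collect_episode` are hypothetical stand-ins chosen for illustration; `policy` is assumed to be any function that maps a state to action probabilities, playing the role of the actor network.

```python
import numpy as np


class CorridorEnv:
    """Hypothetical toy environment: positions 0..length-1, starting at 0.

    Action 1 moves right, action 0 moves left (clipped at 0). Reaching the
    last position ends the episode with reward 1; every other step gives 0.
    """

    def __init__(self, length=5):
        self.length = length
        self.pos = 0

    def reset(self):
        self.pos = 0
        return self.pos

    def step(self, action):
        if action == 1:
            self.pos = min(self.pos + 1, self.length - 1)
        else:
            self.pos = max(self.pos - 1, 0)
        done = self.pos == self.length - 1
        reward = 1.0 if done else 0.0
        return self.pos, reward, done


def collect_episode(env, policy, rng, max_steps=100):
    """Act with the current policy and store (s, a, r, s', done) tuples."""
    s = env.reset()
    episode = []
    for _ in range(max_steps):
        probs = policy(s)                      # action probabilities from the actor
        a = int(rng.choice(len(probs), p=probs))
        s_next, r, done = env.step(a)
        episode.append((s, a, r, s_next, done))
        s = s_next
        if done:
            break
    return episode
```

For batch training, the same loop could instead run for a fixed number of steps, continuing across episode boundaries.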

Lines 8–10: For each $(s_t, a_t, r_t, s'_t)$ experience in the episode, calculate the predicted value $\hat{V}^{\pi}(s_t)$, the advantage $\hat{A}^{\pi}(s_t, a_t)$, and the target value $V^{\pi}_{tar}(s_t)$ using the critic network.
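
The text does not fix how $V^{\pi}_{tar}$ is computed. The sketch below assumes n-step returns bootstrapped from the critic's predictions, which is one common choice: $n = 1$ gives the one-step TD target $r_t + \gamma \hat{V}^{\pi}(s_{t+1})$, and $n$ at least as long as the episode gives the Monte Carlo return. The advantage is then $V^{\pi}_{tar}(s_t) - \hat{V}^{\pi}(s_t)$. The function name, the discount `gamma`, and the assumption that the episode ends in a terminal state (with value 0) are illustrative.

```python
import numpy as np


def nstep_targets_and_advantages(rewards, values, gamma=0.99, n=5):
    """Compute V_tar(s_t) and A(s_t, a_t) for one finished episode.

    rewards[t] is r_t and values[t] is the critic's prediction V(s_t).
    Assumes the episode ended in a terminal state, whose value is 0.
    """
    rewards = np.asarray(rewards, dtype=float)
    values = np.asarray(values, dtype=float)
    T = len(rewards)
    v_ext = np.append(values, 0.0)          # V(s_T) = 0 at the terminal state
    targets = np.zeros(T)
    for t in range(T):
        m = min(n, T - t)                    # truncate the n-step window at episode end
        discounted = sum(gamma**k * rewards[t + k] for k in range(m))
        targets[t] = discounted + gamma**m * v_ext[t + m]   # bootstrap from the critic
    advantages = targets - values
    return targets, advantages
```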

Line 11: For each $(s_t, a_t, r_t, s'_t)$ experience in the episode, optionally calculate the entropy of the current policy distribution $\pi_{\theta_A}$ using the actor network. If this step is skipped, the entropy term drops out of the policy loss, which is equivalent to setting $\beta = 0$. The role of entropy is discussed in detail in Box 6.2.
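
For a discrete action space, the entropy of the policy at state $s_t$ is $H_t = -\sum_a \pi_{\theta_A}(a \mid s_t) \log \pi_{\theta_A}(a \mid s_t)$. The sketch below assumes the actor outputs unnormalized logits that are turned into probabilities with a softmax; `categorical_entropy` is a hypothetical helper name.

```python
import numpy as np


def categorical_entropy(logits):
    """Entropy H = -sum_a pi(a|s) log pi(a|s) of a softmax policy, per state."""
    z = logits - logits.max(axis=-1, keepdims=True)          # for numerical stability
    log_probs = z - np.log(np.exp(z).sum(axis=-1, keepdims=True))
    return -(np.exp(log_probs) * log_probs).sum(axis=-1)
```

A uniform policy over $|A|$ actions has the maximum entropy $\log |A|$, while a nearly deterministic policy has entropy close to 0.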

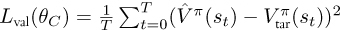

Lines 13–14: Calculate the value loss. As with the DQN algorithms, we selected the mean squared error (MSE) as the measure of distance between $\hat{V}^{\pi}(s_t)$ and $V^{\pi}_{tar}(s_t)$. However, any other appropriate loss function, such as the Huber loss, could be used.
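
Averaged over the $T$ experiences of an episode, the MSE value loss is $L_{val} = \frac{1}{T}\sum_{t}\left(\hat{V}^{\pi}(s_t) - V^{\pi}_{tar}(s_t)\right)^2$. The sketch below computes it alongside the Huber loss, assuming the common definition with threshold `delta` (quadratic for small errors, linear for large ones); both function names are illustrative.

```python
import numpy as np


def mse_loss(pred, target):
    """Mean squared error between predicted and target V-values."""
    return float(np.mean((pred - target) ** 2))


def huber_loss(pred, target, delta=1.0):
    """Quadratic for |error| <= delta, linear beyond it (less sensitive to outliers)."""
    err = pred - target
    abs_err = np.abs(err)
    quadratic = 0.5 * err**2
    linear = delta * (abs_err - 0.5 * delta)
    return float(np.mean(np.where(abs_err <= delta, quadratic, linear)))
```

A large error of 2 contributes $2^2 = 4$ to the MSE sum but only $1.5$ to the Huber sum with `delta=1.0`, which is why the Huber loss is sometimes preferred when value targets are noisy.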

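Lines 15–16: Calculate the policy loss. This has the same form as in the REINFORCE algorithm, with the addition of an optional entropy regularization term. Notice that we minimize the loss, whereas the policy gradient is used to maximize the objective; hence, as in REINFORCE, there is a negative sign in front of $\hat{A}^{\pi}(s_t, a_t) \log \pi_{\theta_A}(a_t \mid s_t)$. For the same reason, the entropy term enters the loss with a negative sign, so minimizing the loss pushes the policy's entropy up.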
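
Averaged over the episode, the policy loss can be written as $L_{pol} = \frac{1}{T}\sum_{t}\left(-\hat{A}^{\pi}(s_t, a_t) \log \pi_{\theta_A}(a_t \mid s_t) - \beta H_t\right)$. The sketch below evaluates it from actor logits, assuming a discrete softmax policy. The advantages come from the critic and are treated as fixed numbers here, so in an autodiff implementation they would be detached from the critic's computation graph.

```python
import numpy as np


def log_softmax(logits):
    z = logits - logits.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))


def policy_loss(logits, actions, advantages, beta=0.0):
    """L_pol = mean_t( -A_t * log pi(a_t|s_t) - beta * H_t )."""
    log_probs = log_softmax(logits)                          # (T, |A|)
    log_pi_taken = log_probs[np.arange(len(actions)), actions]
    entropy = -(np.exp(log_probs) * log_probs).sum(axis=-1)  # H_t per step
    return float(np.mean(-advantages * log_pi_taken - beta * entropy))
```

Setting `beta=0.0` recovers a REINFORCE-style loss with the advantage in place of the return.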

Lines 17–18: Update the critic parameters using the gradient of the value loss.

Lines 19–20: Update the actor parameters using the policy gradient.
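
To see lines 8–20 working together, the sketch below performs one full update for a deliberately simple model: a linear-softmax actor and a linear critic whose gradients are written out by hand. It assumes plain gradient descent (any optimizer could be substituted) and uses a hypothetical one-state, two-action task for the usage example; `a2c_update` and `run_toy_problem` are illustrative names, not the book's code.

```python
import numpy as np


def log_softmax(logits):
    z = logits - logits.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))


def a2c_update(W_A, w_C, phi, actions, targets, beta, alpha_A, alpha_C):
    """One A2C update for a linear-softmax actor and a linear critic.

    phi: (T, d) state features, actions: (T,) ints, targets: (T,) V_tar values.
    Actor logits = phi @ W_A; critic V(s) = phi @ w_C.
    """
    T = phi.shape[0]
    values = phi @ w_C
    adv = targets - values                    # constants from the actor's viewpoint

    log_probs = log_softmax(phi @ W_A)        # (T, |A|)
    probs = np.exp(log_probs)
    entropy = -(probs * log_probs).sum(axis=1)
    onehot = np.eye(W_A.shape[1])[actions]

    # Value loss mean((V - V_tar)^2): gradient w.r.t. the critic weights.
    grad_C = (2.0 / T) * phi.T @ (values - targets)

    # Policy loss mean(-A log pi(a|s) - beta H): gradient w.r.t. the logits uses
    #   d(-log pi(a|s))/dz = p - onehot(a)   and   dH/dz = -p (log p + H).
    dlogits = (adv[:, None] * (probs - onehot)
               + beta * probs * (log_probs + entropy[:, None])) / T
    grad_A = phi.T @ dlogits

    # Gradient descent, since both losses are minimized.
    return W_A - alpha_A * grad_A, w_C - alpha_C * grad_C


def run_toy_problem(iterations=500, batch=16, seed=0):
    """Hypothetical one-state, two-action task: action 0 pays 1, action 1 pays 0.

    Episodes last one step, so V_tar(s) = r.
    """
    rng = np.random.default_rng(seed)
    W_A, w_C = np.zeros((1, 2)), np.zeros(1)  # zeros instead of random init, for reproducibility
    phi = np.ones((batch, 1))                 # the single state has feature vector [1.0]
    for _ in range(iterations):
        probs = np.exp(log_softmax(phi[:1] @ W_A))[0]
        actions = rng.choice(2, size=batch, p=probs)
        rewards = (actions == 0).astype(float)
        W_A, w_C = a2c_update(W_A, w_C, phi, actions, rewards,
                              beta=0.01, alpha_A=0.1, alpha_C=0.1)
    return np.exp(log_softmax(phi[:1] @ W_A))[0], float(phi[0] @ w_C)
```

On this toy task the actor learns to prefer action 0 and the critic's estimate approaches the expected reward. With a neural network, the same losses would be handed to an autodiff library instead of being differentiated by hand.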

The balance between the two terms of the policy loss, the advantage-weighted log-probability term and the entropy term, is controlled through the $\beta$ parameter.
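
To build intuition for $\beta$, consider a simplified, hypothetical single-state case in which each action $a$ has a fixed score $r_a$ and the policy is a distribution $p$ over actions. Maximizing $\sum_a p_a r_a + \beta H(p)$ has the closed-form solution $p_a \propto \exp(r_a / \beta)$, a standard result from setting the derivative of the Lagrangian to zero. The sketch below checks this numerically; it ignores learning dynamics and only illustrates where the balance point moves as $\beta$ changes.

```python
import numpy as np


def regularized_objective(p, r, beta):
    """Expected score plus beta-weighted entropy for an action distribution p."""
    p = np.clip(p, 1e-12, 1.0)               # avoid log(0) at the simplex boundary
    return float(np.dot(p, r) - beta * np.dot(p, np.log(p)))


def optimal_policy(r, beta):
    """Closed-form maximizer of regularized_objective: softmax(r / beta)."""
    z = np.asarray(r, dtype=float) / beta
    e = np.exp(z - z.max())
    return e / e.sum()


r = np.array([1.0, 0.0])   # hypothetical scores: action 0 is better
for beta in (0.01, 0.1, 1.0, 10.0):
    print(f"beta={beta}: optimal policy {optimal_policy(r, beta).round(3)}")
```

As $\beta$ grows, the optimal policy moves from nearly deterministic toward uniform, trading expected score for entropy.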
