Daydream Controller

- Getting to Know the Daydream Controller

- Controlling the Controller

- Summary

Everything you need to know about programming with the Google Daydream controller: from tapping into the various sensors, to using the event system to grab and toss objects in 3D space.

With Unity and the Daydream SDK set up, we can shift our focus to the controller: understanding what it is, how it works, and how to use it to develop compelling games and apps. The Daydream controller augments the users’ bodies by giving them a physical connection to the virtual world. It allows for the precision required by VR applications and the creativity needed in gaming. This chapter taps into the controller’s sensors and buttons to handle user input, interact with objects in the 3D environment, and update the controller’s visual elements to customize its look and feel.

Getting to Know the Daydream Controller

The Daydream controller is the differentiating piece of hardware in Google’s Daydream VR platform. It allows for complex interaction within the virtual environment, helping to create a sense of presence for the user. Essentially, the controller is the main physical connection point between your app and the user’s reality. For this reason, understanding how it works is key to creating compelling experiences in Daydream.

This chapter familiarizes you with all the various hardware and software pieces that combine to make up the Daydream controller.

At the heart of these pieces is the controller API, and the low-level access it grants to the controller’s features. The recipes explored cover various techniques for manipulating interactive objects in VR space and how to reskin and replace the visual elements of the controller to give it a custom look and feel.

Throughout, you will implement various controller-specific tools the SDK provides to simplify development and enhance user experience, adding up to a complete knowledge of the Daydream controller. By the end of this chapter, you will understand when to use the support provided by the SDK, when to program it yourself, and—most importantly—how to use the controller to build memorable experiences in your own games and apps.

Why the Controller?

The Daydream controller allows users to physically connect with the environment around them in VR. Through the controller, users can interact naturally; explore environments; hold tools; navigate menus; and point at, click, and interact with virtual objects.

The Daydream team’s ultimate goal was to create a powerfully expressive tool that is simple enough to fit in your pocket. For that reason, the controller is accessible enough for new users to express themselves creatively and precise enough to be used in advanced applications.

Unlike its basic cousin, Google Cardboard, which was built for easily digestible content, Daydream is built for longer-form experiences. Cardboard’s interaction model forced the user to hold the headset up with one hand. Because the Daydream headset is strapped onto the user’s head, it frees up both hands, allowing for a controller. This means less potential for fatigue and the possibility of longer, more immersive experiences.

How the Controller Works

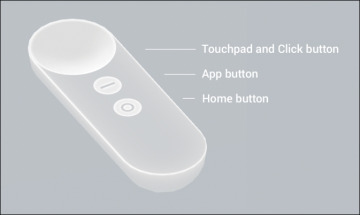

From a technical perspective, the controller (see Figure 3.1) has several main features that you want to familiarize yourself with:

- Motion sensors: Including a gyroscope and an accelerometer, which also feed a combined orientation reading

- Touchpad: Allowing for 2D positional information of the user’s thumb

- Buttons: Various buttons for your app and the system

Figure 3.1 The Daydream controller.

Hardware Inputs

The key to pointing and clicking at locations in 3D space is the controller’s highly calibrated nine-axis Inertial Measurement Unit (IMU). Using the same technology found in most modern mobile phones, the IMU fuses on-chip data from an accelerometer, a gyroscope, and a magnetometer into a single absolute rotational value in VR space.

The controller’s user inputs consist of a touchpad and buttons. The clickable touchpad allows for fine-grained manipulation, quick swiping, and clicking without having to lift your thumb. Along with the touchpad, there are three other types of button:

- Home button (sometimes called the Daydream button): This is reserved entirely for the system. Clicking it opens the Daydream Dashboard, whereas holding it for a couple of seconds lets users re-center the view.

- App button: This is reserved for you, the app developer, to do whatever you want. The recommendation is to use it for app-level system functions, such as pausing your game and showing a menu, although there is nothing to stop you from using it in an actual game mechanic.

- Volume buttons: These are located on the side of the controller for easy volume adjustment while inside VR. Daydream doesn’t provide developers access to the volume buttons.

Orientation

Orientation describes the direction the controller is pointing in 3D space. The controller is a 3DoF device, so it has three rotational axes: pitch, yaw, and roll (rotation around the x, y, and z axes, respectively). In the Unity SDK, this rotation is described as a quaternion and is accessed through the Orientation property of the GvrControllerInput class. To retrieve a Vector3 pointing in the same direction as the controller, use:

Vector3 orient = GvrControllerInput.Orientation * Vector3.forward;

It is important to remember that the controller’s axes are defined relative to the controller itself, not to world space or global coordinates. So X points to the right of the controller, Y points up from the top of the controller, and Z points forward from the front of the controller, no matter which way it is pointing in world space.
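As a minimal sketch of how that direction vector might be put to use, the script below draws a debug ray along the controller's pointing direction every frame. The class name ControllerDirectionGizmo and the helper PointingDirection are hypothetical names introduced for illustration; only GvrControllerInput.Orientation comes from the SDK as described above.

```csharp
using UnityEngine;

// Illustrative sketch only: attach to any GameObject in a Daydream scene.
public class ControllerDirectionGizmo : MonoBehaviour
{
    // Rotates the forward axis by the supplied orientation to get
    // a unit vector pointing the same way as the controller.
    public static Vector3 PointingDirection(Quaternion orientation)
    {
        return orientation * Vector3.forward;
    }

    void Update()
    {
        Vector3 direction = PointingDirection(GvrControllerInput.Orientation);

        // Debug rays are visible in the Scene view, not in the built app.
        Debug.DrawRay(transform.position, direction, Color.green);
    }
}
```

Keeping the math in a static helper means it can be reused or checked without polling the controller.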

Gyroscope

The gyroscope reports the angular velocity of the controller, meaning how fast it is rotating around each of its local axes. The reading is accessed through the Gyro property of GvrControllerInput, which returns a Vector3 in radians per second:

Vector3 angVel = GvrControllerInput.Gyro;

Because the gyro reports how fast the controller is rotating, not which way it is pointing, a controller at rest in the preceding example would give a reading of (0,0,0), no matter its orientation.
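One way to act on the gyro reading is to compare its magnitude against a small threshold to decide whether the controller is being rotated at all. The following is a rough sketch under that assumption; the GyroMonitor class, the IsAtRest helper, and the threshold value are all hypothetical and would need tuning against real sensor noise.

```csharp
using UnityEngine;

// Illustrative sketch only: logs the rotation rate while the controller is turning.
public class GyroMonitor : MonoBehaviour
{
    // Hypothetical noise floor in radians per second; tune for your app.
    public float restThreshold = 0.05f;

    // True when the overall rotation rate is below the threshold.
    public static bool IsAtRest(Vector3 angularVelocity, float threshold)
    {
        return angularVelocity.magnitude < threshold;
    }

    void Update()
    {
        Vector3 angVel = GvrControllerInput.Gyro; // radians per second

        if (!IsAtRest(angVel, restThreshold))
        {
            // Convert to degrees per second for easier reading in the console.
            Vector3 degreesPerSecond = angVel * Mathf.Rad2Deg;
            Debug.Log("Rotating at " + degreesPerSecond + " deg/s");
        }
    }
}
```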

Acceleration

The accelerometer measures the acceleration of the controller on each of its three local axes. The controller’s acceleration data can be accessed through the Accel property on the GvrControllerInput class. The property is a Vector3 in meters per second squared:

Vector3 accel = GvrControllerInput.Accel;

Note that the acceleration reading includes gravity. If the controller in the preceding example were at rest and lying flat, the reading would be approximately (0.0, 9.8, 0.0). Because the axes are local to the controller, tilting it moves the gravity component onto whichever axes then point up. As you will remember (or possibly have repressed) from your high school physics class, if a controller at rest reads 0.0 on every axis, you are probably in outer space.
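Since gravity is always present in the reading, a crude way to look for deliberate movement is to measure how far the magnitude of the acceleration departs from 9.8. The magnitude does not depend on which way the controller is tilted, which keeps the check simple. The sketch below assumes this approach; ShakeDetector, ExcessAcceleration, and the threshold are hypothetical, and this is not a technique the SDK itself provides.

```csharp
using UnityEngine;

// Illustrative sketch only: a very rough shake detector.
public class ShakeDetector : MonoBehaviour
{
    // Approximate acceleration due to gravity, in meters per second squared.
    const float Gravity = 9.8f;

    // Hypothetical threshold, in m/s^2 beyond gravity, that counts as a shake.
    public float shakeThreshold = 15f;

    // How far the reading's magnitude departs from gravity alone.
    public static float ExcessAcceleration(Vector3 accel)
    {
        return Mathf.Abs(accel.magnitude - Gravity);
    }

    void Update()
    {
        if (ExcessAcceleration(GvrControllerInput.Accel) > shakeThreshold)
        {
            Debug.Log("Shake detected");
        }
    }
}
```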

Connectivity

The controller connects to the phone or headset via Bluetooth Low Energy (BLE). Google VR Services and the Gvr SDK handle all BLE communication with the controller. You can check the connection status of the controller through the State property of the GvrControllerInput class, which is of type GvrConnectionState:

GvrConnectionState state = GvrControllerInput.State;

The GvrConnectionState is a C# enum that contains various values describing the state of connection, such as connected, disconnected, and scanning. You explore connection states further in Recipe 3.2.
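A simple way to use the connection state is to watch for changes and report them. The sketch below assumes the enum members are named Connected, Disconnected, and Scanning, matching the states listed above; any other value falls through to a generic message. ConnectionWatcher and Describe are hypothetical names.

```csharp
using UnityEngine;

// Illustrative sketch only: logs a message whenever the connection state changes.
public class ConnectionWatcher : MonoBehaviour
{
    private GvrConnectionState lastState = GvrConnectionState.Disconnected;

    // Maps a connection state to a human-readable message.
    public static string Describe(GvrConnectionState state)
    {
        switch (state)
        {
            case GvrConnectionState.Connected:
                return "Controller connected";
            case GvrConnectionState.Scanning:
                return "Scanning for controller";
            case GvrConnectionState.Disconnected:
                return "Controller disconnected";
            default:
                // Covers any states not named in this section.
                return "Controller state: " + state;
        }
    }

    void Update()
    {
        GvrConnectionState state = GvrControllerInput.State;

        // Only log on a change, not every frame.
        if (state != lastState)
        {
            lastState = state;
            Debug.Log(Describe(state));
        }
    }
}
```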

Touchpad Touch

The touchpad represents touch interaction on the x and y axes as a Vector2. You can think of the touchpad as a square with its origin (0,0) at the top left and (1,1) at the bottom right. The Vector2 touch location is accessed through the TouchPos property on the GvrControllerInput class:

Vector2 touchPos = GvrControllerInput.TouchPos;

It is important to check that the user is actually touching the touchpad before reading this property. Do this using the IsTouching Boolean (true or false) property of GvrControllerInput. GvrControllerInput also has two other useful properties: TouchDown and TouchUp, which are true only in the frame in which a touch begins or ends, respectively. See Recipe 3.1 for examples of accessing the touchpad’s touch properties.

Touch position can also be accessed through the TouchPosCentered property of GvrControllerInput. In this case, (0,0) is in the center of the touchpad and the x and y range is between –1 and 1.
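To show how the touch properties can work together, here is a minimal sketch of a swipe detector built on TouchDown, IsTouching, TouchUp, and TouchPos. It relies on the top-left origin described above, so a growing y value means the thumb moved toward the bottom of the touchpad. SwipeDetector, ClassifySwipe, and the minimum distance are hypothetical choices, not part of the SDK.

```csharp
using UnityEngine;

// Illustrative sketch only: classifies a touch as a left, right, up, or down swipe.
public class SwipeDetector : MonoBehaviour
{
    // Hypothetical minimum travel, in touchpad units (0 to 1), to count as a swipe.
    public float minSwipeDistance = 0.3f;

    private Vector2 startPos;
    private Vector2 lastPos;

    // Compares start and end positions given in TouchPos coordinates,
    // where (0,0) is the top left and (1,1) is the bottom right.
    public static string ClassifySwipe(Vector2 start, Vector2 end, float minDistance)
    {
        Vector2 delta = end - start;
        if (delta.magnitude < minDistance)
        {
            return "none";
        }
        if (Mathf.Abs(delta.x) >= Mathf.Abs(delta.y))
        {
            return delta.x > 0f ? "right" : "left";
        }
        // y grows toward the bottom of the touchpad.
        return delta.y > 0f ? "down" : "up";
    }

    void Update()
    {
        if (GvrControllerInput.TouchDown)
        {
            // The touch has just begun: remember where it started.
            startPos = GvrControllerInput.TouchPos;
            lastPos = startPos;
        }
        else if (GvrControllerInput.IsTouching)
        {
            // Only read TouchPos while the thumb is on the touchpad.
            lastPos = GvrControllerInput.TouchPos;
        }

        if (GvrControllerInput.TouchUp)
        {
            Debug.Log("Swipe: " + ClassifySwipe(startPos, lastPos, minSwipeDistance));
        }
    }
}
```

Tracking the last position while IsTouching is true avoids depending on what TouchPos reports once the thumb has lifted.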

Buttons

Two buttons are available for developers to use in their apps:

- Click button: This is the primary button for selection and interaction. Although it is housed on the touchpad, don’t confuse the touch input with the click input.

- App button: This is the other button open to developers. It is recommended for secondary input, such as presenting menus, and app-level system information.

Recipe 3.1 covers these two buttons in detail. You will use them both frequently throughout the many recipes in this book, so getting comfortable with them now is worth it.
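As a quick preview, the sketch below polls both buttons and also makes the touch-versus-click distinction explicit. It assumes GvrControllerInput exposes ClickButton, ClickButtonDown, and AppButtonDown properties, which are not named in this section, so check them against your SDK version. ButtonLogger and DescribeTouchpad are hypothetical names.

```csharp
using UnityEngine;

// Illustrative sketch only: logs button presses and the touchpad's current use.
public class ButtonLogger : MonoBehaviour
{
    private string lastTouchpadUse = "idle";

    // A click takes priority over a touch, because clicking
    // the touchpad necessarily means the thumb is resting on it.
    public static string DescribeTouchpad(bool isTouching, bool clickHeld)
    {
        if (clickHeld)
        {
            return "click";
        }
        return isTouching ? "touch" : "idle";
    }

    void Update()
    {
        // Assumed SDK properties: true only in the frame the button goes down.
        if (GvrControllerInput.ClickButtonDown)
        {
            Debug.Log("Click button pressed");
        }
        if (GvrControllerInput.AppButtonDown)
        {
            Debug.Log("App button pressed");
        }

        string use = DescribeTouchpad(GvrControllerInput.IsTouching,
                                      GvrControllerInput.ClickButton);
        if (use != lastTouchpadUse)
        {
            lastTouchpadUse = use;
            Debug.Log("Touchpad is now: " + use);
        }
    }
}
```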

Controller Support

The Daydream SDK makes available a number of tools for building controller-based apps. Like all of Daydream’s useful SDK tools, prefabs, and classes, knowing when to use them, when to tweak them, and when to build something totally custom is important.

The Daydream SDK offers support for the controller in these five main areas:

- Controller visualization: A GameObject containing a 3D mesh of the controller that highlights user interaction on the touchpad and buttons

- Tooltips: Text overlays that describe the unique function of each button in your app

- Laser and reticle visuals: A laser pointer and pointer cursor (or reticle)

- Arm model: An implementation and interface for the programmatic arm model, a mathematical approximation of the controller’s position in 6DoF space

- Input system: An event system that is specifically geared to work with the Daydream controller in a VR environment

Controller Visualization

Daydream provides a 3D model of the controller as part of the GvrControllerPointer prefab. The controller visualization can display where the user is touching the touchpad and which button is currently being pressed. The look of the controller visual is managed by the GvrControllerVisual script, attached to the ddcontroller GameObject, which is a child of the controller. Here you can customize the look of the controller by changing the materials for each of the states of the controller. The “Visualizing the Controller” section later in this chapter takes you through this further.

Tooltips

The controller’s tooltips appear when users bring the controller up to their faces. Tooltips comprise a set of customizable text components that provide information about the function of each button.

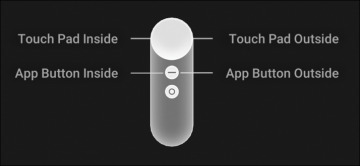

The tooltips are added to the GvrControllerVisual script in the Attachment Prefabs section. This is found on the ControllerVisual prefab (choose GvrControllerPointer prefab > ControllerVisual). Tooltips are made from one of two supplied prefabs: simple or template. The simple version uses a material that you can customize by changing the texture. The template is a Canvas with UI text elements of the various tooltips that can be activated, deactivated, or updated; see Figure 3.2. The tooltips can be set to appear on the inside or the outside of the controller, based on your stylistic preference. Their appearance automatically switches sides according to the user’s handedness (left or right). You get a chance to further customize the tooltips in Recipe 3.15.

Figure 3.2 Controller with tooltips.

Laser and Reticle Visualization

By default, Daydream provides a laser and reticle that you can use in your app to help users interact with the environment. Apart from selection, the laser is also useful for locating the reticle when it has drifted out of view.

For ergonomic reasons, the laser points down at a 15-degree angle from the end of the controller. User testing has shown this points in a straight horizontal line when the controller is in a natural resting position for the user’s hand. You can update this angle for your app.

The GvrLaserPointer and GvrLaserVisual scripts, attached to the Laser prefab in the GvrControllerPointer, are where you change the laser’s color, distance, and other properties. Recipe 3.17 later in the chapter covers this in detail.
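To make the geometry of the 15-degree downward tilt concrete, the sketch below computes a tilted direction from the controller's orientation. It only illustrates the math and is not how the SDK's laser scripts apply the angle. TiltedPointerRay and LaserDirection are hypothetical names.

```csharp
using UnityEngine;

// Illustrative sketch only: draws a debug ray tilted down from the controller.
public class TiltedPointerRay : MonoBehaviour
{
    // Default taken from the 15-degree angle described above.
    public float tiltDownDegrees = 15f;

    // Applies the tilt in the controller's local space first,
    // then rotates the result by the controller's orientation.
    public static Vector3 LaserDirection(Quaternion controllerOrientation, float tiltDownDegrees)
    {
        // In Unity, a positive rotation around the x-axis pitches forward toward down.
        Quaternion tilt = Quaternion.AngleAxis(tiltDownDegrees, Vector3.right);
        return controllerOrientation * tilt * Vector3.forward;
    }

    void Update()
    {
        Vector3 direction = LaserDirection(GvrControllerInput.Orientation, tiltDownDegrees);

        // Two-meter debug ray, visible in the Scene view only.
        Debug.DrawRay(transform.position, direction * 2f, Color.red);
    }
}
```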

Arm Model

The Daydream SDK provides developers with a complex arm model that can be used for almost all situations. An arm model is a mathematical model that predicts the location of the controller in 6DoF space, based on 3DoF rotational and acceleration data. When you lift the controller up to your face, you see it rise toward your face in VR, even though its position is only a programmatic approximation derived from the rotation. The arm model takes into account the positions of the player’s shoulder, elbow, and wrist to approximate the location and is accurate enough to be used in precision-based applications.

Properties of the arm model can be edited inside the GvrArmModel script attached to the GvrControllerPointer prefab. This is covered in Recipe 3.18. The default arm model is tuned to work assuming you are holding the controller like a laser pointer. For other use cases, such as flipping pancakes with a frying pan or hitting nails with a hammer, you need to tweak the values accordingly, or use one of the custom arm models supplied with the Daydream Elements project.
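To give a feel for the idea of deriving a position from rotation alone, here is a deliberately naive sketch. It is far simpler than the SDK's GvrArmModel and is not its algorithm: it pins a hypothetical elbow at a fixed offset and swings a fixed-length forearm by the controller's orientation. NaiveArmModel, EstimatePosition, and every numeric value are assumptions for illustration.

```csharp
using UnityEngine;

// Illustrative sketch only: NOT the SDK's arm model.
// Attach to a GameObject parented under the player's head or camera rig.
public class NaiveArmModel : MonoBehaviour
{
    // Hypothetical elbow position relative to the parent, in meters.
    public Vector3 elbowOffset = new Vector3(0.2f, -0.5f, 0.1f);

    // Hypothetical distance from elbow to controller, in meters.
    public float forearmLength = 0.3f;

    // Places the controller at the end of a forearm that pivots
    // around a fixed elbow according to the controller's rotation.
    public static Vector3 EstimatePosition(Quaternion orientation, Vector3 elbow, float forearmLength)
    {
        return elbow + orientation * (Vector3.forward * forearmLength);
    }

    void Update()
    {
        Quaternion orientation = GvrControllerInput.Orientation;
        transform.localPosition = EstimatePosition(orientation, elbowOffset, forearmLength);
        transform.localRotation = orientation;
    }
}
```

A real arm model also moves the shoulder, elbow, and wrist as the rotation changes, which is why the SDK's version feels far more natural than this fixed pivot.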

Event System (Input System)

The custom Event System (or Input System) provided by Daydream allows for easy interaction between the controller’s pointer and GameObjects within the environment.

To use the Daydream Event System, make sure the GvrEventSystem prefab is added to your scene’s hierarchy. The GvrEventSystem contains the GvrPointerInputModule script, an implementation of BaseInputModule, which is part of Unity’s standard Event System.

The GvrEventSystem prefab is used throughout this chapter to interact with 3D objects in the scene, and it is added by default to the scenes as a starting point in all the recipes. Refer to Recipe 2.5 for the starting point of the recipes in this chapter.
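Because the input module plugs into Unity's standard Event System, an object can respond to the pointer by implementing one of Unity's event interfaces. The sketch below assumes the scene contains the GvrEventSystem prefab, that the object has a Collider, and that a physics raycaster for the pointer (such as the SDK's GvrPointerPhysicsRaycaster) is present. ClickToRecolor is a hypothetical name.

```csharp
using UnityEngine;
using UnityEngine.EventSystems;

// Illustrative sketch only: recolors this object each time the pointer clicks it.
public class ClickToRecolor : MonoBehaviour, IPointerClickHandler
{
    // Number of clicks received so far.
    public int ClickCount { get; private set; }

    // Called by the Event System when the pointer clicks this object.
    public void OnPointerClick(PointerEventData eventData)
    {
        ClickCount++;

        // Guard against objects that have no Renderer attached.
        Renderer objectRenderer = GetComponent<Renderer>();
        if (objectRenderer != null)
        {
            objectRenderer.material.color = Random.ColorHSV();
        }
    }
}
```

Attach the script to a cube or similar object, point the laser at it, and press the click button to see it change color.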