Cloud NLP and Sentiment Analysis

All three of the dominant cloud providers (AWS, GCP, and Azure) have solid NLP engines that can be called via an API. This section explores NLP examples on all three clouds and then builds a real-world production NLP pipeline on AWS using serverless technology.

NLP on Azure

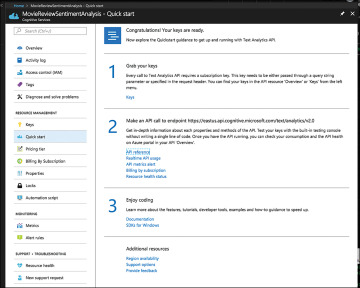

Microsoft Cognitive Services has a Text Analytics API that offers language detection, key phrase extraction, and sentiment analysis. In Figure 11.2, the endpoint is created so API calls can be made. This example takes a collection of negative movie reviews from the Cornell Computer Science Data Set on Movie Reviews (http://www.cs.cornell.edu/people/pabo/movie-review-data/) and uses it to walk through the API.

Figure 11.2 Microsoft Azure Cognitive Services API

First, the imports are done in the first block of the Jupyter Notebook.

In [1]: import requests
   ...: import os
   ...: import pandas as pd
   ...: import seaborn as sns
   ...: import matplotlib.pyplot as plt
   ...:

Next, an API key is read from the environment. The key was copied from the Keys section of the console shown in Figure 11.2 and exported as an environment variable, so it isn’t hard-coded. The base URL of the text API, which is used later, is also assigned to a variable.

In [4]: subscription_key = os.environ.get("AZURE_API_KEY")

In [5]: text_analytics_base_url = \
   ...: "https://eastus.api.cognitive.microsoft.com/text/analytics/v2.0/"
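Because os.environ.get quietly returns None when a variable is missing, a forgotten export only shows up later as a failed API call. A minimal sketch of a fail-fast alternative follows; the helper name require_env is hypothetical and not part of the notebook, and the key used in the example is a placeholder.

```python
import os


def require_env(name, environ=None):
    """Return the value of an environment variable or raise a clear error."""
    environ = os.environ if environ is None else environ
    value = environ.get(name)
    if not value:
        raise RuntimeError(f"Environment variable {name} is not set")
    return value


# An explicit mapping is passed here so the sketch runs without a real key.
fake_environ = {"AZURE_API_KEY": "not-a-real-key"}
print(require_env("AZURE_API_KEY", fake_environ))
```

In the notebook, require_env("AZURE_API_KEY") with no second argument would read from the real environment.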

Next, one of the negative reviews is formatted in the way the API expects.

In [9]: documents = {"documents":[]}
   ...: path = "../data/review_polarity/txt_sentoken/neg/cv000_29416.txt"
   ...: doc1 = open(path, "r")
   ...: output = doc1.readlines()
   ...: count = 0
   ...: for line in output:
   ...:     count +=1
   ...:     record = {"id": count, "language": "en", "text": line}
   ...:     documents["documents"].append(record)
   ...:
   ...: #print it out
   ...: documents

The data structure with the following shape is created.

Out[9]:
{'documents': [{'id': 1,
   'language': 'en',
   'text': 'plot : two teen couples go to a church party , drink and then drive . \n'},
  {'id': 2, 'language': 'en',
   'text': 'they get into an accident . \n'},
  {'id': 3,
   'language': 'en',
   'text': 'one of the guys dies , but his girlfriend continues to see him in her life, and has nightmares . \n'},
  {'id': 4, 'language': 'en', 'text': "what's the deal ? \n"},
  {'id': 5,
   'language': 'en',
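The loop that builds this structure could also be wrapped in a small reusable function. The sketch below is one possible variation, not the notebook's code: the name build_documents is hypothetical, and it makes two choices that differ from the loop shown, namely stripping trailing whitespace and skipping blank lines, and using string ids (the form in which ids appear in the scored output).

```python
def build_documents(lines, language="en"):
    """Wrap non-empty lines in a {"documents": [...]} request structure."""
    documents = {"documents": []}
    for line in lines:
        text = line.strip()
        if not text:
            continue  # skip blank lines rather than sending empty documents
        record = {
            "id": str(len(documents["documents"]) + 1),
            "language": language,
            "text": text,
        }
        documents["documents"].append(record)
    return documents


# Two lines from the review, with a blank line added to show the filtering.
sample = [
    "plot : two teen couples go to a church party , drink and then drive . \n",
    "\n",
    "they get into an accident . \n",
]
print(build_documents(sample))
```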

Finally, the sentiment analysis API is used to score the individual documents.

{'documents': [{'id': '1', 'score': 0.5},
  {'id': '2', 'score': 0.13049307465553284},
  {'id': '3', 'score': 0.09667149186134338},
  {'id': '4', 'score': 0.8442018032073975},
  {'id': '5', 'score': 0.808459997177124
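Scores like these come back from a POST request to the sentiment endpoint. A minimal sketch of how such a request might be assembled follows. It uses only the standard library so that it runs without a key, and the helper name build_sentiment_request is hypothetical. The sketch assumes the v2.0 sentiment endpoint is the base URL followed by sentiment and that the key is sent in the Ocp-Apim-Subscription-Key header. With the requests library imported earlier, the equivalent call would be requests.post(url, headers=headers, json=documents).

```python
import json
import urllib.request


def build_sentiment_request(base_url, subscription_key, documents):
    """Build (but do not send) a POST request for the sentiment endpoint."""
    url = base_url.rstrip("/") + "/sentiment"
    headers = {
        "Ocp-Apim-Subscription-Key": subscription_key,
        "Content-Type": "application/json",
    }
    body = json.dumps(documents).encode("utf-8")
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


request = build_sentiment_request(
    "https://eastus.api.cognitive.microsoft.com/text/analytics/v2.0/",
    "not-a-real-key",  # placeholder; the notebook reads the key from the environment
    {"documents": [{"id": "1", "language": "en", "text": "what's the deal ?"}]},
)
print(request.full_url)

# Sending the request would look like this (not executed here):
# with urllib.request.urlopen(request) as response:
#     sentiments = json.load(response)
```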

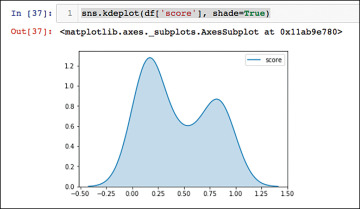

At this point, the returned scores can be converted into a Pandas DataFrame to do some exploratory data analysis (EDA). It isn’t a surprise that the median sentiment score for a negative review is 0.23 on a scale of 0 to 1, where 1 is extremely positive and 0 is extremely negative.

In [11]: df = pd.DataFrame(sentiments['documents'])

In [12]: df.describe()
Out[12]:
           score
count  35.000000
mean    0.439081
std     0.316936
min     0.037574
25%     0.159229
50%     0.233703
75%     0.803651
max     0.948562

A density plot makes the distribution easier to see. Figure 11.3 shows that the majority of the sentiment scores are highly negative.

Figure 11.3 Density Plot of Sentiment Scores
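A density plot is a kernel density estimate: each score contributes a small bump, and the bumps are summed. The sketch below illustrates the idea in pure Python using only the five scores visible in the API output earlier (the full set has 35). The function name gaussian_kde and the bandwidth of 0.1 are assumptions made for illustration; in the notebook, a plotting call such as seaborn's kdeplot on the score column would normally do this work.

```python
import math


def gaussian_kde(samples, bandwidth):
    """Return a function that estimates the density of `samples` at a point x."""
    n = len(samples)
    norm = 1.0 / (n * bandwidth * math.sqrt(2.0 * math.pi))

    def density(x):
        return norm * sum(
            math.exp(-0.5 * ((x - s) / bandwidth) ** 2) for s in samples
        )

    return density


# The five scores shown in the API output; not the complete data set.
scores = [0.5, 0.13049307465553284, 0.09667149186134338,
          0.8442018032073975, 0.808459997177124]
density = gaussian_kde(scores, bandwidth=0.1)  # bandwidth is an arbitrary choice

# Crude text rendering: one '#' per 0.1 units of estimated density.
for x in (0.1, 0.3, 0.5, 0.8):
    print(f"{x:.1f} {'#' * round(density(x) * 10)}")
```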

NLP on GCP

There is a lot to like about the Google Cloud Natural Language API (https://cloud.google.com/natural-language/docs/how-to). One of the convenient features of the API is that you can use it in two different ways: analyzing sentiment in a string, and also analyzing sentiment from Google Cloud Storage. Google Cloud also has a tremendously powerful command-line tool that makes it easy to explore their API. Finally, it has some fascinating AI APIs, some of which will be explored in this chapter: analyzing sentiment, analyzing entities, analyzing syntax, analyzing entity sentiment, and classifying content.

Exploring the Entity API

Using the gcloud command-line tool is a great way to explore what each of the APIs does. In this example, a phrase about LeBron James and the Cleveland Cavaliers is sent via the command line.

→ gcloud ml language analyze-entities --content="LeBron James plays for the Cleveland Cavaliers."

{
  "entities": [
    {
      "mentions": [
        {
          "text": {
            "beginOffset": 0,
            "content": "LeBron James"
          },
          "type": "PROPER"
        }
      ],
      "metadata": {
        "mid": "/m/01jz6d",
        "wikipedia_url": "https://en.wikipedia.org/wiki/LeBron_James"
      },
      "name": "LeBron James",
      "salience": 0.8991045,
      "type": "PERSON"
    },
    {
      "mentions": [
        {
          "text": {
            "beginOffset": 27,
            "content": "Cleveland Cavaliers"
          },
          "type": "PROPER"
        }
      ],
      "metadata": {
        "mid": "/m/0jm7n",
        "wikipedia_url": "https://en.wikipedia.org/wiki/Cleveland_Cavaliers"
      },
      "name": "Cleveland Cavaliers",
      "salience": 0.100895494,
      "type": "ORGANIZATION"
    }
  ],
  "language": "en"
}
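If the command's output is captured as a string or saved to a file, it can be post-processed with the standard json module. The sketch below ranks entities by salience; the function name summarize_entities is hypothetical, and the response text is a trimmed copy of the output above that keeps only the fields used.

```python
import json

# Trimmed copy of the gcloud response shown above.
response_text = """
{
  "entities": [
    {"name": "LeBron James", "type": "PERSON", "salience": 0.8991045,
     "metadata": {"wikipedia_url": "https://en.wikipedia.org/wiki/LeBron_James"}},
    {"name": "Cleveland Cavaliers", "type": "ORGANIZATION", "salience": 0.100895494,
     "metadata": {"wikipedia_url": "https://en.wikipedia.org/wiki/Cleveland_Cavaliers"}}
  ],
  "language": "en"
}
"""


def summarize_entities(raw_json):
    """Return (name, type, salience) tuples, most salient entity first."""
    entities = json.loads(raw_json)["entities"]
    ranked = sorted(entities, key=lambda e: e["salience"], reverse=True)
    return [(e["name"], e["type"], e["salience"]) for e in ranked]


for name, kind, salience in summarize_entities(response_text):
    print(f"{name:<20} {kind:<13} {salience:.3f}")
```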

A second way to explore the API is to use Python. To get an API key and authenticate, follow the instructions (https://cloud.google.com/docs/authentication/getting-started). Then, launch the Jupyter Notebook in the same shell where the GOOGLE_APPLICATION_CREDENTIALS variable is exported:

→ export GOOGLE_APPLICATION_CREDENTIALS=/Users/noahgift/cloudai-65b4e3299be1.json

→ jupyter notebook

Once this authentication process is complete, the rest is straightforward. First, the Python language API must be imported. (It can be installed via pip if it isn’t already: pip install --upgrade google-cloud-language. The enums and types modules imported below are part of releases of the library before 2.0.)

In [1]: # Imports the Google Cloud client library
   ...: from google.cloud import language
   ...: from google.cloud.language import enums
   ...: from google.cloud.language import types

Next, a phrase is sent to the API and entity metadata is returned with an analysis.

In [2]: text = "LeBron James plays for the Cleveland Cavaliers."
   ...: client = language.LanguageServiceClient()
   ...: document = types.Document(
   ...:     content=text,
   ...:     type=enums.Document.Type.PLAIN_TEXT)
   ...: entities = client.analyze_entities(document).entities
   ...:

The output has a similar look and feel to the command-line version, but it comes back as a Python list.

[name: "LeBron James"
type: PERSON
metadata {
  key: "mid"
  value: "/m/01jz6d"
}
metadata {
  key: "wikipedia_url"
  value: "https://en.wikipedia.org/wiki/LeBron_James"
}
salience: 0.8991044759750366
mentions {
  text {
    content: "LeBron James"
    begin_offset: -1
  }
  type: PROPER
}
, name: "Cleveland Cavaliers"
type: ORGANIZATION
metadata {
  key: "mid"
  value: "/m/0jm7n"
}
metadata {
  key: "wikipedia_url"
  value: "https://en.wikipedia.org/wiki/Cleveland_Cavaliers"
}
salience: 0.10089549422264099
mentions {
  text {
    content: "Cleveland Cavaliers"
    begin_offset: -1
  }
  type: PROPER
}
]

One takeaway is that this API could easily be combined with some of the explorations done in Chapter 6, “Predicting Social-Media Influence in the NBA.” It isn’t hard to imagine an AI application that finds extensive information about social influencers by using these NLP APIs as a starting point. Another takeaway is that the command-line tool GCP provides for its Cognitive APIs is quite powerful.

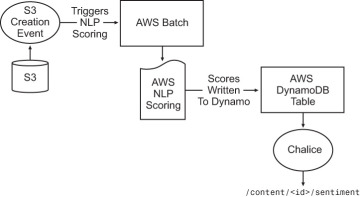

Production Serverless AI Pipeline for NLP on AWS

One thing AWS does well, perhaps better than either of the other “big three” clouds, is make it easy to create production applications that are easy to write and manage. One of its “game changer” innovations is AWS Lambda. It can be used both to orchestrate pipelines and to serve HTTP endpoints, as in the case of Chalice. Figure 11.4 describes a real-world production NLP pipeline.

Figure 11.4 Production Serverless NLP Pipeline on AWS

To get started with AWS sentiment analysis, some libraries need to be imported.

In [1]: import pandas as pd
   ...: import boto3
   ...: import json

Next, a simple test is created.

In [5]: comprehend = boto3.client(service_name='comprehend')
   ...: text = "It is raining today in Seattle"
   ...: print('Calling DetectSentiment')
   ...: print(json.dumps(comprehend.detect_sentiment(
   ...:     Text=text, LanguageCode='en'), sort_keys=True, indent=4))
   ...: print('End of DetectSentiment\n')
   ...:

The output shows a “SentimentScore.”

Calling DetectSentiment
{
    "ResponseMetadata": {
        "HTTPHeaders": {
            "connection": "keep-alive",
            "content-length": "164",
            "content-type": "application/x-amz-json-1.1",
            "date": "Mon, 05 Mar 2018 05:38:53 GMT",
            "x-amzn-requestid": "7d532149-2037-11e8-b422-3534e4f7cfa2"
        },
        "HTTPStatusCode": 200,
        "RequestId": "7d532149-2037-11e8-b422-3534e4f7cfa2",
        "RetryAttempts": 0
    },
    "Sentiment": "NEUTRAL",
    "SentimentScore": {
        "Mixed": 0.002063251566141844,
        "Negative": 0.013271247036755085,
        "Neutral": 0.9274052977561951,
        "Positive": 0.057260122150182724
    }
}
End of DetectSentiment
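The Sentiment label corresponds to the highest of the four values in SentimentScore. A minimal sketch of pulling that pair out of a response follows; the function name dominant_sentiment is hypothetical, and the dictionary simply copies the scores from the output above rather than calling the service.

```python
def dominant_sentiment(response):
    """Return the (label, score) pair with the highest score in a response."""
    scores = response["SentimentScore"]
    label = max(scores, key=scores.get)
    return label, scores[label]


# SentimentScore values copied from the output above.
response = {
    "Sentiment": "NEUTRAL",
    "SentimentScore": {
        "Mixed": 0.002063251566141844,
        "Negative": 0.013271247036755085,
        "Neutral": 0.9274052977561951,
        "Positive": 0.057260122150182724,
    },
}
label, score = dominant_sentiment(response)
print(label, round(score, 3))
```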

Now, in a more realistic example, we’ll use the previous “negative movie reviews document” from the Azure example. The document is read in.

In [6]: path = "/Users/noahgift/Desktop/review_polarity/txt_sentoken/neg/cv000_29416.txt"
   ...: doc1 = open(path, "r")
   ...: output = doc1.readlines()
   ...:

Next, one of the “documents” (remember, each line is a document according to NLP APIs) is scored.

In [7]: print(json.dumps(comprehend.detect_sentiment(
   ...:     Text=output[2], LanguageCode='en'), sort_keys=True, indent=4))

{
    "ResponseMetadata": {
        "HTTPHeaders": {
            "connection": "keep-alive",
            "content-length": "158",
            "content-type": "application/x-amz-json-1.1",
            "date": "Mon, 05 Mar 2018 05:43:25 GMT",
            "x-amzn-requestid": "1fa0f6e8-2038-11e8-ae6f-9f137b5a61cb"
        },
        "HTTPStatusCode": 200,
        "RequestId": "1fa0f6e8-2038-11e8-ae6f-9f137b5a61cb",
        "RetryAttempts": 0
    },
    "Sentiment": "NEUTRAL",
    "SentimentScore": {
        "Mixed": 0.1490383893251419,
        "Negative": 0.3341641128063202,
        "Neutral": 0.468740850687027,
        "Positive": 0.04805663228034973
    }
}

Although the overall label is NEUTRAL, the Negative score (0.33) is far higher than the Positive score (0.05), which is consistent with the low score Azure gave the same line. Another interesting thing this API can do is score all of the lines together as one document, which gives a single overall sentiment for the review rather than one per line. Here is what that looks like.

In [8]: whole_doc = ', '.join(map(str, output))

In [9]: print(json.dumps(comprehend.detect_sentiment(
   ...:     Text=whole_doc, LanguageCode='en'), sort_keys=True, indent=4))

{
    "ResponseMetadata": {
        "HTTPHeaders": {
            "connection": "keep-alive",
            "content-length": "158",
            "content-type": "application/x-amz-json-1.1",
            "date": "Mon, 05 Mar 2018 05:46:12 GMT",
            "x-amzn-requestid": "8296fa1a-2038-11e8-a5b9-b5b3e257e796"
        }
    },
    "Sentiment": "MIXED",
    "SentimentScore": {
        "Mixed": 0.48351600766181946,
        "Negative": 0.2868672013282776,
        "Neutral": 0.12633098661899567,
        "Positive": 0.1032857820391655
    }
}
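Joining every line into one string works for a short review, but the service limits how much text a single call accepts. The sketch below groups lines into chunks that stay under a byte budget so each chunk can be scored separately. The function name chunk_lines is hypothetical, and the default of 5000 bytes is an assumption that should be checked against the current DetectSentiment limits.

```python
def chunk_lines(lines, max_bytes=5000):
    """Group lines into strings whose UTF-8 size stays within max_bytes."""
    chunks, current, size = [], [], 0
    for line in lines:
        line_size = len(line.encode("utf-8"))
        if line_size > max_bytes:
            raise ValueError("a single line exceeds the size limit")
        if current and size + line_size > max_bytes:
            # Adding this line would overflow the budget, so start a new chunk.
            chunks.append("".join(current))
            current, size = [], 0
        current.append(line)
        size += line_size
    if current:
        chunks.append("".join(current))
    return chunks


# A small budget is used here only to make the splitting visible.
lines = ["they get into an accident . \n"] * 6
for chunk in chunk_lines(lines, max_bytes=100):
    print(len(chunk.encode("utf-8")), "bytes")
```

Each chunk could then be passed as the Text argument of detect_sentiment in place of whole_doc.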

An interesting takeaway is that the AWS API has some hidden tricks up its sleeve and offers a nuance that is missing from the Azure API. In the previous Azure example, the Seaborn output showed that there was indeed a bimodal distribution, with a minority of the lines liking the movie and a majority disliking it. The way AWS presents the result as “mixed” sums this up quite nicely.

The only thing left to do is to create a simple Chalice app that takes the scored inputs that are written to DynamoDB and serves them out. Here is what that looks like.

from uuid import uuid4
import logging
import time

from chalice import Chalice
import boto3
from boto3.dynamodb.conditions import Key
from pythonjsonlogger import jsonlogger

#APP ENVIRONMENTAL VARIABLES
REGION = "us-east-1"
APP = "nlp-api"
NLP_TABLE = "nlp-table"

#initialize logging
log = logging.getLogger("nlp-api")
LOGHANDLER = logging.StreamHandler()
FORMATTER = jsonlogger.JsonFormatter()
LOGHANDLER.setFormatter(FORMATTER)
log.addHandler(LOGHANDLER)
log.setLevel(logging.INFO)

app = Chalice(app_name='nlp-api')
app.debug = True

def dynamodb_client():
    """Create Dynamodb Client"""

    extra_msg = {"region_name": REGION, "aws_service": "dynamodb"}
    client = boto3.client('dynamodb', region_name=REGION)
    log.info("dynamodb CLIENT connection initiated", extra=extra_msg)
    return client

def dynamodb_resource():
    """Create Dynamodb Resource"""

    extra_msg = {"region_name": REGION, "aws_service": "dynamodb"}
    resource = boto3.resource('dynamodb', region_name=REGION)
    log.info("dynamodb RESOURCE connection initiated", extra=extra_msg)
    return resource

def create_nlp_record(score):
    """Creates nlp Table Record"""

    db = dynamodb_resource()
    pd_table = db.Table(NLP_TABLE)
    guid = str(uuid4())
    res = pd_table.put_item(
        Item={
            'guid': guid,
            'UpdateTime' : time.asctime(),
            'nlp-score': score
        }
    )
    extra_msg = {"region_name": REGION, "aws_service": "dynamodb"}
    log.info(f"Created NLP Record with result {res}", extra=extra_msg)
    return guid

def query_nlp_record():
    """Scans nlp table and retrieves all records"""

    db = dynamodb_resource()
    extra_msg = {"region_name": REGION, "aws_service": "dynamodb",
        "nlp_table":NLP_TABLE}
    log.info("Table Scan of NLP table", extra=extra_msg)
    pd_table = db.Table(NLP_TABLE)
    res = pd_table.scan()
    records = res['Items']
    return records

@app.route('/')
def index():
    """Default Route"""

    return {'hello': 'world'}

@app.route("/nlp/list")
def nlp_list():
    """list nlp scores"""

    extra_msg = {"region_name": REGION,
        "aws_service": "dynamodb",
        "route":"/nlp/list"}
    log.info("List NLP Records via route", extra=extra_msg)
    res = query_nlp_record()
    return res
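One practical detail when storing Comprehend scores with create_nlp_record is that the boto3 DynamoDB resource rejects Python float values and expects Decimal instead. A minimal sketch of one way to convert a score before writing it follows; the helper name to_dynamodb_numbers is hypothetical and is not part of the app above.

```python
import json
from decimal import Decimal


def to_dynamodb_numbers(value):
    """Return a copy of a JSON-like value with every float turned into Decimal."""
    # Round-tripping through JSON lets the parser build Decimals from the
    # textual form of each float; integers and strings are left unchanged.
    return json.loads(json.dumps(value), parse_float=Decimal)


# Two of the SentimentScore values from the whole-document example.
score = to_dynamodb_numbers({
    "Mixed": 0.48351600766181946,
    "Negative": 0.2868672013282776,
})
print(score)

# create_nlp_record(score) could then store the converted values.
```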