4.3 Performance of OpenACC Programs

Why did the code slow down? The first suspect for any experienced GPU programmer is data movement. The device-to-host memory bottleneck is usually the culprit when performance is as disastrous as this, and that indeed turns out to be the case.

You could choose to use a sophisticated performance analysis tool, but in this case the problem is so egregious that you can probably find enlightenment with something as simple as the PGI profiling environment variable:

    export PGI_ACC_TIME=1

If you run the executable again with this option enabled, you will get additional output, including this:

    Accelerator Kernel Timing data
      main NVIDIA devicenum=0
        time(us): 11,460,015
        31: compute region reached 3372 times
            33: kernel launched 3372 times
                grid: [32x250] block: [32x4]
                device time(us): total=127,433 max=54 min=37 avg=37
                elapsed time(us): total=243,025 max=2,856 min=60 avg=72
        31: data region reached 6744 times
            31: data copyin transfers: 3372
                device time(us): total=2,375,875 max=919 min=694 avg=704
            39: data copyout transfers: 3372
                device time(us): total=2,093,889 max=889 min=616 avg=620
        41: compute region reached 3372 times
            41: data copyin transfers: 3372
                device time(us): total=37,899 max=2,233 min=6 avg=11
            43: kernel launched 3372 times
                grid: [32x250] block: [32x4]
                device time(us): total=178,137 max=66 min=52 avg=52
                elapsed time(us): total=297,958 max=2,276 min=74 avg=88
            43: reduction kernel launched 3372 times
                grid: [1] block: [256]
                device time(us): total=47,492 max=25 min=13 avg=14
                elapsed time(us): total=136,116 max=1,011 min=32 avg=40
            43: data copyout transfers: 3372
                device time(us): total=60,892 max=518 min=13 avg=18
        41: data region reached 6744 times
            41: data copyin transfers: 6744
                device time(us): total=4,445,950 max=872 min=651 avg=659
            49: data copyout transfers: 3372
                device time(us): total=2,092,448 max=1,935 min=616 avg=620
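
The per-line device times in this output can be tallied with a few lines of code. The following is a minimal Python sketch, not part of the original program: the numbers are copied from the profile above, while the dictionary labels and variable names are made up for illustration.

```python
# Device times in microseconds, copied from the PGI_ACC_TIME output.
# The labels are descriptive names chosen for this sketch only.
transfer_us = {
    "line 31 copyin": 2_375_875,
    "line 39 copyout": 2_093_889,
    "line 41 copyin (compute region)": 37_899,
    "line 43 copyout": 60_892,
    "line 41 copyin (data region)": 4_445_950,
    "line 49 copyout": 2_092_448,
}
kernel_us = {
    "line 33 kernel": 127_433,
    "line 43 kernel": 178_137,
    "line 43 reduction kernel": 47_492,
}
reported_total_us = 11_460_015  # the "time(us)" line at the top of the profile
iterations = 3372               # times each compute region was reached

transfer_total = sum(transfer_us.values())
kernel_total = sum(kernel_us.values())
transfer_share = transfer_total / reported_total_us

print(f"data transfers: {transfer_total / 1e6:.2f} s ({transfer_share:.1%} of total)")
print(f"kernels:        {kernel_total / 1e6:.2f} s")
print(f"transfer time per iteration: {transfer_total / iterations:.0f} us")
```

The transfer and kernel entries add up exactly to the reported total, so nothing in the profile is unaccounted for, and the transfers make up roughly 97 percent of it.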

The problem is not subtle. Line numbers 31 and 41 correspond to your two kernels directives. Each resulted in a large number of data transfers, which consumed most of the time. Of the total sampled time of about 11.5 seconds (all times here are in microseconds), roughly 11.1 seconds was spent in data transfers and only about 0.35 seconds in the kernels themselves. That is no surprise given that the profile shows multiple data transfers for every kernel launch. How did this happen?

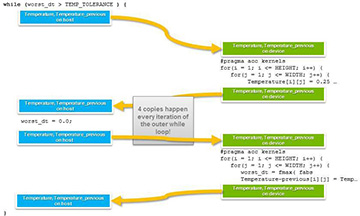

Recall that the kernels directive does the safe thing: When in doubt, copy any data used within the kernel to the device at the beginning of the kernels region, and off at the end. This paranoid approach guarantees correct results, but it can be expensive. Let’s see how that worked in Figure 4.2.

Figure 4.2. Multiple data copies per iteration

What OpenACC has done is to make sure that each time you launch a device kernel, any data involved is copied to the device, and at the end of the kernels region it is all copied back. This is safe, but it results in two large arrays being copied back and forth twice for each iteration of the main loop. These are two 1,000 × 1,000 double-precision arrays, so this is (2 arrays) × (1,000 × 1,000 grid points/array) × (8 bytes/grid point) = 16 MB of memory copies every iteration.

Note that we ignore worst_dt. In general, the cost of copying an 8-byte scalar (non-array) variable is negligible.
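
The 16 MB estimate can be reproduced, and loosely cross-checked against the profile, with a short Python sketch. It assumes that each copyin reported at line 31 moves exactly one full 1,000 × 1,000 array, which the profile does not state outright; the variable names are illustrative.

```python
ROWS = COLUMNS = 1_000   # grid points per side, as in the text
BYTES_PER_DOUBLE = 8     # double precision
NUM_ARRAYS = 2           # the two large arrays in the main loop

bytes_per_array = ROWS * COLUMNS * BYTES_PER_DOUBLE
bytes_both_arrays = NUM_ARRAYS * bytes_per_array

# Average device time of one line-31 copyin, taken from the profile (us).
# Assumption: one such transfer carries one full array.
avg_copyin_us = 704
implied_bandwidth_gb_s = bytes_per_array / (avg_copyin_us * 1e-6) / 1e9

print(f"one array:   {bytes_per_array / 1e6:.0f} MB")
print(f"both arrays: {bytes_both_arrays / 1e6:.0f} MB")
print(f"implied transfer rate: {implied_bandwidth_gb_s:.1f} GB/s")
```

Under that assumption the implied rate is a little over 11 GB/s. That suggests the individual copies are not unusually slow; the cost comes from repeating them on every iteration.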