- Planning an Approach

- Planning the Workbook Structure

- The VBA Code

- The Analysis Sheets

Planning the Workbook Structure

Your workbook needs three types of worksheets: one type to collect the data from your web queries, one type to bring the query data together in a single place, and one type to run whatever analyses you decide are needed. (You also need a VBA module to hold your code, but that is covered in a later section of this chapter.)

Those three types of worksheet are discussed in the next three sections.

Query Sheets

If you haven’t used Excel to retrieve data from the Web, you might be surprised at how easy it is. I’m not speaking here of using your browser to get to a web page, selecting and copying some or part of its contents, and then pasting back into the workbook. I’m speaking of queries that can execute automatically and on a predetermined schedule, thus enabling you to walk away and let the computer gather data without you micromanaging it.

Suppose that you want to retrieve data from Amazon about a book entitled Statistical Analysis with Excel. You open a new workbook and rename an unused worksheet to something such as “Stats.”

Next, start your browser if necessary and navigate to Amazon’s page for that book. When you’re there, copy the page’s full address from the browser’s address box—drag across the address to highlight it and either press Ctrl + C or use the browser’s Edit menu to choose the Copy command.

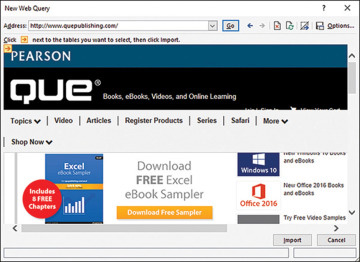

Switch back to Excel and click the Ribbon’s Data tab. Click the From Web button in the Get External Data group. The New Web Query window displays as shown in Figure 1.1.

Figure 1.1 What you see at first depends on your browser’s home page.

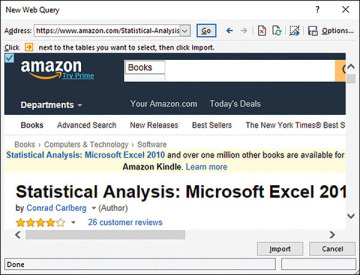

Drag through the address that appears in the Address box and press Ctrl + V to paste the address you copied. When you click the Go button, the query window opens the Amazon page (see Figure 1.2).

Figure 1.2 Web pages sometimes consist of several tables, rectangular areas that divide the page into different segments.

Typically you see one, and sometimes more than one, square icon (a red arrow on a yellow background) that identifies the location of part of the web page, called a table. You can select a table by clicking its icon. When you click one of these icons to select it, the icon turns blue to indicate that you want to download the data in that table.

I recommend that you select the entire page by clicking the icon in the upper-left corner of the window. If you select only a table or tables that contain the data you’re interested in, it could easily happen that a day, week, or month from now the page might be changed, so that the data you want appears on the same page but in a different table. Then your query will miss the data.

But if you select the entire web page instead of just a table or tables, the page’s owner would have to remove the data you’re interested in completely for you to miss it, and in that case it wouldn’t matter how much of the page you selected to download.

The individual table icons are useful mainly when you want to do a one-time-only download from a web page. Then you might want to avoid downloading a lot of extraneous stuff that would just make it harder to find the data you’re after. In the type of case I’m describing here, though, you’ll let Excel do the finding for you.

Furthermore, you don’t save much time or bandwidth by selecting just a subset of the web page. In most cases you’re picking up a couple of megabytes of text at most in an entire page.

Speed of Execution

Nevertheless, you should be aware of a speed versus version tradeoff. I have learned that using Excel 2007 and more recent versions, web queries can take significantly more time to complete than in earlier versions of Excel. Among the changes made to Excel 2007 was the addition of much more thorough checks of the data returned from web pages for malicious content.

I’ve found that when using an earlier version, it takes about 30 seconds to execute eight web queries in the way I’m describing here. Using Excel 2016, it takes about three times as long.

The basic intent of the project I’m describing here is to automatically and regularly update your downloaded data, so it probably seems unimportant to worry about whether the process takes half a minute or a minute and a half. Occasionally, though, for one reason or another I want to get an immediate update of the information and so I force the process to run manually. On those occasions I’d rather not wait.

Bringing the Data Back

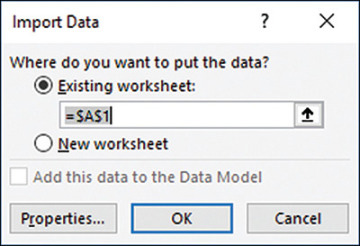

After you have clicked a red-and-yellow icon to turn it blue (using, I hope, the icon in the upper-left corner of the New Web Query window so that you get the entire page), click the Import button at the bottom of the New Web Query window. After a few seconds, the Import Data window appears as shown in Figure 1.3.

Figure 1.3 You can import immediately, but it’s a good idea to check the property settings first.

Accept the default destination of cell A1 and click OK. (The reason to use cell A1 becomes apparent when it’s time to refresh the query using VBA, later in this chapter.) There are some useful properties to set, so I recommend that you click the Properties button before you complete the import. The Properties window that displays is shown in Figure 1.4.

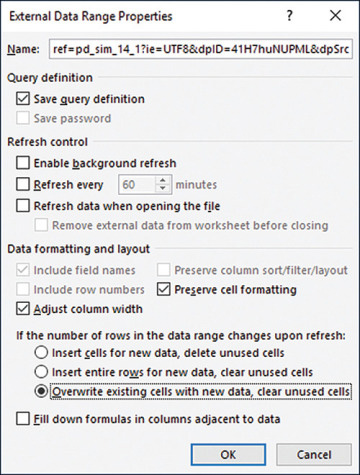

Be sure that the Save Query Definition box is checked. That way you can repeatedly and automatically run the query without having to define it all over again.

The Enable Background Refresh checkbox requires a little explanation. If it is filled, any VBA procedure that is running continues running as the query executes, and other direct actions by the user can proceed normally. Sometimes that can cause problems if a procedure depends on the results of the query: If the procedure expects to find data that isn’t available yet, you might get a runtime error or a meaningless result. Therefore, I usually clear the Enable Background Refresh checkbox.

Figure 1.4 By default the Save Query Definition checkbox is filled, but you should verify the setting when you create the query.

The various options in the Properties dialog box listed under Data Formatting and Layout are subtly different but can be important. I spend three pages detailing the differences in another Que book, Managing Data with Excel, and I don’t propose to do it again here. For present purposes, you might just as well accept the default values.

Click OK when you have made any changes you want and then click OK in the Import Data window. Excel completes the query, usually within a few seconds, and writes the results to the worksheet (see Figure 1.5).

Figure 1.5 When the query has finished executing, you wind up with a haystack of text and numbers. The next step is to find the needle.

Finding the Data

After the data has been retrieved from the web page, the next task is to locate the piece or pieces of information you’re looking for. I want to stress, though, that you need do this once only for each product you’re tracking—and quite possibly just once for all the products. It depends on how the web page administrator is managing the data.

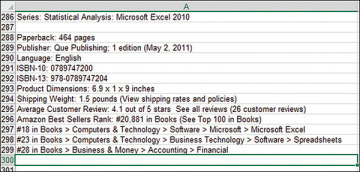

What you need to look for is a string of text that’s normally a constant: one that doesn’t change from hour to hour or day to day. That text string should be near—ideally, adjacent to—the data you want to retrieve. Figure 1.6 shows a portion of the results of a query.

Figure 1.6 In this case, the string Sellers Rank: in cell A296 uniquely locates the product ranking.

If you use Excel’s Find feature to scan a worksheet for the string Sellers Rank:, you can locate the worksheet cell that also contains the ranking for the product. With just a little more work, which you can easily automate and which I describe in the next section about VBA code, you can isolate the actual ranking from the surrounding text; it’s that ranking that you’re after.

Why not just note the cell address where the ranking is found after the query is finished? That would work fine if you could depend on the web page’s layout remaining static. But the website administrator has only to add an extra line, or remove one, above the data’s current location, and that will throw off the location of the cell with the data you’re after. No, you have to look for it each time, and the Find operation takes place very quickly anyway.

Summary Sheets

After you’ve acquired the data from a web page and isolated the figure you’re looking for, you need a place to put that figure, plus other relevant information such as date and time. That place is normally a separate worksheet. You expect to be querying the same web page repeatedly, as hours and days elapse. Therefore, you’ll want to store information that you’ve already retrieved somewhere that won’t get overwritten the next time the query runs.

So, establish an unused worksheet and name it something appropriate such as Summary or Synthesis or All Products. There are a few structural rules covered in the next section that you’ll find helpful to follow. But you can include some other useful analyses on the summary sheet, as long as they don’t interfere with the basic structure.

Structuring the Summary Sheet

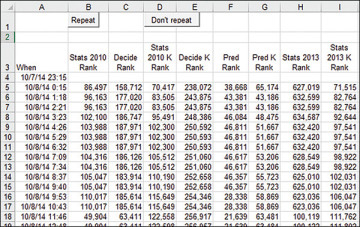

Figure 1.7 shows some of the structures that I put on my summary sheet.

Figure 1.7 You can put snapshot analyses supported by worksheet functions on the summary sheet.

In Figure 1.7, the first few columns are reserved for the rankings of several books that I have obtained via web queries from the appropriate Amazon pages. I also store the date and time the queries finished executing in column A. That time data provides my basis for longitudinal summaries: a baseline for the forecasting analyses that I discuss in Chapters 3 through 6 and 11.

It’s at this point that you have a decision to make. It’s nice to be able to retrieve data about sales rankings for products such as books. If you’ve written a good one, it’s gratifying to see the rankings drop as time goes by. (Remember, numerically lower rankings are better: A rank of 1 is a best seller.) But you likely never got paid a royalty or a commission, or had to fill a re-order, strictly on the basis of a sales ranking. It’s the sales themselves that you’re ultimately seeking: Granted that intermediate objectives such as clicks and conversions and rankings are key performance indicators, they don’t directly represent revenue.

From Rankings to Sales

So how do you translate sales rankings into a count of sales? I started by tracking sales rankings on Amazon for about a week and noticed some points of interest.

Telling a Sale from No Sale

A jump from a poorer ranking to a better ranking probably means the sale of at least one item. If the product has no sales during a given period, its ranking declines as other products do sell and move up. (It is of course possible that a book might experience sales even as its ranking declines, if its competition experiences greater sales.)

Ranking Sales

How do you rank sales? You can’t do it strictly on the number sold. A book, for example, might have sold 200 copies over a two-year period. Another book might have sold 100 copies since it was published last week. The second book is clearly performing better than the first, so you somehow have to combine elapsed time with number sold. Amazon doesn’t say, but my guess would be that the rankings are based in part on the ratio of sales to days elapsed since publication—in other words, sales per day.

Improved Rankings Without Sales

There are periods when an item’s ranking improves very gradually over a period of hours. There’s no reason to believe that an improvement from a ranking of, say, 20,000 to 19,999 indicates a sale. More likely it is a result of another day passing and the rankings recalculating accordingly. That means that before you conclude a sale took place, you need a credible minimum criterion.

Deciding on a Criterion

The criterion should be a rate, not a constant number. If a book jumps from a ranking of 200,101 to 200,001, that 100-place increase is considerably different from a book that jumps from a ranking of 101 to 1. I decided to conclude that a sale had taken place if an increase in rankings equaled or exceeded ten percent of the prior ranking. So, if a book ranked 15,000 at 3:00 p.m. and 13,000 at 4:00 p.m.:

(15000 − 13000)/15000 = 0.13 or 13%

I conclude that a sale took place.

Structuring the Formula

Suppose that I have two rankings for a given product, one taken at 3:00 p.m. in cell C18 and one taken at 4:00 p.m. in cell C19. If I want to test whether a sale took place between 3:00 p.m. and 4:00 p.m., I can enter this formula in, say, L19:

=IF((C18-C19)/C18>0.1,1,0)

The formula returns a 1 if the difference between the two rankings is positive (for example, an increase from a ranking of 1,000 to 900 is positive) and exceeds 10% of the earlier ranking. The formula returns a zero otherwise. After the formula is established on the worksheet, I use the same VBA code that re-executes the queries to copy the formula to the next available row.

Snapshot Formulas

I also like to watch one statistic that doesn’t depend on an ordered baseline of data the way that sales estimates do. That is the minimum (that is, the highest) sales ranking attained by each book since I started tracking the rankings.

I use Excel’s MIN( ) function to get that analysis for each book (in hard copy format). I put the functions at the top of the columns so that they won’t interfere with the results that come back from the web pages as the queries execute over time.

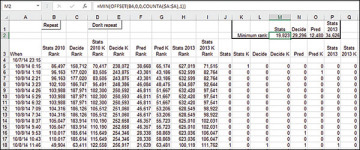

Figure 1.8 shows what those analyses look like. To get the minimum, best sales ranking for the same book, I use this formula in cell M2:

= MIN(OFFSET(B4,0,0,COUNTA($A:$A),1))

Figure 1.8 You can put snapshot analyses supported by worksheet functions on the summary sheet.

The formula returns the smallest numeric value for the fourth to the final populated row in column B.

Customizing Your Formulas

As shown in the final section of this chapter, I keep a monthly total of sales by book title in a pivot table that immediately follows the final and most current row of downloaded data. But because I often find it useful to know the total sales on the current day, I make a special provision for that. I keep it next to the pivot table that records monthly sales. It totals the number of books sold on the current day, regardless of the book title.

The formula that returns the current day’s total uses two named ranges, ranges that relocate themselves as each hour passes. The ranges are named RecentDays and RecentSales. Both range names take advantage of Excel’s mixed addressing feature.

For the formula to work properly, it needs to locate the rows that contain the data that was downloaded on the current day. Suppose that I select cell N2500 and define the range name RecentDays as referring to this range on the worksheet named Summary:

Summary!$A2468:$A2497

The name I have defined exists 14 columns to the left of cell N2500, and it occupies the rows from 33 to 4 rows above cell N2500—that is, the range encompasses 30 rows.

But the range name involves a mixed reference: a combination of a fixed and a relative reference. In this case, the column is fixed (by the dollar signs) to column A. We could enter a formula that uses the defined name in, say, Column B, and then copy and paste the that formula into column C. The formula would still refer to cells in column A because the dollar signs fix it there.

But if we copied and pasted the formula into cell N2501—that is, one row below where it was originally entered—the relative portion of the range’s address would increment by one row. As used in cell N2501, the mixed reference in RecentDays would point to $A2469:$A2498. The reference is relative to the location of whatever cell uses it.

I use the same approach with the range name RecentSales, which also uses a mixed reference but captures more than just one column. Here’s what RecentSales refers to, again assuming that cell N2500 is active when I define the name:

=Summary!$J2469:$Q2498

On the Summary sheet of the Collector workbook, column A contains the date and time when the rankings in columns B through I were downloaded. Columns J through Q contain a 1 (if the change in rankings indicate that a particular book was sold) or a 0 (otherwise).

Suppose that the most recent download wrote the ranking in row 2241. Suppose further that we array enter this formula in cell N2244:

=SUM(IF(DAY(RecentDays)=DAY(NOW( )),RecentSales,0))

When the range names RecentDays and RecentSales are used in a cell in row 2244, the relative portions of the mixed references cause the names to refer to values in rows 2212 through 2241. So the array formula does the following:

It checks to see if the value that identifies the current day (returned by the function DAY) equals the days in the range RecentDays (that’s the range A2212:A2241 as the name is used in row 2244). If the values are equal, then the record was downloaded today.

If a record in rows 2212 through 2241 was downloaded today, the array formula includes it in the sum of the values in the range named RecentSales. As used in the array formula, that is the range of 1s and 0s in J2212 through Q2241.

And that results in the number of inferred sales made today. Because the named ranges include 30 rows, the formula does not have to look all the way back to the beginning of the data in row 5. Because the named ranges include 30 rows, the formula is pretty sure to include all the hourly downloads that took place on the current day. The IF function ensures that the SUM function will ignore records downloaded on any prior day.

As you’ll see in this chapter’s section on VBA, when a new record is captured from a website and written to the worksheet, a new row is inserted at the bottom of the existing set of records. So doing pushes the cell with the array formula one row further down the worksheet, and the defined names now refer to ranges one row further down than before the insertion took place. So the array formula always points to a range of cells from 33 to 4 rows above the array formula itself.

You will doubtless have many questions that you’d like to ask of your data. The little summary formula that this section has described is idiosyncratic and of limited value beyond the specific data set that the Collector project downloads. I’ve spent this much time on it principally to demonstrate that Excel’s worksheet tools, formulas, functions and names are powerful enough to accommodate very specialized requirements.

It’s time to look closely at the VBA code needed to automate the project.