Related Fields

In the previous section, we highlighted a few AR applications. Other compelling applications match the definition of AR we have given only tangentially. These applications often come from the related fields of mixed reality, ubiquitous computing, and virtual reality, which we briefly discuss here.

Mixed Reality Continuum

A user immersed in virtual reality experiences only virtual stimuli, for example, inside a CAVE (a room whose walls consist of stereoscopic back-projections) or when wearing a closed HMD. The space between reality and virtual reality, which allows real and virtual elements to be combined to varying degrees, is called mixed reality (MR). In fact, some people prefer the term “mixed reality” over “augmented reality” because they appreciate the broader, more encompassing notion of MR.

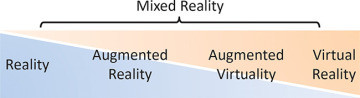

This view can be attributed to Milgram and Kishino [1994], who proposed a continuum (Figure 1.30) spanning from reality to virtual reality. They characterized MR as follows:

[MR involves the] merging of real and virtual worlds somewhere along the “virtuality continuum” which connects completely real environments to completely virtual ones.

Figure 1.30 The mixed reality continuum captures all possible combinations of the real and virtual worlds.

Benford et al. [1998] go one step further, arguing that a complex environment will often be composed of multiple displays and adjacent spaces, which constitute “mixed realities” (note the plural). These multiple spaces meet at “mixed reality boundaries.”

According to this perspective, augmented reality contains primarily real elements and is therefore closer to reality. For example, a user with an AR app on a smartphone continues to perceive the real world in the normal way, with some additional elements presented on the smartphone. The real-world experience clearly dominates in such a case. The opposite concept, augmented virtuality, applies when primarily virtual elements are present. As an example, imagine an online role-playing game in which the avatars’ faces are textured in real time with video captured of the players’ faces. Everything in this game world except the faces is virtual.

Virtual Reality

At the far right end of the MR continuum, virtual reality immerses a user in a completely computer-generated environment. This removes the constraints of the physical world on what a user can do or experience in VR. VR is becoming increasingly popular for computer games, and new HMD gaming devices, such as the Oculus Rift and HTC Vive, are receiving a great deal of public attention. Such devices are also suitable for augmented virtuality applications, so AR and VR can easily coexist within the MR continuum. As we will see later, transitional interfaces can be designed to combine the advantages of both concepts.

Ubiquitous Computing

Mark Weiser proposed the concept of ubiquitous computing (ubicomp) in his seminal 1991 essay. His work anticipates the massive introduction of digital technology into everyday life. Contrasting ubicomp with virtual reality, he advocates bringing the “virtuality” of computer-readable data into the physical world via a variety of computer form factors, which should sound familiar to today’s technology users: inch-scale “tabs,” foot-scale “pads,” and yard-scale “boards.”

Depending on the room, you may see more than 100 tabs, 10 or 20 pads, and one or two boards. This leads to our goal for initially deploying the hardware of embodied virtuality: hundreds of computers per room. [Weiser 1991]

This description includes the idea of mobile computing, which allows users to access digital information anytime and anywhere. It also anticipates the “Internet of Things,” in which all elements of our everyday environment are instrumented. Mackay [1998] has argued that such augmented objects should also be considered a form of AR. Consider, for example, home automation, driver assistance systems in cars, and smart factories capable of mass customization. If such technology works well, it essentially disappears from our perception. The first two sentences of Weiser’s 1991 article succinctly express this model:

The most profound technologies are those that disappear. They weave themselves into the fabric of everyday life until they are indistinguishable from it.

Ubicomp is primarily intended as “calm computing”; that is, it neither requires nor seeks human attention or control. However, at some point, control will still be necessary. For example, a human operator away from a desktop computer may need to steer complex equipment. In such a situation, an AR interface can present status updates, telemetry information, and control widgets directly in a view of the real environment. In this sense, AR and ubicomp complement each other extremely well: AR is the ideal user interface for ubicomp systems.

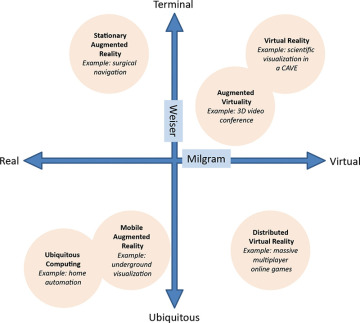

According to Weiser, VR is the opposite of ubicomp: he notes the monolithic nature of VR environments, such as a CAVE, which isolate a user from the real world. However, Newman et al. [2007] suggest that ubicomp actually combines two important characteristics: virtuality and ubiquity. Virtuality, as described by the MR continuum, expresses the degree to which virtual and real elements are mixed. Weiser considers location and place as computational inputs; accordingly, ubiquity describes the degree to which information access is independent of being in a fixed place (a terminal). Based on these two dimensions, we can arrange a family of technologies in a “Milgram–Weiser” chart, as shown in Figure 1.31.

Figure 1.31 The Milgram–Weiser chart visualizes the relationships of various user interface paradigms.