Analytics Success Story: Man Versus Machine

Since the emergence of computing systems, humans have relentlessly pursued machines capable of competing against them on tasks that require intelligence. These developments have been tested on a number of games and computing scenarios, and the most notable ones are described here. After looking at these examples, we can no longer say that machine learning is just for making predictions: it can also be used to compute and make intelligent decisions. Behind these games and contests, the intention has always been to push forward the ability of computers to handle the kinds of complex calculations needed to help discover new medical drugs; do the broad financial modeling needed to identify trends and carry out risk analysis; handle large database searches; and perform the massive calculations needed in advanced fields of science.

Checkers

Perhaps the earliest known example of machine learning within the context of games is Arthur Samuel’s work on a program that learned how to play checkers better than he could. Arthur Lee Samuel was an American pioneer in the field of computer gaming and artificial intelligence, and he coined the term machine learning in 1959. He developed the checkers-playing program on IBM 700 series computers while he was working at IBM in the 1950s and 1960s, using machine learning so that the program learned how to make the right decisions. The Samuel Checkers-Playing Program was among the world’s first successful self-learning programs and, as such, an early demonstration of the fundamental concept of artificial intelligence (AI).

Chess

Deep Blue was a chess-playing computer developed by IBM. It is known for being the first computer chess-playing system to win both a chess game and a chess match against a reigning world champion under regular time controls. Deep Blue won its first game against a world champion on 10 February 1996, when it defeated Garry Kasparov in game one of a six-game match. However, Kasparov won three and drew two of the following five games, defeating Deep Blue by a score of 4–2. Deep Blue was then heavily upgraded and played Kasparov again in May 1997. The match lasted several days and received massive media coverage around the world. It was the classic plot line of man versus machine. Deep Blue won game six, thereby winning the six-game rematch 3½–2½ and becoming the first computer system to defeat a reigning world champion in a match under standard chess tournament time controls.

Jeopardy!

A little more than a decade after Deep Blue, in another attempt to develop smart machines, IBM built Watson to compete against the best human players on the popular game show Jeopardy!. In 2011, Watson defeated the two best players in the show's history (the biggest money winner and the holder of the longest winning streak). IBM Watson is designed as a machine that learns from unstructured data sources, or textual knowledge repositories (IBM also calls it a cognitive machine). It was given numerous digital information sources to digest, and then it was trained with question-answer pairs from Jeopardy!. As it trained, its performance at answering Jeopardy! questions improved until it was able to best top human players. In short, IBM Watson learned how to answer questions: it learned how to decide which answer was best for a question it had never seen before. A detailed description of IBM Watson’s story on Jeopardy! and its technical capabilities is given below.

Go

In March 2016, Google's AlphaGo won a match against Lee Sedol, one of the top Go players. AlphaGo is a machine learning system based on deep learning. It was first trained on a large set of recorded Go games between top players, and then it trained by playing against itself. As it trained, its performance at Go improved until it became better than a top human player. AlphaGo learned how to decide what the best next move was for any Go board configuration.

IBM Watson Explained

IBM Watson is perhaps the smartest computer system built to date. A little more than a decade after the successful development of Deep Blue, IBM researchers came up with another, perhaps more challenging idea: a machine that could not only play Jeopardy! but also beat the best human champions on the game show. Compared to chess, Jeopardy! is much more challenging. Chess is well structured and has simple rules, which makes it a good match for computer processing, whereas Jeopardy! is a game designed for human intelligence and creativity. Watson therefore had to be built as a cognitive computing system, a natural extension of what humans can do at their best. To be competitive, Watson needed to work and think like a human. Making sense of the imprecision inherent in human language was the key to success. With Watson, cognitive computing forged a new partnership between humans and computers that scales and augments human expertise.

Watson is an extraordinary computer system—a novel combination of advanced hardware and software—designed to answer questions posed in natural human language. It was developed in 2010 by an IBM research team as part of a DeepQA project and was named after IBM’s first president, Thomas J. Watson. The motivation behind Watson was to look for a major research challenge (one that could rival the scientific and popular interest of Deep Blue), which would also have clear relevance to IBM’s business interests. The goal was to advance computational science by exploring new ways for computer technology to affect science, business, and society at large. Accordingly, IBM Research undertook a challenge to build a computer system that could compete at the human champion level in real time on the American TV quiz show Jeopardy! The extent of the challenge included fielding a real-time automatic contestant on the show—capable of listening, understanding, and responding—not merely a laboratory exercise.

In 2011, as a test of its abilities, Watson competed on the quiz show Jeopardy! in the first ever human-versus-machine matchup for the show. In a two-game, combined-point match (broadcast in three Jeopardy! episodes during February 14–16), Watson beat Brad Rutter, the biggest all-time money winner on Jeopardy!, and Ken Jennings, the record holder for the longest championship streak (74 consecutive wins). In these episodes, Watson consistently outperformed its human opponents on the game’s signaling device but had trouble responding to a few categories, notably those with short clues containing only a few words. Watson had access to 200 million pages of structured and unstructured content consuming 4 terabytes of disk storage. During the game, Watson was not connected to the Internet.

Meeting the Jeopardy! challenge required advancing and incorporating a variety of question-answering (QA) technologies drawn from text mining and natural language processing, including parsing, question classification, question decomposition, automatic source acquisition and evaluation, entity and relation detection, logical form generation, and knowledge representation and reasoning. Winning at Jeopardy! also required accurately computing confidence in one’s answers. The questions and content are ambiguous and noisy, and none of the individual algorithms is perfect. Therefore, each component must produce a confidence in its output, and the individual component confidences must be combined to compute the overall confidence of the final answer. The final confidence is used to determine whether the computer system should risk answering at all. In Jeopardy! parlance, this confidence determines whether the computer will “ring in” or “buzz in” for a question. The confidence must be computed while the question is being read and before the opportunity to buzz in, which is roughly between 1 and 6 seconds, with an average of around 3 seconds.
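The buzz-in decision described above can be illustrated with a minimal sketch. It is not Watson's actual model: the component names, the weights, the bias, and the threshold below are all hypothetical, and a simple logistic combination stands in for whatever learned combiner the real system used. The sketch only shows the idea that several imperfect component confidences are merged into one overall confidence, which is then compared with a threshold to decide whether to ring in.

```python
import math

# Hypothetical component weights and bias. In a real system these would be
# learned from training data, not set by hand.
WEIGHTS = {"type_match": 2.0, "passage_support": 1.5, "source_reliability": 1.0}
BIAS = -2.5
BUZZ_THRESHOLD = 0.5  # hypothetical risk threshold for ringing in


def combine_confidence(component_scores):
    """Merge per-component confidences (each in [0, 1]) into one overall
    confidence using a weighted sum passed through a logistic function."""
    z = BIAS + sum(WEIGHTS[name] * score for name, score in component_scores.items())
    return 1.0 / (1.0 + math.exp(-z))


def should_buzz(component_scores, threshold=BUZZ_THRESHOLD):
    """Ring in only when the overall confidence reaches the threshold."""
    return combine_confidence(component_scores) >= threshold


# Two illustrative candidate answers: one well supported, one poorly supported.
strong = {"type_match": 0.9, "passage_support": 0.8, "source_reliability": 0.7}
weak = {"type_match": 0.2, "passage_support": 0.1, "source_reliability": 0.3}

if __name__ == "__main__":
    for label, scores in (("strong", strong), ("weak", weak)):
        print(label, round(combine_confidence(scores), 3), should_buzz(scores))
```

With these made-up numbers, the well-supported answer clears the threshold and the poorly supported one does not. Raising the threshold would make the contestant more cautious, and lowering it would make it more aggressive.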

How Does Watson Do It?

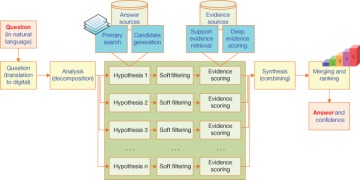

The system behind Watson, which is called DeepQA, is a massively parallel, text mining–focused, probabilistic evidence-based computational architecture. For the Jeopardy! challenge, Watson used more than 100 different techniques for analyzing natural language, identifying sources, finding and generating hypotheses, finding and scoring evidence, and merging and ranking hypotheses. Far more important than any particular technique was how DeepQA combined them, so that overlapping approaches could bring their strengths to bear and contribute to improvements in accuracy, confidence, and speed. DeepQA is an architecture with an accompanying methodology that is not specific to the Jeopardy! challenge. The overarching principles in DeepQA are massive parallelism, many experts, pervasive confidence estimation, and integration of shallow and deep knowledge.

Massive parallelism. Exploit massive parallelism in the consideration of multiple interpretations and hypotheses.

Many experts. Facilitate the integration, application, and contextual evaluation of a range of loosely coupled probabilistic question and content analytics.

Pervasive confidence estimation. No component commits to an answer; all components produce features and associated confidences, scoring different question and content interpretations. An underlying confidence-processing substrate learns how to stack and combine the scores.

Integrate shallow and deep knowledge. Balance the use of strict semantics and shallow semantics, leveraging many loosely formed ontologies.
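The first three principles can be made concrete with a toy sketch. It is an illustration under stated assumptions, not the DeepQA implementation: the question, the candidate answers, the three "expert" scoring functions, and the plain averaging used to merge their scores are all invented for this example. What it does show is candidates being scored concurrently, several loosely coupled experts each contributing a feature without committing to an answer, and a final merging step that ranks the hypotheses.

```python
from concurrent.futures import ThreadPoolExecutor
from statistics import mean

# Hypothetical question and candidate hypotheses, invented for illustration.
QUESTION = {"text": "This city is the capital of France", "answer_type": "city"}
CANDIDATES = [
    {"answer": "Paris", "type": "city", "passages": 12},
    {"answer": "France", "type": "country", "passages": 20},
    {"answer": "Lyon", "type": "city", "passages": 3},
]


# Each "expert" returns a score in [0, 1]; none of them picks an answer alone.
def type_match(question, cand):
    return 1.0 if cand["type"] == question["answer_type"] else 0.0


def passage_support(question, cand):
    # Share of a (made-up) maximum of 20 supporting passages.
    return min(cand["passages"] / 20, 1.0)


def not_in_clue(question, cand):
    # An answer that already appears in the clue is unlikely to be correct.
    return 0.0 if cand["answer"].lower() in question["text"].lower() else 1.0


EXPERTS = [type_match, passage_support, not_in_clue]


def score_candidate(question, cand):
    """Collect one feature per expert for a single hypothesis."""
    features = {expert.__name__: expert(question, cand) for expert in EXPERTS}
    return cand["answer"], features


def rank_candidates(question, candidates):
    """Score all hypotheses concurrently, then merge and rank them.
    Averaging is a stand-in for a learned score combiner."""
    with ThreadPoolExecutor() as pool:
        scored = list(pool.map(lambda c: score_candidate(question, c), candidates))
    merged = [(answer, mean(features.values())) for answer, features in scored]
    return sorted(merged, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    for answer, confidence in rank_candidates(QUESTION, CANDIDATES):
        print(f"{answer}: {confidence:.3f}")
```

In this toy setup, "France" has the most supporting passages but ranks last because the other two experts score it at zero. This is the point of combining many experts rather than trusting any single one.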

Figure 1.6 illustrates the DeepQA architecture at a high level. More technical details about the various architectural components and their specific roles and capabilities can be found in Ferrucci et al. (2010).

FIGURE 1.6 A high-level depiction of the DeepQA architecture.

The Jeopardy! challenge helped IBM address requirements that led to the design of the DeepQA architecture and the implementation of Watson. After three years of intense research and development by a core team of about 20 researchers and a significant amount of R&D money, Watson managed to perform at human expert levels in terms of precision, confidence, and speed on the Jeopardy! quiz show. At that point, perhaps the big question was “so what now?” Was it all for a quiz show? Absolutely not! Once Watson (and the cognitive system behind it) had shown the rest of the world what it could do, it became an inspiration for the next generation of intelligent information systems. For IBM, it was a demonstration of what is possible (and perhaps of what the company is capable of doing) with cutting-edge analytics and computational sciences. The message was clear: if a smart machine can beat the very best humans at what they do best, think about what it can do for your organizational problems. The first industry that utilized Watson was health care, followed by finance, retail, education, public services, and advanced science.