3.3 Training and Testing: Don’t Teach to the Test

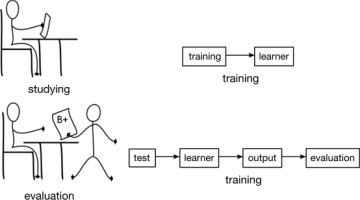

Let’s briefly turn our attention to how we are going to use our data. Imagine you are taking a class (Figure 3.2). Let’s go wild and pretend you are studying machine learning. Besides wanting a good grade, when you take a class to learn a subject, you want to be able to use that subject in the real world. Our grade is a surrogate measure for how well we will do in the real world. Yes, I can see your grumpy faces: grades can be very bad estimates of how well we do in the real world. Well, we’re in luck! We get to try to make good grades that really tell us how well we will do when we get out there to face reality (and, perhaps, our student loans).

FIGURE 3.2 School work: training, testing, and evaluating.

So, back to our classroom setting. A common way of evaluating students is to teach them some material and then test them on it. You might be familiar with the phrase “teaching to the test.” It is usually regarded as a bad thing. Why? Because, if we teach to the test, the students will do better on the test than on other, new problems they have never seen before. They know the specific answers for the test problems, but they’ve missed out on the general knowledge and techniques they need to answer novel problems. Again, remember our goal. We want to do well in the real-world use of our subject. In a machine learning scenario, we want to do well on unseen examples. Our performance on unseen examples is called generalization. If we test ourselves on data we have already seen, we will have an overinflated estimate of our abilities on novel data.

Teachers prefer to assess students on novel problems. Why? Teachers care about how the students will do on new, never-before-seen problems. If students practice on a specific problem and figure out what’s right or wrong about their answer to it, we want that new nugget of knowledge to be something general that they can apply to other problems. If we want to estimate how well a student will do on novel problems, we have to evaluate that student on novel problems. Are you starting to feel bad about studying old exams yet?

I don’t want to get into too many details of too many tasks here. Still, there is one complication I feel compelled to introduce. Many presentations of learning start off using a teach-to-the-test evaluation scheme called in-sample evaluation or training error. That scheme has its uses. However, not teaching to the test is such an important concept in learning systems that I refuse to start you off on the wrong foot! We just can’t take an easy way out. We are going to put on our big girl and big boy pants and do this like adults with a real, out-of-sample or test error evaluation. We can use the test error as an estimate of our ability to generalize to unseen, future examples.
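To make the gap between training error and test error concrete, here is a minimal sketch of a learner that does nothing but memorize. It is not from any library; the toy task (is a number even?) and the names `train`, `test`, `lookup`, `memorizer_predict`, and `error_rate` are all made up for this illustration.

```python
# A deliberately silly learner that only memorizes its training examples.
# Every name here is hypothetical and exists only for this illustration.

# Toy task: is a number even? Each example is an (input, answer) pair.
train = [(n, n % 2 == 0) for n in range(0, 10)]    # examples we study
test = [(n, n % 2 == 0) for n in range(10, 20)]    # examples we never study

# "Learning" is just building a lookup table of the answers we have seen.
lookup = dict(train)

def memorizer_predict(n):
    # Return the memorized answer; for anything new, always guess False.
    return lookup.get(n, False)

def error_rate(examples):
    # Fraction of examples where the prediction disagrees with the answer.
    wrong = sum(memorizer_predict(n) != answer for n, answer in examples)
    return wrong / len(examples)

train_error = error_rate(train)   # in-sample (training) error
test_error = error_rate(test)     # out-of-sample (test) error
print("training error:", train_error)
print("test error:", test_error)
```

The memorizer looks perfect when graded on the examples it studied and is no better than a coin flip on the new ones. Only the second number says anything about generalization.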

Fortunately, sklearn gives us some support here. We’re going to use a tool from sklearn to avoid teaching to the test. The train_test_split function segments our dataset that lives in the Python variable iris. Remember, that dataset has two components already: the features and the target. Our new segmentation is going to split it into two buckets of examples:

- A portion of the data that we will use to study and build up our understanding, and

- A portion of the data that we will use to test ourselves.

We will only study—that is, learn from—the training data. To keep ourselves honest, we will only evaluate ourselves on the testing data. We promise not to peek at the testing data. We started by breaking our dataset into two parts: features and target. Now, we’re breaking each of those into two pieces:

- Features → training features and testing features

- Targets → training targets and testing targets

We’ll get into more details about train_test_split later. Here’s what a basic call looks like:

In [5]:

```python
# simple train-test split
(iris_train_ftrs, iris_test_ftrs,
 iris_train_tgt,  iris_test_tgt) = skms.train_test_split(iris.data,
                                                         iris.target,
                                                         test_size=.25)
print("Train features shape:", iris_train_ftrs.shape)
print("Test features shape:",  iris_test_ftrs.shape)
```

```
Train features shape: (112, 4)
Test features shape: (38, 4)
```

So, our training data has 112 examples described by four features. Our testing data has 38 examples described by the same four attributes.
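Because the split is random, the particular examples that land in each bucket change from run to run. If you want a repeatable split, one option is the `random_state` parameter of `train_test_split`. The sketch below is self-contained, so it repeats the imports and the loading of `iris`; the shortened variable names and the seed value 42 are arbitrary choices for this illustration.

```python
from sklearn import datasets
from sklearn import model_selection as skms

iris = datasets.load_iris()

# random_state fixes the shuffle so the same examples land in each bucket
# every time; 42 is an arbitrary value chosen for this sketch.
(train_ftrs, test_ftrs,
 train_tgt,  test_tgt) = skms.train_test_split(iris.data, iris.target,
                                               test_size=.25,
                                               random_state=42)

# Features and targets are split row-for-row, so the counts line up.
print("Train:", train_ftrs.shape, train_tgt.shape)
print("Test: ", test_ftrs.shape, test_tgt.shape)

# Repeating the call with the same random_state reproduces the split.
again = skms.train_test_split(iris.data, iris.target,
                              test_size=.25, random_state=42)
same_split = bool((again[0] == train_ftrs).all())
print("Same training features again:", same_split)
```

Fixing the seed is handy when you want to compare results across runs. It does not make the test data any less unseen, as long as we still keep our promise not to peek.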

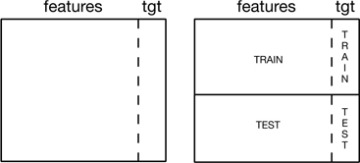

If you’re confused about the two splits, check out Figure 3.3. Imagine we have a box drawn around a table of our total data. We identify a special column and put that special column on the right-hand side. We draw a vertical line that separates that rightmost column from the rest of the data. That vertical line is the split between our predictive features and the target feature. Now, somewhere on the box we draw a horizontal line—maybe three quarters of the way towards the bottom.

FIGURE 3.3 Training and testing with features and a target in a table.

The area above the horizontal line represents the part of the data that we use for training. The area below the line is—you got it!—the testing data. And the vertical line? That single, special column is our target feature. In some learning scenarios, there might be multiple target features, but those situations don’t fundamentally alter our discussion. Often, we need relatively more data to learn from and we are content with evaluating ourselves on somewhat less data, so the training part might be greater than 50 percent of the data and testing less than 50 percent. Typically, we sort data into training and testing randomly: imagine shuffling the examples like a deck of cards and taking the top part for training and the bottom part for testing.
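The deck-of-cards picture can be sketched in a few lines of plain Python. This is only an illustration of the idea, not how sklearn implements `train_test_split`; the stand-in dataset of 20 row numbers, the seed, and the names `deck`, `cut`, `train_rows`, and `test_rows` are all assumptions made for the example.

```python
import random

# Stand-in dataset: 20 hypothetical examples, identified only by row number.
examples = list(range(20))

# Shuffle like a deck of cards; the seed makes the shuffle repeatable.
rng = random.Random(0)
deck = examples[:]          # copy so the original order is left alone
rng.shuffle(deck)

# Take the top three quarters for training and the rest for testing.
cut = int(len(deck) * 0.75)
train_rows = deck[:cut]
test_rows = deck[cut:]

print("training rows:", len(train_rows))
print("testing rows: ", len(test_rows))

# No example appears in both buckets, and none is lost.
overlap = set(train_rows) & set(test_rows)
print("rows in both buckets:", len(overlap))
```

The important property is the last one: every example ends up in exactly one bucket, so nothing we test on was ever studied.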

Table 3.1 lists the pieces and how they relate to the iris dataset. Notice that I’ve used both some English phrases and some abbreviations for the different parts. I’ll do my best to be consistent with this terminology. You’ll find some differences, as you go from book A to blog B and from article C to talk D, in the use of these terms. That isn’t the end of the world and there are usually close similarities. Do take a moment, however, to orient yourself when you start following a new discussion of machine learning.

Table 3.1 Relationship between Python variables and iris data components.

| iris Python variable | Symbol | Phrase |
|---|---|---|
| iris | Dall | (total) dataset |
| iris.data | Dftrs | train and test features |
| iris.target | Dtgt | train and test targets |
| iris_train_ftrs | Dtrain | training features |
| iris_test_ftrs | Dtest | testing features |
| iris_train_tgt | Dtraintgt | training target |
| iris_test_tgt | Dtesttgt | testing target |

One slight hiccup in the table is that iris.data refers to all of the input features. But this is the terminology that scikit-learn chose. Unfortunately, the Python variable name data is sort of like the mathematical x: they are both generic identifiers. data, as a name, can refer to just about any body of information. So, while scikit-learn is using a specific sense of the word data in iris.data, I’m going to use a more specific indicator, Dftrs, for the features of the whole dataset.
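If you want to see what sits behind those names, a quick look at the shapes helps. This is a small, self-contained sketch that reloads the dataset with `load_iris` and inspects the full, unsplit pieces.

```python
from sklearn import datasets

iris = datasets.load_iris()

# iris.data holds the features for every example, train and test alike.
print("features:", iris.data.shape)

# iris.target holds one target value per example.
print("target:  ", iris.target.shape)

# The names that go with the feature columns and the target classes.
print(iris.feature_names)
print(iris.target_names)
```

So `iris.data` really means "all of the features", one row per example and one column per feature, and `iris.target` is the matching column of answers.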