9.8 Tools

In many 3D applications, the use of real-world devices for 3D interaction can lead to increased usability. These devices, or their virtual representations, called tools, provide directness of interaction because of their real-world correspondence. Although individual tools may be used for selection, manipulation, travel, or other 3D interaction tasks, we consider a set of tools in a single application to be a system control technique. Like the tool palettes in many popular 2D drawing applications, tools in 3D UIs provide a simple and intuitive technique for changing the mode of interaction: simply select an appropriate tool.
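
The idea that selecting a tool changes the interaction mode can be sketched in a few lines. The following is a minimal illustration, not an implementation from any particular system; the `ToolSet` class and the tool names are hypothetical and chosen only for this example.

```python
from typing import Callable, Dict, Optional


class ToolSet:
    """Hypothetical tool palette: the selected tool is the interaction mode."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[[str], str]] = {}
        self.active: Optional[str] = None

    def register(self, name: str, action: Callable[[str], str]) -> None:
        # Associate a tool name with the action it performs on a target.
        self._tools[name] = action

    def select(self, name: str) -> None:
        # Selecting a tool is the system control step: it switches the mode.
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        self.active = name

    def use(self, target: str) -> str:
        # The same user action is interpreted according to the active tool.
        if self.active is None:
            raise RuntimeError("no tool selected")
        return self._tools[self.active](target)


tools = ToolSet()
tools.register("grabber", lambda obj: f"manipulate {obj}")
tools.register("eraser", lambda obj: f"delete {obj}")

tools.select("grabber")
first = tools.use("chair")
tools.select("eraser")
second = tools.use("chair")
```

Here the same call, `tools.use("chair")`, yields a manipulation in one mode and a deletion in the other; the only system control command the user issued was the tool selection itself.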

We distinguish between three kinds of tools: physical tools, tangibles, and virtual tools. Physical tools are a collection of real physical objects (with corresponding virtual representations) that are sometimes called props. A physical tool might be used to perform one function only, but it could also perform multiple functions over time. A user accesses a physical tool by simply picking it up and using it. Physical tools are a subset of the larger category of tangible user interfaces (TUIs; Ullmer and Ishii 2001). Physical tools are tangibles that represent a real-world tool, while tangibles in general can also be abstract shapes to which functions are connected. In contrast, virtual tools have no physical instantiation. Here, the tool is a metaphor; users select digital representations of tools, for example by selecting a virtual tool on a tool belt (Figure 9.17).

Figure 9.17 Tool belt menu. Note that the tool belt appears larger than normal because the photograph was not taken from the user’s perspective. (Photograph reprinted from Forsberg et al. [2000], © 2000 IEEE)

9.8.1 Techniques

A wide range of purely virtual tool belts exist, but they are largely undocumented in the literature, with few exceptions (e.g., Pierce et al. 1999). Therefore, in this section we focus on the use of physical tools and TUIs as used for system control in 3D UIs.

Based on the idea of props, a whole range of TUIs has appeared. TUIs often make use of physical tools to perform actions in a VE (Ullmer and Ishii 2001; Fitzmaurice et al. 1995). A TUI uses physical elements that represent a specific kind of action in order to interact with an application. For example, the user could use a real eraser to delete virtual objects or a real pencil to draw in the virtual space. In AR, tools can also take a hybrid form that combines a physical shape with a virtual tool. A commonly used technique is to attach visual markers to generic physical objects so that virtual content can be rendered on top of the physical object. An example can be found in Figure 9.18, where a paddle is used to manipulate objects in a scene (Kawashima et al. 2000).

Figure 9.18 Using tools to manipulate objects in an AR scene. (Kato et al. 2000, © 2000 IEEE)

Figure 9.19 shows a TUI for 3D interaction. Here, Ethernet-linked interaction pads representing different operations are used together with radio frequency identification (RFID)-tagged physical cards, blocks, and wheels, which represent network-based data, parameters, tools, people, and applications. Designed for use in immersive 3D environments as well as on the desktop, these physical devices ease access to key information and operations. When used with immersive VEs, they allow one hand to continuously manipulate a tracking wand or stylus, while the second hand can be used in parallel to load and save data, steer parameters, activate teleconference links, and perform other operations.

Figure 9.19 Visualization artifacts—physical tools for mediating interaction with 3D UIs. (Image courtesy of Brygg Ullmer and Stefan Zachow, Zuse Institute Berlin)
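
The pairing of operation pads with tagged tokens can be pictured as a simple lookup and validation step. The sketch below is purely illustrative and is not the software behind the system shown in Figure 9.19; the tag IDs, token names, pad names, and the `on_tag_read` function are all assumptions made for this example.

```python
from typing import Dict, Set, Tuple

# Hypothetical registry: RFID tag ID -> (kind of token, what it stands for).
TOKENS: Dict[str, Tuple[str, str]] = {
    "04:A1": ("data", "skull_scan"),
    "04:B2": ("parameter", "iso_threshold"),
    "04:C3": ("person", "remote_colleague"),
}

# Hypothetical pads: each pad stands for one operation and accepts
# only certain kinds of tokens.
PAD_ACCEPTS: Dict[str, Set[str]] = {
    "load": {"data"},
    "save": {"data"},
    "steer": {"parameter"},
    "call": {"person"},
}


def on_tag_read(pad: str, tag_id: str) -> str:
    """Turn 'token with tag_id was placed on pad' into a command string."""
    if pad not in PAD_ACCEPTS:
        raise ValueError(f"unknown pad: {pad}")
    if tag_id not in TOKENS:
        return "ignored: unknown tag"
    kind, name = TOKENS[tag_id]
    if kind not in PAD_ACCEPTS[pad]:
        return f"rejected: {pad} pad does not accept {kind} tokens"
    return f"{pad} {name}"


load_cmd = on_tag_read("load", "04:A1")
bad_cmd = on_tag_read("call", "04:B2")
unknown_cmd = on_tag_read("save", "FF:FF")
```

Under these assumptions, the physical act of placing a token on a pad is the entire command: the pad supplies the operation and the token supplies its operand.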

A TUI takes the approach of combining representation and control. This implies the combination of both physical representations and digital representations, or the fusion of input and output in one mediator. TUIs have the following key characteristics (from Ullmer and Ishii 2001):

Physical representations are computationally coupled to underlying digital information.

Physical representations embody mechanisms for interactive control.

Physical representations are perceptually coupled to actively mediated digital representations.

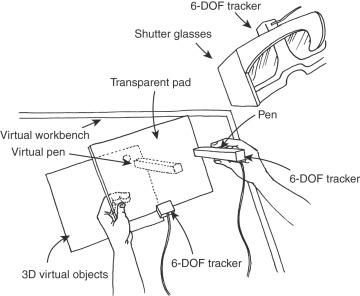

These ideas can also be applied to develop prop-based physical menus. In HWD-based VEs, for example, a tracked pen can be used to select from a virtual menu placed on a tracked tablet (Bowman and Wingrave 2001), which may also be transparent. An example of the latter approach is the Personal Interaction Panel (Schmalstieg et al. 1999), which uses a semitransparent Perspex plate to display a menu, combining VR and AR principles (Figure 9.20). Tablet computers can also be used; a tablet has the advantage of higher accuracy and increased resolution. The principal advantage of displaying a menu on a tablet is the direct haptic feedback to the user who interacts with the menu. This results in far fewer selection problems compared to a menu that simply floats in the VE space.

Figure 9.20 Personal Interaction Panel—combining virtual and augmented reality. (Adapted from Schmalstieg et al. 1999)
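
Selecting from a menu on a tracked tablet with a tracked pen amounts to expressing the pen tip in the tablet's coordinate frame and testing for contact. The following is a minimal sketch under simplifying assumptions: the tablet pose is given as an origin plus three orthonormal axes, menu items are stacked as equal-height rows, and the `Tablet` class, `pick_menu_item` function, and 5 mm contact threshold are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


@dataclass
class Tablet:
    origin: Vec3   # world position of the menu's lower-left corner
    x_axis: Vec3   # unit vector along the tablet's width
    y_axis: Vec3   # unit vector along the tablet's height
    normal: Vec3   # unit vector pointing out of the surface
    width: float   # meters
    height: float  # meters


def pick_menu_item(tablet: Tablet, pen_tip: Vec3, items: Sequence[str],
                   touch_eps: float = 0.005) -> Optional[str]:
    """Return the item under the pen tip, or None if the pen is not touching."""
    d = sub(pen_tip, tablet.origin)
    u = dot(d, tablet.x_axis)   # position across the tablet
    v = dot(d, tablet.y_axis)   # position up the tablet
    h = dot(d, tablet.normal)   # distance from the surface
    if abs(h) > touch_eps:
        return None             # hovering: the surface was not touched
    if not (0.0 <= u < tablet.width and 0.0 <= v < tablet.height):
        return None             # touching outside the menu area
    row = int(v / tablet.height * len(items))
    return items[row]


tablet = Tablet((0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1), 0.3, 0.2)
menu = ["Load", "Save", "Quit"]
touched = pick_menu_item(tablet, (0.1, 0.05, 0.001), menu)
hovering = pick_menu_item(tablet, (0.1, 0.1, 0.05), menu)
```

The contact test is where the physical surface helps: because the tablet stops the pen, the tip stays within the threshold without the user having to judge depth, which is not the case for a menu floating in space.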

9.8.2 Design and Implementation Issues

The form of the tool communicates the function the user can perform with the tool, so carefully consider the form when developing props. A general approach is to imitate a traditional control design (Bullinger et al. 1997). Another approach is to duplicate everyday tools. The user makes use of either the real tool or something closely resembling the tool in order to manipulate objects in a spatial application.

Another important issue is the compliance between the real and virtual worlds; that is, the correspondence between real and virtual positions, shapes, motions, and cause-effect relationships (Hinckley et al. 1994). Some prop-based interfaces, like the Cubic Mouse (Fröhlich and Plate 2000), have demonstrated a need for a clutching mechanism. See Chapter 7 for more information on compliance and clutching in manipulation techniques.
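
Clutching can be illustrated with a simple relative mapping: the virtual object follows the prop only while the clutch is engaged, so the user can release, reposition the prop, and engage again. This is a generic sketch for translation only, not the mechanism of the Cubic Mouse or of any technique in Chapter 7; the `ClutchedProp` class and its method names are hypothetical.

```python
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


class ClutchedProp:
    """Hypothetical prop whose motion moves an object only while engaged."""

    def __init__(self, object_pos: Vec3 = (0.0, 0.0, 0.0)) -> None:
        self.object_pos: Vec3 = object_pos
        self._last_prop_pos: Optional[Vec3] = None  # None means disengaged

    def engage(self, prop_pos: Vec3) -> None:
        # Remember where the prop was when the clutch was engaged.
        self._last_prop_pos = prop_pos

    def release(self) -> None:
        # Decouple the prop; the object keeps its current position.
        self._last_prop_pos = None

    def update(self, prop_pos: Vec3) -> Vec3:
        # While engaged, apply the prop's displacement since the last update.
        if self._last_prop_pos is not None:
            delta = tuple(p - q for p, q in zip(prop_pos, self._last_prop_pos))
            self.object_pos = tuple(o + d for o, d in zip(self.object_pos, delta))
            self._last_prop_pos = prop_pos
        return self.object_pos


prop = ClutchedProp()
prop.engage((0.0, 0.0, 0.0))
prop.update((0.1, 0.0, 0.0))      # object moves 10 cm along x
prop.release()
prop.update((0.0, 0.0, 0.0))      # prop is repositioned; object stays put
prop.engage((0.0, 0.0, 0.0))
final_pos = prop.update((0.1, 0.0, 0.0))  # object moves another 10 cm
```

Note the trade-off this makes visible: after the first release, the prop and the object no longer share the same position, so clutching extends the reachable range at the cost of strict positional compliance.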

The use of props naturally affords eyes-off operation (the user can operate the device by touch), which may have significant advantages, especially when the user needs to focus visual attention on another task. On the other hand, it also means that the prop must be designed to allow tactile interaction. A simple tracked tablet, for example, does not indicate the locations of menu items with haptic cues; it only indicates the general location of the menu.

A specific issue for physical menus is that the user may want to place the menu out of sight when it is not in use. The designer may choose to put a clip on the tablet so that the user can attach it to their clothing, may reserve a special place in the display environment for it, or may simply provide a handle on the tablet so it can be held comfortably at the user’s side.

9.8.3 Practical Application

Physical tools are very specific devices. In many cases, they perform only one function. In applications with a great deal of functionality, tools can still be useful, but they may not apply to all the user tasks. There is a trade-off between the specificity of the tool (a good affordance for its function) and the amount of tool switching the user will have to do.

Public installations of VEs (e.g., in museums or theme parks) can greatly benefit from the use of tools. Users of public installations by definition must be able to use the interface immediately. Tools tend to allow exactly this. A well-designed tool has a readily apparent set of affordances, and users may draw from personal experience with a similar device in real life. Many theme park installations make use of props to allow the user to begin playing right away. For example, the Pirates of the Caribbean installation at DisneyQuest uses a physical steering wheel and cannons. This application has almost no learning curve: even including the vocal introduction, users can start interacting with the environment in less than a minute (Mine 2003).