9.7 Gestural Commands

Gestures were one of the first system control techniques for VEs and other 3D environments. Ever since early projects like Krueger’s Videoplace (Krueger et al. 1985), developers have been fascinated by using the hands as direct input, almost as if one were not using an input device at all. Especially since the seemingly natural use of gestures in the movie Minority Report, many developers have experimented with gestures. Gesture interfaces are often thought of as an integral part of perceptual user interfaces (Turk and Robertson 2000) or natural user interfaces (Wigdor and Wixon 2011). However, designing a gesture-based system that performs well and is easy to learn is one of the most challenging tasks of 3D UI design. While excellent gesture-based interfaces exist for simple task sets that replicate real-world actions (gaming environments such as the Wii or Xbox Kinect are good examples), more complex gestural interfaces for system control are hard to design (LaViola 2013).

Gestural commands can be classified as either postures or gestures. A posture is a static configuration of the hand (Figure 9.11), whereas a gesture is a movement of the hand (Figure 9.12), perhaps while it is in a certain posture. An example of a posture is holding the fingers in a V-like configuration (the “peace” sign), whereas waving and drawing are examples of gestures. The usability of gestures and postures for system control depends on the number and complexity of the gestural commands—more gestures imply more learning for the user.

Figure 9.11 Examples of postures using a Data Glove. (Photograph courtesy of Joseph J. LaViola Jr.)

Figure 9.12 Mimic gesture. (Schkolne et al. 2001; © 2001 ACM; reprinted by permission)
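To make the posture/gesture distinction concrete, the following Python sketch labels a short window of tracked hand positions as a posture when the hand stays nearly still and as a gesture when it moves. It is a minimal illustration under stated assumptions: the function names and the 5 cm `motion_threshold` are hypothetical, positions are assumed to be in metres, and a real system would also inspect finger joint angles to tell one posture from another.

```python
import math
from typing import Sequence, Tuple

Point3 = Tuple[float, float, float]


def spatial_extent(positions: Sequence[Point3]) -> float:
    """Largest distance of any sample from the first sample in the window."""
    return max(math.dist(positions[0], p) for p in positions)


def classify_window(positions: Sequence[Point3],
                    motion_threshold: float = 0.05) -> str:
    """Label a window of hand positions as a static 'posture' or a moving 'gesture'.

    Using the extent from the first sample (rather than total path length)
    keeps small tracker jitter from accumulating into apparent motion.
    motion_threshold is a hypothetical value in metres.
    """
    if len(positions) < 2:
        return "posture"
    return "gesture" if spatial_extent(positions) > motion_threshold else "posture"
```

A wave that returns to its starting point is still labeled a gesture here, because the extent measures how far the hand strayed during the window, not where it ended up.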

9.7.1 Techniques

One of the best examples to illustrate the diversity of gestural commands is Polyshop (later Multigen’s Smart Scene; Mapes and Moshell 1995). In this early VE application, all interaction was specified by postures and gestures, from navigation to the use of menus. For example, the user could move forward by pinching an imaginary rope and pulling herself along it (the “grabbing the air” technique—see Chapter 8, “Travel,” section 8.7.2). As this example shows, system control overlaps with manipulation and navigation in such a 3D UI, since the switch to navigation mode occurs automatically when the user pinches the air. This is lightweight and effective because no active change of mode is performed.
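The implicit mode switch in the rope-pulling example can be sketched as a small per-frame update. This is not Polyshop's implementation; it assumes hypothetical inputs (a hand position expressed in the user's own tracking space and a boolean pinch flag) and simply moves the viewpoint opposite to the hand's displacement while the pinch is held, so that pinching alone puts the user in travel mode.

```python
from dataclasses import dataclass, field
from typing import List, Optional

Vec3 = List[float]


@dataclass
class GrabTheAirTravel:
    """Hypothetical sketch of pinch-to-travel with an implicit mode switch."""
    viewpoint: Vec3 = field(default_factory=lambda: [0.0, 0.0, 0.0])
    _last_hand: Optional[Vec3] = None  # hand position at the previous pinched frame

    def update(self, hand_pos: Vec3, pinching: bool) -> str:
        """Process one frame; hand_pos is assumed to be in the user's tracking space."""
        if not pinching:
            # Releasing the pinch leaves travel mode without any explicit command.
            self._last_hand = None
            return "idle"
        if self._last_hand is not None:
            # Pulling the hand toward the body drags the world past the user,
            # so the viewpoint moves opposite to the hand's displacement.
            for axis in range(3):
                self.viewpoint[axis] -= hand_pos[axis] - self._last_hand[axis]
        self._last_hand = list(hand_pos)
        return "travel"
```

For example, pinching with the hand at z = 0.5 and pulling it back to z = 0.2 advances the viewpoint by 0.3 along +z; releasing the pinch returns the method to "idle" and leaves the viewpoint where it is.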

In everyday life, we use many different types of gestures, which may be combined to generate composite gestures. We identify the following gesture categories, extending the categorizations provided by Mulder (1996) and Kendon (1988):

Mimic gestures: Gestures that are not connected to speech but are directly used to describe a concept. For example, Figure 9.12 shows a gesture in 3D space that defines a curved surface (Schkolne et al. 2001).

Symbolic gestures: Gestures as used in daily life to express things like insults or praise (e.g., “thumbs up”).

Sweeping: Gestures coupled to the use of marking-menu techniques. Marking menus, originally developed for desktop systems and widely used in modeling applications, use pie-like menu structures that can be explored using different sweep-like trajectory motions (Ren and O’Neill 2013).

Sign language: The use of a specified set of postures and gestures in communicating with hearing-impaired people (Fels 1994), or the usage of finger counting to select menu items (Kulshreshth et al. 2014).

Speech-connected hand gestures: Either spontaneous gesticulation performed unintentionally during speech, or language-like gestures that are integrated into the speech performance. A specific type of language-like gesture is the deictic gesture, which is used to indicate a referent (e.g., an object or direction) during speech. Deictic gestures have been studied intensively in HCI and applied to multimodal interfaces such as Bolt’s “put that there” system (Bolt 1980).

Surface-based gestures: Gestures made on multitouch surfaces. Although these are 2D, surface-based gestures (Rekimoto 2002) have been used together with 3D systems to create hybrid interfaces, an example being the Toucheo system displayed in Figure 9.13 (Hachet et al. 2011).

Whole-body interaction: While whole-body gestures (motions) can be mimic or symbolic gestures, we list them here separately due to their specific nature. Instead of using only the hands and possibly the arms (England 2011), users may gesture with other body parts, such as the feet, or even with the whole body (Beckhaus and Kruijff 2004).

Figure 9.13 TOUCHEO—combining 2D and 3D interfaces. (© Inria / Photo H. Raguet).
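For the sweeping category, the core of a marking menu is mapping the direction of a stroke to a pie sector. The sketch below assumes the 3D hand trajectory has already been projected onto a 2D menu plane; the function name, the sector convention (sector 0 centred on the +x direction, counter-clockwise order), and the `min_length` cutoff are hypothetical choices, not those of any particular system cited above.

```python
import math
from typing import Optional, Sequence, Tuple


def marking_menu_select(stroke: Sequence[Tuple[float, float]],
                        items: Sequence[str],
                        min_length: float = 0.05) -> Optional[str]:
    """Map a sweep on the menu plane to one of len(items) equal pie sectors.

    Sector 0 is centred on the +x direction; later sectors follow counter-clockwise.
    Strokes shorter than min_length (hypothetical units) select nothing.
    """
    if len(stroke) < 2 or not items:
        return None
    dx = stroke[-1][0] - stroke[0][0]
    dy = stroke[-1][1] - stroke[0][1]
    if math.hypot(dx, dy) < min_length:
        return None
    width = 2 * math.pi / len(items)
    # Shift by half a sector so each sector is centred on its nominal direction.
    angle = (math.atan2(dy, dx) + width / 2) % (2 * math.pi)
    index = min(int(angle // width), len(items) - 1)
    return items[index]
```

Using only the start and end of the stroke makes the selection insensitive to wobble along the way, which suits the lower accuracy of free-space gestures; a hierarchical menu could apply the same test to each segment of a compound stroke.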

9.7.2 Design and Implementation Issues

The implementation of gestural interaction depends heavily on the input device being used. At a low level, the system needs to be able to track the hand, fingers, and other body parts involved in gesturing (see Chapter 6, “3D User Interface Input Hardware,” section 6.3.2). At a higher level, the postures and motions of the body must be recognized as gestures. Gesture recognition typically makes use of either machine learning or heuristics.
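As one illustration of the recognition step, the sketch below implements a simple nearest-neighbour template matcher, a basic instance-based approach that sits between hand-written heuristics and full machine learning. Each trajectory is resampled to a fixed number of points evenly spaced along its length, translated to its centroid, scaled to unit size, and then compared point by point with stored example trajectories. The class name, the 32-point resampling, and the 0.3 rejection threshold are hypothetical; the threshold lets the recognizer report no match instead of forcing a label onto unintended motion.

```python
import bisect
import math
from typing import Dict, List, Optional, Sequence, Tuple

Point = Tuple[float, float, float]


def resample(path: Sequence[Point], n: int = 32) -> List[Point]:
    """Return n points spaced evenly along the arc length of path."""
    cum = [0.0]  # cumulative arc length at each input sample
    for a, b in zip(path, path[1:]):
        cum.append(cum[-1] + math.dist(a, b))
    total = cum[-1]
    if total == 0.0:
        return [tuple(path[0])] * n
    out: List[Point] = []
    for k in range(n):
        target = total * k / (n - 1)
        # Index of the segment that contains the target arc length.
        j = min(bisect.bisect_right(cum, target) - 1, len(path) - 2)
        span = cum[j + 1] - cum[j]
        t = (target - cum[j]) / span if span > 0 else 0.0
        a, b = path[j], path[j + 1]
        out.append(tuple(a[d] + t * (b[d] - a[d]) for d in range(3)))
    return out


def normalize(points: Sequence[Point]) -> List[Point]:
    """Translate to the centroid and scale uniformly so the farthest point is at distance 1."""
    c = [sum(p[d] for p in points) / len(points) for d in range(3)]
    centred = [tuple(p[d] - c[d] for d in range(3)) for p in points]
    radius = max(math.hypot(*p) for p in centred) or 1.0
    return [tuple(v / radius for v in p) for p in centred]


class TemplateRecognizer:
    """Hypothetical nearest-neighbour recognizer over example trajectories."""

    def __init__(self, n: int = 32, reject_above: float = 0.3) -> None:
        self.n = n
        self.reject_above = reject_above
        self.templates: Dict[str, List[Point]] = {}

    def _prepare(self, path: Sequence[Point]) -> List[Point]:
        return normalize(resample(path, self.n))

    def add_template(self, name: str, path: Sequence[Point]) -> None:
        self.templates[name] = self._prepare(path)

    def recognize(self, path: Sequence[Point]) -> Optional[str]:
        """Return the closest template name, or None if nothing is close enough."""
        if not path or not self.templates:
            return None
        candidate = self._prepare(path)
        best_name, best_score = None, math.inf
        for name, template in self.templates.items():
            # Mean distance between corresponding resampled points.
            score = sum(math.dist(p, q) for p, q in zip(candidate, template)) / self.n
            if score < best_score:
                best_name, best_score = name, score
        return best_name if best_score <= self.reject_above else None
```

Because normalization removes position and size, a short and a long rightward swipe match the same template, while a leftward swipe matches neither a "swipe right" nor a "swipe up" template closely enough and is rejected.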

Gesture recognition is still not always reliable. Calibration may be needed but may not always be possible. When gestural interfaces are used in public installations, recognition should be robust without a calibration phase.

When a menu is accessed via a gestural interface, the lower accuracy of gestures may lead to the need for larger menu items. Furthermore, the layout of menu items (horizontal, vertical, or circular) may have an effect on the performance of menu-based gestural interfaces.

Gesture-based system control shares many of the characteristics of speech input discussed in the previous section. Like speech, a gestural command combines initialization, selection, and issuing of the command. Gestures should be designed to have clear delimiters that indicate the initialization and termination of the gesture. Otherwise, many normal human motions may be interpreted as gestures even though they were not intended as such (Baudel and Beaudouin-Lafon 1993). This is known as the gesture segmentation problem. As with push-to-talk in speech interfaces, the UI designer should ensure, via some implicit or explicit mechanism, that the user really intends to issue a gestural command (this is sometimes called a “push-to-gesture” technique). One option is to disable gestures in certain areas, for example close to controllers or near a monitor (Feiner and Beshers 1990).
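A push-to-gesture scheme with disabled regions can be sketched as a small segmenter that sits in front of a recognizer. In this hypothetical example, a clutch signal (a button, or a posture such as a closed fist, detected elsewhere) marks the start and end of a gesture, and entering an axis-aligned dead zone, such as the space in front of a monitor, aborts any gesture in progress. The class names and the abort-on-entry policy are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float, float]


@dataclass
class Box:
    """Axis-aligned region in which gesturing is disabled (e.g. around a monitor)."""
    lo: Point
    hi: Point

    def contains(self, p: Point) -> bool:
        return all(lo_v <= v <= hi_v for lo_v, v, hi_v in zip(self.lo, p, self.hi))


@dataclass
class GestureSegmenter:
    """Hypothetical clutch-based segmenter: only deliberate motion reaches the recognizer."""
    dead_zones: Sequence[Box] = ()
    _buffer: List[Point] = field(default_factory=list)
    _active: bool = False

    def update(self, hand: Point, clutch_held: bool) -> Optional[List[Point]]:
        """Feed one tracker sample; return a completed gesture when the clutch is released."""
        if any(zone.contains(hand) for zone in self.dead_zones):
            # Hand entered a disabled region: abort any gesture in progress.
            self._active = False
            self._buffer = []
            return None
        if clutch_held:
            # Clutch pressed (start delimiter) or still held: record the sample.
            self._active = True
            self._buffer.append(hand)
            return None
        if self._active:
            # Clutch released: this is the end delimiter, so emit the segment.
            self._active = False
            segment, self._buffer = self._buffer, []
            return segment
        return None
```

Motion made without the clutch, or inside a dead zone, never produces a segment, so ordinary gesticulation cannot trigger commands.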

The available gestures in the system are typically invisible to the user, so users may need to discover the actual gesture or posture language (discoverability). Consequently, the gesture set, in both its size and its composition, should be easy to learn. Depending on how frequently a user works with the application, the total number of gestures may need to be limited to a handful, while the set of gestures for an expert user may be more elaborate. In any case, designers should make sure the cognitive load is reasonable. Finally, the system should provide adequate feedback to the user when a gesture is recognized.

9.7.3 Practical Application

Gestural commands have significant appeal for system control in 3D UIs because of their important role in our day-to-day lives. Choose gestural commands if the application domain already has a set of well-defined, natural, easy-to-understand, and easy-to-recognize gestures. In addition, gestures may be more useful in combination with another type of input (see section 9.9). Keep in mind that gestural interaction can be very tiring, especially for elderly people (Bobeth et al. 2012). Hence, you may need to adjust your choice of system control methods based on the duration of tasks.

Entertainment and video games are just one example of an application domain where 3D gestural interfaces are becoming more common. This trend is evident from the fact that all major video game consoles and the PC support devices that capture 3D motion from a user. In other cases, video games are being used as a research platform for exploring and improving 3D gesture recognition. Figure 9.14 shows an example of using a video game to explore what the best 3D gesture set would be for a first-person navigation game (Norton et al. 2010). A great deal of 3D gesture recognition research has focused on the entertainment and video game domain (Cheema et al. 2013; Bott et al. 2009; Kang et al. 2004; Payne et al. 2006; Starner et al. 2000).

Figure 9.14 A user performing a 3D climbing gesture in a video game application. (Image courtesy of Joseph LaViola).

Medical applications used in operating rooms are another area where 3D gestures have been explored. Using passive sensing enables the surgeon or doctor to use gestures to gather information about a patient on a computer while still maintaining a sterile environment (Bigdelou et al. 2012; Schwarz et al. 2011). 3D gesture recognition has also been explored with robotic applications in the human-robot interaction field. For example, Pfeil et al. (2013) used 3D gestures to control unmanned aerial vehicles (UAVs; Figure 9.15). They developed and evaluated several 3D gestural metaphors for teleoperating the robot. Williamson et al. (2013) developed a full-body gestural interface for dismounted soldier training, while Riener (2012) explored how 3D gestures could be used to control various components of automobiles. Finally, 3D gesture recognition has recently been explored in consumer electronics, specifically for control of large-screen smart TVs (Lee et al. 2013; Takahashi et al. 2013).

Figure 9.15 A user controlling an unmanned aerial vehicle (UAV) with 3D gestures. (Image courtesy of Joseph LaViola).

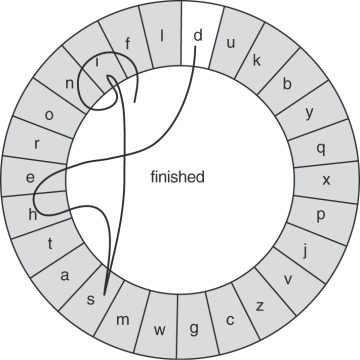

Gesture interfaces have also been used for symbolic input. Figure 9.16 shows an example of such an interface, in which pen-based input is used. These techniques can operate at both the character level and the word level. For example, in Cirrin, a stroke begins in the central area of a circle and moves through the regions around the circle that represent the successive characters of a word (Figure 9.16).

Figure 9.16 Layout of the Cirrin soft keyboard for pen-based input. (Mankoff and Abowd 1998; © 1998 ACM; reprinted by permission)
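The Cirrin-style word-level input described above can be sketched as a decoder that turns a pen stroke into a word. This is an approximation, not Mankoff and Abowd's implementation: the alphabetical `layout` string, the unit-circle coordinates, and the 0.3 inner radius are hypothetical, and the real keyboard uses its own character ordering. A character is emitted whenever the stroke enters a ring sector from the central area or from a different sector, so a repeated letter requires passing back through the centre.

```python
import math
from typing import List, Optional, Sequence, Tuple


def decode_ring_stroke(stroke: Sequence[Tuple[float, float]],
                       layout: str,
                       inner_radius: float = 0.3) -> str:
    """Decode a pen stroke on a hypothetical Cirrin-style ring keyboard into a word.

    Points are in keyboard coordinates centred on the circle. The ring outside
    inner_radius is split into len(layout) equal sectors; sector 0 starts at
    angle 0 and sectors proceed counter-clockwise.
    """
    if not layout:
        return ""
    width = 2 * math.pi / len(layout)
    word: List[str] = []
    current: Optional[int] = None  # sector under the pen; None while in the centre
    for x, y in stroke:
        if math.hypot(x, y) < inner_radius:
            current = None  # back in the centre: the next sector entered counts again
            continue
        sector = min(int((math.atan2(y, x) % (2 * math.pi)) // width), len(layout) - 1)
        if sector != current:
            word.append(layout[sector])
            current = sector
    return "".join(word)
```

Note that in this sketch, sliding along the ring selects every sector the pen passes through, which is why strokes should cut back through the centre between non-adjacent letters.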