9.11 Case Studies

System control issues are critical to both of our case studies. If you have not yet read the introduction to the case studies, take a look at section 2.4 before reading this section.

9.11.1 VR Gaming Case Study

Given the description so far of our VR action-adventure game, you might think that the game is purely about direct interaction with the world and that there are no system control tasks to consider. As in most real applications, however, there are a variety of small commands and settings that the player needs to control, such as saving a game to finish later, loading a saved game, pausing the game, and choosing a sound effects volume. Since these actions will happen only rarely and are not part of the game world itself, a simple 3D graphical point-and-click menu such as those described in section 9.5 will be sufficient. Pointing can be done with the dominant hand’s controller, acting as a virtual laser pointer.

There are, however, two more prominent system control tasks that will occur often, and are more central to the gameplay. The first of these tasks is opening the inventory, so a collected item can be placed in it or so an item can be chosen out of it. An inventory (set of items that are available to the player) is a concept with a straightforward real-world metaphor: a bag or backpack. We can fit this concept into our story by having the hero (the player) come into the story already holding the flashlight and a shopping bag. The shopping bag can be shown hanging from the nondominant hand (the same hand holding the flashlight).

To open the bag (inventory), the player moves the dominant hand close to the bag’s handle (as if he’s going to pull one side of the bag away from the other). If the player is already holding an object in the dominant hand, he can press a button to drop it into the bag, which can then automatically close. This is essentially a “virtual tool” approach to system control (section 9.8), which is appropriate since we want our system control interface to integrate directly into the world of the game.
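
The open-and-drop behavior described above can be sketched as a per-frame update. This is only an illustration under stated assumptions: the `Bag` class, the `update` signature, and the 15 cm `OPEN_RADIUS_M` threshold are hypothetical names and values, not part of the game design itself.

```python
import math
from dataclasses import dataclass, field

OPEN_RADIUS_M = 0.15  # assumed threshold: hand within 15 cm of the handle


@dataclass
class Bag:
    """Inventory bag hanging from the nondominant hand (illustrative only)."""
    items: list = field(default_factory=list)
    is_open: bool = False

    def update(self, hand_pos, handle_pos, held_item=None, drop_pressed=False):
        """Per-frame update; returns the item still held in the dominant hand."""
        # The bag is open while the dominant hand is near the handle.
        self.is_open = math.dist(hand_pos, handle_pos) <= OPEN_RADIUS_M
        if self.is_open and held_item is not None and drop_pressed:
            self.items.append(held_item)  # drop the held item into the bag
            self.is_open = False          # the bag closes automatically after a drop
            return None
        return held_item
```

Calling `update` once per frame with the tracked hand and handle positions keeps the open state tied to proximity, so no separate "open inventory" command is needed.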

If the player wants to grab an item out of the inventory, it might be tricky to see all the items in the bag from outside (especially since, as in many games of this sort, the inventory will magically be able to hold many items, some of which might be larger than the bag itself). To address this, we again draw inspiration from the game Fantastic Contraption, which provides a menu that’s accessed when the player grabs a helmet and puts it over his head, placing the player in a completely different menu world. Similarly, we allow the player to put his head inside the bag, which then grows very large, so that the player is now standing inside the bag, with all the items arrayed around him (a form of graphical menu), making it easy to select an object using the selection techniques described in section 7.12.1. After an item is selected, the bag shrinks back to normal size and the player finds himself standing in the virtual room again.

The second primary in-game system control task is choosing a tool to be used with the tool handle on the player’s dominant hand. As we discussed in the selection and manipulation chapter, the user starts with a remote selection tool (the “frog tongue”), but in order to solve the puzzles and defeat the monsters throughout the game, we envision that many different tools might be acquired. So the question is how to select the right tool mode at the right time. Again, we could use a very physical metaphor here, like the virtual tool belt discussed in section 9.8, where the player reaches to a location on the belt to swap one tool for another one. But this action is going to occur quite often in the game and will sometimes need to be done very quickly (e.g., when a monster is approaching and the player needs to get the disintegration ray out NOW). So instead we use the metaphor of a Swiss army knife. All the tools are always attached to the tool handle, but only one is out and active at a time. To switch to the next tool, the player just flicks the controller quickly up and down. Of course, this means that the player might have to toggle through several tool choices to get to the desired tool, but if there aren’t too many (perhaps a maximum of four or five), this shouldn’t be a big problem. Players will quickly memorize the order of the tools, and we can also use sound effects to indicate which tool is being chosen, so switching can be done eyes-free.
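
A minimal sketch of the Swiss army knife toggle follows. It assumes the controller reports a vertical velocity each frame; the class names, the speed threshold, and the timing window are hypothetical values chosen for illustration, and the third tool in the usage note is a placeholder.

```python
from dataclasses import dataclass


@dataclass
class ToolHandle:
    """Swiss-army-knife style handle: one active tool, cycled in a fixed order."""
    tools: list
    active_index: int = 0

    @property
    def active_tool(self):
        return self.tools[self.active_index]

    def next_tool(self):
        # Wrap around so repeated flicks rotate through every tool.
        self.active_index = (self.active_index + 1) % len(self.tools)
        return self.active_tool


class FlickDetector:
    """Detects a quick up-then-down motion from vertical controller velocity."""

    def __init__(self, speed_threshold=1.5, max_gap_s=0.25):
        self.speed_threshold = speed_threshold  # m/s, assumed value
        self.max_gap_s = max_gap_s              # max time between up and down, assumed
        self._up_time = None

    def update(self, vertical_velocity, t):
        """Feed one sample; returns True on the sample that completes a flick."""
        if vertical_velocity >= self.speed_threshold:
            self._up_time = t  # remember the most recent fast upward motion
            return False
        if vertical_velocity <= -self.speed_threshold and self._up_time is not None:
            completed = (t - self._up_time) <= self.max_gap_s
            self._up_time = None
            return completed
        return False
```

In use, the game loop would call `detector.update(velocity, time)` each frame and call `handle.next_tool()` whenever it returns `True`, for example on a handle built as `ToolHandle(["frog tongue", "disintegration ray", "placeholder tool"])`. Because the order never changes, the number of flicks needed to reach a given tool is predictable, which is what makes eyes-free switching plausible.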

Key Concepts

Think differently about the design of system control that’s part of gameplay and system control that’s peripheral to gameplay.

When there are few options to choose from, a toggle that simply rotates through the choices is an acceptable alternative to direct selection, and may even be faster.

System control doesn’t have to be boring, but be careful not to make it too heavyweight.

9.11.2 Mobile AR Case Study

While many AR applications tend to have lower functional complexity, the HYDROSYS application provided access to a wider range of functions. The process of designing adequate system control methods revealed issues that were particular to AR applications but also showed similarities to difficulties in VR systems.

As with all 3D systems, system control is highly dependent on the display type and input method. In our case, we had a 5.6” screen on the handheld device, with a 1024 × 600 resolution, and users provided input to the screen using finger-touch or a pen. The interface provided access to four different task categories: data search, general tools, navigation, and collaboration tools. As each category included numerous functions, showing all at once was not a viable approach: either the menu system would overlap most of the augmentations, or the menus would be illegible. Thus, we had to find a screen-space-effective method that would not occlude the environment.

We created a simple but suitable solution by separating the four groups of tasks and assigning each task group an access button in one of the corners. When a group was not activated, its button was shown as a transparent icon displaying the name of the task group, which limited occlusion of the augmented content. The icon did have legibility issues with certain backgrounds (see the bottom of Figure 9.22), a problem typical of AR interfaces (Kruijff et al. 2010). This was not a major problem, since users could easily memorize the content of the four icons.
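
The corner layout can be sketched as a small geometry helper. The screen resolution and the four task group names come from the description above; the button size, the assignment of groups to particular corners, and the function names are assumptions made for illustration only.

```python
SCREEN_W, SCREEN_H = 1024, 600   # resolution stated in the text
BUTTON_W, BUTTON_H = 160, 48     # assumed button size in pixels

TASK_GROUPS = ["data search", "general tools", "navigation", "collaboration tools"]


def corner_buttons(groups, screen_w=SCREEN_W, screen_h=SCREEN_H,
                   button_w=BUTTON_W, button_h=BUTTON_H):
    """Return {group: (x, y, w, h)} with one button per screen corner."""
    if len(groups) != 4:
        raise ValueError("exactly four task groups are expected, one per corner")
    right, bottom = screen_w - button_w, screen_h - button_h
    # Corner order (top-left, top-right, bottom-left, bottom-right) is an assumption.
    origins = [(0, 0), (right, 0), (0, bottom), (right, bottom)]
    return {g: (x, y, button_w, button_h) for g, (x, y) in zip(groups, origins)}


def hit_test(layout, touch_x, touch_y):
    """Return the task group whose button contains the touch point, else None."""
    for group, (x, y, w, h) in layout.items():
        if x <= touch_x < x + w and y <= touch_y < y + h:
            return group
    return None
```

Keeping the buttons in the corners leaves the center of the view, where most augmentations appear, free of interface elements.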

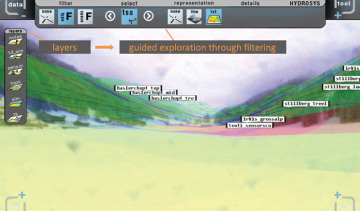

Figure 9.22 Example of a menu bar, in this case the data search menu, using the guided exploration approach. (Image courtesy of Ernst Kruijff and Eduardo Veas.)

Once a menu button was selected, a menu would appear at one of the four borders of the screen, leaving the center of the screen visible. In this way, users could have a menu open while viewing the augmented content. We used straightforward 2D menus with icons, designed to be highly legible under the different lighting conditions that occur in outdoor environments. Menu icons combined images and text, as some functions could not be easily conveyed by an image alone. In some cases, we could not avoid pop-up lists of choices (only a limited number of icons could be shown on a horizontal bar), but most of the menus were space-optimized. One particular trick we used was the filtering of menu options. In the data search menu bar (see the top of Figure 9.22), we used the principle of guided exploration. For example, certain types of visualizations were only available for certain types of sensor data: when a user selected a sensor data type, in the next step, only the appropriate visualization methods were shown, saving space and improving performance along the way.
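
The guided exploration filter can be illustrated with a lookup table that maps each sensor data type to the visualizations that apply to it. The sensor types, the visualization names, and the `menu_options` function below are hypothetical placeholders and are not taken from the HYDROSYS implementation.

```python
# Hypothetical compatibility table: sensor data type -> applicable visualizations.
COMPATIBLE_VISUALIZATIONS = {
    "temperature": ["color map", "time-series plot"],
    "wind": ["arrow field", "time-series plot"],
    "snow depth": ["color map"],
}


def menu_options(selected_sensor_type=None):
    """Return the options to show in the next step of the menu bar."""
    if selected_sensor_type is None:
        # Step 1: nothing chosen yet, so offer the sensor data types.
        return sorted(COMPATIBLE_VISUALIZATIONS)
    # Step 2: offer only the visualizations valid for the chosen data type.
    return list(COMPATIBLE_VISUALIZATIONS.get(selected_sensor_type, []))
```

Because the second step shows only the entries compatible with the first choice, the menu bar needs fewer icons at any one time than a flat list of every visualization would.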

Key Concepts

Perceptual issues: Visibility and legibility shape the design of AR system control much as they shape general 2D menu design. However, their effects are often stronger, since AR interfaces are highly affected by display quality and outdoor conditions.

Screen space: As screen space is often limited, careful design is needed to optimize the layout of system control methods to avoid occlusion of the augmentations.