Examples

In this section, we continue our exploration of AR by examining a set of examples, which showcase both AR technology and applications of that technology. We begin with application domains in which AR technologies demonstrated early success—namely, industry and construction. These examples are followed by applications in maintenance and training, and in the medical domain. We then discuss examples that focus on individuals on the move: personal information display and navigational support. Finally, we present examples illustrating how large audiences can be supported by AR using enhanced media channels in, for example, television, online commerce, and gaming.

Industry and Construction

As mentioned in our brief historical overview of AR, some of the first actual applications motivating the use of AR were industrial in nature, such as Boeing’s wire bundle assembly needs and early maintenance and repair examples.

Industrial facilities are becoming increasingly complex, which profoundly affects their planning and operation. Architectural structures, infrastructure, and machines are planned using computer-aided design (CAD) software, but typically many alterations are made during actual construction and installation. These alterations usually do not find their way back into the CAD models. In addition, there may be a large body of legacy structures predating the introduction of CAD for planning as well as the need for frequent changes of the installations—for example, when a factory is adapted for the manufacturing of a new product. Planners would like to compare the “as planned” to the “as is” state of a facility and identify any critical deviations. They would also like to obtain a current model of the facility, which can be used for planning, refurbishing or logistics procedures.

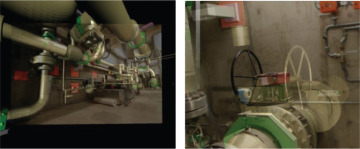

Traditionally, this is done with 3D scanners and off-site data integration and comparison. This process is lengthy and tedious, however, and it results in low-level models consisting of point clouds. AR offers the opportunity to perform on-site inspection, bringing the CAD model to the facility rather than the reverse. Georgel et al. [2007], for example, have developed a technique for still-frame AR that extracts the camera pose from perspective cues in a single image and overlays registered, transparently rendered CAD models (Figure 1.12).

Figure 1.12 AR can be used for discrepancy analysis in industrial facilities. These images show still frames overlaid with CAD information. Note how the valve on the right-hand side was mounted on the left side rather than on the right side as in the model. Courtesy of Nassir Navab.

Schönfelder and Schmalstieg [2008] have proposed a system based on the Planar (Figure 1.13), an AR display on wheels with external tracking. It provides fully interactive, real-time discrepancy checking for industrial facilities.

Figure 1.13 The Planar is a touchscreen display on wheels (left), which can be used for discrepancy analysis directly on the factory floor (right). Courtesy of Ralph Schönfelder.

Utility companies rely on geographic information systems (GIS) for managing underground infrastructure, such as telecommunication lines or gas pipes. The precise locations of the underground assets are required in a variety of situations. For example, construction managers are legally obliged to obtain information on underground infrastructure, so that they can avoid any damage to these structures during excavations. Likewise, locating the reason for outages or updating outdated GIS information frequently requires on-site inspection. In all these cases, presenting an AR view that is derived from the GIS and directly registered on the target site can significantly improve the precision and speed of outdoor work [Schall et al. 2008]. Figure 1.14 shows Vidente, one such outdoor AR visualization system.

Figure 1.14 Tablet computer with differential GPS system for outdoor AR (left). Geo-registered view of a virtual excavation revealing a gas pipe (right). Courtesy of Gerhard Schall.

Camera-bearing micro-aerial vehicles (drones) are increasingly being used for airborne inspection and reconstruction of construction sites. These drones may have some degree of autonomous flight control, but always require a human operator. AR can be extremely useful in locating the drone (Figure 1.15), monitoring its flight parameters such as position over ground, height, or speed, and alerting the operator to potential collisions [Zollmann et al. 2014].

Figure 1.15 While the drone has flown far away and is barely visible, its position can be visualized using a spherical AR overlay. Courtesy of Stefanie Zollmann.

Maintenance and Training

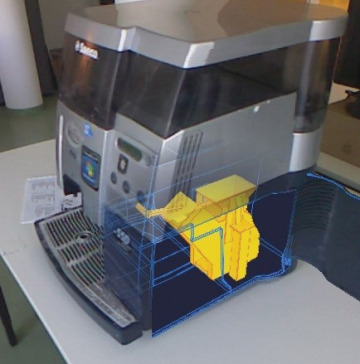

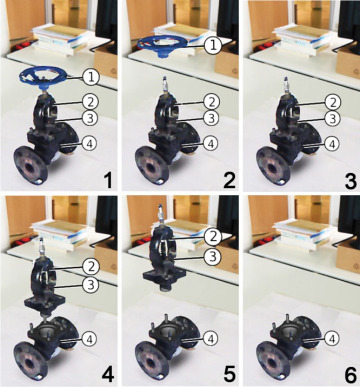

Understanding how things work, and learning how to assemble, disassemble, or repair them, is an important challenge in many professions. Maintenance engineers often devote a large amount of time to studying manuals and documentation, since it is often impossible to memorize all procedures in detail. AR, however, can present instructions directly superimposed in the field of view of the worker. This can provide more effective training, but, more importantly, allows personnel with less training to correctly perform the work. Figure 1.16 reveals how AR can assist with the removal of the brewing unit of an automatic coffee maker, and Figure 1.17 shows the disassembly sequence for a valve [Mohr et al. 2015].

Figure 1.16 Ghost visualization revealing the interior of a coffee machine to guide end-user maintenance. Courtesy of Peter Mohr.

Figure 1.17 Automatically generated disassembly sequence of a valve. Courtesy of Peter Mohr.

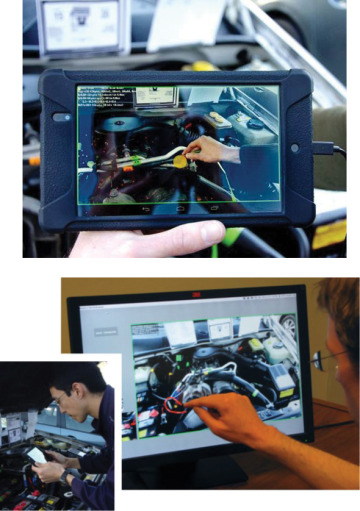

If human support is sought, AR can provide a shared visual space for live mobile remote collaboration on physical tasks [Gauglitz et al. 2014a]. With this approach, a remote expert can explore the scene independently of the local user’s current camera position and can communicate via spatial annotations that are immediately visible to the local user in the AR view (Figure 1.18). This can be achieved with real-time visual tracking and reconstruction, eliminating the need for preparation or instrumentation of the environment. AR telepresence combines the benefits of live video conferencing and remote scene exploration into a natural collaborative interface.

Figure 1.18 A car repair scenario assisted by a remote expert via AR telepresence on a tablet computer (top). The remote expert can draw hints directly on the 3D model of the car that is incrementally transmitted from the repair site (bottom). Courtesy of Steffen Gauglitz.

Medical

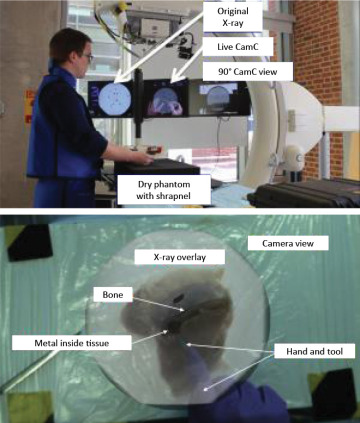

The use of X-ray imaging revolutionized diagnostics by allowing physicians to see inside a patient without performing surgery. However, conventional X-ray and computed tomography devices separate the interior view from the exterior view of the patient. AR integrates these views, enabling the physician to see directly inside the patient. One such example, which is now commercially available, is the Camera Augmented Mobile C-arm, or CamC (Figure 1.19). A mobile C-arm is used to provide X-ray views in the operating theater. CamC extends these views with a conventional video camera, which is arranged coaxially with the X-ray optics to deliver precisely registered image pairs [Navab et al. 2010]. The physician can transition and blend between the inside and outside views as desired. CamC has many clinical applications, including guiding needle biopsies and facilitating orthopedic screw placement.

Figure 1.19 The CamC is a mobile C-arm, which allows a physician to seamlessly blend between a conventional camera view and X-ray images. Courtesy of Nassir Navab.
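Blending between the inside and outside views can be thought of as a per-pixel linear interpolation of two images that are already registered to each other. The following is a minimal sketch of that idea only, not the CamC implementation: the function name `blend`, the `alpha` parameter, and the representation of images as nested lists of grayscale values are assumptions made for illustration.

```python
def blend(video, xray, alpha):
    """Linearly blend two registered grayscale images of equal size.

    Images are nested lists of intensities in 0..255 (a hypothetical format).
    alpha = 0.0 shows only the video image; alpha = 1.0 shows only the X-ray.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    if len(video) != len(xray) or any(len(v) != len(x) for v, x in zip(video, xray)):
        raise ValueError("images must have the same dimensions")
    # Because the two images are assumed to be registered, pixel (row, col)
    # in one corresponds to the same pixel (row, col) in the other.
    return [
        [round((1.0 - alpha) * v + alpha * x) for v, x in zip(video_row, xray_row)]
        for video_row, xray_row in zip(video, xray)
    ]
```

Sweeping `alpha` from 0 to 1 would correspond to a gradual transition from the exterior view to the interior view. The simple blend is meaningful only because the coaxial arrangement delivers image pairs that need no further alignment.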

Personal Information Display

As we have seen, several specific application domains can profit from the use of AR technology. But can this technology be applied more broadly to support larger audiences in completing everyday tasks? Today, this question is being answered with a resounding “yes.” A large variety of AR browser apps are already available on smartphones (e.g., Layar, Wikitude, Junaio, and others). These apps are intended to deliver information related to places of interest in the user’s environment, superimposed over the live video from the device’s camera. The places of interest are either given in geo-coordinates and identified via the phone’s sensors (GPS, compass readings) or identified by image recognition. AR browsers have obvious limitations, such as potentially poor GPS accuracy and augmentation capabilities only for individual points rather than full objects. Nevertheless, thanks to the proliferation of smartphones, these apps are universally available, and their use is growing, owing to the social networking capabilities built into the AR browsers. Figure 1.20 shows the AR browser Yelp Monocle, which is integrated into the social business review app Yelp.

Figure 1.20 AR browsers such as Yelp Monocle superimpose points of interest on a live video feed.
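How a sensor-based AR browser might decide where to draw a label can be sketched in a few lines. This is an illustration under stated assumptions, not the method of any particular app: it assumes the device reports latitude, longitude, and a compass heading, and that the camera has a known horizontal field of view. The function names, the 60° field of view, and the 1080-pixel screen width are hypothetical.

```python
import math

def bearing_deg(lat1, lon1, lat2, lon2):
    """Initial great-circle bearing from point 1 to point 2, in degrees clockwise from north."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlon = math.radians(lon2 - lon1)
    y = math.sin(dlon) * math.cos(phi2)
    x = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlon)
    return math.degrees(math.atan2(y, x)) % 360.0

def screen_x(user_lat, user_lon, heading_deg, poi_lat, poi_lon,
             fov_deg=60.0, width_px=1080):
    """Horizontal pixel position of a place-of-interest label, or None if it is out of view."""
    # Signed angle between the viewing direction and the direction to the
    # place of interest, wrapped into the range [-180, 180).
    bearing = bearing_deg(user_lat, user_lon, poi_lat, poi_lon)
    offset = (bearing - heading_deg + 180.0) % 360.0 - 180.0
    if abs(offset) > fov_deg / 2.0:
        return None
    # Pinhole camera model: the image coordinate grows with tan(offset).
    half_width = width_px / 2.0
    scale = math.tan(math.radians(offset)) / math.tan(math.radians(fov_deg / 2.0))
    return half_width + half_width * scale
```

With these assumed values, a compass error of 3° moves a label near the screen center by roughly 50 pixels, which gives a sense of why sensor accuracy limits this style of augmentation.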

Another compelling use case for AR browsing is simultaneous translation of foreign languages. This utility is now widely available in the Google Translate app (Figure 1.21). The user just has to select the target language and point the device camera toward the printed text; the translation then appears superimposed over the image.

Figure 1.21 Google Translate superimposes spontaneous translations of text, recognized in real time, over the camera image.

Navigation

The idea of heads-up navigation, which does not distract the operator of a vehicle moving at high speeds from the environment ahead, was first considered in the context of military aircraft [Furness 1986]. A variety of see-through displays, which can be mounted to the visor of a pilot’s helmet, have been developed since the 1970s. These devices, which are usually called heads-up displays, are mostly intended to show nonregistered information, such as the current speed or torque, but can also be used to show a form of AR. Military technology, however, is usually not directly applicable to the consumer market, which demands different ergonomics and pricing structures.

With improved geo-information, it has become possible to overlay larger structures on in-car navigation systems, such as road networks. Figure 1.22 shows Wikitude Drive, a first-person car navigation system. The driving instructions are overlaid on top of the live video feed rather than being presented in a map-like view. The registration quality in this system is acceptable despite being based on smartphone sensors such as GPS, as the inertia of a car allows the system to predict the geography ahead with relative accuracy.

Figure 1.22 Wikitude Drive superimposes a perspective view of the road ahead. Courtesy of Wikitude GmbH.

Figure 1.23 shows a parking assistant, which overlays a graphical visualization of the car trajectory onto the view of a rear-mounted camera.

Figure 1.23 The parking assistant is a commercially available AR feature in many contemporary cars. Courtesy of Brigitte Ludwig.
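One simple way to obtain a trajectory for such an overlay is a kinematic bicycle model, in which a fixed steering angle makes the car follow a circular arc. The sketch below is an assumption for illustration and is not taken from any production parking assistant; the function name, the parameters, and the default values are hypothetical. It computes points on the ground plane only, and a real system would still need to project them into the camera image.

```python
import math

def predicted_path(wheelbase_m, steering_deg, length_m=3.0, steps=6):
    """Ground-plane points along the predicted path of the rear axle.

    Each point is (lateral offset, distance along the initial direction of
    travel), in meters, with the car starting at the origin.
    """
    delta = math.radians(steering_deg)
    points = []
    for i in range(1, steps + 1):
        s = length_m * i / steps  # arc length travelled so far
        if abs(delta) < 1e-6:
            # Wheels straight: the path is a straight line.
            points.append((0.0, s))
        else:
            # Bicycle model: turning radius R = wheelbase / tan(steering angle).
            radius = wheelbase_m / math.tan(delta)
            theta = s / radius  # angle swept along the arc
            points.append((radius * (1.0 - math.cos(theta)), radius * math.sin(theta)))
    return points
```

A positive steering angle bends the path toward positive lateral offsets and a negative angle mirrors it, so the overlay can be redrawn whenever the steering wheel moves.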

Television

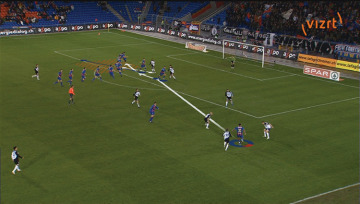

Many people likely first encountered AR as annotations to live camera footage brought to their homes via broadcast TV. The first and most prominent example of this concept is the virtual 1st & 10 line in American football, indicating the yardage needed for a first down, which is superimposed directly on the TV broadcast of a game. While the idea and first patents for creating such on-field markers for football broadcasts date back to the late 1970s, it took until 1998 for the concept to be realized. The same concept of annotating TV footage with virtual overlays has successfully been applied to many other sports, including baseball, ice hockey, car racing, and sailing. Figure 1.24 shows a televised soccer game with augmentations. The audience in this incarnation of AR has no ability to vary the viewpoint individually. Given that the live action on the playing field is captured by tracked cameras, interactive viewpoint changes are still possible, albeit not under the end-viewer’s control.

Figure 1.24 Augmented TV broadcast of a soccer game. Courtesy of Teleclub and Vizrt, Switzerland (LiberoVision AG).

Several competing companies provide augmentation solutions for various broadcast events, creating convincing and informative live annotations. The annotation possibilities have long since moved beyond just sports information or simple line graphics, and now include sophisticated 3D graphics renderings of branding logos or product advertisements.

Using similar technology, it is possible—and, in fact, common in today’s TV broadcasts—to present a moderator and other TV personalities in virtual studio settings. In this application, the moderator is filmed by tracked cameras in front of a green screen and inserted into a virtual rendering of the studio. The system even allows for interactive manipulation of virtual props.
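The insertion step in such a virtual studio can be illustrated with a simple chroma key: every pixel that looks like the green backdrop is replaced by the corresponding pixel of the rendered studio. The sketch below is deliberately naive and makes several assumptions: the threshold test, its parameter values, and the representation of images as nested lists of RGB tuples are hypothetical, and the virtual studio image is taken as already rendered from the tracked camera's viewpoint. Broadcast systems compute far more careful mattes.

```python
def is_green_screen(pixel, dominance=1.5, min_green=100):
    """Return True if an (r, g, b) pixel is bright and clearly dominated by green."""
    r, g, b = pixel
    return g >= min_green and g > dominance * max(r, b)

def composite(foreground, virtual_studio):
    """Replace green-screen pixels of the camera image with the rendered studio.

    Both images are nested lists of (r, g, b) tuples with the same dimensions.
    """
    return [
        [bg if is_green_screen(fg) else fg for fg, bg in zip(fg_row, bg_row)]
        for fg_row, bg_row in zip(foreground, virtual_studio)
    ]
```

A hard per-pixel decision like this produces jagged edges around hair and semi-transparent objects, which is one reason practical keyers blend foreground and background with a soft matte instead.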

Similar technologies are being used in the film industry, such as for providing a movie director and actors with live previews of what a film scene might look like after special effects or other compositing has been applied to the camera footage of a live set environment. This application of AR is sometimes referred to as Pre-Viz.

Advertising and Commerce

The ability of AR to instantaneously present arbitrary 3D views of a product to a potential buyer is already being welcomed in advertising and commerce. This technology can lead to truly interactive experiences for the customer. For example, customers in Lego stores can hold a toy box up to an AR kiosk, which then displays a 3D image of the assembled Lego model. Customers can turn the box to view the model from any vantage point.

An obvious target for AR is the augmentation of printed material, such as flyers or magazines. Readers of the Harry Potter novels know how pictures in the Daily Prophet newspaper come alive. This idea can be realized with AR by superimposing digital movies and animations on top of specific portions of a printed template. When the magazine is viewed on a computer or smartphone, the static pictures are replaced by animated sequences or movies (Figure 1.25).

Figure 1.25 The lifestyle magazine Red Bulletin was the first print publication to feature dynamic content using AR. Courtesy of Daniel Wagner.

AR can also be helpful for a salesperson who is trying to demonstrate the virtues of a product (Figure 1.26). Especially for complex devices, it may be difficult to convey the internal operation with words alone. Letting a potential customer observe the animated interior allows for much more compelling presentations at trade shows and in showrooms alike.

Figure 1.26 Marketing presentation of a Waeco air-conditioning service unit. Courtesy of magiclensapp.com.

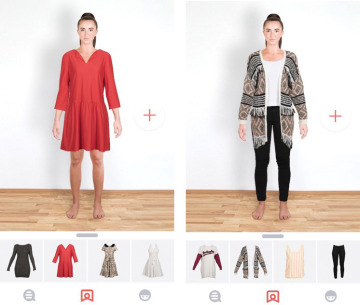

Pictofit is a virtual dressing room application that lets users preview garments from online fashion stores on their own body (Figure 1.27). The garments are automatically adjusted to match the wearer’s size. In addition, body measurements are estimated and made available to assist in the entry of purchase data.

Figure 1.27 Pictofit can extract garment images from online shopping sites and render them to match an image of the customer. Courtesy of Stefan Hauswiesner, ReactiveReality.

Games

One of the first commercial AR games was The Eye of Judgment, an interactive trading card game for the Sony PlayStation 3. The game is delivered with an overhead camera, which picks up game cards and summons corresponding creatures to fight matches.

An important quality of traditional games is their tangible nature. Kids can turn their entire room into a playground, with pieces of furniture being converted into a landscape that supports physical activities such as jumping and hiding. In contrast, video games are usually confined to a purely virtual realm. AR can bring digital games together with the real environment. For example, Vuforia SmartTerrain (Figure 1.28) delivers a 3D scan of a real scene and turns it into a playing field for a “tower defense” game.

Figure 1.28 Vuforia SmartTerrain scans the environment and turns it into a game landscape. © 2013 Qualcomm Connected Experiences, Inc. Used with permission.

Microsoft’s IllumiRoom [Jones et al. 2013] is a prototype of a projector-based AR game experience. It combines a regular TV set with a home-theater projector to extend the game world beyond the confines of the TV (Figure 1.29). The 3D game scene shown in the projection is registered with the one on the TV, but the projection covers a much wider field of view. While the player concentrates on the center screen, the peripheral field of view is also filled with dynamic images, leading to a greatly enhanced game experience.

Figure 1.29 Using a TV-plus-projector setup, the IllumiRoom extends the game world beyond the boundaries of the screen. Courtesy of Microsoft Research.