What Software Architects Need to Know About DevOps

The motivation for DevOps is that developers and operators often have opposing goals. Developers (Devs) try to push new features into the product, while the core concern of operators (Ops) is system dependability and availability, which is typically protected by placing heavy burdens on the process of releasing new features. The goal of DevOps is to achieve both frequent releases of new features and dependability by encouraging collaboration and shared responsibility between Devs and Ops.

If your organization is interested in achieving shorter cycles for new features becoming available in software products, without sacrificing quality, then you should care about DevOps. Take the example of IBM, which, by applying DevOps and related practices, "… has gone from spending about 58% of its development resources on innovation to about 80%," according to this recent report. Wouldn’t you like to spend most of your time on the fun and innovative parts of your job?

DevOps brings about changes on several levels: organizational structure and culture, software structure, test automation, and continuous deployment. Architects need to know about the implications of DevOps for team structure, stakeholders, and architectural styles and patterns. We start with a definition of DevOps and then give an overview of each of these topics.

Defining DevOps

In our book, we use a goal-driven definition of DevOps:

- DevOps is a set of practices intended to reduce the time between committing a change to a system and the change being placed into normal production, while ensuring high quality.

This definition has several implications. First, the quality of the deployed change to a system (usually in the form of code) is important. Quality means suitability for use by various stakeholders including end users, developers, or system administrators. It also includes availability, security, reliability, and other “ilities.” One method for ensuring quality is to have a variety of automated test cases that must be passed prior to placing changed code into production. Another method is to test the change in production with a limited set of users prior to opening it up to the world. Still another method is to closely monitor newly deployed code for a period of time. We do not specify in the definition how quality is ensured, but we do require that production code be of high quality.

The definition also requires the delivery mechanism to be of high quality. This implies that the reliability and repeatability of the delivery mechanism should be high. If the delivery mechanism fails regularly, the time required to deliver a change increases. If there are errors in how the change is delivered, the quality of the deployed system suffers, e.g., through reduced availability or reliability.

We identify two times as being important. One is the time when a developer commits newly developed code. This marks the end of basic development and the beginning of the deployment path. The second is the time when that code is deployed into production. There is a period after code has been deployed into production when the code undergoes live testing and is closely monitored for potential problems. Once the code has passed live testing and close monitoring, it is considered part of the normal production system. We make a distinction between deploying code into production for live testing and close monitoring and then, after it passes the tests, promoting the newly developed code to be equivalent to previously developed code.
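The timing distinction above can be made concrete with a small sketch. The class and field names below (`ChangeTimeline`, `committed_at`, `deployed_at`, `promoted_at`) are illustrative assumptions, not terminology from the book; the sketch only shows how the interval targeted by the definition differs from the interval up to first deployment.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class ChangeTimeline:
    """Timestamps for one change on its way to normal production (hypothetical model)."""
    committed_at: datetime                   # developer commits the change
    deployed_at: Optional[datetime] = None   # change enters production for live testing
    promoted_at: Optional[datetime] = None   # change is accepted as normal production

    def time_to_deploy(self) -> Optional[timedelta]:
        """Commit until the change first runs in production."""
        if self.deployed_at is None:
            return None
        return self.deployed_at - self.committed_at

    def time_in_live_testing(self) -> Optional[timedelta]:
        """Period of live testing and close monitoring."""
        if self.deployed_at is None or self.promoted_at is None:
            return None
        return self.promoted_at - self.deployed_at

    def time_to_normal_production(self) -> Optional[timedelta]:
        """The interval the definition aims to reduce: commit until normal production."""
        if self.promoted_at is None:
            return None
        return self.promoted_at - self.committed_at
```

Tracking the two intervals separately makes it visible whether time is being lost on the deployment path or during live testing.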

Our definition is goal-oriented. We do not specify the form of the practices or whether tools are used to implement them. If a practice is intended to reduce the time between a commit from a developer and deploying into production, it is a DevOps practice—whether it involves agile methods, tools, or forms of coordination. This is in contrast to several other definitions. Wikipedia, for example, stresses communication, collaboration, and integration between various stakeholders without stating the goal of such communication, collaboration, or integration. Timing goals are implicit. Other definitions stress the connection between DevOps and agile methods. Again, there is no mention of the purpose of utilizing agile methods on either the time to develop or the quality of the production system. Still other definitions stress the tools being used, without mentioning the goal of DevOps practices, time involved, or quality.

Finally, the goals specified in the definition do not restrict the scope of DevOps practices to testing and deployment. In order to achieve these goals, it is important to include an Ops perspective in the collection of requirements, i.e., significantly earlier than committing changes. Analogously, the definition does not mean DevOps practices end with deployment into production; the goal is to ensure high quality of the deployed system throughout its lifecycle. Thus, monitoring practices that help achieve the goals are to be included as well.

DevOps Practices and Architectural Implications

We have identified five categories of DevOps practices that satisfy our definition. For each category, we discuss the architectural implications.

- Treat Ops as first-class citizens from the point of view of requirements. These practices address the high-quality aspect of the definition. Operations has a set of requirements that pertain to logging and monitoring. For example, logging messages should be understandable and usable by an operator. Involving operations in the development of requirements will ensure that these types of requirements are considered.

Adding requirements to a system from Ops may require some architectural modification. In particular, the Ops requirements are likely to be in the area of logging, monitoring, and information to support incident handling. These requirements will be like other requirements for modifications to a system: possibly requiring some minor modifications to the architecture but, typically, not drastic modifications.
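As one possible illustration of an Ops-driven logging requirement, the sketch below uses Python's standard `logging` module to emit structured messages that name the service and suggest an operator action. The field names `service` and `action`, the class `OperatorJsonFormatter`, and the helper `build_operator_logger` are assumptions made for this example, not requirements stated in the book.

```python
import json
import logging


class OperatorJsonFormatter(logging.Formatter):
    """Render each log record as one JSON object aimed at operators."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "time": self.formatTime(record),
            "level": record.levelname,
            # 'service' and 'action' are hypothetical fields supplied via `extra`.
            "service": getattr(record, "service", "unknown"),
            "message": record.getMessage(),
            "action": getattr(record, "action", None),
        }
        return json.dumps(payload)


def build_operator_logger(name: str) -> logging.Logger:
    """Create a logger whose output uses the operator-oriented format."""
    logger = logging.getLogger(name)
    handler = logging.StreamHandler()
    handler.setFormatter(OperatorJsonFormatter())
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    return logger


if __name__ == "__main__":
    log = build_operator_logger("orders")
    log.error(
        "Payment gateway timed out after %d ms",
        3000,
        extra={"service": "orders", "action": "Check gateway status; retries are automatic"},
    )
```

A change like this touches only the logging layer, which is consistent with the point that Ops requirements usually lead to minor rather than drastic architectural modifications.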

- Make Dev more responsible for relevant incident handling. These practices are intended to shorten the time between the observation of an error and the repair of that error. Organizations that utilize these practices typically have a period of time in which Dev has primary responsibility for a new deployment; later on Ops has primary responsibility.

By itself, this change is just a process change and should require no architectural modifications. However, just as with the previous practice, once Dev becomes aware of the requirements for incident handling, some architectural modifications may result.

- Continuous deployment. Practices associated with continuous deployment are intended to shorten the time between a developer committing code to a repository and that code being deployed. Continuous deployment also emphasizes automated tests, to increase the quality of code making its way into production. Continuous deployment is the practice which leads to the most far-reaching architectural modifications. On the one hand, an organization can introduce continuous deployment practices with no major architectural changes. On the other hand, organizations that have adopted continuous deployment practices frequently begin moving to a microservice architecture. We cover microservice architectures and explore the reasons for adoption below.

- Develop infrastructure code with the same set of practices as application code. Practices that apply to the development of infrastructure code are intended both to ensure high quality in the deployed applications and to ensure that deployments proceed as planned. Errors in deployment scripts such as misconfigurations can cause errors in the application, in the environment, or in the deployment process. Applying quality control practices used in normal software development when developing operations scripts and processes will help control the quality of these specifications. These practices will not affect the application code but may affect the architecture of the infrastructure code.

- Enforced deployment process used by all, including Dev and Ops personnel. These practices are intended to ensure higher quality of deployments, e.g., by requiring the continuous deployment pipeline to be used for any change, even a small change in configuration. This avoids errors caused by ad hoc deployments and resulting misconfiguration. The practices also refer to the time that it takes to diagnose and repair an error. The normal deployment process should make it easy to trace the history of a particular virtual machine image and understand the components that were included in that image.

In general, when a process becomes enforced, some individuals may be required to change their normal operating procedures and, possibly, the structure of the systems on which they work. One point where a deployment process could be enforced is in the initiation phase of each system. Each system, when it is initialized, verifies its pedigree. That is, it arrived at execution through a series of steps, each of which can be checked to have occurred. Furthermore, the systems on which it depends, e.g., operating systems or middleware, also have verifiable pedigrees.
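One way to picture a pedigree check at initialization is a hash chain over the deployment steps. The following is a minimal sketch under stated assumptions: the step names in `REQUIRED_STEPS`, the functions `record_pedigree` and `verify_pedigree`, and the choice of SHA-256 chaining are all hypothetical and are not prescribed by the book.

```python
import hashlib
from typing import List, Tuple

# Hypothetical steps that every system must pass through before execution.
REQUIRED_STEPS = ("commit", "build", "test", "bake-image")


def chain_digest(previous: str, step: str, artifact: bytes) -> str:
    """Digest of one step, bound to the digest of the step before it."""
    hasher = hashlib.sha256()
    hasher.update(previous.encode("utf-8"))
    hasher.update(step.encode("utf-8"))
    hasher.update(artifact)
    return hasher.hexdigest()


def record_pedigree(steps: List[Tuple[str, bytes]]) -> List[Tuple[str, str]]:
    """Produce (step name, chained digest) records as the pipeline runs."""
    records = []
    previous = ""
    for name, artifact in steps:
        previous = chain_digest(previous, name, artifact)
        records.append((name, previous))
    return records


def verify_pedigree(steps: List[Tuple[str, bytes]],
                    recorded: List[Tuple[str, str]]) -> bool:
    """At startup, confirm all required steps occurred, in order, on these artifacts."""
    names = tuple(name for name, _ in steps)
    if names != REQUIRED_STEPS:
        return False
    return record_pedigree(steps) == recorded
```

Because each digest depends on the previous one, altering or skipping any step changes every later record, so an ad hoc deployment that bypassed the pipeline would fail the check.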

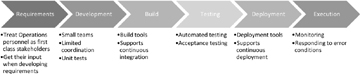

Figure 1 gives an overview of DevOps processes.

Figure 1 DevOps lifecycle processes.

At its most basic, DevOps advocates treating operations personnel as first-class stakeholders. Preparing a release can be a very serious and onerous process. As such, operations personnel may need to be trained in the types of runtime errors that can occur in a system under development; they may have suggestions as to the type and structure of log files; and they may provide other types of input into the requirements process. At its most extreme, DevOps practices make developers responsible for monitoring the progress and errors that occur during deployment and execution, so theirs would be the voices suggesting requirements. In between are practices that cover teams, build processes, testing processes, and deployment processes. We discuss continuous deployment, monitoring, security, and more in dedicated chapters of our book.

Organizational Aspects of DevOps

One main difference between DevOps and traditional models of software development is team size. Although the exact team size recommendation differs from one methodology to another, all agree that the size of the team should be relatively small. Amazon has a two-pizza rule: no team should be larger than can be fed by two pizzas. Although there is a fair bit of ambiguity in this rule (how big are the pizzas, how hungry are the members of the team), the intent is clear.

The advantages of small teams are:

- They can make decisions quickly. In every meeting, attendees wish to express their opinions. The smaller the number of attendees at the meeting, the fewer the number of opinions expressed and the less time spent hearing differing opinions. Consequently, the opinions can be expressed and a consensus arrived at faster than with a large team.

- It is easier to fashion a small number of people into a coherent unit than a large number. A coherent unit is one in which everyone understands and subscribes to a common set of goals for the team.

- It is easier for individuals to express an opinion or idea in front of a small group than in front of a large one.

The disadvantage of a small team is that some tasks are larger than what can be accomplished by a small number of individuals. In this case, the task has to be broken up into smaller pieces, each given to a different team, and the different pieces need to work together sufficiently well to accomplish the larger task. To achieve this, the teams need to coordinate. However, coordination needs to be asynchronous and implicit, which is best achieved through a suitable architecture and interfaces; otherwise the benefits of small teams are offset by the additional coordination overhead.

The team size becomes a major driver of the overall architecture. A small team, by necessity, works on a small amount of code. We will see that an architecture constructed around a collection of microservices is a good means to package these small tasks and reduce the need for explicit coordination, so we will call the output of a development team a service. We give an overview of microservice architectures driven by small teams next.

Implications for Software Architecture: Microservices

DevOps achieves its goals partially by replacing explicit coordination with implicit, and often less, coordination. We will see how the architecture of the system being developed acts as the implicit coordination mechanism.

As said previously, development teams using DevOps processes are usually small and should have limited inter-team coordination. Small teams imply that each team has a limited scope in terms of the components they develop. When a team deploys a component, it cannot go into production unless the component is compatible with other components with which it interacts. This compatibility can be ensured explicitly through multi-team coordination, or it can be ensured implicitly through the definition of the architecture.

An organization can introduce continuous deployment without major architectural modifications. Dramatically reducing the time required to place a component into production, however, will require architectural support:

- Deploying without the necessity of explicit coordination with other teams will reduce the time required to place a component into production.

- Allowing for different versions of the same service to be simultaneously in production will allow different team members to deploy without coordination with other members of their team.

- Rolling back a deployment in the event of errors allows for various forms of live testing.

An architectural style that satisfies these requirements is a microservice architecture. This style is used in practice by organizations that have adopted or inspired many DevOps practices. Although project requirements may cause deviations from this style, it remains a good general basis for projects that are adopting DevOps practices.

A microservice architecture consists of a collection of services where each service provides a small amount of functionality and the total functionality of the system is derived from composing multiple services. A microservice architecture, with some modifications, gives each team the ability to deploy its service independently from other teams, to have multiple versions of a service in production simultaneously, and to roll back to a prior version relatively easily.
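The ability to run two versions of a service side by side, expose the new one to a limited set of users, and roll back can be sketched as follows. The class `VersionRouter`, its method names, and the hash-based bucketing of users are assumptions chosen for illustration; real systems typically implement this in a load balancer or service registry.

```python
import hashlib
from typing import Optional


class VersionRouter:
    """Route each user to the stable or the candidate version of one service."""

    def __init__(self, stable_version: str) -> None:
        self.stable = stable_version
        self.candidate: Optional[str] = None
        self.candidate_percent = 0

    def deploy_candidate(self, version: str, percent: int) -> None:
        """Put a new version into production for a share of users (live testing)."""
        if not 0 <= percent <= 100:
            raise ValueError("percent must be between 0 and 100")
        self.candidate = version
        self.candidate_percent = percent

    def route(self, user_id: str) -> str:
        """Pick a version; the same user always lands in the same bucket."""
        if self.candidate is None:
            return self.stable
        digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
        bucket = int(digest, 16) % 100
        return self.candidate if bucket < self.candidate_percent else self.stable

    def promote(self) -> None:
        """Candidate passed live testing: it becomes normal production."""
        if self.candidate is not None:
            self.stable = self.candidate
            self.candidate = None
            self.candidate_percent = 0

    def roll_back(self) -> None:
        """Candidate misbehaved: send all traffic back to the stable version."""
        self.candidate = None
        self.candidate_percent = 0
```

Because the routing decision is local to one service, the owning team can deploy, promote, or roll back without coordinating with other teams, provided both versions honor the same interface.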

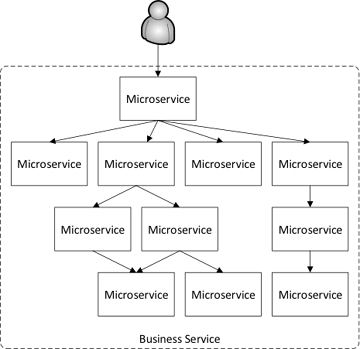

Figure 2 describes the situation that results from using a microservice architecture. A user interacts with a single consumer-facing service. This service, in turn, utilizes a collection of other services. We use the terminology “service” to refer to a component that provides a service and “client” to refer to a component that requests a service. A single component can be a client in one interaction and a service in another. In a system such as LinkedIn, the service depth may reach as much as 70 for a single user request.

Figure 2 User interacting with a single service that, in turn, utilizes multiple other services
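The notion of service depth can be illustrated by treating the client-to-service relationships as a directed graph and measuring the longest call chain from the consumer-facing service. This is a sketch only: the function `service_depth`, the service names in `EXAMPLE_CALLS`, and the assumption that the call graph has no cycles are all hypothetical.

```python
from typing import Dict, List

# Hypothetical call graph: each key is a client, each value lists the services it calls.
EXAMPLE_CALLS: Dict[str, List[str]] = {
    "web-frontend": ["profile", "feed"],
    "feed": ["profile", "ads"],
    "profile": ["storage"],
}


def service_depth(calls: Dict[str, List[str]], entry: str) -> int:
    """Length of the longest chain of services starting at `entry`.

    Assumes the call graph is acyclic; a cycle would cause unbounded recursion.
    """
    memo: Dict[str, int] = {}

    def depth(service: str) -> int:
        if service in memo:
            return memo[service]
        # A service that calls nothing has depth 1.
        result = 1 + max((depth(callee) for callee in calls.get(service, [])), default=0)
        memo[service] = result
        return result

    return depth(entry)
```

In this example the longest chain is web-frontend, feed, profile, storage, so `service_depth(EXAMPLE_CALLS, "web-frontend")` evaluates to 4. Here "feed" is a service to the front end and a client of "profile", which matches the point that one component can play both roles.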

Summary

The main takeaway from this article is that people have defined DevOps from different perspectives, but one common objective is to reduce the time between a feature or improvement being conceived and its eventual deployment to users, without sacrificing quality.

DevOps will face barriers due to both culture and technical challenges. It will have a huge impact on team structure, software architecture, and traditional ways of conducting operations. We have given you a taste of it by listing some common practices.

The DevOps goal of minimizing coordination among various teams can be achieved by using a microservice architectural style where the coordination mechanism, the resource management decisions, and the mapping of architectural elements are all specified by the architecture and hence require minimal inter-team coordination.

All in all, we believe that DevOps will lead IT onto exciting new ground, with high frequency of innovation and fast cycles to improve the user experience. This article is only a short summary of our book DevOps: A Software Architect's Perspective. In the book, you can find more information on the topics highlighted here, as well as quality aspects, monitoring practices, three case studies from practice, and much more.