- Agile Testing Quadrants

- Challenging the Quadrants

- Using Other Influences for Planning

- Planning for Test Automation

- Summary

As agile development becomes increasingly mainstream, experienced practitioners rely on established techniques to help plan testing activities in agile projects, although less experienced teams sometimes misunderstand or misuse these useful approaches. Also, advances in test tools and frameworks have somewhat altered the original models that applied back in the early 2000s. Models help us view testing from different perspectives. Let’s look at some foundations of agile test planning and how they are evolving.

Agile Testing Quadrants

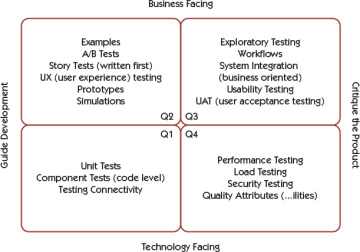

The agile testing quadrants (the Quadrants) are based on a matrix Brian Marick developed in 2003 to describe types of tests used in Extreme Programming (XP) projects (Marick, 2003). We’ve found the Quadrants to be quite handy over the years as we plan at different levels of precision. Some people have misunderstood the purpose of the Quadrants. For example, they may see them as sequential activities instead of a taxonomy of testing types. Other people disagree about which testing activities belong in which quadrant and avoid using the Quadrants altogether. We’d like to clear up these misconceptions.

Figure 8-1 is the picture we currently use to explain this model. You’ll notice we’ve changed some of the wording since we presented it in Agile Testing. For example, we now say “guide development” instead of “support development.” We hope this makes it clearer.

Figure 8-1 Agile testing quadrants

It’s important to understand the purpose behind the Quadrants and the terminology used to convey their concepts. The quadrant numbering system does not imply any order. You don’t work through the quadrants from 1 to 4 in sequence. It’s an arbitrary numbering system so that when we talk about the Quadrants, we can say “Q1” instead of “technology-facing tests that guide development.” The quadrants are

- Q1: technology-facing tests that guide development

- Q2: business-facing tests that guide development

- Q3: business-facing tests that critique (evaluate) the product

- Q4: technology-facing tests that critique (evaluate) the product

The left side of the quadrant matrix is about preventing defects before and during coding. The right side is about finding defects and discovering missing features, with the understanding that we want to find them as fast as possible. The top half is about exposing tests to the business, and the bottom half is about tests that are more internal to the team but equally important to the success of the software product. “Facing” simply refers to the language of the tests. For example, performance tests satisfy a business need, but the business would not be able to read the tests; business stakeholders care about the results.

Most agile teams start by specifying Q2 tests, because those are where you get the examples that turn into specifications and tests that guide coding. In his 2003 blog posts about the matrix, Brian called Q2 and Q1 tests “checked examples.” He had originally called them “guiding” or “coaching” examples and credits Ward Cunningham for the adjective “checked.” Team members would construct an example of what the code needs to do, check that it doesn’t do it yet, make the code do it, and check that the example is now true (Marick, 2003). We include prototypes and simulations in Q2 because they are small experiments to help us understand an idea or concept.
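The checked-example cycle described above (check that the code doesn’t do it yet, make it do it, check again) can be sketched in a few lines of Python. The discount rule, the function names, and the use of integer cents are hypothetical illustrations, not part of Marick’s description; a real team would express the example in whatever test framework it already uses.

```python
# Hypothetical business example agreed with the customer:
# "An order of $100.00 or more gets a 10% discount."
# Amounts are integer cents to avoid floating-point rounding surprises.

def discount_not_yet_built(total_cents: int) -> int:
    """Placeholder before the rule is coded: never gives a discount."""
    return 0


def discount(total_cents: int) -> int:
    """The rule once it has been implemented."""
    return total_cents // 10 if total_cents >= 10_000 else 0


def example_holds(discount_fn) -> bool:
    """The checked example: a $100.00 order earns a $10.00 discount."""
    return discount_fn(10_000) == 1_000


# 1. Check that the code doesn't do it yet.
print("before:", example_holds(discount_not_yet_built))  # False
# 2. After making the code do it, check that the example is now true.
print("after:", example_holds(discount))                 # True
```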

In some cases it makes more sense to start testing for a new feature using tests from a different quadrant. Lisa has worked on projects where her team used performance tests in a spike to determine the architecture, because performance was the most important quality attribute for the feature. Those tests fall into Q4. If your customers are uncertain about their requirements, you might even do an investigation story and start with exploratory testing (Q3). Consider where the highest risk might be and where testing can add the most value.

Most teams concurrently use testing techniques from all of the quadrants, working in small increments. Write a test (or check) for a small chunk of a story, write the code, and once the test is passing, perhaps automate more tests for it. Once the tests (automated checks) are passing, use exploratory testing to see what was missed. Perform security or load testing, and then add the next small chunk and go through the whole process again.

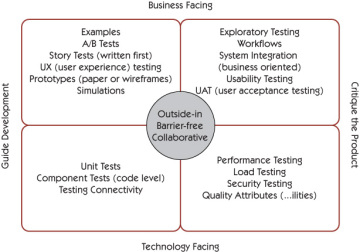

Michael Hüttermann adds “outside-in, barrier-free, collaborative” to the middle of the quadrants (see Figure 8-2). He uses behavior-driven development (BDD) as an example of barrier-free testing. These tests are written in a natural, ubiquitous “given_when_then” language that’s accessible to customers as well as developers and invites conversation between the business and the delivery team. This format can be used for both Q1 and Q2 checking. See Michael’s Agile Record article (Hüttermann, 2011b) or his book Agile ALM (Hüttermann, 2011a) for more ideas on how to augment the Quadrants.

Figure 8-2 Agile testing quadrants (with Michael Hüttermann’s adaptation)
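To make the given_when_then format concrete, here is a hypothetical shopping-cart scenario with its steps mirrored in a Python test. BDD tools such as Cucumber keep these steps in plain-language feature files that customers can read directly; this sketch only shows the structure, and the `Cart` class exists purely for the illustration.

```python
# A hypothetical shopping-cart scenario written in given/when/then form.

class Cart:
    """Minimal cart used only for this illustration."""

    def __init__(self) -> None:
        self.items = []  # (name, price_cents) pairs

    def add(self, name: str, price_cents: int) -> None:
        self.items.append((name, price_cents))

    def total_cents(self) -> int:
        return sum(price for _, price in self.items)


def test_given_empty_cart_when_book_added_then_total_is_book_price() -> None:
    # Given an empty cart
    cart = Cart()
    # When the customer adds a book priced at $12.50
    cart.add("book", 1250)
    # Then the cart total is $12.50
    assert cart.total_cents() == 1250


test_given_empty_cart_when_book_added_then_total_is_book_price()
print("scenario passed")
```

Because each step reads as a sentence, a business expert can review the scenario and suggest another example without reading the implementation.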

The Quadrants are merely a taxonomy or model to help teams plan their testing and make sure they have all the resources they need to accomplish it. There are no hard-and-fast rules about what goes in which quadrant. Adapt the Quadrants model to show what tests your team needs to consider. Make the testing visible so that your team thinks about testing first as you do your release, feature, and story planning. This visibility exposes the types of tests that are currently being done and the number of people involved. Use it to provoke discussions about testing and which areas you may want to spend more time on.

When discussing the Quadrants, you may realize there are necessary tests your team hasn’t considered or that you lack certain skills or resources to be able to do all the necessary testing. For example, a team that Lisa worked on realized that they were so focused on turning business-facing examples into Q2 tests that guide development that they were completely ignoring the need to do performance and security testing. They added user stories to research what training and tools they would need and then budgeted time to do those Q4 tests.

Planning for Quadrant 1 Testing

Back in the early 1990s, Lisa worked on a waterfall team whose programmers were required to write unit test plans. Unit test plans were definitely overkill, but thinking about the unit tests early and automating all of them were a big part of the reason that critical bugs were never called in to the support center. Agile teams don’t plan Q1 tests separately. In test-driven development (TDD), also called test-driven design, testing is an inseparable part of coding. A programmer pair might sit and discuss some of the tests they want to write, but the details evolve as the code evolves. These unit tests guide development but also support the team: a programmer runs them before checking in his or her code, and the CI build runs them on every check-in.

There are other types of technical testing that may be considered as guiding development. They might not be obvious, but they can be critical to keeping the process working. For example, let’s say you can’t do your testing because there is a problem with connectivity. Create a test script that can be run before your smoke test to make sure that there are no technical issues. Another test programmers might write is one to check the default configuration. Many times these issues aren’t known until you start deploying and testing.
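A pre-smoke-test connectivity check like the one described above might look like the following Python sketch. The endpoint list, the two-second timeout, and the function names are hypothetical; the only library call used is the standard `socket.create_connection`, which raises an `OSError` subclass when a connection is refused, times out, or the host name cannot be resolved.

```python
import socket
from typing import List, Tuple

Endpoint = Tuple[str, int]

# Hypothetical services the smoke test depends on; substitute your own.
SMOKE_TEST_DEPENDENCIES: List[Endpoint] = [
    ("localhost", 5432),  # assumed test database
    ("localhost", 8080),  # assumed application under test
]


def unreachable(endpoints: List[Endpoint], timeout: float = 2.0) -> List[Endpoint]:
    """Return the endpoints that refuse, time out, or fail to resolve on a TCP connect."""
    failures = []
    for host, port in endpoints:
        try:
            # Opening and immediately closing a TCP connection is enough
            # to show that something is listening on that port.
            with socket.create_connection((host, port), timeout=timeout):
                pass
        except OSError:  # covers refused connections, timeouts, DNS errors
            failures.append((host, port))
    return failures


def ready_for_smoke_test(endpoints: List[Endpoint] = SMOKE_TEST_DEPENDENCIES) -> bool:
    """Report connectivity problems before the smoke test starts."""
    failures = unreachable(endpoints)
    for host, port in failures:
        print(f"cannot reach {host}:{port}; fix the environment before testing")
    return not failures
```

A CI job could call `ready_for_smoke_test()` first and skip the smoke test when it returns `False`, so an environment problem is reported as an environment problem rather than as a pile of failing tests. A default-configuration check could be added in the same style.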

Planning for Quadrant 2 Testing

Q2 tests help with planning at the feature or story level. Part IV, “Testing Business Value,” will explore guiding development with more detailed business-facing tests. These tests or checked examples are derived from collaboration and conversations about what is important to the feature or story. Having the right people in a room to answer questions and give specific examples helps us plan the tests we need. Think about the levels of precision discussed in the preceding chapter; the questions and the examples get more precise as we get into details about the stories. The process of eliciting examples and creating tests from them fosters collaboration across roles and may identify defects in the form of hidden assumptions or misunderstandings before any code is written.

Show everyone, even the business owners, what you plan to test; see if you’re standing on anything sacred, or if they’re worried you’re missing something that has value to them.

Creating Q2 tests doesn’t stop when coding begins. Lisa’s teams have found it works well to start with happy path tests. As coding gets under way and the happy path tests start passing, testers and programmers flesh out the tests to encompass boundary conditions, negative tests, edge cases, and more complicated scenarios.
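The progression from happy path to fuller coverage can be illustrated with a hypothetical rule, “a username is 3 to 20 letters or digits.” The rule, the helper names, and the specific cases below are assumptions chosen for the sketch; the point is how the second list grows once the first one passes.

```python
def is_valid_username(name: str) -> bool:
    """Hypothetical rule: 3 to 20 characters, letters and digits only."""
    return 3 <= len(name) <= 20 and name.isalnum()


# Written first, while coding gets under way.
happy_path = [("lisa123", True)]

# Added once the happy path passes: boundaries, negative tests, edge cases.
fleshed_out = [
    ("ab", False),            # just below the minimum length
    ("abc", True),            # minimum length
    ("a" * 20, True),         # maximum length
    ("a" * 21, False),        # just above the maximum length
    ("", False),              # empty input
    ("lisa crispin", False),  # contains a space
]


def failing_cases(cases):
    """Return the inputs whose result differs from the expected value."""
    return [name for name, expected in cases if is_valid_username(name) != expected]


print("failures:", failing_cases(happy_path + fleshed_out))
```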

Planning for Quadrant 3 Testing

Testing has always been central to agile development, and guiding development with customer-facing Q2 tests caught on early with agile teams. As agile teams have matured, they’ve also embraced Q3 testing, exploratory testing in particular. More teams are hiring expert exploratory testing practitioners, and testers on agile teams are spending time expanding their exploratory skills.

Planning for Q3 tests can be a challenge. We can start defining test charters before there is completed code to explore. As Elisabeth Hendrickson explains in her book Explore It! (Hendrickson, 2013), charters let us define where to explore, what resources to bring with us, and what information we hope to find. To be effective, some exploratory testing might require completing multiple small user stories or waiting until the feature is complete. You may also need to budget time to create the user personas that you might need for testing, although these may already have been created in story-mapping or other feature-planning exercises. Defining exploratory testing charters is not always easy, but it is a great way to share testing ideas with the team and to track what testing was completed. We will give examples of such charters in Chapter 12, “Exploratory Testing,” where we discuss different exploratory testing techniques.

One strategy to build in time for exploratory testing is writing stories to explore different areas of a feature or different personas. Another strategy, which Janet prefers, is having a task for exploratory testing for each story, as well as one or more for testing the feature. If your team uses a definition of “done,” conducting adequate exploratory testing might be part of that. You can size individual stories with the assumption that you’ll spend a significant amount of time doing exploratory testing. Be aware that unless time is specifically allocated during task creation, exploratory testing often gets ignored.

Q3 also includes user acceptance testing (UAT). Planning for UAT needs to happen during release planning or as soon as possible. Include your customers in the planning to decide the best way to proceed. Can they come into the office to test each new feature? Perhaps they are in a different country and you need to arrange computer sharing. Work to get the most frequent and fastest feedback possible from all of your stakeholders.

Planning for Quadrant 4 Testing

Quadrant 4 tests may be the easiest to overlook in planning, and many teams tend to focus on tests to guide development. Quadrant 3 activities such as UAT and exploratory testing may be easier to visualize and are often more familiar to most testers than Quadrant 4 tests. For example, as more teams need to support their applications globally, testing in the internationalization and localization space has become important. Agile teams have struggled with how to do this; we include some ideas in Chapter 13, “Other Types of Testing.”

Some teams talk about quality attributes with acceptance criteria on each story of a feature. We prefer to use the word constraints. In Discover to Deliver (Gottesdiener and Gorman, 2012), Ellen Gottesdiener and Mary Gorman recommend using Tom and Kai Gilb’s Planguage (their planning language; see the bibliography for Part III, “Planning—So You Don’t Forget the Big Picture,” for links) to talk about these constraints in a very definite way (Gilb, 2013).

If your product has a constraint such as “Every screen must respond in less than three seconds,” that criterion doesn’t need to be repeated for every single story. Find a mechanism to remind your team when you are discussing the story that this constraint needs to be built in and must be tested. Liz Keogh describes a technique to write tests about how capabilities such as system performance can be monitored (Keogh, 2014a). Organizations usually know which operating systems or browsers they are supporting at the beginning of a release, so add them as constraints and include them in your testing estimations. These types of quality attributes are often good candidates for testing at a feature level, but if it makes sense to test them at the story level, do so there; think, “Test early.” Chapter 13, “Other Types of Testing,” will cover a few different testing types that you may have been struggling with.
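One way to keep a constraint such as the three-second rule in front of the team without copying it into every story is a single shared check that each new screen is registered with. The sketch below is hypothetical: the loader callables stand in for whatever actually renders a screen in your application (a browser-automation step or an HTTP request, say), and timing one in-process call is much cruder than a real performance test.

```python
import time
from typing import Callable, Dict, List

# The release-level constraint: every screen responds in under three seconds.
RESPONSE_LIMIT_SECONDS = 3.0


def meets_response_constraint(load_screen: Callable[[], object],
                              limit: float = RESPONSE_LIMIT_SECONDS) -> bool:
    """Time one screen load and compare it with the shared limit."""
    start = time.perf_counter()
    load_screen()
    return (time.perf_counter() - start) < limit


def screens_violating_constraint(screens: Dict[str, Callable[[], object]],
                                 limit: float = RESPONSE_LIMIT_SECONDS) -> List[str]:
    """Check every registered screen, so a new story's screen is covered
    by adding it to the registry rather than by restating the constraint."""
    return [name for name, load in screens.items()
            if not meets_response_constraint(load, limit)]
```

For example, `screens_violating_constraint({"home": load_home, "search": load_search})` would return the names of any screens that took three seconds or longer, where `load_home` and `load_search` are placeholders for your own screen loaders.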