The Third Principle of the Incremental Commitment Spiral Model: Concurrent Multidiscipline Engineering

- 3.1 Failure Story: Sequential RPV Systems Engineering and Development

- 3.2 Success Story: Concurrent Competitive-Prototyping RPV Systems Development

- 3.3 Concurrent Development and Evolution Engineering

- 3.4 Concurrent Engineering of Hardware, Software, and Human Factors Aspects

- 3.5 Concurrent Requirements and Solutions Engineering

- “Do everything in parallel, with frequent synchronizations.”

- —Michael Cusumano and Richard Selby, Microsoft Secrets, 1995

- “As the correct solution of any problem depends primarily on a true understanding of what the problem really is, and wherein lies its difficulty, we may profitably pause upon the threshold of our subject to consider first, in a more general way, its real nature: the causes which impede sound practice; the conditions on which success or failure depends; the directions in which error is most to be feared. Thus we shall attain that great perspective for success in any work—a clear mental perspective, saving us from confusing the obvious with the important, and the obscure and remote with the unimportant.”

- —Arthur M. Wellington, The Economic Theory of the Location of Railroads, 1887

The first flowering of systems engineering as a formal discipline focused on the engineering of complex physical systems such as ships, aircraft, transportation systems, and logistics systems. The physical behavior of the systems could be well analyzed by mathematical techniques, with passengers treated along with baggage and merchandise as a class of logistical objects with average sizes, weights, and quantities. Such mathematical models were very good in analyzing the physical performance tradeoffs of complex system alternatives. They also served as the basis for the development of elegant mathematical theories of systems engineering.

The physical systems were generally stable, and were expected to have long useful lifetimes. Major fixes or recalls of fielded systems were very expensive, so it was worth investing significant up-front effort in getting their requirements to be complete, consistent, traceable, and testable, particularly if the development was to be contracted out to a choice of competing suppliers. It was important not to overly constrain the solution space, so the requirements were not to include design choices, and the design could not begin until the requirements were fully specified.

Various sequential process models were developed to support this approach, such as the diagonal waterfall model, the V-model (a waterfall with a bend upward in the middle), and the two-leg model (an inverted V-model). These were effective in developing numerous complex physical systems, and were codified into government and standards-body process standards. The manufacturing process of assembling physical components into subassemblies, assemblies, subsystems, and system products was reflected in functional-hierarchy design standards, integration and test standards, and work breakdown structure standards as the way to organize and manage the system definition and development.

The fundamental assumptions underlying this set of sequential processes, prespecified requirements, and functional-hierarchy product models began to be seriously undermined in the 1970s and 1980s. The increasing pace of change in technology, competition, organizations, and life in general made assumptions about stable, prespecifiable requirements unrealistic. The existence of cost-effective, competitive, incompatible commercial products or other reusable non-developmental items (NDIs) made it necessary to evaluate and often commit to solution components before finalizing the requirements (the consequences of not doing this will be seen in the failure case study in Chapter 4). The emergence of freely available graphic user interface (GUI) generators made rapid user interface prototyping feasible, but also made the prespecification of user interface requirement details unrealistic. The difficulty of adapting to rapid change with brittle, optimized, point-solution architectures generally made optimized first-article design to fixed requirements unrealistic.

As shown in the “hump diagram” of Figure 0-5 in the Introduction, the ICSM emphasizes the principle of concurrent rather than sequential work for understanding needs; envisioning opportunities; system scoping; system objectives and requirements determination; architecting and designing of the system and its hardware, software, and human elements; life-cycle planning; and development of feasibility evidence. Of course, the humps in Figure 0-5 are not a one-size-fits-all representation of every project’s effort distribution. In practice, the evidence- and risk-based decision criteria discussed in Figures 0-7 and 0-8 in the Introduction can determine which specific process model will fit best for which specific situation. This includes situations in which the sequential process is still best, as its assumptions still hold in some situations. Also, since requirements increasingly emerge from use, working on all of the requirements and solutions in advance is not feasible—which is where the ICSM Principle 2 of incremental commitment applies.

This establishes the context for the “Do everything in parallel” quote at the beginning of this chapter. Even though preferred sequential-engineering situations still exist in which “Do everything in parallel” does not universally apply, it is generally best to apply it during the first ICSM Exploratory phase. By holistically and concurrently addressing during this beginning phase all of the system’s hardware, software, human factors, and economic considerations (as described in the Wellington quote at the beginning of the chapter), projects will generally be able to determine their process drivers and best process approach for the rest of the system’s life cycle. Moreover, as discussed previously, the increasing prevalence of process drivers such as emergence, dynamism, and NDI support will make concurrent approaches increasingly dominant.

Thus suitably qualified, we can proceed to the main content of Chapter 3. Our failure and success case studies contrast a sequential approach and a concurrent approach to a representative complex cyber–physical–human government system acquisition involving remotely piloted vehicles (RPVs). The remaining sections discuss best practices for concurrent cyber–physical–human factors engineering, concurrent requirements and solutions engineering, concurrent development and evolution engineering, and support of more rapid concurrent engineering.

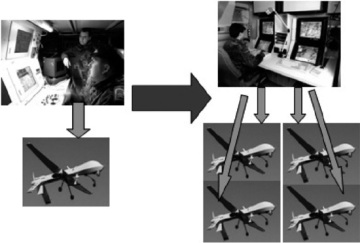

An example that illustrates ICSM concurrent-engineering benefits is the unmanned aerial system (UAS; i.e., RPV) enhancement discussed in Chapter 5 of the NRC’s Human–System Integration report [1]. These RPVs are airplanes or helicopters operated remotely by humans. The systems are designed to keep humans out of harm’s way. However, current RPV systems are human-intensive, often requiring two people, and sometimes considerably more, to operate a single vehicle. The growing need to operate numerous RPVs is creating a strong desire to change the 1:2 ratio (one vehicle controlled by two people) so that a single operator can operate more than one RPV, as shown in Figure 3-1.

FIGURE 3-1 Vision of 4:1 Remotely Piloted Vehicle System (from Pew and Mavor, 2007)

A recent advanced technology demonstration of an autonomous-agent–based system enabled a single operator to control four RPVs flying in formation to a crisis area while compensating for changes in direction to avoid adverse weather conditions or no-fly zones. Often, such demonstrations to high-level decision makers, who are typically focused on rapidly getting innovations into the competition space, will lead to commitments to major acquisitions before the technical and economic implications have been worked out (good examples have been the Iridium satellite-based personal telephone system and the London Ambulance System).

Based on our analyses of such failures and complementary successes (e.g., the rapid-delivery systems of Federal Express, Amazon, and Walmart), the failure and success stories in this chapter illustrate failure and success patterns in the RPV domain. In the future, the technical, economic, and safety challenges for similarly autonomous air vehicles will become even more complex, as with Amazon’s recent concept and prototype of filling the air with tiny, fully autonomous, battery-powered helicopters rapidly delivering packages from its warehouse to your front door.

In this chapter’s case studies, the demonstration of a 4:1 vehicle:controller ratio capability so impressed the senior leadership officials viewing it that they established a high-priority rapid-development program to acquire and field a common agent-based 4:1 RPV control capability for use in battlefield-based, sea-based, and home-country–based RPV operations.

3.1 Failure Story: Sequential RPV Systems Engineering and Development

This section presents a hypothetical sequential approach, representative of several recent government acquisition programs, in which the demo results are used to create the requirements for a proposed program. The program would use the agent-based technology to develop a 4:1 ratio system enabling a single operator to control four RPVs in battlefield-based, sea-based, and home-country–based RPV operations. A number of assumptions were made to sell the program at an optimistic cost of $1 billion and schedule of 40 months. Enthusiasm was such that the program, budget, and schedule were approved, and a multi-service working group of experienced battlefield-based, sea-based, and home-country–based RPV controllers was convened to develop the requirements for the system.

The resulting requirements included the need to synthesize status information from multiple on-board and external sensors; to perform dynamic reallocation of RPVs to targets; to perform self-defense functions; to communicate status and observational information to central commanders and other RPV controllers; to control RPVs in the same family but with different releases having somewhat different controls; to avoid harming friendly forces or noncombatants; and to be network-ready with respect to self-identification when entering battle zones, establishing security credentials and protocols, operating in a publish–subscribe environment, and participating in replanning activities based on changing conditions. These requirements were included in a request for proposal (RFP) that was sent out to prospective bidders.

The winning bidder provided an even more impressive demo of agent technology and a proposal indicating that all of the problems were well understood, that a preliminary design review (PDR) could be held in 120 days, and that the cost would be only $800 million. The program managers and their upper management were delighted at the prospect of saving $200 million of the taxpayers’ money, and they established a fixed-price contract to develop the 4:1 system to the requirements in the RFP in 40 months, with a System Functional Requirements Review (SFRR) in 60 days and a PDR in 120 days.

At the SFRR, the items reviewed were transcriptions and small elaborations of the requirements in the RFP. They did not include any functions for coordinating the capabilities, and included only sunny-day operational scenarios. There were no capabilities for recovering from outages in the network, from the loss of RPVs, or from incompatible sensor data, or for tailoring the controls to battlefield-based, sea-based, or home-country–based control equipment. The contractor indicated that it had hired some ex-RPV controllers who were busy putting such capabilities together.

However, at the PDR, the contractor could not show feasible solutions for several critical and commonly occurring scenarios, such as coping with network outages, missing RPVs, and inconsistent data; having the individual controllers coordinate with each other; performing self-defense functions; tailoring the controls to multiple equipment types; and satisfying various network-ready interoperability protocols. As has been experienced in practice [2], such capabilities are much needed and difficult to achieve.
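The gap exposed at the PDR can be pictured as a simple coverage check: each critical scenario either has feasibility evidence behind it or does not. The following is a minimal sketch under stated assumptions, not a method given in the text. The `Scenario` class, the `has_evidence` flag, and the pass rule in `review_passes` are hypothetical names introduced for illustration, and the flag values simply mirror the story, in which only the sunny-day case had been shown to work.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    has_evidence: bool  # hypothetical flag: was a feasible solution shown?


# Scenario names come from the PDR narrative; the flags mirror the story.
SCENARIOS = [
    Scenario("sunny-day formation flight", True),
    Scenario("network outage", False),
    Scenario("missing RPV", False),
    Scenario("inconsistent sensor data", False),
    Scenario("coordination among controllers", False),
    Scenario("self-defense functions", False),
    Scenario("controls tailored to multiple equipment types", False),
    Scenario("network-ready interoperability protocols", False),
]


def unsupported(scenarios):
    """Return the names of scenarios that lack feasibility evidence."""
    return [s.name for s in scenarios if not s.has_evidence]


def review_passes(scenarios):
    """Assumed rule: the review passes only if every scenario has evidence."""
    return not unsupported(scenarios)


print("PDR passes:", review_passes(SCENARIOS))
for name in unsupported(SCENARIOS):
    print("missing evidence:", name)
```

Under this assumed rule the review fails on seven of the eight scenarios, which is the outcome an evidence-based review would have reported at this point in the story.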

Because the schedule was tight and the contractor had almost run out of systems engineering funds, management proposed to address the problems by using a “concurrent engineering” approach of having the programmers develop the software capabilities while the systems engineers were completing the detailed design of the hardware displays and controls. Having no other face-saving alternative to declaring the PDR to be a failure, the customers declared the PDR to be passed.

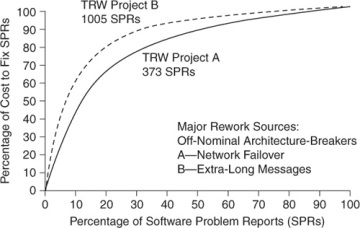

Actually, proceeding into development while completing the design is a pernicious misuse of the term “concurrent engineering,” as there is not enough time to produce feasibility evidence and to synchronize and stabilize the numerous off-nominal approaches taken by the software developers and the hardware-detail designers. The situation becomes even worse when portions of the system are subcontracted to different organizations, which will often reuse existing assets in incompatible ways. The almost-certain result for large systems is one or more off-nominal architecture-breakers that require large amounts of rework and throwaway software to reconcile the inconsistent architectural decisions made by the self-fulfilling “hurry up and code, because we will have a lot of debugging to do” programmers. Figure 3-2 shows the results of such approaches for two large TRW projects, in which 80% of the rework came from the 20% of problem fixes caused by critical off-nominal architecture-breakers [3].

FIGURE 3-2 Results of Creating or Neglecting Off-Nominal Architecture-Breakers

As a result, after 40 months and $800 million in expenditures, some RPV control components were developed but were experiencing integration problems, and even after descoping the performance to a 1:1 operator:RPV ratio, several problems were still unresolved. For example, the hardware engineers used their traditional approach of defining interfaces in terms of message content (e.g., “The sensor data crossing an interface is defined in terms of the following units, dimensions, coordinate systems, precision, frequency, or other characteristics”). They then took full earned value credit for defining the system’s interfaces. However, the RPVs were operating in a net-centric system of systems, where interface definition includes protocols for joining the network, performing security handshakes, publishing and subscribing to services, leaving the network, and so on. As there was no earned value left for defining these protocols, they remained undefined while the earned value system continued to indicate full credit for interface definition. The resulting rework and overruns could be said to result from off-nominal architecture-breakers, from shortfalls in the concurrent engineering of the sensor data processing and networking aspects of the system, and from shortfalls in accountability for results.
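The difference between the two views of an interface can be made concrete in code. The sketch below is illustrative only: `SensorMessage`, `NetCentricInterface`, `MessageOnlyInterface`, and `undefined_operations` are hypothetical names, and the method signatures are assumptions rather than any real network-ready standard. It shows that a definition covering message content alone leaves every protocol operation named in the story undefined.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass(frozen=True)
class SensorMessage:
    """Message-content view: roughly what the hardware engineers defined."""
    value: float
    units: str
    coordinate_system: str
    frequency_hz: float


class NetCentricInterface(ABC):
    """Protocol view: the operations the story says were left undefined."""

    @abstractmethod
    def join_network(self, node_id): ...

    @abstractmethod
    def security_handshake(self, credentials): ...

    @abstractmethod
    def publish(self, topic, message): ...

    @abstractmethod
    def subscribe(self, topic): ...

    @abstractmethod
    def leave_network(self): ...


class MessageOnlyInterface(NetCentricInterface):
    """Adds nothing beyond message content, so it stays abstract."""


def undefined_operations(cls):
    """List the protocol operations a class has not yet implemented."""
    return sorted(cls.__abstractmethods__)


print(undefined_operations(MessageOnlyInterface))
try:
    MessageOnlyInterface()
except TypeError as exc:
    print("cannot instantiate:", exc)
```

A progress measure that counted only `SensorMessage` as "the interface" would report full credit while all five protocol operations remained unimplemented, which is the earned value distortion described above.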

Eventually, the 1:1 capability was achieved and the system delivered, but with reduced functionality, a cost of $3 billion, and a schedule of 80 months. Even worse, the hasty patching to get the first article delivered left the customer with a brittle, poorly documented, poorly tested system that would be the source of many expensive years of system ownership and sub-par performance.
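The scale of the overrun follows directly from the figures quoted in the story. The short calculation below uses only those figures; the variable names and the `growth_factor` helper are introduced here for illustration.

```python
# Figures quoted in the story: costs in millions of dollars, schedules in months.
ORIGINAL_ESTIMATE_COST = 1000  # optimistic program estimate
CONTRACT_COST = 800            # winning fixed-price bid
CONTRACT_SCHEDULE = 40
ACTUAL_COST = 3000
ACTUAL_SCHEDULE = 80


def growth_factor(actual, planned):
    """Ratio of the actual value to the planned value."""
    return actual / planned


cost_vs_contract = growth_factor(ACTUAL_COST, CONTRACT_COST)           # 3.75
cost_vs_estimate = growth_factor(ACTUAL_COST, ORIGINAL_ESTIMATE_COST)  # 3.0
schedule_growth = growth_factor(ACTUAL_SCHEDULE, CONTRACT_SCHEDULE)    # 2.0

apparent_saving = ORIGINAL_ESTIMATE_COST - CONTRACT_COST  # 200
overrun = ACTUAL_COST - CONTRACT_COST                     # 2200

print(f"cost growth vs. contract: {cost_vs_contract:.2f}x")
print(f"cost growth vs. original estimate: {cost_vs_estimate:.2f}x")
print(f"schedule growth: {schedule_growth:.2f}x")
print(f"overrun is {overrun / apparent_saving:.0f} times the apparent saving")
```

The final cost is 3.75 times the contract price and the schedule is twice as long, and the $2.2 billion overrun is 11 times the $200 million that the low bid appeared to save.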