
Gaining Insight through Discovery

With the rules of Aggregates in use, we’ll see how adhering to them affects the design of the SaaSOvation Scrum model. We’ll see how the project team rethinks their design again, applying newfound techniques. That effort leads to the discovery of new insights into the model. Their various ideas are tried and then superseded.

Rethinking the Design, Again

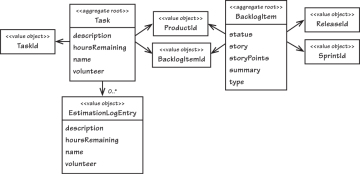

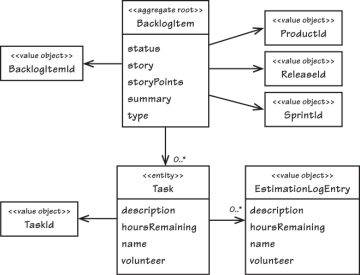

After the refactoring iteration that broke up the large-cluster Product, the BacklogItem now stands alone as its own Aggregate. It reflects the model presented in Figure 10.7. The team composed a collection of Task instances inside the BacklogItem Aggregate. Each BacklogItem has a globally unique identity, its BacklogItemId. All associations to other Aggregates are inferred through identities. That means its parent Product, the Release it is scheduled within, and the Sprint to which it is committed are referenced by identities. It seems fairly small.

Figure 10.7. The fully composed BacklogItem Aggregate

With the team now jazzed about designing small Aggregates, could they possibly overdo it in that direction?

Some will see the relationship between a BacklogItem and its Task instances as a classic opportunity to use eventual consistency, but we won’t jump to that conclusion just yet. Let’s analyze a transactional consistency approach, then investigate what could be accomplished using eventual consistency. We can then draw our own conclusion as to which approach is preferred.

Estimating Aggregate Cost

As Figure 10.7 shows, each Task holds a collection of EstimationLogEntry instances. These logs model the specific occasions when a team member enters a new estimate of hours remaining. In practical terms, how many Task elements will each BacklogItem hold, and how many EstimationLogEntry elements will a given Task hold? It’s hard to say exactly. It’s largely a measure of how complex any one task is and how long a sprint lasts. But some back-of-the-envelope (BOTE) calculations might help [Bentley].

Task hours are usually reestimated each day after a team member works on a given task. Let’s say that most sprints are either two or three weeks in length. There will be longer sprints, but a two- to three-week time span is common enough. So let’s select a number of working days somewhere between 10 and 15. Without being too precise, 12 days works well since there may actually be more two-week than three-week sprints.

Next, consider the number of hours assigned to each task. Remembering that tasks must be broken down into manageable units, we generally use a number of hours between 4 and 16. Normally if a task exceeds a 12-hour estimate, Scrum experts suggest breaking it down further. But using 12 hours as a first test makes it easier to simulate work evenly. We can say that tasks are worked on for one hour on each of the 12 days of the sprint. Doing so favors more complex tasks. So we’ll figure 12 reestimations per task, assuming that each task starts out with 12 hours allocated to it.

The question remains: How many tasks would be required per backlog item? That too is a difficult question to answer. What if we thought in terms of there being two or three tasks required per Layer (4) or Hexagonal Port-Adapter (4) for a given feature slice? For example, we might count three for the User Interface Layer (14), two for the Application Layer (14), three for the Domain Layer, and three for the Infrastructure Layer (14). That would bring us to 11 total tasks. It might be just right or a bit slim, but we’ve already erred on the side of numerous task estimations. Let’s bump it up to 12 tasks per backlog item to be more liberal. With that we are allowing for 12 tasks, each with 12 estimation logs, or 144 total collected objects per backlog item. While this may be more than the norm, it gives us a chunky BOTE calculation to work with.

There is another variable to be considered. If Scrum expert advice to define smaller tasks is commonly followed, it would change things somewhat. Doubling the number of tasks (24) and halving the number of estimation log entries (6) would still produce 144 total objects. However, it would cause more tasks to be loaded (24 rather than 12) during all estimation requests, consuming more memory on each. The team will try various combinations to see if there is any significant impact on their performance tests. But to start they will use 12 tasks of 12 hours each.
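
The arithmetic behind these two combinations can be checked in a few lines. This is only a sketch: the class `BoteEstimate` and its method `collectedObjects` are hypothetical names used for illustration and are not part of the SaaSOvation model.

```java
// Hypothetical helper for the back-of-the-envelope numbers; not part of the model.
final class BoteEstimate {

    // Total collected objects per backlog item as counted in the text:
    // tasks multiplied by estimation log entries per task.
    static int collectedObjects(int tasksPerBacklogItem, int logEntriesPerTask) {
        return tasksPerBacklogItem * logEntriesPerTask;
    }

    public static void main(String[] args) {
        // Tasks counted per layer: User Interface, Application, Domain, Infrastructure.
        int tasksByLayer = 3 + 2 + 3 + 3;
        System.out.println("tasks counted by layer: " + tasksByLayer);
        System.out.println("12 tasks x 12 entries: " + collectedObjects(12, 12));
        System.out.println("24 tasks x 6 entries: " + collectedObjects(24, 6));
    }
}
```

Both combinations yield the same total, so the difference between them lies in how many tasks are loaded per request, not in the overall object count.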

Common Usage Scenarios

Now it’s important to consider common usage scenarios. How often will one user request need to load all 144 objects into memory at once? Would that ever happen? It seems not, but the team needs to check. If not, what’s the likely high-end count of objects? Also, will there typically be multiclient usage that causes concurrency contention on backlog items? Let’s see.

The following scenarios are based on the use of Hibernate for persistence. Also, each Entity type has its own optimistic concurrency version attribute. This is workable because the changing status invariant is managed on the BacklogItem Root Entity. When the status is automatically altered (to done or back to committed), the Root’s version is bumped. Thus, changes to tasks can happen independently of each other and without impacting the Root each time one is modified, unless the result is a status change. (The following analysis could need to be revisited if using, for example, document-based storage, since the Root is effectively modified every time a collected part is modified.)
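
The effect of giving each Entity its own version can be illustrated without Hibernate. The sketch below is an assumption-laden stand-in: `VersionedEntity` and `VersioningDemo` are hypothetical classes that mimic the check a version attribute makes possible, namely that a write is accepted only if the writer read the current version.

```java
// Hypothetical illustration of per-Entity optimistic versioning. Hibernate performs
// an equivalent check against a version attribute when it writes an update.
final class VersionedEntity {
    private int version;

    // Applies a write only if the caller read the current version; a stale read is rejected.
    boolean write(int versionRead) {
        if (versionRead != version) {
            return false;
        }
        version++;
        return true;
    }

    int version() {
        return version;
    }
}

final class VersioningDemo {
    public static void main(String[] args) {
        VersionedEntity root = new VersionedEntity();  // stands in for the BacklogItem Root
        VersionedEntity taskA = new VersionedEntity();
        VersionedEntity taskB = new VersionedEntity();

        // Two users read all versions at the same moment.
        int rootRead = root.version();
        int taskARead = taskA.version();
        int taskBRead = taskB.version();

        // Reestimating different tasks touches different versions, so both writes succeed.
        System.out.println("task A write accepted: " + taskA.write(taskARead));
        System.out.println("task B write accepted: " + taskB.write(taskBRead));
        System.out.println("root version unchanged: " + (root.version() == rootRead));

        // Two status changes based on the same Root read collide; the second is rejected.
        System.out.println("first status change accepted: " + root.write(rootRead));
        System.out.println("second status change accepted: " + root.write(rootRead));
    }
}
```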

When a backlog item is first created, there are zero contained tasks. Normally it is not until sprint planning that tasks are defined. During that meeting tasks are identified by the team. As each one is called out, a team member adds it to the corresponding backlog item. There is no need for two team members to contend with each other for the Aggregate, as if racing to see who can enter new tasks more quickly. That would cause a collision, and one of the two requests would fail (for the same reason simultaneously adding various parts to Product previously failed). However, the two team members would probably soon figure out how counterproductive their redundant work is.

If the developers learned that multiple users do indeed regularly want to add tasks together, it would change the analysis significantly. That understanding could immediately tip the scales in favor of breaking BacklogItem and Task into two separate Aggregates. On the other hand, this could also be a perfect time to tune the Hibernate mapping by setting the optimistic-lock option to false. Allowing tasks to grow simultaneously could make sense in this case, especially if they don’t pose performance and scalability issues.

If tasks are at first estimated at zero hours and later updated to an accurate estimate, we still don’t tend to experience concurrency contention, although this would add one estimation log entry, pushing our BOTE count to 13 entries per task. Simultaneous use here does not change the backlog item status. Again, it advances to done only when the remaining hours go from greater than zero to zero, or regresses to committed if already done and hours are changed from zero to one or more. Both are uncommon events.

Will daily estimations cause problems? On day one of the sprint there are usually zero estimation logs on a given task of a backlog item. At the end of day one, each volunteer team member working on a task reduces the estimated hours by one. This adds a new estimation log to each task, but the backlog item’s status remains unaffected. There is never contention on a task because just one team member adjusts its hours. It’s not until day 12 that we reach the point of status transition. Still, as each of any 11 tasks is reduced to zero hours, the backlog item’s status is not altered. It’s only the very last estimation, the 144th on the 12th task, that causes automatic status transition to the done state.
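
The daily burn-down described above can be simulated to confirm that only the final estimation changes the status. This is a minimal in-memory sketch, not the team's implementation: apart from the names BacklogItem, Task, and the committed and done statuses, every identifier (including `SprintSimulation` and `estimationThatCompletes`) is an assumption made for illustration.

```java
import java.util.ArrayList;
import java.util.List;

// In-memory sketch; everything beyond the names BacklogItem, Task, and the
// committed/done statuses is an assumption made for illustration.
enum BacklogItemStatus { COMMITTED, DONE }

final class Task {
    private int hoursRemaining;

    Task(int hoursRemaining) {
        this.hoursRemaining = hoursRemaining;
    }

    void estimateHoursRemaining(int hours) {
        this.hoursRemaining = hours;
    }

    int hoursRemaining() {
        return hoursRemaining;
    }
}

final class BacklogItem {
    private final List<Task> tasks = new ArrayList<>();
    private BacklogItemStatus status = BacklogItemStatus.COMMITTED;

    void defineTask(int hoursRemaining) {
        tasks.add(new Task(hoursRemaining));
    }

    // Reestimates one task and keeps the status consistent in the same operation.
    void estimateTaskHoursRemaining(int taskIndex, int hours) {
        int before = totalHoursRemaining();
        tasks.get(taskIndex).estimateHoursRemaining(hours);
        int after = totalHoursRemaining();
        if (before > 0 && after == 0) {
            status = BacklogItemStatus.DONE;       // the last remaining hours were removed
        } else if (status == BacklogItemStatus.DONE && after > 0) {
            status = BacklogItemStatus.COMMITTED;  // work was reopened
        }
    }

    private int totalHoursRemaining() {
        int total = 0;
        for (Task task : tasks) {
            total += task.hoursRemaining();
        }
        return total;
    }

    BacklogItemStatus status() {
        return status;
    }
}

final class SprintSimulation {
    // Burns one hour per task per day; returns the 1-based ordinal of the
    // estimation that moved the backlog item to done, or -1 if none did.
    static int estimationThatCompletes(int taskCount, int hoursPerTask) {
        BacklogItem item = new BacklogItem();
        for (int i = 0; i < taskCount; i++) {
            item.defineTask(hoursPerTask);
        }
        int estimations = 0;
        for (int day = 1; day <= hoursPerTask; day++) {
            for (int task = 0; task < taskCount; task++) {
                item.estimateTaskHoursRemaining(task, hoursPerTask - day);
                estimations++;
                if (item.status() == BacklogItemStatus.DONE) {
                    return estimations;
                }
            }
        }
        return -1;
    }
}
```

Calling `SprintSimulation.estimationThatCompletes(12, 12)` walks through the 12 tasks on each of the 12 days and reports which estimation triggered the transition.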

Memory Consumption

Now to address the memory consumption. Important here is that estimates are logged by date as Value Objects. If a team member reestimates any number of times on a single day, only the most recent estimate is retained. The latest Value of the same date replaces the previous one in the collection. At this point there’s no requirement to track task estimation mistakes. There is the assumption that a task will never have more estimation log entries than the number of days the sprint is in progress. That assumption changes if tasks were defined one or more days before the sprint planning meeting, and hours were reestimated on any of those earlier days. There would be one extra log for each day that occurred.
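
The replace-by-date rule might look something like the following. It is a sketch under stated assumptions: the text names EstimationLogEntry and Task, but the fields, constructor, and method names shown here (`estimateHoursRemaining`, `estimationLogSize`, `hoursRemainingLoggedOn`) are hypothetical, and `java.time.LocalDate` is used for the date purely for convenience.

```java
import java.time.LocalDate;
import java.util.ArrayList;
import java.util.List;

// Immutable Value Object: one estimate of hours remaining for one calendar date.
// Field and method names are assumptions; the text only describes the behavior.
final class EstimationLogEntry {
    private final LocalDate date;
    private final int hoursRemaining;

    EstimationLogEntry(LocalDate date, int hoursRemaining) {
        this.date = date;
        this.hoursRemaining = hoursRemaining;
    }

    LocalDate date() {
        return date;
    }

    int hoursRemaining() {
        return hoursRemaining;
    }
}

final class Task {
    private final List<EstimationLogEntry> estimationLog = new ArrayList<>();

    // Logs an estimate; a later estimate on the same date replaces the earlier one.
    void estimateHoursRemaining(int hoursRemaining, LocalDate date) {
        EstimationLogEntry latest = new EstimationLogEntry(date, hoursRemaining);
        for (int i = 0; i < estimationLog.size(); i++) {
            if (estimationLog.get(i).date().equals(date)) {
                estimationLog.set(i, latest); // whole-value replacement, no mutation
                return;
            }
        }
        estimationLog.add(latest);
    }

    int estimationLogSize() {
        return estimationLog.size();
    }

    // Returns the hours logged for the given date, or -1 if nothing was logged.
    int hoursRemainingLoggedOn(LocalDate date) {
        for (EstimationLogEntry entry : estimationLog) {
            if (entry.date().equals(date)) {
                return entry.hoursRemaining();
            }
        }
        return -1;
    }
}
```

Because the entry is replaced as a whole, the log never grows beyond one entry per day, which is what keeps the per-task count bounded by the number of days on which estimates were made.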

What about the total number of tasks and estimates in memory for each reestimation? When using lazy loading for the tasks and estimation logs, we would have as many as 12 plus 12 collected objects in memory at one time per request. This is because all 12 tasks would be loaded when accessing that collection. To add the latest estimation log entry to one of those tasks, we’d have to load the collection of estimation log entries. That would be up to another 12 objects. In the end the Aggregate design requires one backlog item, 12 tasks, and 12 log entries, or 25 objects maximum total. That’s not very many; it’s a small Aggregate. Another factor is that the higher end of objects (for example, 25) is not reached until the last day of the sprint. During much of the sprint the Aggregate is even smaller.

Will this design cause performance problems because of lazy loads? Possibly, because it actually requires two lazy loads, one for the tasks and one for the estimation log entries for one of the tasks. The team will have to test to investigate the possible overhead of the multiple fetches.

There’s another factor. Scrum enables teams to experiment in order to identify the right planning model for their practices. As explained by [Sutherland], experienced teams with a well-known velocity can estimate using story points rather than task hours. As they define each task, they can assign just one hour to each task. During the sprint they will reestimate only once per task, changing one hour to zero when the task is completed. As it pertains to Aggregate design, using story points reduces the total number of estimation logs per task to just one and almost eliminates memory overhead.

Exploring Another Alternative Design

Is there another design that could contribute to Aggregate boundaries more fitting to the usage scenarios?

Implementing Eventual Consistency

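It looks as if there could be a legitimate use of eventual consistency between separate Aggregates. Here is how it could work. When the hours remaining on a Task are reestimated, a Domain Event such as the following would be published: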

    public class TaskHoursRemainingEstimated implements DomainEvent {
        private Date occurredOn;
        private TenantId tenantId;
        private BacklogItemId backlogItemId;
        private TaskId taskId;
        private int hoursRemaining;
        ...
    }

A specialized subscriber would now listen for these Events and delegate to a Domain Service to coordinate the consistency processing. The Service would:

- Use the BacklogItemRepository to retrieve the identified BacklogItem.

- Use the TaskRepository to retrieve all Task instances associated with the identified BacklogItem.

- Execute the BacklogItem command named estimateTaskHoursRemaining(), passing the Domain Event’s hoursRemaining and the retrieved Task instances. The BacklogItem may transition its status depending on parameters.
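
The three steps above can be sketched as follows. This is not the team's code: identities are plain Strings, the Repositories are in-memory collections, the Service name `BacklogItemStatusService` and its `handle` method are hypothetical, and the body of `estimateTaskHoursRemaining()` is one assumed interpretation of how the command might use its parameters.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Sketch only: identities are plain Strings, Repositories are in-memory
// collections, and every class here is a simplified stand-in.
final class TaskHoursRemainingEstimated {
    final String backlogItemId;
    final String taskId;
    final int hoursRemaining;

    TaskHoursRemainingEstimated(String backlogItemId, String taskId, int hoursRemaining) {
        this.backlogItemId = backlogItemId;
        this.taskId = taskId;
        this.hoursRemaining = hoursRemaining;
    }
}

final class Task {
    final String backlogItemId;
    final String taskId;
    int hoursRemaining;

    Task(String backlogItemId, String taskId, int hoursRemaining) {
        this.backlogItemId = backlogItemId;
        this.taskId = taskId;
        this.hoursRemaining = hoursRemaining;
    }
}

final class BacklogItem {
    private String status = "committed";

    // Command named in the text; this body is an assumed interpretation.
    void estimateTaskHoursRemaining(int hoursRemaining, List<Task> tasks) {
        boolean workRemains = hoursRemaining > 0;
        for (Task task : tasks) {
            workRemains = workRemains || task.hoursRemaining > 0;
        }
        if (!workRemains) {
            status = "done";
        } else if (status.equals("done")) {
            status = "committed";
        }
    }

    String status() {
        return status;
    }
}

// Hypothetical Domain Service that the subscriber would delegate to.
final class BacklogItemStatusService {
    private final Map<String, BacklogItem> backlogItemRepository;
    private final List<Task> taskRepository;

    BacklogItemStatusService(Map<String, BacklogItem> backlogItemRepository,
                             List<Task> taskRepository) {
        this.backlogItemRepository = backlogItemRepository;
        this.taskRepository = taskRepository;
    }

    void handle(TaskHoursRemainingEstimated event) {
        // Step 1: retrieve the identified BacklogItem.
        BacklogItem item = backlogItemRepository.get(event.backlogItemId);

        // Step 2: retrieve all Task instances associated with it.
        List<Task> tasks = new ArrayList<>();
        for (Task task : taskRepository) {
            if (task.backlogItemId.equals(event.backlogItemId)) {
                tasks.add(task);
            }
        }

        // Step 3: execute the command; the BacklogItem decides on any transition.
        item.estimateTaskHoursRemaining(event.hoursRemaining, tasks);
    }
}
```

Note that step 2 loads every Task for the backlog item on every Event, regardless of whether a status transition is possible.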

The team should find a way to optimize this. The three-step design requires all Task instances to be loaded every time a reestimation occurs. When using our BOTE estimate and advancing continuously toward done, 143 out of 144 times that’s unnecessary. This could be optimized pretty easily. Instead of using the Repository to get all Task instances, they could simply ask it for the sum of all Task hours as calculated by the database:

    public class HibernateTaskRepository implements TaskRepository {
        ...
        public int totalBacklogItemTaskHoursRemaining(
                TenantId aTenantId,
                BacklogItemId aBacklogItemId) {
            Query query = session.createQuery(
                "select sum(task.hoursRemaining) from Task task "
                + "where task.tenantId = ? and "
                + "task.backlogItemId = ?");
            ...
        }
    }
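
A Service built on that query could then reconcile the status without loading any Task instances. The sketch below is hypothetical throughout: String identities stand in for TenantId and BacklogItemId, `TaskRepository` is reduced to a single-method interface so that a lambda can stand in for the Hibernate implementation, and `reconcileStatus` and `BacklogItemStatusService` are names invented for illustration.

```java
// Sketch only: String identities replace TenantId and BacklogItemId, and the
// Repository is a single-method interface so a lambda can stand in for Hibernate.
interface TaskRepository {
    int totalBacklogItemTaskHoursRemaining(String tenantId, String backlogItemId);
}

final class BacklogItem {
    private String status = "committed";

    // Hypothetical command: derive the status from the database-computed total.
    void reconcileStatus(int totalHoursRemaining) {
        if (totalHoursRemaining == 0) {
            status = "done";
        } else if (status.equals("done")) {
            status = "committed";
        }
    }

    String status() {
        return status;
    }
}

// Hypothetical Domain Service using the summed hours instead of Task instances.
final class BacklogItemStatusService {
    private final TaskRepository taskRepository;

    BacklogItemStatusService(TaskRepository taskRepository) {
        this.taskRepository = taskRepository;
    }

    // One aggregate query replaces loading every Task for the backlog item.
    void reconcile(String tenantId, String backlogItemId, BacklogItem item) {
        int total = taskRepository.totalBacklogItemTaskHoursRemaining(tenantId, backlogItemId);
        item.reconcileStatus(total);
    }
}
```

One detail a real implementation would need to handle: in SQL, a sum over zero rows yields NULL, so a backlog item with no tasks needs explicit treatment before the result is converted to an int.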

Eventual consistency complicates the user interface a bit. Unless the status transition can be achieved within a few hundred milliseconds, how would the user interface display the new state? Should they place business logic in the view to determine the current status? That would constitute a smart UI anti-pattern. Perhaps the view would just display the stale status and allow users to deal with the visual inconsistency. That could easily be perceived as a bug, or at least be very annoying.

Is It the Team Member’s Job?

One important question has thus far been completely overlooked: Whose job is it to bring a backlog item’s status into consistency with all remaining task hours? Do team members using Scrum care if the parent backlog item’s status transitions to done just as they set the last task’s hours to zero? Will they always know they are working with the last task that has remaining hours? Perhaps they will and perhaps it is the responsibility of each team member to bring each backlog item to official completion.

On the other hand, what if there is another project stakeholder involved? For example, the product owner or some other person may desire to check the candidate backlog item for satisfactory completion. Maybe someone wants to use the feature on a continuous integration server first. If others are happy with the developers’ claim of completion, they will manually mark the status as done. This certainly changes the game, indicating that neither transactional nor eventual consistency is necessary. Tasks could be split off from their parent backlog item because this new use case allows it. However, if it is really the team members who should cause the automatic transition to done, it would mean that tasks should probably be composed within the backlog item to allow for transactional consistency. Interestingly, there is no clear answer here either, which probably indicates that it should be an optional application preference. Leaving tasks within their backlog item solves the consistency problem, and it’s a modeling choice that can support both automatic and manual status transitions.

Time for Decisions

This level of analysis can’t continue all day. There needs to be a decision. It’s not as if going in one direction now would negate the possibility of going another route later. Open-mindedness is now blocking pragmatism.

If you were a member of the ProjectOvation team, which modeling option would you have chosen? Don’t shy away from discovery sessions as demonstrated in the case study. That entire effort would require 30 minutes, and perhaps as much as 60 minutes at worst. It’s well worth the time to gain deeper insight into your Core Domain.