- Using Aggregates in the Scrum Core Domain

- Rule: Model True Invariants in Consistency Boundaries

- Rule: Design Small Aggregates

- Rule: Reference Other Aggregates by Identity

- Rule: Use Eventual Consistency Outside the Boundary

- Reasons to Break the Rules

- Gaining Insight through Discovery

- Implementation

- Wrap-Up

The universe is built up into an aggregate of permanent objects connected by causal relations that are independent of the subject and are placed in objective space and time.

–Jean Piaget

Clustering Entities (5) and Value Objects (6) into an Aggregate with a carefully crafted consistency boundary may at first seem like quick work, but among all DDD tactical guidance, this pattern is one of the least well understood.

To start off, it might help to consider some common questions. Is an Aggregate just a way to cluster a graph of closely related objects under a common parent? If so, is there some practical limit to the number of objects that should be allowed to reside in the graph? Since one Aggregate instance can reference other Aggregate instances, can the associations be navigated deeply, modifying various objects along the way? And what is this concept of invariants and a consistency boundary all about? It is the answer to this last question that greatly influences the answers to the others.

There are various ways to model Aggregates incorrectly. We could fall into the trap of designing for compositional convenience and make them too large. At the other end of the spectrum we could strip all Aggregates bare and as a result fail to protect true invariants. As we’ll see, it’s imperative that we avoid both extremes and instead pay attention to the business rules.

Using Aggregates in the Scrum Core Domain

We’ll take a close look at how Aggregates are used by SaaSOvation, specifically within the Agile Project Management Context, in the application named ProjectOvation. It follows the traditional Scrum project management model, complete with product, product owner, team, backlog items, planned releases, and sprints. If you think of Scrum at its richest, that’s where ProjectOvation is headed; this is a familiar domain to most of us. The Scrum terminology forms the starting point of the Ubiquitous Language (1). Since it is a subscription-based application hosted using the software as a service (SaaS) model, each subscribing organization is registered as a tenant, another term of our Ubiquitous Language.

From these they envisioned a model and made their first attempt at a design. Let’s see how it went.

First Attempt: Large-Cluster Aggregate

The team put a lot of weight on the words “Products have” in the first statement, which influenced their initial attempt to design Aggregates for this domain.

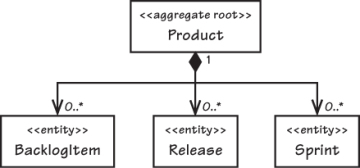

This design is shown in the following code, and as a UML diagram in Figure 10.1:

    public class Product extends ConcurrencySafeEntity {
        private Set<BacklogItem> backlogItems;
        private String description;
        private String name;
        private ProductId productId;
        private Set<Release> releases;
        private Set<Sprint> sprints;
        private TenantId tenantId;
        ...
    }

Figure 10.1. Product modeled as a very large Aggregate

The big Aggregate looked attractive, but it wasn’t truly practical. Once the application was running in its intended multi-user environment, it began to regularly experience transactional failures. Let’s look more closely at a few client usage patterns and how they interact with our technical solution model. Our Aggregate instances employ optimistic concurrency to protect persistent objects from simultaneous overlapping modifications by different clients, thus avoiding the use of database locks. As discussed in Entities (5), objects carry a version number that is incremented when changes are made and checked before they are saved to the database. If the version on the persisted object is greater than the version on the client’s copy, the client’s is considered stale and updates are rejected.

Consider a common simultaneous, multiclient usage scenario:

- Two users, Bill and Joe, view the same Product marked as version 1 and begin to work on it.

- Bill plans a new BacklogItem and commits. The Product version is incremented to 2.

- Joe schedules a new Release and tries to save, but his commit fails because it was based on Product version 1.

Persistence mechanisms are used in this general way to deal with concurrency. If you argue that the default concurrency configurations can be changed, reserve your verdict for a while longer. This approach is actually important for protecting Aggregate invariants from concurrent changes.
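The Bill and Joe scenario can be sketched in a few lines of plain Java. This is only an illustration under stated assumptions: `ProductStore`, `ProductSnapshot`, and `StaleVersionException` are hypothetical names invented here, and the in-memory map stands in for whatever persistence mechanism ProjectOvation actually uses. The one rule taken from the text is the version check: a save is rejected when the persisted version is greater than the version on the client's copy.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical exception raised when a client tries to save a stale copy.
final class StaleVersionException extends RuntimeException {
    StaleVersionException(String message) {
        super(message);
    }
}

// Hypothetical client-side copy of the large-cluster Product.
// It remembers the version at which it was read.
final class ProductSnapshot {
    final String productId;
    final int version;
    int backlogItemCount;
    int releaseCount;

    ProductSnapshot(String productId, int version,
                    int backlogItemCount, int releaseCount) {
        this.productId = productId;
        this.version = version;
        this.backlogItemCount = backlogItemCount;
        this.releaseCount = releaseCount;
    }
}

// Hypothetical in-memory stand-in for a store with optimistic concurrency.
final class ProductStore {
    private final Map<String, ProductSnapshot> rows = new HashMap<>();

    void insert(String productId) {
        rows.put(productId, new ProductSnapshot(productId, 1, 0, 0));
    }

    // Every reader receives its own copy, as each client session would.
    ProductSnapshot read(String productId) {
        ProductSnapshot row = rows.get(productId);
        return new ProductSnapshot(
            row.productId, row.version, row.backlogItemCount, row.releaseCount);
    }

    // The save succeeds only if no one else committed after this copy was read.
    void save(ProductSnapshot copy) {
        ProductSnapshot row = rows.get(copy.productId);
        if (row.version > copy.version) {
            throw new StaleVersionException(
                "stale copy at version " + copy.version
                    + ", persisted version is " + row.version);
        }
        rows.put(copy.productId, new ProductSnapshot(
            copy.productId, copy.version + 1,
            copy.backlogItemCount, copy.releaseCount));
    }

    int currentVersion(String productId) {
        return rows.get(productId).version;
    }
}

final class OptimisticConcurrencyDemo {
    public static void main(String[] args) {
        ProductStore store = new ProductStore();
        store.insert("p1");

        ProductSnapshot bill = store.read("p1"); // both read version 1
        ProductSnapshot joe = store.read("p1");

        bill.backlogItemCount++;                 // Bill plans a backlog item
        store.save(bill);                        // persisted version becomes 2

        joe.releaseCount++;                      // Joe schedules a release
        try {
            store.save(joe);                     // rejected: based on version 1
        } catch (StaleVersionException e) {
            System.out.println("Joe's commit failed: " + e.getMessage());
        }
    }
}
```

Note that Joe's change touches a different collection than Bill's, yet it is still rejected, because the single version number covers the entire Product cluster.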

These consistency problems came up with just two users. Add more users, and you have a really big problem. With Scrum, multiple users often make these kinds of overlapping modifications during the sprint planning meeting and in sprint execution. Failing all but one of their requests on an ongoing basis is completely unacceptable.

Nothing about planning a new backlog item should logically interfere with scheduling a new release! Why did Joe’s commit fail? At the heart of the issue, the large-cluster Aggregate was designed with false invariants in mind, not real business rules. These false invariants are artificial constraints imposed by developers. There are other ways for the team to prevent inappropriate removal without being arbitrarily restrictive. Besides causing transactional issues, the design also has performance and scalability drawbacks.

Second Attempt: Multiple Aggregates

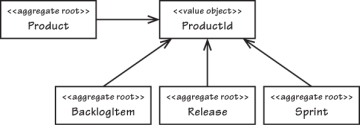

Now consider an alternative model as shown in Figure 10.2, in which there are four distinct Aggregates. Each of the dependencies is associated by inference using a common ProductId, which is the identity of Product, considered the parent of the other three.

Figure 10.2. Product and related concepts are modeled as separate Aggregate types.

Breaking the single large Aggregate into four will change some method contracts on Product. With the large-cluster Aggregate design the method signatures looked like this:

    public class Product ... {
        ...
        public void planBacklogItem(
            String aSummary, String aCategory,
            BacklogItemType aType, StoryPoints aStoryPoints) {
            ...
        }
        ...
        public void scheduleRelease(
            String aName, String aDescription,
            Date aBegins, Date anEnds) {
            ...
        }

        public void scheduleSprint(
            String aName, String aGoals,
            Date aBegins, Date anEnds) {
            ...
        }
        ...
    }

All of these methods are CQS commands [Fowler, CQS]; that is, they modify the state of the Product by adding the new element to a collection, so they have a void return type. With the multiple-Aggregate design, the signatures become:

    public class Product ... {
        ...
        public BacklogItem planBacklogItem(
            String aSummary, String aCategory,
            BacklogItemType aType, StoryPoints aStoryPoints) {
            ...
        }

        public Release scheduleRelease(
            String aName, String aDescription,
            Date aBegins, Date anEnds) {
            ...
        }

        public Sprint scheduleSprint(
            String aName, String aGoals,
            Date aBegins, Date anEnds) {
            ...
        }
        ...
    }

These redesigned methods have a CQS query contract and act as Factories (11); that is, each creates a new Aggregate instance and returns a reference to it. Now when a client wants to plan a backlog item, the transactional Application Service (14) must do the following:

    public class ProductBacklogItemService ... {
        ...
        @Transactional
        public void planProductBacklogItem(
            String aTenantId, String aProductId,
            String aSummary, String aCategory,
            String aBacklogItemType, String aStoryPoints) {

            Product product =
                productRepository.productOfId(
                    new TenantId(aTenantId),
                    new ProductId(aProductId));

            BacklogItem plannedBacklogItem =
                product.planBacklogItem(
                    aSummary,
                    aCategory,
                    BacklogItemType.valueOf(aBacklogItemType),
                    StoryPoints.valueOf(aStoryPoints));

            backlogItemRepository.add(plannedBacklogItem);
        }
        ...
    }

So we’ve solved the transaction failure issue by modeling it away. Any number of BacklogItem, Release, and Sprint instances can now be safely created by simultaneous user requests. That’s pretty simple.
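To see why the failures disappear, it may help to look at what a factory method on Product might do internally. The sketch below is a pared-down assumption, not the actual ProjectOvation code: the method signatures are shortened to a single parameter, the `version` field and `rename` method are hypothetical stand-ins for whatever ConcurrencySafeEntity provides, and tenant handling is omitted. The point it illustrates is that planning a backlog item or scheduling a release creates a new Aggregate that carries the ProductId, while the Product's own state, and therefore its version, is left alone.

```java
// Hypothetical, simplified identity Value Object.
final class ProductId {
    final String id;

    ProductId(String id) {
        this.id = id;
    }
}

// Separate Aggregate: refers to its parent Product by identity only.
final class BacklogItem {
    final ProductId productId;
    final String summary;

    BacklogItem(ProductId productId, String summary) {
        this.productId = productId;
        this.summary = summary;
    }
}

// Separate Aggregate: refers to its parent Product by identity only.
final class Release {
    final ProductId productId;
    final String name;

    Release(ProductId productId, String name) {
        this.productId = productId;
        this.name = name;
    }
}

final class Product {
    final ProductId productId;
    private String name;
    private int version = 1; // assumed: incremented only when Product itself changes

    Product(ProductId productId, String name) {
        this.productId = productId;
        this.name = name;
    }

    // Factory method: creates a new Aggregate and leaves Product unmodified.
    BacklogItem planBacklogItem(String summary) {
        return new BacklogItem(productId, summary);
    }

    // Factory method: creates a new Aggregate and leaves Product unmodified.
    Release scheduleRelease(String releaseName) {
        return new Release(productId, releaseName);
    }

    // A true command on Product: this is the kind of change that bumps the version.
    void rename(String newName) {
        this.name = newName;
        this.version++;
    }

    String name() {
        return name;
    }

    int version() {
        return version;
    }
}

final class MultipleAggregatesDemo {
    public static void main(String[] args) {
        Product product = new Product(new ProductId("p1"), "ProjectOvation");

        // Bill and Joe can both work from the same Product without conflict,
        // because neither call writes to Product.
        BacklogItem item = product.planBacklogItem("Support user login");
        Release release = product.scheduleRelease("1.0");

        System.out.println("Product version after both calls: " + product.version());
        System.out.println("Both children share product id: "
            + item.productId.id.equals(release.productId.id));
    }
}
```

Because each new BacklogItem or Release is persisted through its own Repository as its own Aggregate, a concurrent commit by another user has no Product version to collide with.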

However, even with clear transactional advantages, the four smaller Aggregates are less convenient from the perspective of client consumption. Perhaps instead we could tune the large Aggregate to eliminate the concurrency issues. By setting our Hibernate mapping optimistic-lock option to false, we make the transaction failure domino effect go away. There is no invariant on the total number of created BacklogItem, Release, or Sprint instances, so why not just allow the collections to grow unbounded and ignore these specific modifications on Product? What additional cost would there be for keeping the large-cluster Aggregate? The problem is that it could actually grow out of control. Before thoroughly examining why, let’s consider the most important modeling tip the SaaSOvation team needed.