BPF and the I/O Stack

This chapter demonstrates how BPF can trace all layers of the storage I/O stack. The tools trace the block I/O layer, the I/O scheduler, SCSI, and, as an example driver, nvme.

Disk I/O is a common source of performance issues because I/O latency to a heavily loaded disk can reach tens of milliseconds or more—orders of magnitude slower than the nanosecond or microsecond speed of CPU and memory operations. Analysis with BPF tools can help find ways to tune or eliminate this disk I/O, leading to some of the largest application performance wins.

The term disk I/O refers to any storage I/O type: rotational magnetic media, flash-based storage, and network storage. These can all be exposed in Linux in the same way, as storage devices, and analyzed using the same tools.

Between an application and a storage device is usually a file system. File systems employ caching, read ahead, buffering, and asynchronous I/O to avoid blocking applications on slow disk I/O. I therefore suggest that you begin your analysis at the file system, covered in Chapter 8.

Tracing tools have already become a staple for disk I/O analysis: I wrote the first popular disk I/O tracing tools, iosnoop(8) in 2004 and iotop(8) in 2005, which are now shipped with different OSes. I also developed the BPF versions, called biosnoop(8) and biotop(8), finally adding the long-missing “b” for block device I/O. These and other disk I/O analysis tools are covered in this chapter.

This chapter begins with the necessary background for disk I/O analysis, summarizing the I/O stack. I explore the questions that BPF can answer, and provide an overall strategy to follow. I then focus on tools, starting with traditional disk tools and then BPF tools, including a list of BPF one-liners. This chapter ends with optional exercises.

9.1 Background

This section covers disk fundamentals, BPF capabilities, and a suggested strategy for disk analysis.

9.1.1 Disk Fundamentals

Block I/O Stack

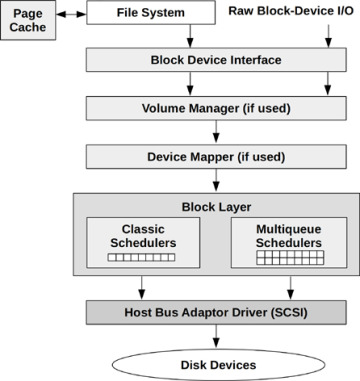

The main components of the Linux block I/O stack are shown in Figure 9-1.

FIGURE 9-1 Linux block I/O stack

The term block I/O refers to device access in blocks, traditionally 512-byte sectors. The block device interface originated from Unix. Linux has enhanced block I/O with the addition of schedulers for improving I/O performance, volume managers for grouping multiple devices, and a device mapper for creating virtual devices.

Internals

Later BPF tools will refer to some kernel types used by the I/O stack. To introduce them here: I/O is passed through the stack as type struct request (from include/linux/blkdev.h) and, for lower levels, as struct bio (from include/linux/blk_types.h).

rwbs

For tracing observability, the kernel provides a way to describe the type of each I/O using a character string named rwbs. This is defined in the kernel blk_fill_rwbs() function and uses the characters:

R: Read

W: Write

M: Metadata

S: Synchronous

A: Read-ahead

F: Flush or force unit access

D: Discard

E: Erase

N: None

The characters can be combined. For example, “WM” is for writes of metadata.
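As a minimal sketch of how such strings can be read, the following Python snippet expands an rwbs string into the descriptions listed above. The names `RWBS_FLAGS` and `decode_rwbs` are made up for this illustration and are not part of any kernel or BPF tool API.

```python
# Character meanings copied from the list above; the names here are illustrative only.
RWBS_FLAGS = {
    "R": "Read",
    "W": "Write",
    "M": "Metadata",
    "S": "Synchronous",
    "A": "Read-ahead",
    "F": "Flush or force unit access",
    "D": "Discard",
    "E": "Erase",
    "N": "None",
}


def decode_rwbs(rwbs):
    """Expand an rwbs string such as "WM" into a list of descriptions."""
    return [RWBS_FLAGS.get(ch, "Unknown (" + ch + ")") for ch in rwbs]


if __name__ == "__main__":
    for sample in ("R", "WM", "WS", "RA"):
        print(sample, "->", ", ".join(decode_rwbs(sample)))
```

A lookup table like this can be handy when post-processing trace output, where the rwbs column is otherwise easy to misread.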

I/O Schedulers

I/O is queued and scheduled in the block layer, either by classic schedulers (only present in Linux versions older than 5.0) or by the newer multi-queue schedulers. The classic schedulers are:

Noop: No scheduling (a no-operation)

Deadline: Enforce a latency deadline, useful for real-time systems

CFQ: The completely fair queueing scheduler, which allocates I/O time slices to processes, similar to CPU scheduling

A problem with the classic schedulers was their use of a single request queue, protected by a single lock, which became a performance bottleneck at high I/O rates. The multi-queue driver (blk-mq, added in Linux 3.13) solves this by using separate submission queues for each CPU, and multiple dispatch queues for the devices. This delivers better performance and lower latency for I/O versus classic schedulers, as requests can be processed in parallel and on the same CPU where the I/O was initiated. This was necessary to support flash memory-based devices and other device types capable of handling millions of IOPS [90].

Multi-queue schedulers available include:

None: No queueing

BFQ: The budget fair queueing scheduler, similar to CFQ, but allocates bandwidth as well as I/O time

mq-deadline: A blk-mq version of deadline

Kyber: A scheduler that adjusts read and write dispatch queue lengths based on performance, so that target read or write latencies can be met

The classic schedulers and the legacy I/O stack were removed in Linux 5.0. All schedulers are now multi-queue.
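To check which scheduler a device is using, Linux exposes a per-device sysfs file, `/sys/block/<device>/queue/scheduler`, whose contents list the available schedulers with the active one in square brackets. The following Python sketch parses that format. The helper names are hypothetical, and the sample string is only an example of what a system might report.

```python
def parse_scheduler(text):
    """Return (active, available) from a line like '[mq-deadline] kyber bfq none'."""
    active = None
    available = []
    for token in text.split():
        # The active scheduler is the one wrapped in square brackets.
        if token.startswith("[") and token.endswith("]"):
            token = token[1:-1]
            active = token
        available.append(token)
    return active, available


def read_scheduler(device):
    """Read and parse the scheduler file for a device name such as 'sda'."""
    with open("/sys/block/" + device + "/queue/scheduler") as f:
        return parse_scheduler(f.read())


if __name__ == "__main__":
    # Sample contents; the real list depends on the kernel configuration.
    print(parse_scheduler("[mq-deadline] kyber bfq none"))
```

On a live system, `read_scheduler("sda")` would return the same kind of tuple, assuming a device with that name exists.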

Disk I/O Performance

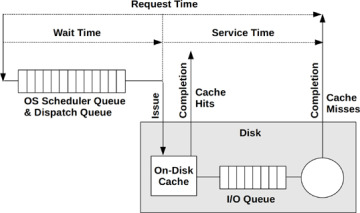

Figure 9-2 shows a disk I/O with operating system terminology.

FIGURE 9-2 Disk I/O

From the operating system, wait time is the time spent in the block layer scheduler queues and device dispatcher queues. Service time is the time from device issue to completion. This may include time spent waiting on an on-device queue. Request time is the overall time from when an I/O was inserted into the OS queues to its completion. The request time matters the most, as that is the time that applications must wait if I/O is synchronous.
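The relationship between these three times can be sketched in a few lines of Python. The class and field names are assumptions made for this illustration: each I/O is represented by three timestamps (queue insertion, device issue, and completion), and the three times are derived from them.

```python
from dataclasses import dataclass


@dataclass
class DiskIO:
    # Timestamps in microseconds; the field names are illustrative.
    inserted_us: int   # I/O inserted into the OS queues
    issued_us: int     # I/O issued to the device
    completed_us: int  # device reported completion

    @property
    def wait_us(self):
        """Time spent in the OS scheduler and dispatcher queues."""
        return self.issued_us - self.inserted_us

    @property
    def service_us(self):
        """Time from device issue to completion."""
        return self.completed_us - self.issued_us

    @property
    def request_us(self):
        """Overall time from queue insertion to completion."""
        return self.completed_us - self.inserted_us


if __name__ == "__main__":
    io = DiskIO(inserted_us=1000, issued_us=1400, completed_us=3900)
    print(io.wait_us, io.service_us, io.request_us)
```

Request time is always the sum of wait time and service time, which is why a device with fast service times can still deliver poor request times when the OS queues are long.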

A metric not included in this diagram is disk utilization. It may seem ideal for capacity planning: when a disk approaches 100% utilization, you may assume there is a performance problem. However, utilization is calculated by the OS as the time that disk was doing something, and does not account for virtual disks that may be backed by multiple devices, or on-disk queues. This can make the disk utilization metric misleading in some situations, including when a disk at 90% may be able to accept much more than an extra 10% of workload. Utilization is still useful as a clue, and is a readily available metric. However, saturation metrics, such as time spent waiting, are better measures of disk performance problems.
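A small Python sketch shows how this can mislead. It treats utilization as the fraction of an interval during which at least one I/O was in flight, which is a simplification of how an OS accounts for busy time. The function name and the sample intervals are made up for illustration.

```python
def busy_fraction(intervals, window):
    """Fraction of `window` seconds during which at least one I/O was in flight.

    `intervals` is a list of (start, end) times in seconds; overlaps are merged.
    """
    busy = 0.0
    current_start = current_end = None
    for start, end in sorted(intervals):
        if current_end is None or start > current_end:
            # A gap: close the previous busy period and start a new one.
            if current_end is not None:
                busy += current_end - current_start
            current_start, current_end = start, end
        else:
            # Overlapping or adjacent I/O extends the current busy period.
            current_end = max(current_end, end)
    if current_end is not None:
        busy += current_end - current_start
    return busy / window


if __name__ == "__main__":
    device_a = [(0.0, 0.5)]   # backing device A busy for the first half second
    device_b = [(0.5, 1.0)]   # backing device B busy for the second half second
    print(busy_fraction(device_a, 1.0))             # each backing device: 0.5
    print(busy_fraction(device_a + device_b, 1.0))  # the virtual disk: 1.0
```

In this made-up case the virtual disk reports 100% utilization while each backing device is only half busy, so the virtual disk could likely accept more work.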

9.1.2 BPF Capabilities

Traditional performance tools provide some insight for storage I/O, including IOPS rates, average latency and queue lengths, and I/O by process. These traditional tools are summarized in the next section.

BPF tracing tools can provide additional insight for disk activity, answering:

What are the disk I/O requests? What type, how many, and what I/O size?

What were the request times? Queued times?

Were there latency outliers?

Is the latency distribution multi-modal?

Were there any disk errors?

What SCSI commands were sent?

Were there any timeouts?

To answer these, trace I/O throughout the block I/O stack.

Event Sources

Table 9-1 lists the event sources for instrumenting disk I/O.

Table 9-1 Event Sources for Instrumenting Disk I/O

| Event Type | Event Source |
| --- | --- |
| Block interface and block layer I/O | block tracepoints, kprobes |
| I/O scheduler events | kprobes |
| SCSI I/O | scsi tracepoints, kprobes |
| Device driver I/O | kprobes |

These provide visibility from the block I/O interface down to the device driver.

As an example event, here are the arguments to block:block_rq_issue, which fires when a block I/O is issued to a device:

    # bpftrace -lv tracepoint:block:block_rq_issue
    tracepoint:block:block_rq_issue
        dev_t dev;
        sector_t sector;
        unsigned int nr_sector;
        unsigned int bytes;
        char rwbs[8];
        char comm[16];
        __data_loc char[] cmd;
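When these arguments are printed as raw integers, two of them benefit from decoding. The Python sketch below assumes the kernel-internal `dev_t` layout (major number in the upper bits, a 20-bit minor number in the lower bits) and the 512-byte sector unit used by the block layer. The function names are illustrative.

```python
MINORBITS = 20     # kernel-internal dev_t: minor number occupies the low 20 bits
SECTOR_SIZE = 512  # block layer sectors are counted in 512-byte units


def decode_dev(dev):
    """Split a kernel dev_t integer into (major, minor)."""
    return dev >> MINORBITS, dev & ((1 << MINORBITS) - 1)


def sector_to_byte_offset(sector):
    """Convert a starting sector number into a byte offset on the device."""
    return sector * SECTOR_SIZE


if __name__ == "__main__":
    major, minor = decode_dev(8388608)  # sample value: (8 << 20) | 0
    print("%d:%d" % (major, minor))
    print(sector_to_byte_offset(2048))
```

The resulting major:minor pair can be matched against the device numbers shown by tools such as `lsblk` or in `/proc/partitions`.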

Questions such as “what are the I/O sizes for requests?” can be answered via a one-liner using this tracepoint:

bpftrace -e 'tracepoint:block:block_rq_issue { @bytes = hist(args->bytes); }'
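To show what a power-of-two histogram summarizes, here is a rough Python sketch of that style of bucketing applied to a list of I/O sizes. It illustrates the idea and is not bpftrace's implementation. The function names and sample sizes are made up.

```python
from collections import Counter


def log2_bucket(value):
    """Return the [low, high) power-of-two range that contains `value`."""
    if value < 1:
        return (0, 1)
    low = 1 << (value.bit_length() - 1)  # largest power of two <= value
    return (low, low * 2)


def size_histogram(sizes):
    """Count how many sizes fall into each power-of-two bucket."""
    return Counter(log2_bucket(size) for size in sizes)


if __name__ == "__main__":
    sample_sizes = [4096, 4096, 5120, 8192, 16384, 131072]  # made-up byte counts
    for (low, high), count in sorted(size_histogram(sample_sizes).items()):
        print("[%d, %d)" % (low, high), "#" * count)
```

Bucketing by powers of two keeps the summary compact while still separating, for example, 4 KB I/O from 128 KB I/O.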

Combinations of tracepoints allow the time between events to be measured.
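The pairing logic behind such measurements can be sketched in Python: save a timestamp when the first event fires, keyed by something that identifies the I/O, and subtract it when the second event fires. Keying by device and sector is an assumption made for this sketch, as are the event tuple format and the function name.

```python
def issue_to_complete_latencies(events):
    """Pair 'issue' and 'complete' events and return per-I/O latencies.

    `events` is an iterable of (timestamp_us, kind, dev, sector) tuples in
    time order. Returns a list of ((dev, sector), latency_us).
    """
    start = {}
    latencies = []
    for timestamp_us, kind, dev, sector in events:
        key = (dev, sector)
        if kind == "issue":
            start[key] = timestamp_us
        elif kind == "complete" and key in start:
            # Remove the saved timestamp so the map does not grow unbounded.
            latencies.append((key, timestamp_us - start.pop(key)))
        # Completions with no recorded issue (tracing began mid-I/O) are skipped.
    return latencies


if __name__ == "__main__":
    sample_events = [
        (100, "issue", 8388608, 2048),
        (150, "issue", 8388608, 4096),
        (900, "complete", 8388608, 2048),
        (1200, "complete", 8388608, 4096),
    ]
    for key, latency_us in issue_to_complete_latencies(sample_events):
        print(key, latency_us, "us")
```

The same save-then-subtract pattern applies to any pair of events, such as queue insertion and device issue for measuring queued time.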

9.1.3 Strategy

If you are new to disk I/O analysis, here is a suggested overall strategy that you can follow. The next sections explain these tools in more detail.

1. For application performance issues, begin with file system analysis, covered in Chapter 8.

2. Check basic disk metrics: request times, IOPS, and utilization (e.g., iostat(1)). Look for high utilization (which is a clue) and higher-than-normal request times (latency) and IOPS.

3. If you are unfamiliar with what IOPS rates or latencies are normal, use a microbenchmark tool such as fio(1) on an idle system with some known workloads and run iostat(1) to examine them.

4. Trace block I/O latency distributions and check for multi-modal distributions and latency outliers (e.g., using BCC biolatency(8)).

5. Trace individual block I/O and look for patterns such as reads queueing behind writes (you can use BCC biosnoop(8)).

6. Use other tools and one-liners from this chapter.

To explain that first step some more: if you begin with disk I/O tools, you may quickly identify cases of high latency, but the question then becomes: how much does this matter? I/O may be asynchronous to the application. If so, that’s interesting to analyze, but for different reasons: understanding contention with other synchronous I/O, and device capacity planning.