Exploring the Code of the Survey Development Suite

- Survey Repository

- Survey Development Studio

- PocketSurvey

- Moving On

Chapter 3: Exploring the Code of the Survey Development Suite

At this point you're probably pretty eager to get into the code. We've covered the basics of what the Survey Development Suite does and how it can be used. We haven't covered how the application actually accomplishes all the things we've seen it doing. This chapter covers the following:

-

Infrastructure services

-

Error handling

-

Survey Repository

-

Survey Development Studio

-

PocketSurvey

Before taking a look at the application itself, we'll take a look at some of the core code that provides the basic foundation on which all the other code in the application is written.

The Survey Development Suite is divided into three parts: Survey Repository, which functions as a Web service back end, Survey Development Studio, which is a Windows Forms application, and PocketSurvey, which is a Pocket PC application for conducting surveys in a mobile situation. Each of these three parts is a separate, fully functioning application that also communicates with other pieces of the software suite. In this regard, we consider the collection of the three applications to be a single, enterprise-class application suite.

This chapter walks you through the process used to design the various pieces of the application and takes you on a tour of some of the most interesting highlights of the code that makes this application possible.

To make things as easy to grasp as possible, we'll start at the back end, with Survey Repository, and then we'll cover the Survey Development Studio Windows application. We'll finish up with coverage of the Pocket PC application.

This chapter takes a step-by-step approach to examining the code of the Survey Development Suite. People learn new technologies and techniques in very different ways. Some people prefer to be instructed without knowing anything about the new technology. Other people prefer to dive head-first into the code, gather a list of questions about the code, and then get more information. If the latter applies to you, you might want to open the Visual Studio .NET project that is on this book's CD and explore all the various projects within it. Spend an hour or so looking at the code and figuring out how everything fits together. When you're done, come back to this chapter and read it through from start to finish to fill in any gaps in your understanding of the code.

Survey Repository

As you know, Survey Repository is a Web service that provides a warehousing facility for survey profiles and survey runs. It is made up of two separate Web services (.asmx files): a login service and the repository service itself. This separation allows us to communicate with the login service via SSL and to keep the repository service communications clear for performance reasons.

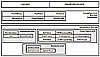

Take a look at the architectural diagram in Figure 3.1.

Figure

3.1 The logical structure of the Survey Repository Web service.

Figure

3.1 The logical structure of the Survey Repository Web service.

At the top level are the two service files Login.asmx and RepositoryService.asmx. These are the entry points into the Web service provided by Survey Repository. When these entry points are used, they in turn invoke any number of business classes represented by the second large box in Figure 3.1. These business components are used as an interface to the Object-Relational Mapping (ORM) mapper, which is contained in the lowest level, the infrastructure services.

Infrastructure Services

Whenever I sit down to come up with a design for a new Web site, one of the first things I do is come up with a list of all the services that the pages are going to need. Invariably, I come up with the same set of services every time, as virtually every data-driven Web application requires the same things:

Security

Tracing, monitoring, and instrumentation

Data access

Application-specific services

If you build applications with an architecture-first model, you will find that not only will your applications be quicker and easier to code but you may be able to reuse a lot of your infrastructure code for the next application.

For example, the data access services that I used for this application are an evolution of various data abstraction methods that I have been using for over two years. Each time I get to reuse the code, I find room for enhancements and improvements.

Code Tour:The SecurityServices Project

The SecurityServices project should be available within the SurveyV1 solution. The purpose of this library is to abstract access to the security system used by the application. When you do this, you are in a better position to grow or change the security model in the future without affecting the entire application.

SecurityServices provides the classes listed in Table 3.1 (as well as some additional code that you'll see when we take a closer look).

SAMS.Survey.SecurityServices Classes

|

Class Name |

Description |

|

SecurityHelper |

This class provides several static methods that assist in performing standard security tasks, such as storing and retrieving authentication tokens. |

|

User |

This class is a business class that represents a single user. It is handed to an ObjectRelator class for interfacing with the database. |

|

PermissionList |

This class is a business class. It is used to obtain a list of permissions from the database and to provide that same list to Web service and/or GUI components. |

Code Tour: The SecurityHelper Class

When designing a class that abstracts security tasks, you need to take the time to figure out exactly what tasks you will need to have performed and where those tasks should be performed.

The following are two of the most interesting methods of the SecurityHelper class:

SetIdentityContext—This method stores user information in the CallContext class, making it available to subsequent method calls.

GetUserIdFromToken—This method takes a string that contains an authentication token and returns the corresponding user ID, if there is one.

For the moment, let's focus on the last method, GetUserIdFromToken. In order to understand what this method does, you need to understand how the security system works for the Web service.

I needed a way to make sure that the password and username information remained secure, but I didn't want to incur the overhead of using SSL for every single transaction with the Web service. Knowing this, I couldn't very well pass the username and password with each and every request.

I didn't want to enable session state because that could lead down a road from which I couldn't return: mostly because if someone left his or her Survey Development Studio application running overnight and then clicked somewhere the next morning, the request would fail with unpredictable results due to that user's session having expired.

Although I could have used Microsoft Web Services Enhancements 2.0 to get access to some of the most robust security features that can be used within Web services, I didn't feel the need to use it. I wanted a very simple solution. The target audience for this application is a network site managed by a company that is involved in producing and conducting opinion surveys. For the most part, it didn't need all the extras included in Web Services Enhancements 2.0.

The solution I ended up with was the concept of tokens. You may have seen this concept if you have looked at some of the early prototypes for the Favorites Service that was produced by Microsoft. It was a sample application produced for Cold Rooster Consulting. You can find the documentation and a working demo of this sample at http://www.coldrooster.com.

A token, in our daily lives, is some piece of proof or evidence. In New York or Boston, a token might be proof that you are allowed to get onto the subway system. A token in the sense of a Web service is a piece of evidence that verifies that a given user is allowed to access the Web service.

The way a token works is fairly simple, as illustrated in Figure 3.2.

Figure

3.2 The login and token assignment process.

Figure

3.2 The login and token assignment process.

As you can see in Figure 3.2, the client application first makes contact with the login Web service by providing a set of credentials that includes a username and password. If this were a more complex application, a client might be required to provide a CD or license key to prove that the client application itself is legitimate. After the credentials have been validated, a token (in this case, a GUID) is returned to the client. The client is then able to pass that token to the repository Web service to gain access to the various methods exposed by that service. If a call to that service doesn't contain a valid security token, the client performing the action receives an error.

Behind the scenes, a lot is going on. First, when a set of credentials is received by Login.asmx (you will see the code for this later in this chapter), a call is made to the database to validate the username and password combination. If it is valid, a token (or GUID) is generated. That generated GUID is then stored in the ASP.NET application cache for some period (this application defaults to one hour), along with information on which user that GUID belongs to.

When a request comes in to the repository Web service, a check is made against the ASP.NET application cache for the supplied token GUID. If the token exists in the cache, the call is allowed to proceed as normal. Otherwise, the call is rejected. Listing 3.1 shows the code that obtains the valid user ID by looking up the authentication token in the ASP.NET application cache.

Listing 3.1 SurveyV1\SecurityServices\SecurityHelper.cs The GetUserIdFromToken and SetIdentityContext Methods

public static int GetUserIdFromToken( string userToken )

{

System.Web.Caching.Cache cache = System.Web.HttpContext.Current.Cache;

if (cache != null)

{

string securityKey = "Security-" + userToken;

if (cache[securityKey] == null)

return -1;

PermissionList pl = (PermissionList)cache[securityKey];

return pl.UserId;

}

else

{

return -1;

}

}

public static void SetIdentityContext( int userId )

{

IdentityContext ic = new IdentityContext();

ic.UserKey = userId.ToString();

// if we ever need to have more information about the user contained in the method

// execution chain, we can just add it to the identity context.

ic.DisplayName = userId.ToString();

CallContext.SetData("SAMS_SURVEY_IDENTITY", ic );

}

You can see in Listing 3.1 that the key index used for the application cache contains the prefix Security. This is not completely necessary because GUIDs are guaranteed to be completely unique and never overlap any other code using the same cache. However, if someone is using an administrative tool to look at the contents of the application cache and that person sees hundreds of seemingly random GUIDs lying around, he or she might not know what they're for. With the method used in Listing 3.1, anyone examining the cache should immediately know the purpose of the GUIDs.

Also worth noting is that we're not simply storing the user's ID in the cache. We're actually storing the list of that user's permissions. Whenever a user is authenticated against the system, a call is made that obtains all of the user's security privileges. Those privileges are then placed in the cache, to be associated with the authentication token. This enables every single method of any Web service within this AppDomain class to be able to know what a given user can and cannot do, without making additional database calls.

Call Contexts

In Listing 3.1, you might have noticed the SetIdentityContext method. This method creates an instance of the IdentityContext class and then places it in the call context with the following statement:

CallContext.SetData("SAMS_SURVEY_IDENTITY", ic );

In order to get access to the CallContext class, you need to reference the System.Runtime.Remoting.Messaging namespace, which contains the code that makes call contexts work.

What is a call context? You can think of it as a stack that sits on top of a chain of execution. The first time you invoke a method within the .NET Framework, a call context is created. This context is attached to, and available from, every subsequent method call made after the context is created. This allows the remoting infrastructure to pass additional information between process boundaries. However, it has a handy side effect of working on nonremote method execution as well.

After you place data in a call context, the data becomes available to every method called thereafter, as well as to methods called by those methods, and so on throughout a deep level of recursion.

To place data in a call context, you use the SetData method. This allows you to place an object of any data type into a named slot that is represented by a string. In the case of the code in Listing 3.1, the named slot is "SAMS_SURVEY_IDENTITY", but you are free to use any name you like. The only caveat is that you need to make sure there is a good chance that the name is unique. If your application is making use of another API that utilizes call contexts, the last thing you want to do is place your data in a slot expected by the API.

To retrieve data from the call context, you use the GetData method. This method returns an instance of an object of varying data type. It is up to you, the programmer, to know ahead of time the type of data that you placed in the call context.

Although using a call context can be handy, it can also have some serious drawbacks. The main drawback of call contexts is that their data is propagated each time a method is invoked. The more methods that are invoked, the more times data must be passed along the stack. If you rely too heavily on call contexts, you might end up degrading the performance of your applications.

A good rule of thumb to use with call contexts is to use them only when you know that the information needs to be available to any method, and the information you are passing along the stack has a very small memory footprint, such as a single integer or a short string.

Code Tour: The User Class

The User class is a business object that serves as a container for user-related information. In addition to holding information about a given user, it provides various methods that are applicable to users, such as Validate, Create, Update, and Delete. This class makes use of the ORM tools contained within the data access layer (you will be seeing those later in this chapter). Listing 3.2 contains the User class. Before you see the code for the User class, take a look at Tables 3.2 and 3.3, which list the properties and methods of the class.

User Class Methods

|

Method |

Description |

|

Validate |

This method is used to determine the validity of the current username and password combination. If the user is valid, a value greater than zero is returned. |

|

Create |

This method uses the values in the FullName, UserName, and Password properties to create a new user. |

|

Delete |

This method deletes the user indicated by the current value of the UserId property. |

|

Update |

This method updates the current user indicated by the UserId property with the values contained in the FullName, UserName, and Password properties. |

|

Load |

This method loads a specific user indicated by the UserId property. The results of the load operation populate the UserName, FullName, and Password properties. |

User Class Properties

|

Property |

Description |

|

UserId |

Indicates the current value of the user's ID. |

|

FullName |

Gets or sets the user's full name. |

|

UserName |

Gets or sets the login name of the user. |

|

Password |

Gets or sets the user's password. |

Listing 3.2 SurveyV1\SecurityServices\User.cs The User Class

using System;

using System.Data;

using SAMS.Survey.Core.MonitorServices;

using SAMS.Survey.Core.ObjectRelationalDb;

namespace SAMS.Survey.SecurityServices

{

public class User : IRelatable

{

private int userId;

private string fullName;

private string userName;

private string password;

public User()

{

}

public int Validate()

{

SqlRelator sr = new SqlRelator();

sr.Relate( this, "Validate" );

return this.UserId;

}

public void Create()

{

SqlRelator sr = new SqlRelator();

sr.Relate( this, RelationType.Insert );

}

public void Delete()

{

SqlRelator sr = new SqlRelator();

sr.Relate( this, RelationType.Delete );

}

public void Update()

{

SqlRelator sr = new SqlRelator();

sr.Relate( this, RelationType.Update );

}

}

}

Note that I've stripped from Listing 3.2 the code that contains the public property definitions for the private members, as it is just straightforward get and set accessors.

A few things about the User class should stand out right away when you look at Listing 3.2. The first is that it implements a marker interface called IRelatable. This interface tells the ORM mapper that the class is eligible for interfacing with the database through an ORM. It is actually nothing more than an empty marker. While we could use an abstract base class or even a custom code attribute to perform such marking, the interface allows us to implement our own hierarchy if we chose while still maintaining the hierarchy. Also, testing for the implementation of an interface on a class instance is much faster than using reflection to query the list of custom attributes on a class.

The other thing that stands out in Listing 3.2 is that the class makes no use of stored procedures. In fact, it has absolutely no built-in knowledge of how to persist itself. As I'll discuss later in this chapter, this is a key point in true object-ORM. Instead of invoking stored procedures directly, the object simply tells the object relator "relate me to the database, using this mapping." The mapping is indicated by the RelationType enumeration.

Code Tour: The PermissionList Class

Just like User, PermissionList is a business class that makes use of the ORM mapper to communicate with the database. Its specific purpose is to retrieve the list of permissions associated with a given user.

Listing 3.3 contains the PermissionList class definition.

Listing 3.3 SurveyV1\SecurityServices\PermissionList.cs The PermissionList Class

using System;

using System.Data;

using System.Reflection;

using SAMS.Survey.Core.MonitorServices;

using SAMS.Survey.Core.ObjectRelationalDb;

using Microsoft.ApplicationBlocks.ExceptionManagement;

namespace SAMS.Survey.SecurityServices

{

[Serializable()]

public class PermissionList : MarshalByRefObject, IRelatableSet

{

private DataSet internalData;

private int userId;

public PermissionList()

{

internalData = new DataSet();

}

public DataSet ResultSet

{

get

{

return internalData;

}

set

{

internalData = value;

}

}

public int UserId

{

get

{

return userId;

}

set

{

userId = value;

}

}

public void FetchPermissions( int userId )

{

this.userId = userId;

SqlRelator sr = new SqlRelator();

sr.Relate( this, RelationType.Select );

SystemTrace.TraceVerbose("Selected user {0} permissions, Tables returned: {1}",

userId, internalData.Tables.Count);

}

public bool HasPermission( int permissionId, int accessMode )

{

SystemTrace.MethodStart( MethodBase.GetCurrentMethod() );

if (internalData.Tables.Count == 0)

{

ExceptionManager.Publish(

new InvalidOperationException(

"Cannot check for unfetched permissions.") );

}

else

{

DataTable perms = internalData.Tables[0];

DataRow[] permission =

perms.Select("PermissionId=" + permissionId.ToString());

if ((permission.Length ==0) || (permission == null))

{

SystemTrace.TraceVerbose(

"Permission check failed due to missing " +

"permission {0}, total rows available : {1}",

permissionId, perms.Rows.Count);

return false;

}

else

{

return SecurityHelper.CheckAccess(

(int)permission[0]["Access"],

accessMode );

}

}

return false;

}

}

}

There is some code in the PermissionList class that you haven't yet seen. Some of the methods belong to the SystemTrace class that we'll be discussing in the next section of this chapter. Those methods are all about tracing and making the job of debugging the application easier.

The FetchPermissions method works fairly simply. It relates the current instance of PermissionList to the database, using the Select ORM. This obtains all the permissions that the current user (indicated by the UserId property) has.

The HasPermission method is a bit more complex than FetchPermissions. It uses the internalData object, which is a data set, to look up all the permissions available to the user. If one of those permissions is the permission indicated by the argument, then the user has that permission.

There is a catch, however. Our system not only supports the notion of a yes/no type of permission, but it also supports the notion of access modes. For example, it is possible for a user to have a permission called Survey Profiles, but that user may only have the Read access mode. This person then has read-only access to the profiles contained within the repository. However, another person might have the same permission, but with a higher access level. With this system in place, administrators have the ability to fine-tune what each user can perform. Because our system is designed for role-based security, it is easy to manage as well as flexible.

The MonitorServices Project

The MonitorServices project is a project that contains classes that provide for unified tracing, easier debugging, and general monitoring-related utilities, such as an IdentityContext class. We will take a closer look at the MonitorServices project later in this chapter, when we take a tour of the unified tracing system in the application.

The ObjectRelationalDb Project

The ObjectRelationalDb project contains, as I'm sure you guessed, all the classes required to create ORM and to use these mappings to perform database operations in a seamless, transparent way that makes writing business objects a snap. We'll discuss this project in more detail in the code tour "A Look at the ORM Schema," after some discussion on the concepts surrounding ORM.

Data Access with ORM

In the following sections, we'll take a look at accessing data using an object-relational model. We'll compare this model to the standard procedural model for data access and talk about the benefits and drawbacks of using ORM.

What Is ORM?

When most programmers think about data access, they think about stored procedures, parameters, and SQL statements. They think about how to write code that wraps around a stored procedure or around SQL statements so that the tedium of accessing the database is taken away, leaving the programmers free to think about the overall business model of the application.

One particular train of thought on the subject of data access deals with the idea of ORM. This concept, as illustrated in Figure 3.3, deals with the mapping of information contained in the world of classes, instances, and objects to information contained in the world of a relational database, consisting of tables, columns, and rows.

Figure

3.3 The SurveyProfile typed data set.

Figure

3.3 The SurveyProfile typed data set.

In its purest form, ORM implies that class instances are mapped to rows within database tables. Columns within those tables are mapped to public fields or properties on the class instance. When more than one row of data results from a query operation, the set of rows is then mapped into a collection of objects, and each object in the collection is an object that maps directly to one and only one row within the table.

As with all good programming theories, with ORM there is often a balance between the pure theory behind the solution and the practicality of implementing the solution. Often, implementations of ORM make certain sacrifices in pure OOP design in order to achieve some gains in performance, usability, or flexibility.

For example, an implementation from Microsoft that is part of a technical preview of a suite of tools called ObjectSpaces does an excellent job of mapping class instances to tables, columns, and rows. However, it only works with SQL Server Yukon (take a look at http://msdn.microsoft.com/library/default.asp?url=/library/en-us/dnsq190/html/sql_ovyukondev.asp for an overview of Yukon and its impact on developers) and doesn't currently support stored procedures. This is one of the tradeoffs made in order to place an implementation as close to the pure theory of ORM as possible. Microsoft may indeed add stored procedure support for its ObjectSpaces library in the future; in this case, you'll be well versed in the concepts involved, having looked at the code contained in this section of the book.

Why Use ORM?

If there are inherent performance concerns with building a system that implements the pure vision of ORM, why should we bother using it? It has quite a few benefits, as described in the following sections.

Provider Agnostic

In a true implementation of ORM, it should be possible to create business objects that have absolutely no embedded information about how to persist themselves, other than the fact that they can be persisted. For example, a non-ORM business object might have a Delete method. This Delete method might create an instance of a Command object, invoke the command, and then return some result. The problem with this is that if the database information changes, the Delete method could become completely invalid. If you decouple the business object from the means by which that object is persisted to some data source, the business objects can be freely versioned without worrying about the database. In addition, the database information can be changed easily without negatively affecting the entire collection of business objects in the application.

Declarative Persistence

Another of the incredible benefits of using an ORM model is that you can simply declare the mapping. Instead of writing 20 lines of code to create a set of command parameters, instantiate a connection, open the connection, and so on, you can simply declare, through some means, sufficient information to automatically map instance data to relational data. Some implementations use proprietary data formats (for example, J2EE's Container-Managed Persistence [CMP] uses meta-data files that are contained in .jar archives), and others use standard XML data to list the mapping information (for example, our implementation, Microsoft's ObjectSpaces implementation).

Code Simplicity

A side effect of storing all the persistence information in some meta-data mapping (XML, .jar file, and so on) is that the code required to actually perform a persistence operation is minimal. In general, the pattern is to create an instance of the mapper (or whatever tool you're using). When you have an instance of a mapper, you simply pass to the mapper the instance of the object you want to persist, along with some helper information, and the operation is performed for you.

If you're an architect building a framework on which a team of junior programmers will be building an application, this kind of scenario can be a lifesaver. The simpler it is for your programmers to write fundamental, core code for the application, the less chance there is of bugs creeping up.

Scalability

If ORM is implemented properly, you can actually change everything about your back-end database without ever having to recompile your business or data-tier objects. You might be thinking, "I never change columns or tables after I release a product." That might be true, but you're in the minority. The reality is that things change. You might upgrade your database server from Oracle 8 to Oracle 9, from SQL 7 to SQL 2000. This upgrade might cause some subtle change that breaks one of your stored procedure invocations. If all you have to do is modify an XML file or just recompile the assembly that contains the affected object, your life will be a lot easier.

The implementation of ORM that I've gone with for this book is a little bit different than the pure concept of what ORM is. Instead of mapping instance fields to table columns, I've decided to map instance fields to stored procedure parameters. This supports my ORM implementation as the code looks like any other ORM implementation, and I can still use stored procedures to give the application the most performance and flexibility possible.

ORM Versus CMP

Aside from being different acronyms, what exactly do ORM and CMP mean, and what is the difference between the two? ORM is pretty much exactly what it sounds like: You have an instance of an object, and the database access is performed by relating individual pieces of that object to the database in some fashion. Some implementations, such as the one used in this book, relate public class members to stored procedure parameters. Other implementations, such as Microsoft's ObjectSpaces, relate individual objects and their public members to SQL statements that are then executed on the database.

CMP differs from ORM in some minor ways. The concept of CMP involves an object instance and a container. The container is an abstraction of the underlying physical data storage medium. This container could be an abstraction of a relational database, but it could also be an abstraction of an XML file, a folder containing multiple files on disk, a Microsoft message queue, or even a Web service.

The two concepts ORM and CMP both have the same core idea: that the business or data object that is being persisted or related has no direct link to the underlying storage medium. The object instance doesn't know if it is going to be stored in a database, stored in a file on disk, or transmitted via SOAP to a Web service. Both CMP and ORM rely on this concept as a foundational aspect of their respective design patterns.

Where the two ideas begin to diverge is in the concept of how communication with the data source takes place. The traditional ORM model maps a single instance of an object to stored procedure parameters or to a SQL statement that is then executed. With CMP, the "container" model is more prevalent; an object instance is placed into a container, and that's all that the programmer ever sees. The act of inserting an object into a container triggers some functionality on the container that will determine what kind of persistence operation to perform. The data contained on the object combined with meta-data stored somewhere provides information about how to complete the persistence operation.

In reality, there are almost no pure implementations of either ORM or CMP. The Java implementation of CMP requires that the meta-data for persistence operations be stored in a .jar file on the server. Microsoft's ObjectSpaces uses attributes and meta-data to convert an object instance into a SQL statement that can then be executed against the database server. The implementation of ORM in this book uses XML meta-data stored embedded in assemblies; this meta-data is used to relate public member data to stored procedure parameters to interact with the database.

Code Tour: A Look at the ORM Schema

I've experimented with quite a few different variations on CMP and ORM. A previous version of CMP that I used had the mapping data stored in an XML file on disk. This file was opened upon application startup and was used to build an in-memory cache of mapping data. This cache was then used to dynamically create stored procedures, as needed by the application.

The problem I found with this approach is that the single XML file could get extremely large, especially when I had dozens of different assemblies all using this file for their own persistence information.

To make things easier to organize, I experimented with using one XML file per assembly. This made things easier to read, but I ended up with a stack of XML files sitting in my Web application's root directory.

I didn't feel comfortable with the plain-text files sitting in the application directory. The version I've implemented for this book actually embeds the ORM XML file directly in the assembly as a resource. This resource is then read via reflection and used to create the appropriate stored procedure whenever the ORM mapper is invoked.

Listing 3.4 contains a sample of an ORM that exists in the Survey Development Suite.

Listing 3.4 SurveyV1\SecurityServices\ORM.xml The SecurityServices Assembly's ORM.xml File

<relationalmapping>

<type fullname="SAMS.Survey.SecurityServices.User">

<commandmap storedproc="SVY_Validate_User" multiple="false" type="Validate">

<propertymap member="UserName"

dbtype="Varchar"

dbsize="8" parameter="@UserName"

direction="Input"></propertymap>

<propertymap member="Password"

dbtype="Varchar"

dbsize="8" parameter="@Password"

direction="Input"></propertymap>

<propertymap member="UserId" dbtype="Int"

dbsize="4" parameter="@UserId"

direction="Output"></propertymap>

<propertymap member="FullName" dbtype="Varchar"

dbsize="40" parameter="@FullName"

direction="Output"></propertymap>

</commandmap>

</type>

</relationalmapping>

The first important element here is the type element. This element is the root of a single ORM. It begins the mapping from the instance of a .NET Framework type to multiple stored procedures.

For each type, there can be an unlimited number of stored procedures to invoke. By default, the system has an enumeration for the four CRUD (create, retrieve, update, delete) operations: Select, Insert, Update, and Delete. I've named them Select, Insert, Update, and Delete because these names closely resemble the SQL statements that represent the kinds of operation they perform.

The mapping between a .NET Framework type (which can be any type that implements either IRelatable or any interface that inherits from it) and a stored procedure is defined by the <commandmap> element. The type attribute on the <commandmap> element indicates the kind of relational operation. It can be something custom, as in the preceding Validate command mapping, or it can be one of the pre-recognized keywords, such as Select or Update.

Beneath the <commandmap> element is the <propertymap> element. This element declares a mapping between a particular field on the given type and a parameter on the stored procedure. The ORM system developed for this book supports both input and output parameters, but the property or field on the object instance must be public; otherwise, the attempt to reflect data from that property will fail and cause undesirable results when communicating with the database.

Code Tour: The ObjectRelator Class

The ObjectRelator class is an abstract class that provides the basic framework for building your own ObjectRelator class. It defines two methods:

public virtual void Relate( IRelatable relatee, RelationType relationType ) public virtual void Relate( IRelatable relatee, string relationKey )

These two overloads provide the basis for all ORM in the entire system. Any class that wishes to be an ObjectRelator class must implement these two methods. The methods allow us to relate an instance object to the database either using one of the four CRUD operations or through some custom-defined operation that corresponds to the type attribute on the <commandMap> element in the ORM.xml embedded resource.

The abstract base class ObjectRelator is key to implementing a provider-agnostic implementation of ORM.

Code Tour: The SqlRelator Class

SqlRelator is an implementation of the abstract class ObjectRelator. Although the implementation I wrote is specific to Microsoft SQL Server, the infrastructure doesn't limit the data support to just SQL. With very little extra code, SqlRelator could be adapted to an OracleRelator class (although some specific code regarding CLOBs would have to be written).

The code for SqlRelator is arguably some of the most complex code in the entire Survey Development Suite, mostly because of its heavy reliance on reflection. If you haven't used reflection before or aren't all that familiar with it, you might want to take a moment to brush up on the basics of reflection with the tutorials at http://samples.gotdotnet.com/quickstart/howto/doc/GetTypes.aspx, or you can consult the MSDN documentation at http://msdn.microsoft.com/library/default.asp?url=/library/en-us/cpguide/html/cpconReflectionOverview.asp.

Listing 3.5 shows a protected helper method that is provided by the ObjectRelator class. This method is responsible for fetching a type map from the embedded ORM.xml file in a given type's assembly.

Listing 3.5 SurveyV1\ObjectRelationalDb\ObjectRelator.cs The FetchTypeMap Method

private ORMTypeMapping FetchTypeMap( IRelatable relatee )

{

SystemTrace.MethodStart( MethodBase.GetCurrentMethod() );

Type t = relatee.GetType();

Assembly sourceAssembly = t.Assembly;

string resourceName = t.Namespace + ".ORM.xml";

XmlDocument xDoc = new XmlDocument();

StreamReader xmlRaw = new StreamReader(

sourceAssembly.GetManifestResourceStream( resourceName ) );

xDoc.Load( xmlRaw );

string query = "//type[@fullname='" + t.FullName + "']";

XmlNode typeMapNode = xDoc.DocumentElement.SelectSingleNode( query );

if (typeMapNode != null )

{

ORMTypeMapping typeMap = new

ORMTypeMapping( typeMapNode );

return typeMap;

}

else

{

SystemTrace.TraceError("Failed to load type map for {0}", t.FullName);

ExceptionManager.Publish(new

NullReferenceException("Unable to fetch type map for " + t.FullName));

}

return null;

}

There are a couple interesting tricks going on here with reflection. Listing 3.5 all hinges on the basic fact that any given type within the .NET Framework knows from which assembly it was loaded. We use that information to get a handle on that assembly. With that, we can obtain resource streams from that assembly. The particular resource stream we're looking for is the ORM.xml file that is (we're hoping) embedded in the assembly.

When we have an XmlDocument instance, created from the ORM.xml file that we loaded from the assembly, we can look for a type mapping that matches the name of the type passed to this function. Finally, when we have the XmlElement element that contains the entire type mapping, we pass that as a constructor argument to the ORMTypeMapping class and return the newly constructed instance to the Relate method, which is shown in Listing 3.6.

Listing 3.6 SurveyV1\ObjectRelationalDb\SqlRelator.cs SqlRelator's Relate Method

public override void Relate( IRelatable relatee, string relationKey )

{

SystemTrace.MethodStart( MethodBase.GetCurrentMethod() );

ORMTypeMapping typeMap = FetchTypeMap( relatee );

ORMCommandMap cmdMap = typeMap.GetMapByName( relationKey );

SqlCommand cmd = BuildCommandFromTypeMap( relatee, typeMap , relationKey );

conn.Open();

if (cmdMap.ReturnsMultiple)

{

SqlDataAdapter da = new SqlDataAdapter( cmd );

IRelatableSet relateSet = (IRelatableSet)relatee;

da.Fill( relateSet.ResultSet );

}

else

{

cmd.ExecuteNonQuery();

}

AssignOutputValuesToObject( cmd, relatee, typeMap, relationKey );

conn.Close();

}

This method should be fairly easy to follow. The first thing it does is try to retrieve a type mapping for the object it is trying to relate via the FetchTypeMap method. When the map has been retrieved, we can then use the BuildCommandFromTypeMap method to create an instance of the SqlCommand class from the ORM data.

Listing 3.7 shows the remainder of the methods for the SqlRelator implementation.

Listing 3.7 SurveyV1\ObjectRelationalDb\SqlRelator.cs The AssignOutputValuesToObject Method and Other Helper Methods

private void AssignOutputValuesToObject( SqlCommand cmd,

IRelatable relatee,

ORMTypeMapping typeMap,

string relationKey )

{

SystemTrace.MethodStart( MethodBase.GetCurrentMethod() );

ORMCommandMap ocm = typeMap.GetMapByName( relationKey );

foreach (object ob in ocm.PropertyMaps)

{

ORMPropertyMap propMap = (ORMPropertyMap)ob;

if (( propMap.DataDirection == ParameterDirection.Output) ||

( propMap.DataDirection == ParameterDirection.InputOutput ) )

{

PropertyInfo prop;

Type t = relatee.GetType();

prop = t.GetProperty( propMap.MemberName );

if (prop != null)

{

if ( cmd.Parameters[ propMap.Parameter ].Value != DBNull.Value)

{

prop.SetValue( relatee, cmd.Parameters[ propMap.Parameter ].Value, null );

}

}

else

{

ExceptionManager.Publish(

new NullReferenceException(

"Missing member " + t.FullName + "." + propMap.MemberName) );

}

}

}

}

private SqlCommand BuildCommandFromTypeMap( IRelatable relatee, ORMTypeMapping typeMap,

string relationKey )

{

SystemTrace.MethodStart( MethodBase.GetCurrentMethod() );

ORMCommandMap ocm = typeMap.GetMapByName( relationKey );

if (ocm != null)

{

SqlCommand cmd = new SqlCommand( ocm.StoredProcedure, conn );

cmd.CommandType = CommandType.StoredProcedure;

foreach (object ob in ocm.PropertyMaps)

{

ORMPropertyMap propMap = (ORMPropertyMap)ob;

SqlParameter newParam = CreateParameterFromPropertyMap( propMap );

if ((newParam.Direction == ParameterDirection.Input) ||

(newParam.Direction == ParameterDirection.InputOutput) )

{

SetParameterValue( newParam, relatee, propMap.MemberName );

}

cmd.Parameters.Add( newParam );

}

return cmd;

}

else

{

ExceptionManager.Publish(

new NullReferenceException(

"No such command mapping: " + typeMap.FullName + ":" + relationKey) );

}

return null;

}

private SqlParameter CreateParameterFromPropertyMap( ORMPropertyMap propMap )

{

SystemTrace.MethodStart( MethodBase.GetCurrentMethod() );

SqlParameter param = new SqlParameter();

param.ParameterName = propMap.Parameter;

param.SqlDbType = propMap.SqlDataType;

param.Direction = propMap.DataDirection;

if (propMap.Size != -1)

param.Size = propMap.Size;

return param;

}

private void SetParameterValue( SqlParameter param, IRelatable relatee, string member )

{

SystemTrace.MethodStart( MethodBase.GetCurrentMethod() );

PropertyInfo propInfo;

Type t = relatee.GetType();

propInfo = t.GetProperty( member );

if (propInfo != null )

{

param.Value = propInfo.GetValue( relatee, null );

}

else

{

SystemTrace.TraceError("Read failed on member {0} on type {1}",

member, t.FullName);

}

}

string relationKey )

{

SystemTrace.MethodStart( MethodBase.GetCurrentMethod() );

ORMCommandMap ocm = typeMap.GetMapByName( relationKey );

if (ocm != null)

{

SqlCommand cmd = new SqlCommand( ocm.StoredProcedure, conn );

cmd.CommandType = CommandType.StoredProcedure;

foreach (object ob in ocm.PropertyMaps)

{

ORMPropertyMap propMap = (ORMPropertyMap)ob;

SqlParameter newParam = CreateParameterFromPropertyMap( propMap );

if ((newParam.Direction == ParameterDirection.Input) ||

(newParam.Direction == ParameterDirection.InputOutput) )

{

SetParameterValue( newParam, relatee, propMap.MemberName );

}

cmd.Parameters.Add( newParam );

}

return cmd;

}

else

{

ExceptionManager.Publish(

new NullReferenceException(

"No such command mapping: " + typeMap.FullName + ":" + relationKey) );

}

return null;

}

private SqlParameter CreateParameterFromPropertyMap( ORMPropertyMap propMap )

{

SystemTrace.MethodStart( MethodBase.GetCurrentMethod() );

SqlParameter param = new SqlParameter();

param.ParameterName = propMap.Parameter;

param.SqlDbType = propMap.SqlDataType;

param.Direction = propMap.DataDirection;

if (propMap.Size != -1)

param.Size = propMap.Size;

return param;

}

private void SetParameterValue( SqlParameter param, IRelatable relatee, string member )

{

SystemTrace.MethodStart( MethodBase.GetCurrentMethod() );

PropertyInfo propInfo;

Type t = relatee.GetType();

propInfo = t.GetProperty( member );

if (propInfo != null )

{

param.Value = propInfo.GetValue( relatee, null );

}

else

{

SystemTrace.TraceError("Read failed on member {0} on type {1}",

member, t.FullName);

}

}

In the first method in Listing 3.7, we see some code that maps the output parameters from a SQL stored procedure onto object instance properties. This enables us to place values that will be used as input to a stored procedure on an object instance, and we can store output and return values from the stored procedure on the same object instance. For example, to validate a user, we might want to pass the username and password, invoke the stored procedure, and then have a user ID on the same object instance populated when the stored procedure has completed.

The BuildCommandFromTypeMap method is a helper method that takes as input an ORMTypeMapping instance, a string indicating the type of relation being performed, and a reference to a relatable object (that is, an object implementing IRelatable). Similarly, the CreateParameterFromPropertyMap method helps out by taking an ORMPropertyMap instance and returning a complete and instantiated SqlParameter instance.

SetParameterValue makes use of the PropertyInfo reflection class in order to set the value for a specific parameter on a given IRelatable instance.

The PropertyInfo Class and Reflection

The ability for the Survey Repository application to relate live, in-memory instances of objects to the database hinges on the fact that the .NET Framework allows you not only to write code that inspect data types at runtime but to write code that can examine various members of an object at runtime.

Most of this work would not be possible without the use of the PropertyInfo class. The reflection process uses this class to obtain information about a particular class member. Not only can it query information about a class member, but it can be used to get and set the value of that member. This allows code to dynamically query and set properties at runtime. This dynamic query and set behavior allows the ObjectRelator class (and of course the SqlRelator class) to transfer information back and forth between the database and a class instance. Table 3.4 illustrates some of the properties of the PropertyInfo class.

PropertyInfo Class Methods

|

Method |

Description |

|

GetAccessors |

Returns an array of MethodInfo objects that indicate the get and set accessors for the current property. |

|

GetCustomAttributes |

Returns an array of objects that represent the custom attributes defined on the current property. |

|

GetGetMethod |

Returns a MethodInfo object for the get accessor for this property. |

|

GetIndexParameters |

Returns an array of index parameters for the property (if they exist) |

|

GetSetMethod |

Returns a MethodInfo object for the set accessor for this property. |

|

GetValue |

Gets the real value of the property as it exists on a given instance of an object that defines this property. |

|

IsDefined |

Indicates whether an attribute is defined on the current property. |

|

SetValue |

Sets the value of the property on an object instance. This method takes an instance and a value as arguments. |

Table 3.5 lists some of the methods on the PropertyInfo class.

PropertyInfo Class Methods

|

Method |

Description |

|

GetAccessors |

Returns an array of MethodInfo objects that indicate the get and set accessors for the current property. |

|

GetCustomAttributes |

Returns an array of objects that represent the custom attributes defined on the current property. |

|

GetGetMethod |

Returns a MethodInfo object for the get accessor for this property. |

|

GetIndexParameters |

Returns an array of index parameters for the property (if they exist) |

|

GetSetMethod |

Returns a MethodInfo object for the set accessor for this property. |

|

GetValue |

Gets the real value of the property as it exists on a given instance of an object that defines this property. |

|

IsDefined |

Indicates whether an attribute is defined on the current property. |

|

SetValue |

Sets the value of the property on an object instance. This method takes an instance and a value as arguments. |

As you can see, the PropertyInfo class provides a wealth of power and functionality for dealing with live, runtime information about a data type, its members, and the values of those members as they exist on object instances.

Error Handling

Survey Repository makes use of the Microsoft application block for exception management (you can find reference material at http://msdn.microsoft.com/library/default.asp?url=/library/en-us/dnbda/html/emab-rm.asp). This is one of the published recommended practices from Microsoft. Microsoft also has application building blocks for other common tasks, including data access.

Even though the Microsoft application block is part of the solution that comes on this book's CD, the solution is still pointing to the Program Files directory for the application block. In other words, you need to have the Microsoft application block for the .NET Framework installed on your PC before you compile the application.

I chose to use the Microsoft application block because with it, the method of throwing exceptions becomes completely decoupled from the method of storing the information contained in those exceptions. For example, if you were to write a standard try/catch block without the aid of an application block, it might look something like this:

try

{

// perform some code that might fail

}

catch (Exception ex)

{

// do something with the exception

}

Although this might look elegant at first glance, it can become a maintenance nightmare. What happens if you want to store exceptions in a database? What do you do if you want to email the contents of certain high-priority failures (such as database failures) to a system administrator? Another possibility might even be to publish the contents of an exception to a system administrator's cellular phone via SMS messaging.

We can use the Microsoft application block for publishing exceptions, as in the following example:

try

{

// perform some code that might fail

}

catch (Exception ex)

{

ExceptionManager.Publish( ex );

}

In this example, we simply call ExceptionManager.Publish. What information gets published and to where it gets published is all contained within the Web.config file (or an app.config or a machine.config file). The big savings here is in maintenance. Let's say you've written 10,000 lines of code for a Web application back end. You then decide that instead of writing all your trapped exceptions to the Windows event log, you want to write them to a database and email certain types of trapped exceptions to a system administrator. Instead of having to sift through all 10,000 lines of code and paste new code into every single location, all you have to do is modify the application's XML configuration file to add a new exception publisher.

Survey Repository, as it comes on the CD that accompanies this book, doesn't make use of custom publishers. Out of the box, it actually doesn't set any of the application block's configuration parameters. Later on we'll tweak various settings with the application to see how they affect things.

Code Tour: Unified Tracing with the SystemTrace Class

Unified tracing is a concept that, until recently, most programmers didn't recognize the need for. If you're writing a Windows Forms application or a console application, you are probably familiar with the System.Diagnostics.Trace class. This class is used to write trace messages. You can store trace messages in text files if you like, or you can simply watch those messages appear in the output window while Visual Studio .NET is debugging the application.

Those of you who have built and debugged ASP.NET applications know that there is a Trace class available to ASP.NET pages. The problem is that this class is not the same as the Trace class available to Windows applications, class libraries, and console applications.

In the MonitorServices project, there is a class called SystemTrace. This class creates a wrapper around the common task of writing messages to a trace log. This wrapper not only writes messages to the trace log provided by System.Diagnostics.Trace, but it writes trace messages to a System.Web.TraceContext class instance. This is the class that makes possible all the additional information you can see at the bottom of ASP.NET pages when tracing page output is enabled.

Aside from a few overloads, all the code for SystemTrace eventually boils down to the code in Listing 3.8.

Listing 3.8 SurveyV1\MonitorServices\SystemTrace.cs The Trace Method on the SystemTrace Class

[Conditional("TRACE")]

public static void Trace( TraceLevel messageLevel,

string message,

params Object[] paramData )

{

if (messageLevel <= traceSwitch.Level)

{

message = ( message == null ? string.Empty : message );

message = MonitorUtilities.SafeFormat( message, paramData );

try

{

IdentityContext ic = (IdentityContext)CallContext.GetData("SAMS_SURVEY_IDENTITY");

string userId = (ic == null ? "--No User Context--" : ic.UserKey );

string userMessage = MonitorUtilities.SafeFormat("[{0}]{1}", userId, message );

System.Diagnostics.Trace.WriteLine( userMessage );

System.Web.HttpContext webContext = System.Web.HttpContext.Current;

if (webContext != null )

{

if ( messageLevel == TraceLevel.Error )

{

webContext.Trace.Warn( userMessage );

}

else

webContext.Trace.Write( userMessage );

}

}

catch

{

// exceptions that occur during tracing should be absorbed to

// avoid creating an infinite loop trying to trace an error

// that occurs during tracing.

}

}

}

Despite the small amount of code in Listing 3.8, there is actually a fair bit of technology being employed here. The first thing you might notice is the use of TraceLevel. It is an enumeration that is defined by the .NET Framework that can be controlled by XML tags in an application's configuration file through the use of trace switches.

A trace switch is aNET;trace switches> piece of functionality that the .NET Framework provides to all applications. To define a trace switch, you simply create a small subsection of an application's configuration file, such as the following:

<system.diagnostics> <switches> <add name="SystemTrace" value="4"></add> </switches> </system.diagnostics>

This code looks fairly simple. In the system.diagnostics section, you make use of the switches section. Within the switches section, a standard name/value pair collection is defined. In this case, you define a key called SystemTrace and a value. The key is arbitrary and completely up to the programmer. You can define multiple switches within a single application for multiple purposes if you like, or you can do as I've done here and create a single, central value that indicates the trace value. The trace value itself can be any of four different values, each corresponding to one of the TraceLevel enumeration values, which are listed in Table 3.6.

TraceLevel Enumeration Values

|

Enumeration |

Value |

Description |

|

Off |

0 |

No tracing should be used. |

|

Error |

1 |

Only errors should be traced. |

|

Warning |

2 |

Warnings and errors should be included in the trace log. |

|

Info |

3 |

Warnings, errors, and informational messages should be included in the trace log. |

|

Verbose |

4 |

Warnings, errors, informational messages, and verbose trace messages such as debug print statements should be traced. This enumeration value essentially indicates that everything should be included in the trace log. |

Another piece of the code that might stand out is the use of a class called IdentityContext. I wrote this class as part of the MonitorServices project. It is a serializable class that implements the ILogicalThreadAffinitive interface. Its sole purpose is to simply store information while being passed along inside the call context.

If you're not familiar with call contexts, you might want to check out some samples that deal with remoting. In essence, you can think of a call context as a portable stack. The items in the stack are popped off each time a method is invoked, making those items available to the method body. When another method is called, that same stack is passed to the called method. In other words, by placing information in a call context, you guarantee that information will be available to all subsequent method calls, regardless of how nested those method calls are, or where those calls go, even across remoting and process boundaries.

The use of call contexts does have some drawbacks. Information passed in a stack to each method call can be fairly expensive. To keep your application performing optimally and still take advantage of call contexts, the data you pass along on a context should be as small as possible.

In the case of the Survey Repository application, we are passing an IdentityContext instance on the call context. This class simply contains the authorization token supplied by the user and the user's real name. By passing this on the call context, we can assure that anywhere in the back end of the application, our code knows who is invoking that code. If something goes wrong and we need to trace information about an exception, we can also trace information about which user invoked the method that had a problem. This becomes an invaluable troubleshooting tool.

In Listing 3.8, once the code has built a suitable string to be traced, making use of an IdentityContext instance, if one is available, it performs the actual trace. This trace is performed by writing to the System.Diagnostics.Trace class as well as the System.Web.TraceContext class. By sending the text to both of those classes, we can be sure that all our trace messages will show up in anything that makes use of a trace writer, as well as on the output of an ASP.NET page if tracing is enabled.

Without unified tracing, the tracing details on the ASP.NET page output are limited to only those events that take place within the code-behind class itself. By using unified tracing, code in the back end, as low as the database layer itself, can write information that will appear on the output of the ASP.NET page trace.

The Survey Repository Database

The Survey Repository database is pretty simple as far as databases go. Its sole purpose is to store and version survey profiles, survey runs, users, and associated user security settings, such as roles and permissions.

One important thing to keep in mind is that we are not actually storing any information about the survey profiles or the runs themselves. The database doesn't store the list of questions or the list of respondents. Instead, the database simply stores the XML serialization of the typed data sets, along with some indexing and version information to make retrieval and browsing easier.

Stored Procedures in Survey Repository

Table 3.7 lists the stored procedures that have been developed to support the Survey Repository Web services.

Stored Procedures in the Survey Repository SQL 2000 Database

|

Stored Procedure |

Description |

|

SVY_Add_RevisionRun |

Adds a new revision of a run to the database. The run XML file is passed along with the survey profile and revision ID. |

|

SVY_CheckInProfile |

Sets the status of a profile to checked-in. |

|

SVY_CreateProfile |

Creates a new survey profile. |

|

SVY_Delete_User |

Deletes a user (and his or her related information) from the system. |

|

SVY_Get_AllRevisions |

Obtains all the revision indexing information for all profiles in the system. |

|

SVY_Get_Effective_ Permissions |

Gets the effective permissions granted to a user by virtue of the user's role membership. |

|

SVY_Get_Profile |

Gets a specific profile from the system. Note that the XML data belongs to a revision, not to the profile itself. |

|

SVY_Get_ProfileRuns |

Gets a list of all runs that belong to a given survey profile. |

|

SVY_Get_UserProfiles |

Gets a list of all profiles that are visible to the given user. This includes profiles the user created and nonprivate profiles created by others. |

|

SVY_GetProfiles |

Gets all profiles in the system. |

|

SVY_GetMaxRevisionId |

Obtains the highest revision ID from the profile history for a given profile. |

|

SVY_GetProfileHistory |

Gets all the different versions of a given profile in the system. |

|

SVY_GetProfileRevision |

Gets the indexing data for a specific revision of a profile. |

|

SVY_GetRun |

Obtains all the information, including the serialized XML, for a given run. |

|

SVY_GetSurveyProfile |

Gets all the indexing data for a specific survey profile. |

|

SVY_Update_User |

Commits changes to a specific user. |

|

SVY_Validate_User |

Validates a given user's password and username. If the user is valid, the user ID is returned. |

Tables in Survey Repository

Table 3.8 briefly summarizes the tables contained within the Survey Repository database and the purpose of each. You'll see for yourself how these tables are used throughout the book, as you spend more time working with the application code.

Tables in the Survey Repository Database

|

Table |

Description |

|

SVY_Categories |

Contains all the permission categories. |

|

SVY_Permissions |

Has a list of all the permissions and their descriptions. |

|

SVY_ProfileHistory |

Stores the revision history of each survey profile in the system. Each row of this table contains an XML string that can be turned into a disk file containing a survey profile. |

|

SVY_RolePermissions |

Stores a mapping of all the permissions that have been granted to the various roles. |

|

SVY_Roles |

Contains an index of roles and their descriptions. |

|

SVY_Runs |

Contains all the various runs being managed by the repository. Each row of this table contains an XML string that can be used to produce a disk file containing a survey run. |

|

SVY_SurveyProfiles |

Is a top-level index of all the profiles being managed by the repository. |

|

SVY_UserRoles |

Maps the roles granted to users. |

|

SVY_Users |

Is an index of all the users in the system, including their usernames and passwords. |

Listing 3.9 shows some of the most interesting stored procedures found in the database, in no particular order. You will be seeing more of these and learning more about their purposes and functions later. To keep the SQL as portable as possible, there are very few fancy tricks in the stored procedures and there is very little, if any, SQL Server–specific code.

Listing 3.9 Selected Stored Procedures in the Survey Repository Database

CREATE PROCEDURE SVY_Validate_User

@UserName varchar(8),

@Password varchar(8),

@UserId int output,

@FullName varchar(40) output

AS

SELECT @UserId = UserId, @FullName = FullName FROM

SVY_Users WHERE UserName = @UserName AND Password = @Password

IF @UserId IS NULL

BEGIN

SET @UserId = -1

SET @FullName = 'Invalid User'

END

GO

CREATE PROCEDURE SVY_GetProfileHistory

@ProfileId int

AS

SELECT h.ProfileID, h.RevisionId, h.RevisedBy,

h.RevisedOn, h.XMLSource, h.RevisionComment,

u.UserName, u.FullName

FROM SVY_ProfileHistory h INNER JOIN

SVY_Users u ON h.RevisedBy = u.UserId

ORDER BY h.RevisionId ASC

GO

CREATE PROCEDURE SVY_Get_AllRevisions

AS

SELECT p.ProfileId, p.CreatedBy, p.CreatedOn,

p.State, p.CheckedOutBy, p.CheckedOutOn,

p.ShortDescription, u.FullName as CreatedByName,

u2.FullName as CheckedOutByName,

ph.RevisionId, ph.RevisedBy, ph.RevisedOn, ph.RevisionComment

FROM SVY_SurveyProfiles p INNER JOIN SVY_Users u ON p.CreatedBy = u.UserId

INNER JOIN SVY_ProfileHistory ph ON p.ProfileId = ph.ProfileId

LEFT JOIN SVY_Users u2 ON p.CheckedOutBy = u2.UserId

ORDER BY p.ShortDescription ASC

GO

CREATE PROCEDURE SVY_CreateProfile

@ShortDescription varchar(50),

@LongDescription varchar(4000),

@CreatedBy int,

@Private bit,

@XMLSource text,

@ProfileId int output

AS

BEGIN TRANSACTION

INSERT INTO SVY_SurveyProfiles(ShortDescription,

LongDescription, CreatedBy,

Private, CreatedOn, State)

VALUES(@ShortDescription,

@LongDescription, @CreatedBy,

@Private, getdate(), 0)

SET @ProfileId = @@IDENTITY

INSERT INTO SVY_ProfileHistory(ProfileId, RevisionId,

RevisedBy, RevisedOn, RevisionComment, XMLSource)

VALUES(@ProfileId, 1, @CreatedBy, getdate(),

'Created', @XMLSource)

COMMIT TRANSACTION

GO

CREATE PROCEDURE SVY_Create_User

@UserName varchar(8),

@Password varchar(8),

@FullName varchar(40),

@UserId int output

AS

INSERT INTO SVY_Users(UserName, Password, FullName)

VALUES(@UserName, @Password, @FullName)

SET @UserId = @@IDENTITY

GO

As you can see, there really isn't anything particularly complex going on in the stored procedures. The code uses a lot of joins and filters. The only transaction that Survey Repository makes use of is a transaction used when creating a new survey profile. When the stored procedure to create a new profile is called, it creates a new profile as well as the first revision of that profile. Other than that, the stored procedures all perform pretty basic INSERT, UPDATE, DELETE, and SELECT operations.