Data Just Right LiveLessons (Video Training)

- By Michael Manoochehri

- Published Dec 26, 2013 by Addison-Wesley Professional. Part of the LiveLessons series.

Downloadable Video

- Sorry, this book is no longer in print.

- About this video

Accessible from your Account page after purchase. Requires the free QuickTime Player software.

Videos can be viewed on Windows (XP, Vista, 7, and 8), all versions of Macintosh OS X, the iPad, and other platforms that support the industry-standard H.264 video codec.


Description

- Copyright 2014

- Edition: 1st

- Downloadable Video

- ISBN-10: 0-13-380714-2

- ISBN-13: 978-0-13-380714-1

5+ Hours of Video Instruction

Overview

Data Just Right LiveLessons provides a practical introduction to solving common data challenges, such as managing massive datasets, visualizing data, building data pipelines and dashboards, and choosing tools for statistical analysis. You will learn how to use many of today's leading data analysis tools, including Hadoop, Hive, Shark, R, Apache Pig, Mahout, and Google BigQuery.

Data Just Right LiveLessons shows how to address each of today's key Big Data use cases in a cost-effective way by combining technologies in hybrid solutions.

Data Engineer and former Googler Michael Manoochehri provides viewers with an introduction to implementing practical solutions for common data problems. The course does not assume any previous experience in large scale data analytics technology, and includes detailed, practical examples.

Skill Level

- Beginner

What You Will Learn

- Mastering the four guiding principles of Big Data success—and avoiding common pitfalls

- Emphasizing collaboration and avoiding problems with siloed data

- Hosting and sharing multi-terabyte datasets efficiently and economically

- "Building for infinity" to support rapid growth

- Developing a NoSQL Web app with Redis to collect crowd-sourced data

- Running distributed queries over massive datasets with Hadoop and Hive

- Building a data dashboard with Google BigQuery

- Exploring large datasets with advanced visualization

- Implementing efficient pipelines for transforming immense amounts of data

- Automating complex processing with Apache Pig and the Cascading Java library

- Applying machine learning to classify, recommend, and predict incoming information

- Using R to perform statistical analysis on massive datasets

- Building highly efficient analytics workflows with Python and Pandas

- Establishing sensible purchasing strategies: when to build, buy, or outsource

- Previewing emerging trends and convergences in scalable data technologies and the evolving role of the "Data Scientist"

Who Should Take This Course

Professionals who need practical solutions to common data challenges that they can implement with limited resources and time.

Course Requirements

- Basic familiarity with SQL

- Some experience with a high-level programming language such as Java, JavaScript, Python, or R

- Experience working in a command line environment

Table of Contents

Lesson 1:

Four Rules for Data Success describes why "Big Data" is such a hot concept right now. We take a look at four useful strategies for tackling big data problems, followed by examples of what a Big Data pipeline application looks like. Finally, we will take a few minutes to imagine what an ideal database system might look like.

Lesson 2:

Hosting and Sharing Terabytes of Raw Data describes how to solve the common challenge of hosting and sharing tons of files. This lesson starts with a discussion about why this task can be so challenging. Next, we will take a look at some common data formats and how to use infrastructure-as-a-service technology to host and share files. We end this lesson by touching on common formats used in data serialization.

Lesson 3:

Building a NoSQL-Based Web App to Collect Crowd-Sourced Data introduces the technology necessary to handle huge volumes of constantly growing data. We start this lesson by learning about the history and use of relational databases. Next, we learn about why the relational model isn't always the best technology for handling large volumes of data. We then introduce a collection of technologies that break from the relational model, and demonstrate how to work with the key-value datastore, Redis. Finally, we take a look at future trends in database technology.

Lesson 4:

Strategies for Dealing with Data Silos covers why data becomes stranded in different locations, and what to do about it. This lesson starts with a discussion of the history and meaning of business intelligence. Next, we take a look at how Hadoop is affecting data warehousing. We then discuss ways in which data silos can be good. We end this lesson by discussing technological convergence and the future of the business intelligence concept.

Lesson 5:

Using Hadoop, Hive, and Shark to Ask Questions about Large Data expands on the concepts of the previous lesson by providing an introduction to the data warehousing application Apache Hive. This lesson shows how to load and query data in Hive. It will also provide a short introduction to AMPLab's Shark, as well as take a look at new solutions for data warehousing in the cloud.

Lesson 6:

Building a Data Dashboard with Google BigQuery starts with an introduction to the concept of analytical databases. We take a look at Google's Dremel project, as well as the BigQuery API. We will run a BigQuery query and retrieve the result. We then learn how to visualize BigQuery result sets. Finally, we discuss the future of analytical query engines.

Lesson 7:

Visualization Strategies for Exploring Large Datasets introduces the concepts and history of data visualization. We begin this lesson with the goals of visualization, and a discussion of some of the pitfalls. We follow this with a collection of strategies for dealing with visualization of very large datasets. We then build interactive visualizations with R and ggplot2, and learn how to build 2D plots with Python and matplotlib. We will conclude this lesson by learning how to build interactive visualizations for the Web with D3.js.

Lesson 8:

Putting it together: MapReduce Data Pipelines describes how to bring together many of the concepts in our previous lessons by demonstrating how to pipe data from one place to another. We start by learning how to write a simple data pipeline script using UNIX command line tools. We then learn about how the Hadoop MapReduce framework works. Next, we write a Hadoop streaming MapReduce job in Python. We then get even more complex, by writing a multistep MapReduce job using the mrjob Python library. Finally, we will take a look at running mrjob scripts on Amazon's Elastic MapReduce.

Lesson 9:

Building Data Transformation Workflows with Pig and Cascading covers the challenges of building complex data workflows. We learn how to write a workflow script using Apache Pig. We then learn how to write data processing workflow applications with the Cascading library. Finally, we end the lesson with a discussion about when to use Pig versus Cascading.

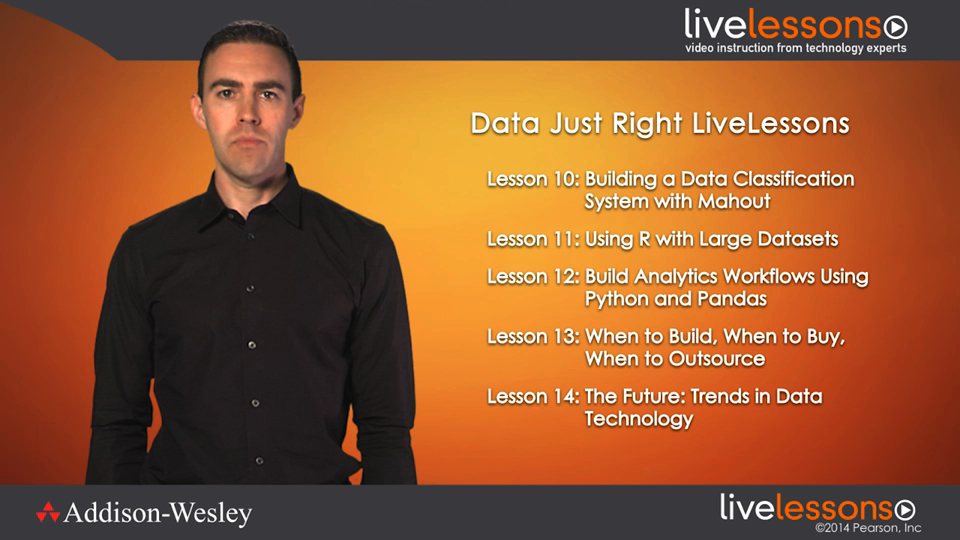

Lesson 10:

Building a Data Classification System with Mahout takes a look at some of the common use cases and limitations of machine learning technology. We then demonstrate some of the most common machine learning algorithms, such as Bayesian classification, clustering, and recommendation engines. Next, we introduce the Apache Mahout project, and demonstrate Bayesian classification. Finally, we take a look at some new innovations in open-source machine learning, including MLbase.

Lesson 11:

Using R with Large Datasets covers methods for understanding how memory is used by R. Next, we learn how to work with large matrices using R's bigmemory package. We then learn how to manipulate large data frames with ff, and how to run a linear regression over large datasets using biglm. Finally, we take a look at how to interface with Hadoop using R.

Lesson 12:

Building Analytics Workflows Using Python and Pandas starts with a discussion of when to choose one programming language for analytics over another. Next, we introduce the workhorse Python libraries NumPy and SciPy, followed by the use of the Pandas library for analyzing time series data. We end this lesson by introducing the IPython Notebook, which is becoming a ubiquitous tool in the data analytics world.

Lesson 13:

When to Build, When to Buy, When to Outsource teaches how to understand data problems in order to make the best decisions about purchasing. This lesson will cover guidelines for the build versus buy problem. Next, we take a look at the differences between public versus private data centers. We discuss the potential hidden costs of open-source software. Finally, we learn about the use and viability of analytics as a service technologies.

Lesson 14:

The Future: Trends in Data first revisits some of the trends driving innovation in data analytics technology. We then discuss how the success of the open-source Apache Hadoop project has caused disruption in the data analytics space. Next, we take a look at analytics technologies in the cloud. The future isn't just about technology, so we take a look at the evolving definition of "data scientist." Finally, we take a look at how converging technologies are setting the stage for future innovations in data analysis.

The LiveLessons Video Training series publishes hundreds of hands-on, expert-led video tutorials covering a wide selection of technology topics designed to teach you the skills you need to succeed. This professional and personal technology video series features world-leading author instructors published by your trusted technology brands: Addison-Wesley, Cisco Press, IBM Press, Pearson IT Certification, Prentice Hall, Sams, and Que. Topics include IT Certification, Programming, Web Development, Mobile Development, Home & Office Technologies, Business & Management, and more. View all LiveLessons: http://www.informit.com/imprint/series_detail.aspx?ser=2185116
