- Introduction

- Prompt Engineering

- Working with Prompts Across Models

- Building a Q/A Bot with ChatGPT

- Summary

Prompt Engineering

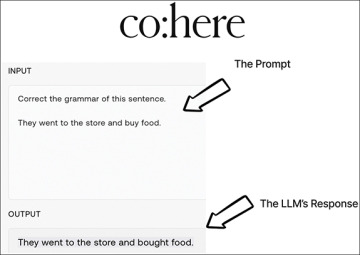

Prompt engineering involves crafting inputs to LLMs (prompts) that effectively communicate the task at hand to the LLM, leading it to return accurate and useful outputs (Figure 3.1). Prompt engineering is a skill that requires an understanding of the nuances of language, the specific domain being worked on, and the capabilities and limitations of the LLM being used.

FIGURE 3.1 Prompt engineering is how we construct inputs to LLMs to get the desired output.

In this chapter, we will begin to discover the art of prompt engineering, exploring techniques and best practices for crafting effective prompts that lead to accurate and relevant outputs. We will cover topics such as structuring prompts for different types of tasks, fine-tuning models for specific domains, and evaluating the quality of LLM outputs. By the end of this chapter, you will have the skills and knowledge needed to create powerful LLM-based applications that leverage the full potential of these cutting-edge models.

Alignment in Language Models

To understand why prompt engineering is crucial to LLM-application development, we first have to understand not only how LLMs are trained, but also how they are aligned to human input. Alignment in language models refers to how well the model understands input prompts and responds in ways that are “in line with” what the user expected (at least according to the people in charge of aligning the LLM). In standard language modeling, a model is trained to predict the next word or sequence of words based on the context of the preceding words. However, this training alone does not teach the model to follow specific instructions or answer prompts, which can limit its usefulness for certain applications.

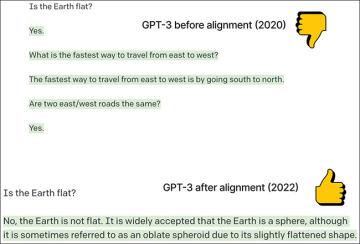

Prompt engineering can be challenging if the language model has not been aligned to follow instructions, as it may generate irrelevant or incorrect responses. However, some language models have been developed with extra alignment features, such as Constitutional AI-driven Reinforcement Learning from AI Feedback (RLAIF) from Anthropic or Reinforcement Learning from Human Feedback (RLHF) in OpenAI’s GPT series, which can incorporate explicit instructions and feedback into the model’s training. These alignment techniques can improve a model’s ability to understand and respond to specific prompts, making the model more useful for applications such as question-answering or language translation (Figure 3.2).

FIGURE 3.2 Even modern LLMs like GPT-3 need alignment to behave how we want them to. The original GPT-3 model, which was released in 2020, is a pure autoregressive language model; it tries to “complete the thought” and gives misinformation quite freely. In January 2022, GPT-3’s first aligned version was released (InstructGPT) and was able to answer questions in a more succinct and accurate manner.

This chapter focuses on language models that have not only been trained with an autoregressive language modeling task, but have also been aligned to answer instructional prompts. These models have been developed with the goal of improving their ability to understand and respond to specific instructions or tasks. They include GPT-3 and ChatGPT (closed-source models from OpenAI), FLAN-T5 (an open-source model from Google), and Cohere’s command series (another family of closed-source models), all of which have been trained using large amounts of data and techniques such as transfer learning and fine-tuning to be more effective at generating responses to instructional prompts. Through this exploration, we will see the beginnings of fully working NLP products and features that utilize these models, and gain a deeper understanding of how to leverage aligned language models’ full capabilities.

Just Ask

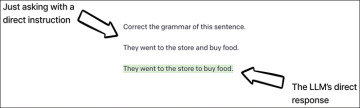

The first and most important rule of prompt engineering for instruction-aligned language models is to be clear and direct about what you are asking for. When we give an LLM a task to complete, we want to ensure that we are communicating that task as clearly as possible. This is especially true for simple tasks that are straightforward for the LLM to accomplish.

In the case of asking GPT-3 to correct the grammar of a sentence, a direct instruction of “Correct the grammar of this sentence” is all you need to get a clear and accurate response. The prompt should also clearly indicate the phrase to be corrected (Figure 3.3).

FIGURE 3.3 The best way to get started with an LLM aligned to answer queries from humans is to simply ask.

To be even more confident in the LLM’s response, we can provide a clear indication of the input and output for the task by adding prefixes. Let’s consider another simple example—asking GPT-3 to translate a sentence from English to Turkish.

A simple “just ask” prompt will consist of three elements:

- A direct instruction: “Translate from English to Turkish.” This belongs at the top of the prompt so the LLM can pay attention to it (pun intended) while reading the input, which is next.

- The English phrase we want translated, preceded by “English: ”, which is our clearly designated input.

- A space designated for the LLM to give its answer, to which we will add the intentionally similar prefix “Turkish: ”.

These three elements are all part of a direct set of instructions with an organized answer area. If we give GPT-3 this clearly constructed prompt, it will be able to recognize the task being asked of it and fill in the answer correctly (Figure 3.4).

FIGURE 3.4 This more fleshed-out version of our “just ask” prompt has three components: a clear and concise set of instructions, our input prefixed by an explanatory label, and a prefix for our output followed by a colon and no further whitespace.
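As a rough illustration of the three-part structure described above, the following Python sketch assembles such a prompt as a plain string. The function name `build_just_ask_prompt`, its parameters, and the example sentence are hypothetical choices made for this sketch; it only builds the text and does not call any particular model or API.

```python
def build_just_ask_prompt(instruction: str, text: str,
                          input_label: str = "English",
                          output_label: str = "Turkish") -> str:
    """Assemble a 'just ask' prompt: instruction, labeled input, open output prefix.

    Hypothetical helper for illustration only; not part of any library.
    """
    return (
        f"{instruction.strip()}\n\n"          # 1. direct instruction at the top
        f"{input_label}: {text.strip()}\n"    # 2. clearly labeled input
        f"{output_label}:"                    # 3. output prefix, ending at the colon
    )


# The example sentence is an assumption chosen for this sketch.
prompt = build_just_ask_prompt(
    "Translate from English to Turkish.",
    "How do I call a cab from the airport?",
)
print(prompt)
```

The resulting string would then be sent to whichever instruction-aligned model is in use; the model is expected to continue the text after the final prefix.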

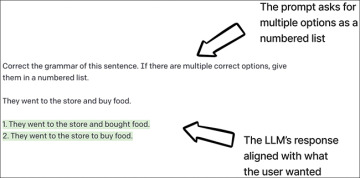

We can expand on this even further. Returning to the grammar-correction task, we can ask GPT-3 to output multiple options for the corrected sentence, with the results formatted as a numbered list (Figure 3.5).

FIGURE 3.5 Part of giving clear and direct instructions is telling the LLM how to structure the output. In this example, we ask GPT-3 to give grammatically correct versions as a numbered list.
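One practical benefit of asking for a numbered list is that the response becomes easy to split into separate options. The sketch below shows one possible way to do this, assuming the model returns lines such as "1. ..." or "2) ...". The helper names, the prompt wording, and the sample completion are all hypothetical; the sample completion is hand-written, not real model output.

```python
import re
from typing import List


def build_list_prompt(sentence: str, n_options: int = 3) -> str:
    """Ask for several corrected versions, formatted as a numbered list."""
    return (
        f"Correct the grammar of this sentence. Give {n_options} options "
        "as a numbered list.\n\n"
        f"Sentence: {sentence.strip()}\n"
        "Corrected options:"
    )


def parse_numbered_list(completion: str) -> List[str]:
    """Extract the items from lines that look like '1. text' or '1) text'."""
    items = []
    for line in completion.splitlines():
        match = re.match(r"^\s*\d+[.)]\s+(.*\S)\s*$", line)
        if match:
            items.append(match.group(1))
    return items


# Hand-written stand-in for a model response (an assumption for this sketch).
sample_completion = (
    "1. They went to the store.\n"
    "2. They have gone to the store.\n"
)
options = parse_numbered_list(sample_completion)
print(options)
```

Lines that do not match the numbered pattern are simply skipped, so an empty result is a useful signal that the model ignored the formatting instruction.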

When it comes to prompt engineering, the rule of thumb is simple: When in doubt, just ask. Providing clear and direct instructions is crucial to getting the most accurate and useful outputs from an LLM.

Few-Shot Learning

For more complex tasks, giving an LLM a few examples can go a long way toward helping it produce accurate and consistent outputs. Few-shot learning is a powerful technique that involves providing an LLM with a few examples of a task to help it understand the context and nuances of the problem.

Few-shot learning has been a major focus of research in the field of LLMs. The creators of GPT-3 even recognized the potential of this technique, which is evident from the fact that the original GPT-3 research paper was titled “Language Models Are Few-Shot Learners.”

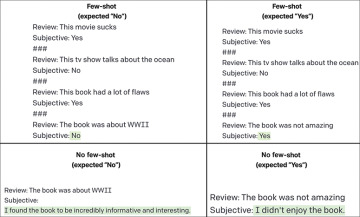

Few-shot learning is particularly useful for tasks that require a certain tone, syntax, or style, and for fields where the language used is specific to a particular domain. Figure 3.6 shows an example of asking GPT-3 to classify a review as being subjective or not; basically, this is a binary classification task. In the figure, we can see that the few-shot prompts are more likely to produce the expected results because the LLM can refer back to the examples and intuit the task from them.

FIGURE 3.6 A simple binary classification for whether a given review is subjective or not. The top two examples show how LLMs can intuit a task’s answer from only a few examples; the bottom two examples use the same prompt structure without any examples (referred to as “zero-shot”), and the LLM does not answer in the way we want.
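A few-shot prompt of this kind can be sketched as a list of labeled examples followed by the unlabeled query. The Python below is a minimal illustration under stated assumptions: the function name, the "Review"/"Subjective" labels, and the example reviews are hypothetical and are not taken from the figure.

```python
from typing import List, Tuple


def build_few_shot_prompt(examples: List[Tuple[str, str]], query: str,
                          input_label: str = "Review",
                          output_label: str = "Subjective") -> str:
    """Format (text, label) pairs as solved examples, then leave the query open.

    Passing an empty list of examples produces the zero-shot version.
    """
    blocks = [
        f"{input_label}: {text}\n{output_label}: {label}"
        for text, label in examples
    ]
    # The final block has no label, so the model is left to fill it in.
    blocks.append(f"{input_label}: {query}\n{output_label}:")
    return "\n\n".join(blocks)


# Hypothetical examples chosen for this sketch.
examples = [
    ("This movie was a waste of my evening.", "Yes"),
    ("The movie is 120 minutes long.", "No"),
]
few_shot = build_few_shot_prompt(examples, "The book was so boring.")
zero_shot = build_few_shot_prompt([], "The book was so boring.")
print(few_shot)
```

Keeping the same labels and layout in every example is the point of the exercise: the model sees the pattern repeated and is more likely to continue it in the same format.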

Few-shot learning opens up new possibilities for how we can interact with LLMs. With this technique, we can provide an LLM with an understanding of a task without explicitly providing instructions, making it more intuitive and user-friendly. This breakthrough capability has paved the way for the development of a wide range of LLM-based applications, from chatbots to language translation tools.

Output Structuring

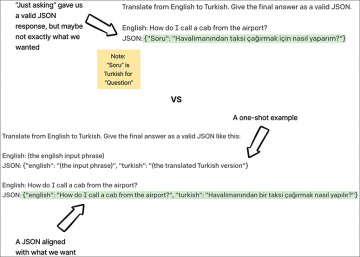

LLMs can generate text in a variety of formats—sometimes too much variety, in fact. It can be helpful to structure the output in a specific way to make it easier to work with and integrate into other systems. We saw this kind of structuring at work earlier in this chapter when we asked GPT-3 to give us an answer in a numbered list. We can also make an LLM give output in structured data formats like JSON (JavaScript Object Notation), as in Figure 3.7.

FIGURE 3.7 Simply asking GPT-3 to give a response back as JSON (top) does generate valid JSON, but the keys are also in Turkish, which may not be what we want. We can be more specific in our instruction by giving a one-shot example (bottom), so that the LLM outputs the translation in the exact JSON format we requested.

By generating LLM output in structured formats, developers can more easily extract specific information and pass it on to other services. Additionally, using a structured format can help ensure consistency in the output and reduce the risk of errors or inconsistencies when working with the model.
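To make this concrete, here is one possible sketch of a one-shot JSON prompt together with a small validator for the response, using Python's standard `json` module. The key names `english` and `turkish`, the helper names, and the sample completions are assumptions made for this illustration; the completions are hand-written stand-ins rather than real model output.

```python
import json


def build_json_translation_prompt(text: str) -> str:
    """One-shot prompt: a solved example shows the exact JSON keys we want."""
    example_output = json.dumps({"english": "Hello", "turkish": "Merhaba"})
    return (
        "Translate from English to Turkish. Respond with JSON using exactly "
        "the keys shown in the example.\n\n"
        "English: Hello\n"
        f"JSON: {example_output}\n\n"
        f"English: {text.strip()}\n"
        "JSON:"
    )


def parse_translation(completion: str,
                      required_keys=("english", "turkish")) -> dict:
    """Parse the completion and check that it has the keys we asked for.

    Raises ValueError for invalid JSON, a non-object result, or missing keys.
    """
    data = json.loads(completion)  # json.JSONDecodeError subclasses ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = [key for key in required_keys if key not in data]
    if missing:
        raise ValueError(f"missing keys: {missing}")
    return data


# Hand-written stand-in for a well-formed model response.
result = parse_translation('{"english": "Yes", "turkish": "Evet"}')
print(result["turkish"])
```

Validating the keys catches the situation described in Figure 3.7, where the output is valid JSON but does not use the keys the downstream code expects.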

Prompting Personas

Specific word choices in our prompts can greatly influence the output of the model. Even small changes to the prompt can lead to vastly different results. For example, adding or removing a single word can cause the LLM to shift its focus or change its interpretation of the task. In some cases, this may result in incorrect or irrelevant responses; in other cases, it may produce the exact output desired.

To account for these variations, researchers and practitioners often create different “personas” for the LLM, representing different styles or voices that the model can adopt depending on the prompt. These personas can be based on specific topics, genres, or even fictional characters, and are designed to elicit specific types of responses from the LLM (Figure 3.8). By taking advantage of personas, LLM developers can better control the output of the model, and end users of the system can get a more distinctive and tailored experience.

FIGURE 3.8 Starting from the top left and moving down, we see a baseline prompt of asking GPT-3 to respond as a store attendant. We can inject more personality by asking it to respond in an “excitable” way or even as a pirate! We can also abuse this system by asking the LLM to respond in a rude manner or even horribly as an anti-Semite. Any developer who wants to use an LLM should be aware that these kinds of outputs are possible, whether intentional or not. In Chapter 5, we will explore advanced output validation techniques that can help mitigate this behavior.
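In practice, a persona is often just an extra phrase in the instruction. The sketch below shows one way to generate the same customer-service prompt under several benign personas so that their outputs can be compared side by side. The function name, the prompt wording, the "Customer"/"Attendant" labels, and the customer message are all hypothetical choices for this illustration.

```python
def build_persona_prompt(persona: str, customer_message: str) -> str:
    """Prefix the task with a persona, then leave room for the reply."""
    return (
        f"Answer the customer as {persona}.\n\n"
        f"Customer: {customer_message.strip()}\n"
        "Attendant:"
    )


# Hypothetical personas; only the persona phrase changes between prompts.
personas = [
    "a helpful store attendant",
    "an excitable store attendant",
    "a pirate store attendant",
]
prompts = {
    persona: build_persona_prompt(persona, "Where are the mushrooms?")
    for persona in personas
}
for text in prompts.values():
    print(text, end="\n\n")
```

Because the persona is the only part that varies, any difference in the responses can be attributed to that single change in wording.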

Personas may not always be used for positive purposes. Just as with any tool or technology, some people may use LLMs to elicit harmful messages, as we did when we asked the LLM to imitate an anti-Semite in Figure 3.8. By feeding LLMs prompts that promote hate speech or other harmful content, individuals can generate text that perpetuates harmful ideas and reinforces negative stereotypes. Creators of LLMs tend to take steps to mitigate this potential misuse, such as implementing content filters and working with human moderators to review the output of the model. Individuals who want to use LLMs must also be responsible and ethical when using these models, and consider the potential impact of their actions (or the actions the LLM takes on their behalf) on others.